XiongZhongBo


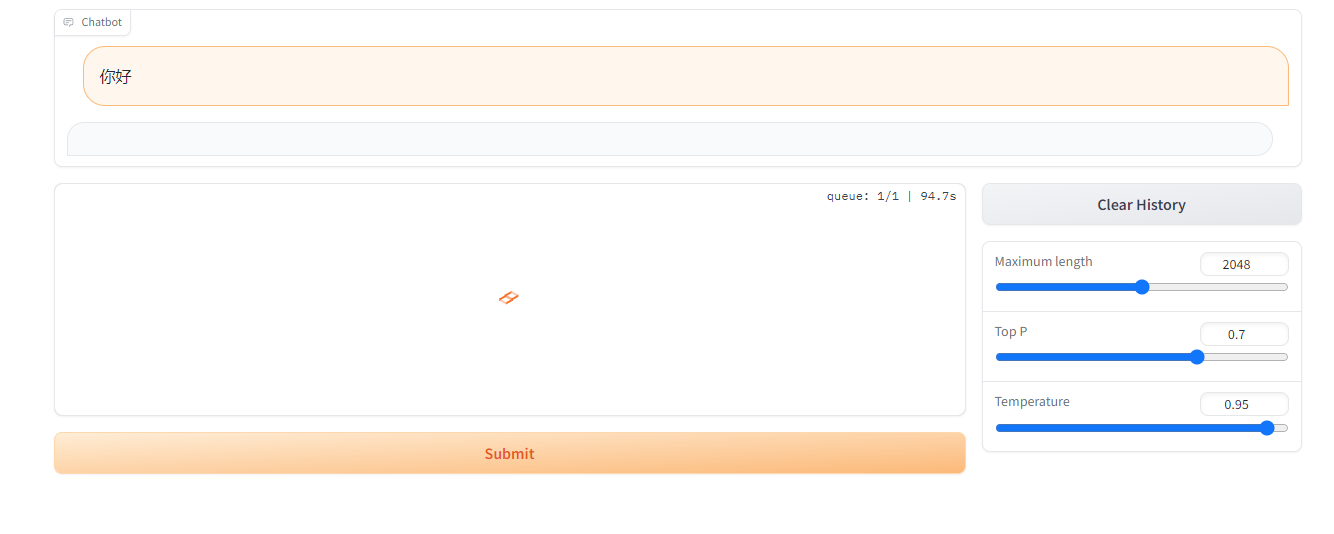

### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

After training, the model runs but never returns a response.

### Expected Behavior

A reply is returned.

### Steps To Reproduce

A6000 GPU. After PT training on my own data, I ran the model in webdemo.py...

### Is your feature request related to a problem? Please describe.

_No response_

### Solutions

How can the mode support emitting the output one character at a time?

### Additional context

_No response_
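One common way to get character-by-character output is to consume a streaming generator and print only the text added since the previous step. The sketch below assumes the model exposes a generator that yields the cumulative reply so far together with the history (ChatGLM2's `stream_chat` is believed to behave this way, but that is an assumption here). `fake_stream_chat` and `iter_deltas` are hypothetical names introduced for illustration, and the stub stands in for a real model so the example runs without a GPU.

```python
from typing import Iterable, Iterator, List, Tuple


def iter_deltas(cumulative: Iterable[str]) -> Iterator[str]:
    """Yield only the newly added text from a stream of growing responses."""
    seen = 0
    for text in cumulative:
        yield text[seen:]   # the part that was not printed yet
        seen = len(text)


def fake_stream_chat(query: str) -> Iterator[Tuple[str, List[str]]]:
    """Hypothetical stand-in for a streaming chat call.

    Assumption: the real call yields (cumulative_response, history) pairs,
    e.g. `model.stream_chat(tokenizer, query, history=history)`.
    """
    reply = "Hello"
    history: List[str] = []
    for end in range(1, len(reply) + 1):
        yield reply[:end], history


if __name__ == "__main__":
    responses = (response for response, _ in fake_stream_chat("hi"))
    for delta in iter_deltas(responses):
        print(delta, end="", flush=True)  # emit each new piece immediately
    print()
```

With a real model, the generator expression would wrap the model's streaming call instead of the stub; the delta logic stays the same.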

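    from transformers import AutoModel, AutoTokenizer
    from peft import PeftModel

    # Load the base ChatGLM2-6B tokenizer and model, then attach the trained PEFT weights.
    tokenizer = AutoTokenizer.from_pretrained("chatglm2-6b", trust_remote_code=True)
    model = AutoModel.from_pretrained("chatglm2-6b", trust_remote_code=True).cuda()
    model = PeftModel.from_pretrained(model, "weights").half()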

MinIO file information is inconsistent in version 3.7.1; switching back to 3.3.1 restores normal behavior. Could the author please fix this?

    @st.cache_resource
    def get_model():
        tokenizer = AutoTokenizer.from_pretrained("chatglm2-6b", trust_remote_code=True)
        model = AutoModel.from_pretrained("chatglm2-6b", trust_remote_code=True).cuda()
        model = PeftModel.from_pretrained(model, "weights/sentiment_comp_ie_chatglm2").half()
        # Multi-GPU support: use the two lines below instead of the line above,
        # and set num_gpus to your actual number of GPUs.
        # from utils import load_model_on_gpus
        # model = load_model_on_gpus("chatglm2-6b", num_gpus=2)
        model = model.eval()
        ...

## **Describe the bug**

## Version info

* DDNS Version: last
* OS Version: linux
* Type (how it is run): Binary/Python2/Python3 python 3.9
* related issues: Cache is empty

##...

With the P-tuning method, the model is completely unusable after training. To ask directly: can yours still chat normally after training?

torch.cuda.OutOfMemoryError: CUDA out of memory. Tried to allocate 128.00 MiB (GPU 0; 44.99 GiB total capacity; 6.15 GiB already allocated; 37.32 GiB free; 6.15 GiB reserved in total by PyTorch)...
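When reading a message like the one above, it can help to pull the sizes out and compare them directly. The following is a minimal sketch that parses the figures with regular expressions; `parse_oom` is a hypothetical helper introduced for illustration, not part of PyTorch, and it assumes the message wording shown in the excerpt.

```python
import re

# Conversion factors to MiB for the units that appear in the message.
_UNITS = {"MiB": 1.0, "GiB": 1024.0}

# One pattern per figure; wording assumed to match the excerpt above.
_PATTERNS = {
    "requested": r"Tried to allocate ([\d.]+) (MiB|GiB)",
    "total": r"([\d.]+) (MiB|GiB) total capacity",
    "allocated": r"([\d.]+) (MiB|GiB) already allocated",
    "free": r"([\d.]+) (MiB|GiB) free",
}


def parse_oom(message: str) -> dict:
    """Return the sizes found in a CUDA OOM message, converted to MiB."""
    sizes = {}
    for name, pattern in _PATTERNS.items():
        match = re.search(pattern, message)
        if match:
            sizes[name] = float(match.group(1)) * _UNITS[match.group(2)]
    return sizes


if __name__ == "__main__":
    msg = (
        "torch.cuda.OutOfMemoryError: CUDA out of memory. Tried to allocate "
        "128.00 MiB (GPU 0; 44.99 GiB total capacity; 6.15 GiB already "
        "allocated; 37.32 GiB free; 6.15 GiB reserved in total by PyTorch)"
    )
    sizes = parse_oom(msg)
    for name, mib in sizes.items():
        print(f"{name}: {mib:.2f} MiB")
    # In this report the requested size is far smaller than the free memory.
    print("requested < free:", sizes["requested"] < sizes["free"])
```

For the excerpt above, the requested 128 MiB is well below the reported 37.32 GiB free, which is the detail worth highlighting when reporting the issue.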