KITTI dataset training problem

Hello, I am using the KITTI dataset to train a network, but I get the following error:

```
Traceback (most recent call last):
  File "/home/eternal/Project/SST-main/tools/train.py", line 232, in
    main()
  File "/home/eternal/Project/SST-main/tools/train.py", line 228, in main
    meta=meta)
  File "/home/eternal/Project/SST-main/mmdet3d/apis/train.py", line 34, in train_model
    meta=meta)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmdet/apis/train.py", line 244, in train_detector
    runner.run(data_loaders, cfg.workflow)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmcv/runner/epoch_based_runner.py", line 127, in run
    epoch_runner(data_loaders[i], **kwargs)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmcv/runner/epoch_based_runner.py", line 50, in train
    self.run_iter(data_batch, train_mode=True, **kwargs)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmcv/runner/epoch_based_runner.py", line 30, in run_iter
    **kwargs)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmcv/parallel/data_parallel.py", line 75, in train_step
    return self.module.train_step(*inputs[0], **kwargs[0])
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmdet/models/detectors/base.py", line 248, in train_step
    losses = self(**data)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/torch/nn/modules/module.py", line 1102, in _call_impl
    return forward_call(*input, **kwargs)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmcv/runner/fp16_utils.py", line 98, in new_func
    return old_func(*args, **kwargs)
  File "/home/eternal/Project/SST-main/mmdet3d/models/detectors/base.py", line 58, in forward
    return self.forward_train(**kwargs)
  File "/home/eternal/Project/SST-main/mmdet3d/models/detectors/voxelnet.py", line 90, in forward_train
    x = self.extract_feat(points, img_metas)
  File "/home/eternal/Project/SST-main/mmdet3d/models/detectors/dynamic_voxelnet.py", line 43, in extract_feat
    x = self.middle_encoder(voxel_features, feature_coors, batch_size)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/torch/nn/modules/module.py", line 1102, in _call_impl
    return forward_call(*input, **kwargs)
  File "/home/eternal/miniconda3/envs/openMMLab/lib/python3.7/site-packages/mmcv/runner/fp16_utils.py", line 98, in new_func
    return old_func(*args, **kwargs)
  File "/home/eternal/Project/SST-main/mmdet3d/models/middle_encoders/sst_input_layer_v2.py", line 77, in forward
    voxel_info = self.drop_voxel(voxel_info, 2) # voxel_info is updated in this function
  File "/home/eternal/Project/SST-main/mmdet3d/models/middle_encoders/sst_input_layer_v2.py", line 135, in drop_voxel
    keep_mask_s0, drop_lvl_s0 = self.drop_single_shift(batch_win_inds_s0)
  File "/home/eternal/Project/SST-main/mmdet3d/models/middle_encoders/sst_input_layer_v2.py", line 107, in drop_single_shift
    bincount = torch.bincount(batch_win_inds)
RuntimeError: CUDA error: no kernel image is available for execution on the device
```

I ran a simple experiment to confirm that the problem is not with CUDA itself:

```
Python 3.7.13 (default, Mar 29 2022, 02:18:16)
[GCC 7.5.0] :: Anaconda, Inc. on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> import torch
>>> torch.cuda.is_available()
True
>>> torch.zeros(1).cuda()
tensor([0.], device='cuda:0')
```
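The check above copies a CPU tensor to the GPU, which is most likely a plain host-to-device memory copy and may not launch any compiled device kernel, so it probably does not rule out this error. A slightly stronger check is sketched below, assuming a PyTorch build that provides `torch.cuda.get_arch_list()` and `torch.cuda.get_device_capability()`. The helper names `arch_supported` and `check_current_device` are hypothetical. The rule encoded in `arch_supported` follows NVIDIA's CUDA compatibility rules: a native `sm_XY` binary runs on GPUs with the same major version and an equal or higher minor version, and `compute_XY` PTX can be JIT-compiled for the same or newer GPUs.

```python
from typing import Iterable, Tuple


def arch_supported(capability: Tuple[int, int], arch_list: Iterable[str]) -> bool:
    """Return True if code built for `arch_list` should run on a GPU with
    compute capability `capability`, e.g. (7, 5) for an RTX 2080 SUPER."""
    major, minor = capability
    for arch in arch_list:
        kind, _, num = arch.partition("_")
        if not num.isdigit():
            continue  # skip entries such as "sm_90a"
        arch_major, arch_minor = divmod(int(num), 10)
        # Native cubin: same major version, minor version <= the GPU's minor.
        if kind == "sm" and arch_major == major and arch_minor <= minor:
            return True
        # PTX: can be JIT-compiled by the driver for the same or newer GPUs.
        if kind == "compute" and (arch_major, arch_minor) <= (major, minor):
            return True
    return False


def check_current_device() -> None:
    """Compare the current GPU with the installed PyTorch build, then launch
    torch.bincount, the op that failed in the traceback."""
    import torch  # imported lazily so arch_supported() works without torch

    capability = torch.cuda.get_device_capability()
    arch_list = torch.cuda.get_arch_list()
    print("device capability:", capability)
    print("PyTorch compiled for:", arch_list)
    print("covered by PyTorch build:", arch_supported(capability, arch_list))

    x = torch.randint(0, 10, (1000,), device="cuda")
    counts = torch.bincount(x)
    torch.cuda.synchronize()  # surface any asynchronous CUDA error here
    print("bincount total:", int(counts.sum()))
```

Call `check_current_device()` in the same conda environment used for training. It only covers PyTorch's own kernels: compiled extensions such as mmcv-full's CUDA ops or the project's own ops carry their own architecture list, chosen when they were built (for example through the `TORCH_CUDA_ARCH_LIST` environment variable), so a mismatch there would not show up in this check.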

The generated config:

```python
voxel_size = (0.16, 0.16, 4)
window_shape = (12, 12, 1)
drop_info_training = dict({
    0: dict(max_tokens=30, drop_range=(0, 30)),
    1: dict(max_tokens=60, drop_range=(30, 60)),
    2: dict(max_tokens=100, drop_range=(60, 100000))
})
drop_info_test = dict({
    0: dict(max_tokens=30, drop_range=(0, 30)),
    1: dict(max_tokens=60, drop_range=(30, 60)),
    2: dict(max_tokens=100, drop_range=(60, 100)),
    3: dict(max_tokens=144, drop_range=(100, 100000))
})
drop_info = ({
    0: {'max_tokens': 30, 'drop_range': (0, 30)},
    1: {'max_tokens': 60, 'drop_range': (30, 60)},
    2: {'max_tokens': 100, 'drop_range': (60, 100000)}
}, {
    0: {'max_tokens': 30, 'drop_range': (0, 30)},
    1: {'max_tokens': 60, 'drop_range': (30, 60)},
    2: {'max_tokens': 100, 'drop_range': (60, 100)},
    3: {'max_tokens': 144, 'drop_range': (100, 100000)}
})
model = dict(
    type='DynamicVoxelNet',
    voxel_layer=dict(
        voxel_size=(0.16, 0.16, 4),
        max_num_points=-1,
        point_cloud_range=[0, -40, -3, 70.4, 40, 1],
        max_voxels=(-1, -1)),
    voxel_encoder=dict(
        type='DynamicVFE',
        in_channels=4,
        feat_channels=[64, 128],
        with_distance=False,
        voxel_size=(0.16, 0.16, 4),
        with_cluster_center=True,
        with_voxel_center=True,
        point_cloud_range=[0, -40, -3, 70.4, 40, 1],
        norm_cfg=dict(type='naiveSyncBN1d', eps=0.001, momentum=0.01)),
    middle_encoder=dict(
        type='SSTInputLayerV2',
        window_shape=(12, 12, 1),
        sparse_shape=(468, 468, 1),
        shuffle_voxels=True,
        debug=True,
        drop_info=({
            0: {'max_tokens': 30, 'drop_range': (0, 30)},
            1: {'max_tokens': 60, 'drop_range': (30, 60)},
            2: {'max_tokens': 100, 'drop_range': (60, 100000)}
        }, {
            0: {'max_tokens': 30, 'drop_range': (0, 30)},
            1: {'max_tokens': 60, 'drop_range': (30, 60)},
            2: {'max_tokens': 100, 'drop_range': (60, 100)},
            3: {'max_tokens': 144, 'drop_range': (100, 100000)}
        }),
        pos_temperature=1000,
        normalize_pos=False),
    backbone=dict(
        type='SSTv2',
        d_model=[128, 128, 128, 128],
        nhead=[8, 8, 8, 8],
        num_blocks=4,
        dim_feedforward=[256, 256, 256, 256],
        output_shape=[468, 468],
        num_attached_conv=4,
        conv_kwargs=[
            dict(kernel_size=3, dilation=1, padding=1, stride=1),
            dict(kernel_size=3, dilation=1, padding=1, stride=1),
            dict(kernel_size=3, dilation=1, padding=1, stride=1),
            dict(kernel_size=3, dilation=2, padding=2, stride=1)
        ],
        conv_in_channel=128,
        conv_out_channel=128,
        debug=True,
        layer_cfg=dict(use_bn=False, cosine=True, tau_min=0.01),
        checkpoint_blocks=[0, 1],
        conv_shortcut=True),
    neck=dict(
        type='SECONDFPN',
        norm_cfg=dict(type='naiveSyncBN2d', eps=0.001, momentum=0.01),
        in_channels=[128],
        upsample_strides=[1],
        out_channels=[384]),
    bbox_head=dict(
        type='Anchor3DHead',
        num_classes=3,
        in_channels=384,
        feat_channels=384,
        use_direction_classifier=True,
        anchor_generator=dict(
            type='AlignedAnchor3DRangeGenerator',
            ranges=[[-74.88, -74.88, -0.0345, 74.88, 74.88, -0.0345],
                    [-74.88, -74.88, -0.1188, 74.88, 74.88, -0.1188],
                    [-74.88, -74.88, 0, 74.88, 74.88, 0]],
            sizes=[[2.08, 4.73, 1.77], [0.84, 1.81, 1.77], [0.84, 0.91, 1.74]],
            rotations=[0, 1.57],
            reshape_out=False),
        diff_rad_by_sin=True,
        dir_offset=0.7854,
        dir_limit_offset=0,
        bbox_coder=dict(type='DeltaXYZWLHRBBoxCoder', code_size=7),
        loss_cls=dict(
            type='FocalLoss',
            use_sigmoid=True,
            gamma=2.0,
            alpha=0.25,
            loss_weight=1.0),
        loss_bbox=dict(type='L1Loss', loss_weight=0.5),
        loss_dir=dict(
            type='CrossEntropyLoss', use_sigmoid=False, loss_weight=0.2)),
    train_cfg=dict(
        assigner=[
            dict(
                type='MaxIoUAssigner',
                iou_calculator=dict(type='BboxOverlapsNearest3D'),
                pos_iou_thr=0.55,
                neg_iou_thr=0.4,
                min_pos_iou=0.4,
                ignore_iof_thr=-1),
            dict(
                type='MaxIoUAssigner',
                iou_calculator=dict(type='BboxOverlapsNearest3D'),
                pos_iou_thr=0.5,
                neg_iou_thr=0.3,
                min_pos_iou=0.3,
                ignore_iof_thr=-1),
            dict(
                type='MaxIoUAssigner',
                iou_calculator=dict(type='BboxOverlapsNearest3D'),
                pos_iou_thr=0.5,
                neg_iou_thr=0.3,
                min_pos_iou=0.3,
                ignore_iof_thr=-1)
        ],
        allowed_border=0,
        code_weight=[1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0],
        pos_weight=-1,
        debug=False),
    test_cfg=dict(
        use_rotate_nms=True,
        nms_across_levels=False,
        nms_pre=4096,
        nms_thr=0.25,
        score_thr=0.1,
        min_bbox_size=0,
        max_num=500))
dataset_type = 'KittiDataset'
data_root = '/home/eternal/Project/SST-main/data/kitti/'
class_names = ['Car', 'Pedestrian', 'Cyclist']
point_cloud_range = [0, -40, -3, 70.4, 40, 1]
input_modality = dict(use_lidar=True, use_camera=False)
db_sampler = dict(
    data_root='/home/eternal/Project/SST-main/data/kitti/',
    info_path='/home/eternal/Project/SST-main/data/kitti/kitti_dbinfos_train.pkl',
    rate=1.0,
    prepare=dict(
        filter_by_difficulty=[-1],
        filter_by_min_points=dict(Car=5, Pedestrian=10, Cyclist=10)),
    classes=['Car', 'Pedestrian', 'Cyclist'],
    sample_groups=dict(Car=12, Pedestrian=6, Cyclist=6))
file_client_args = dict(backend='disk')
train_pipeline = [
    dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
         file_client_args=dict(backend='disk')),
    dict(type='LoadAnnotations3D', with_bbox_3d=True, with_label_3d=True,
         file_client_args=dict(backend='disk')),
    dict(
        type='ObjectSample',
        db_sampler=dict(
            data_root='/home/eternal/Project/SST-main/data/kitti/',
            info_path='/home/eternal/Project/SST-main/data/kitti/kitti_dbinfos_train.pkl',
            rate=1.0,
            prepare=dict(
                filter_by_difficulty=[-1],
                filter_by_min_points=dict(Car=5, Pedestrian=10, Cyclist=10)),
            classes=['Car', 'Pedestrian', 'Cyclist'],
            sample_groups=dict(Car=12, Pedestrian=6, Cyclist=6))),
    dict(type='ObjectNoise', num_try=100, translation_std=[1.0, 1.0, 0.5],
         global_rot_range=[0.0, 0.0], rot_range=[-0.78539816, 0.78539816]),
    dict(type='RandomFlip3D', flip_ratio_bev_horizontal=0.5),
    dict(type='GlobalRotScaleTrans', rot_range=[-0.78539816, 0.78539816],
         scale_ratio_range=[0.95, 1.05]),
    dict(type='PointsRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
    dict(type='ObjectRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
    dict(type='PointShuffle'),
    dict(type='DefaultFormatBundle3D', class_names=['Car', 'Pedestrian', 'Cyclist']),
    dict(type='Collect3D', keys=['points', 'gt_bboxes_3d', 'gt_labels_3d'])
]
test_pipeline = [
    dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
         file_client_args=dict(backend='disk')),
    dict(
        type='MultiScaleFlipAug3D',
        img_scale=(1333, 800),
        pts_scale_ratio=1,
        flip=False,
        transforms=[
            dict(type='GlobalRotScaleTrans', rot_range=[0, 0],
                 scale_ratio_range=[1.0, 1.0], translation_std=[0, 0, 0]),
            dict(type='RandomFlip3D'),
            dict(type='PointsRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
            dict(type='DefaultFormatBundle3D', class_names=['Car', 'Pedestrian', 'Cyclist'],
                 with_label=False),
            dict(type='Collect3D', keys=['points'])
        ])
]
eval_pipeline = [
    dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
         file_client_args=dict(backend='disk')),
    dict(type='DefaultFormatBundle3D', class_names=['Car', 'Pedestrian', 'Cyclist'],
         with_label=False),
    dict(type='Collect3D', keys=['points'])
]
data = dict(
    samples_per_gpu=1,
    workers_per_gpu=1,
    train=dict(
        type='RepeatDataset',
        times=2,
        dataset=dict(
            type='KittiDataset',
            data_root='/home/eternal/Project/SST-main/data/kitti/',
            ann_file='/home/eternal/Project/SST-main/data/kitti/kitti_infos_train.pkl',
            split='training',
            pts_prefix='velodyne_reduced',
            pipeline=[
                dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
                     file_client_args=dict(backend='disk')),
                dict(type='LoadAnnotations3D', with_bbox_3d=True, with_label_3d=True,
                     file_client_args=dict(backend='disk')),
                dict(
                    type='ObjectSample',
                    db_sampler=dict(
                        data_root='/home/eternal/Project/SST-main/data/kitti/',
                        info_path='/home/eternal/Project/SST-main/data/kitti/kitti_dbinfos_train.pkl',
                        rate=1.0,
                        prepare=dict(
                            filter_by_difficulty=[-1],
                            filter_by_min_points=dict(Car=5, Pedestrian=10, Cyclist=10)),
                        classes=['Pedestrian', 'Cyclist', 'Car'],
                        sample_groups=dict(Car=12, Pedestrian=6, Cyclist=6))),
                dict(type='ObjectNoise', num_try=100, translation_std=[1.0, 1.0, 0.5],
                     global_rot_range=[0.0, 0.0], rot_range=[-0.78539816, 0.78539816]),
                dict(type='RandomFlip3D', flip_ratio_bev_horizontal=0.5),
                dict(type='GlobalRotScaleTrans', rot_range=[-0.78539816, 0.78539816],
                     scale_ratio_range=[0.95, 1.05]),
                dict(type='PointsRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
                dict(type='ObjectRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
                dict(type='PointShuffle'),
                dict(type='DefaultFormatBundle3D', class_names=['Pedestrian', 'Cyclist', 'Car']),
                dict(type='Collect3D', keys=['points', 'gt_bboxes_3d', 'gt_labels_3d'])
            ],
            modality=dict(use_lidar=True, use_camera=False),
            classes=['Pedestrian', 'Cyclist', 'Car'],
            test_mode=False,
            box_type_3d='LiDAR'),
        pipeline=[
            dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
                 file_client_args=dict(backend='disk')),
            dict(type='LoadAnnotations3D', with_bbox_3d=True, with_label_3d=True,
                 file_client_args=dict(backend='disk')),
            dict(
                type='ObjectSample',
                db_sampler=dict(
                    data_root='/home/eternal/Project/SST-main/data/kitti/',
                    info_path='/home/eternal/Project/SST-main/data/kitti/kitti_dbinfos_train.pkl',
                    rate=1.0,
                    prepare=dict(
                        filter_by_difficulty=[-1],
                        filter_by_min_points=dict(Car=5, Pedestrian=10, Cyclist=10)),
                    classes=['Car', 'Pedestrian', 'Cyclist'],
                    sample_groups=dict(Car=12, Pedestrian=6, Cyclist=6))),
            dict(type='ObjectNoise', num_try=100, translation_std=[1.0, 1.0, 0.5],
                 global_rot_range=[0.0, 0.0], rot_range=[-0.78539816, 0.78539816]),
            dict(type='RandomFlip3D', flip_ratio_bev_horizontal=0.5),
            dict(type='GlobalRotScaleTrans', rot_range=[-0.78539816, 0.78539816],
                 scale_ratio_range=[0.95, 1.05]),
            dict(type='PointsRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
            dict(type='ObjectRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
            dict(type='PointShuffle'),
            dict(type='DefaultFormatBundle3D', class_names=['Car', 'Pedestrian', 'Cyclist']),
            dict(type='Collect3D', keys=['points', 'gt_bboxes_3d', 'gt_labels_3d'])
        ],
        classes=['Car', 'Pedestrian', 'Cyclist']),
    val=dict(
        type='KittiDataset',
        data_root='/home/eternal/Project/SST-main/data/kitti/',
        ann_file='/home/eternal/Project/SST-main/data/kitti/kitti_infos_val.pkl',
        split='training',
        pts_prefix='velodyne_reduced',
        pipeline=[
            dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
                 file_client_args=dict(backend='disk')),
            dict(
                type='MultiScaleFlipAug3D',
                img_scale=(1333, 800),
                pts_scale_ratio=1,
                flip=False,
                transforms=[
                    dict(type='GlobalRotScaleTrans', rot_range=[0, 0],
                         scale_ratio_range=[1.0, 1.0], translation_std=[0, 0, 0]),
                    dict(type='RandomFlip3D'),
                    dict(type='PointsRangeFilter', point_cloud_range=[0, -40, -3, 70.4, 40, 1]),
                    dict(type='DefaultFormatBundle3D',
                         class_names=['Car', 'Pedestrian', 'Cyclist'], with_label=False),
                    dict(type='Collect3D', keys=['points'])
                ])
        ],
        modality=dict(use_lidar=True, use_camera=False),
        classes=['Car', 'Pedestrian', 'Cyclist'],
        test_mode=True,
        box_type_3d='LiDAR'),
    test=dict(
        type='KittiDataset',
        data_root='/home/eternal/Project/SST-main/data/kitti/',
        ann_file='/home/eternal/Project/SST-main/data/kitti/kitti_infos_val.pkl',
        split='training',
        pts_prefix='velodyne_reduced',
        pipeline=[
            dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
                 file_client_args=dict(backend='disk')),
            dict(type='DefaultFormatBundle3D', class_names=['Car', 'Pedestrian', 'Cyclist'],
                 with_label=False),
            dict(type='Collect3D', keys=['points'])
        ],
        modality=dict(use_lidar=True, use_camera=False),
        classes=['Car', 'Pedestrian', 'Cyclist'],
        test_mode=True,
        box_type_3d='LiDAR'))
evaluation = dict(
    interval=1,
    pipeline=[
        dict(type='LoadPointsFromFile', coord_type='LIDAR', load_dim=4, use_dim=4,
             file_client_args=dict(backend='disk')),
        dict(type='DefaultFormatBundle3D', class_names=['Car', 'Pedestrian', 'Cyclist'],
             with_label=False),
        dict(type='Collect3D', keys=['points'])
    ])
lr = 1e-05
optimizer = dict(
    type='AdamW',
    lr=1e-05,
    betas=(0.9, 0.999),
    weight_decay=0.05,
    paramwise_cfg=dict(custom_keys=dict(norm=dict(decay_mult=0.0))))
optimizer_config = dict(grad_clip=dict(max_norm=10, norm_type=2))
lr_config = dict(
    policy='cyclic',
    target_ratio=(100, 0.001),
    cyclic_times=1,
    step_ratio_up=0.1)
momentum_config = None
runner = dict(type='EpochBasedRunner', max_epochs=12)
checkpoint_config = dict(interval=1)
log_config = dict(
    interval=50,
    hooks=[dict(type='TextLoggerHook'), dict(type='TensorboardLoggerHook')])
dist_params = dict(backend='nccl')
log_level = 'INFO'
work_dir = '/home/eternal/Project/SST-main/work_dirs/sst_waymoD5_1x_3class_centerhead_My/'
load_from = None
resume_from = None
workflow = [('train', 1)]
device = 'cuda'
gpu_ids = range(0, 1)
```
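In this config, the inner `dataset` of the `RepeatDataset` lists its classes as `['Pedestrian', 'Cyclist', 'Car']`, while `class_names`, the outer wrapper, and the val/test sets use `['Car', 'Pedestrian', 'Cyclist']`. Whether that matters depends on which list the dataset code actually reads when assigning label indices, which I have not verified. A small helper for spotting mixed class orders in a nested config dict is sketched below; `collect_class_lists` is a hypothetical name, and `excerpt` is a hand-copied fragment of the config, not a loaded file.

```python
from typing import Any, Dict, List, Tuple

# Keys under which mmdet3d-style configs store class lists.
CLASS_KEYS = ("classes", "class_names")


def collect_class_lists(cfg: Any, root: str = "cfg") -> Dict[Tuple[str, ...], List[str]]:
    """Map each distinct class order found in a nested config to the paths using it."""
    found: Dict[Tuple[str, ...], List[str]] = {}

    def walk(node: Any, path: str) -> None:
        if isinstance(node, dict):
            for key, value in node.items():
                child = f"{path}.{key}"
                if key in CLASS_KEYS and isinstance(value, (list, tuple)):
                    found.setdefault(tuple(value), []).append(child)
                else:
                    walk(value, child)
        elif isinstance(node, (list, tuple)):
            for i, item in enumerate(node):
                walk(item, f"{path}[{i}]")

    walk(cfg, root)
    return found


# Hand-copied fragment of the config above (not the full file).
excerpt = dict(
    class_names=['Car', 'Pedestrian', 'Cyclist'],
    data=dict(train=dict(
        type='RepeatDataset',
        times=2,
        dataset=dict(type='KittiDataset', classes=['Pedestrian', 'Cyclist', 'Car']),
        classes=['Car', 'Pedestrian', 'Cyclist'])))

orders = collect_class_lists(excerpt)
if len(orders) > 1:  # more than one ordering means the config mixes class orders
    for order, paths in orders.items():
        print(list(order), "->", paths)
```

Any result with more than one key means at least two parts of the config disagree on class order.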

My runtime environment is an RTX 2080 SUPER with CUDA 11.3 and PyTorch 1.10.0. What causes the error above, and how can I fix it?

My suggestions:

- Make sure you can train other models, such as PointPillars.

- Use single-GPU, non-distributed training with `CUDA_LAUNCH_BLOCKING=1` to locate the problem.
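CUDA kernel launches are asynchronous, so the line named in a traceback (here `torch.bincount`) is not necessarily where the failing kernel was launched; `CUDA_LAUNCH_BLOCKING=1` makes launches synchronous so the error is reported closer to its real source. A minimal sketch of starting such a debug run from Python follows. It assumes SST's `tools/train.py` takes the config path as its first positional argument and accepts `--work-dir`, as mmdetection3d's training script does, and that omitting `--launcher` gives a non-distributed run. `build_debug_command`, `run_debug`, and the config path in the usage line are hypothetical.

```python
import os
import subprocess
import sys
from typing import Dict, List, Optional, Tuple


def build_debug_command(config: str, gpu: int = 0,
                        work_dir: Optional[str] = None) -> Tuple[List[str], Dict[str, str]]:
    """Build argv and environment for a single-GPU, non-distributed debug run."""
    argv = [sys.executable, "tools/train.py", config]
    if work_dir is not None:
        argv += ["--work-dir", work_dir]
    env = dict(os.environ)
    env["CUDA_VISIBLE_DEVICES"] = str(gpu)  # expose exactly one GPU
    env["CUDA_LAUNCH_BLOCKING"] = "1"       # make kernel launches synchronous
    return argv, env


def run_debug(config: str, gpu: int = 0, work_dir: Optional[str] = None) -> int:
    """Run the training script from the repo root and return its exit code."""
    argv, env = build_debug_command(config, gpu, work_dir)
    # No --launcher flag is passed, so the script is expected to run non-distributed.
    return subprocess.run(argv, env=env, check=False).returncode
```

Usage, from the repository root: `run_debug("configs/my_kitti_sst.py", work_dir="work_dirs/debug")`. Training will be noticeably slower with blocking launches, so this is only meant for locating the error.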

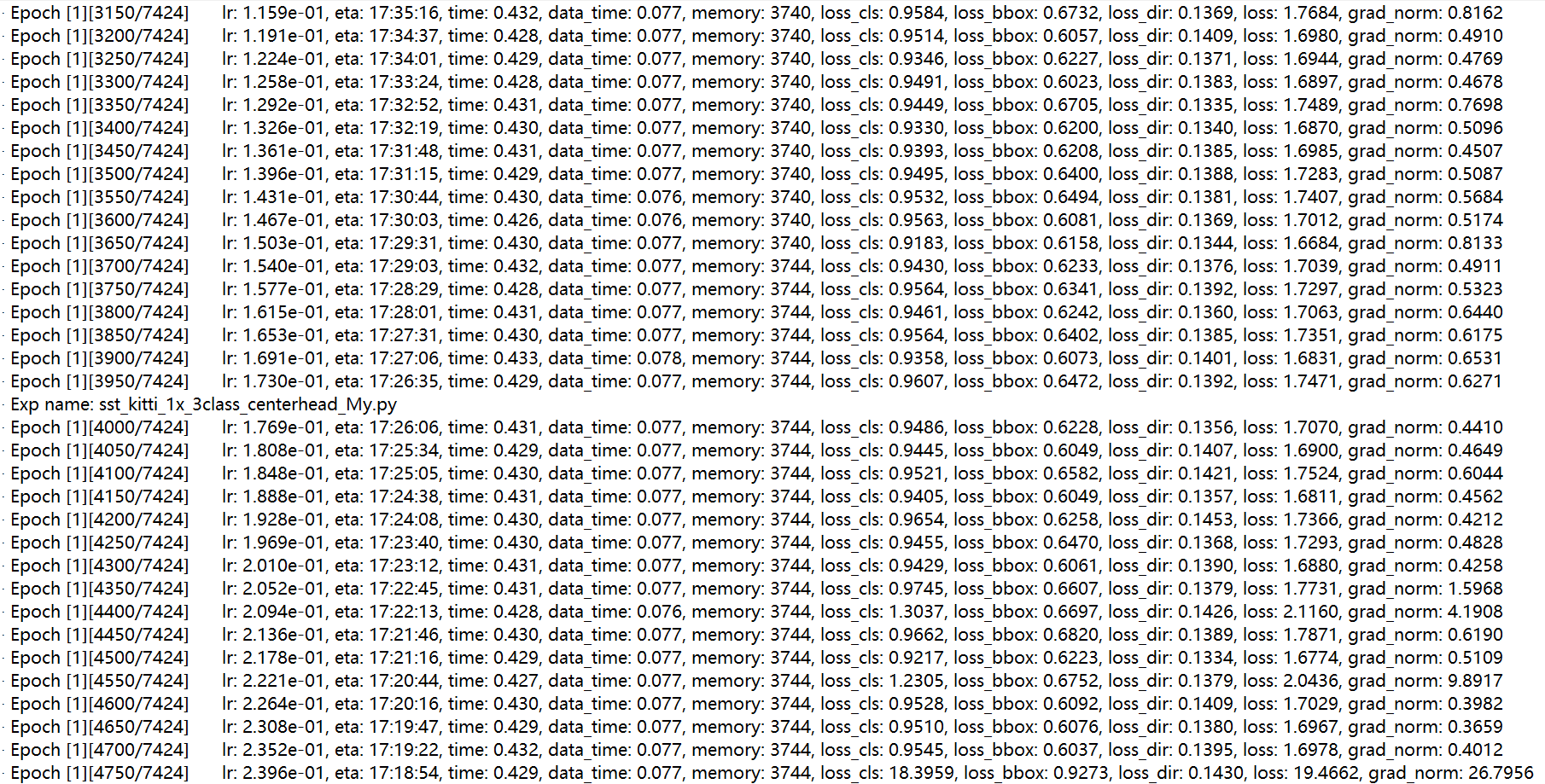

Following your suggestions, I fixed the error and the network now trains. However, I see strange behavior during training: even though I disabled learning-rate scaling, the learning rate keeps increasing, and the loss shows no downward trend. I am using the public KITTI dataset and have adjusted some parameters in the network config for KITTI, but training still does not improve. Are my parameter changes wrong, or is there some other problem?
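One KITTI-specific quantity worth checking is the voxel grid implied by `point_cloud_range` and `voxel_size`, since `sparse_shape=(468, 468, 1)` and `output_shape=[468, 468]` in the posted config do not obviously follow from the KITTI range. A sketch of the arithmetic is below, assuming `sparse_shape` is meant to be the grid size in (x, y, z) order like `window_shape`; I have not checked exactly how `SSTInputLayerV2` uses it. `grid_shape` is a hypothetical helper.

```python
from typing import Sequence, Tuple


def grid_shape(point_cloud_range: Sequence[float],
               voxel_size: Sequence[float]) -> Tuple[int, ...]:
    """Voxels along x, y, z for a [x_min, y_min, z_min, x_max, y_max, z_max] range."""
    lows, highs = point_cloud_range[:3], point_cloud_range[3:]
    # round() absorbs floating-point error in the division before casting to int
    return tuple(int(round((hi - lo) / size))
                 for lo, hi, size in zip(lows, highs, voxel_size))


kitti_range = [0, -40, -3, 70.4, 40, 1]  # point_cloud_range in the config
kitti_voxel = (0.16, 0.16, 4)            # voxel_size in the config
configured = (468, 468, 1)               # sparse_shape in the config

expected = grid_shape(kitti_range, kitti_voxel)
print("grid from range/voxel size:", expected)  # (440, 500, 1)
print("configured sparse_shape:   ", configured)
```

With the KITTI range and 0.16 m voxels this gives (440, 500, 1), whereas 468 corresponds to a 149.76 m span at 0.32 m voxels, the same ±74.88 m extent used by the anchor `ranges` in the config. If the configured shape is smaller than the real grid along an axis (500 > 468 in y here), some voxel coordinates fall outside it, which may corrupt the window partition.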

Sorry for the late reply. According to the log, the learning rate seems too large, which may prevent the model from converging.
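The posted `lr_config` uses `policy='cyclic'` with `target_ratio=(100, 0.001)`, so independent of any automatic LR scaling the schedule is designed to raise the learning rate to 100 × `lr` (1e-5 × 100 = 1e-3) before decaying it to 1e-8. The sketch below models a two-phase, cosine-annealed cycle along the lines of mmcv's cyclic LR updater; the exact phase boundaries and annealing function are assumptions, and `cyclic_lr` is a hypothetical helper, not the mmcv API. The usage lines assume the standard 3,712-frame KITTI train split, `RepeatDataset(times=2)`, one sample per GPU and one GPU, giving 7,424 iterations per epoch.

```python
import math


def annealing_cos(start: float, end: float, factor: float) -> float:
    """Cosine interpolation from `start` (factor=0) to `end` (factor=1)."""
    return end + 0.5 * (start - end) * (math.cos(math.pi * factor) + 1)


def cyclic_lr(it: int, max_iters: int, base_lr: float,
              target_ratio=(100, 0.001), cyclic_times: int = 1,
              step_ratio_up: float = 0.1) -> float:
    """LR at iteration `it` for a two-phase cosine cycle:
    up:   base_lr -> base_lr * target_ratio[0] over step_ratio_up of the cycle
    down: base_lr * target_ratio[0] -> base_lr * target_ratio[1] over the rest
    """
    iters_per_cycle = max_iters // cyclic_times
    up_iters = int(step_ratio_up * iters_per_cycle)
    it %= iters_per_cycle
    if it < up_iters:
        return annealing_cos(base_lr, base_lr * target_ratio[0], it / up_iters)
    return annealing_cos(base_lr * target_ratio[0], base_lr * target_ratio[1],
                         (it - up_iters) / (iters_per_cycle - up_iters))


if __name__ == "__main__":
    total_iters = 12 * 7424  # max_epochs * assumed iterations per epoch
    for it in (0, total_iters // 20, int(0.1 * total_iters), total_iters // 2, total_iters - 1):
        print(it, f"{cyclic_lr(it, total_iters, 1e-5):.3e}")
```

Under these assumptions the peak of 1e-3 is reached after roughly 1.2 epochs, which would match a learning rate that keeps rising early in training; a smaller `target_ratio[0]` lowers that peak proportionally.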