scanpy

scanpy copied to clipboard

scanpy copied to clipboard

Use umap's pairwise_special_metric as a fallback for small AnnDatas with metrics unsupported by sklearn

This is an attempt to fix #1011

I have taken my cue from https://github.com/lmcinnes/umap/pull/259/files as suggested in the issue's thread. I have also added some tests (which focus on ensuring that results are successfully created rather than ensuring correctness).

I hope this helps.

The CI tests failed due to the image matching problems (in test_violin and test_pbmc3k) fixed by PR #1422 which is now merged into master. Should I merge master into my fix branch?

yes, please merge with the master branch

Thanks so much for your contribution @dwnorton ! Looks good. Two questions: 1) is there any way to do without densifying the matrix? it's not a big deal though since the matrix is small. 2) Is the UMAP API for the umap.distances.pairwise_special_metric function version-dependent somehow in other words do we need to constrain our umap dependency to a specific version, or is the API stable across all commonly used UMAP versions?

Thanks for the feedback. I have now merged master into the fix branch and the CI tests are happy. To address the shrewd questions asked by @gokceneraslan:

- I pondered over this myself. As I mentioned (above), I based my approach on a pull request in umap's own codebase which also resorts to densification of the matrix. As far as I can see,

pairwise_special_metricdoesn't directly support a sparse matrix and I don't see an alternative approach that wouldn't involve duplicating some of its implementation and (probably) sacrificing some of the benefits (i.e. parallel processing). A deeper analysis by another brain might draw different conclusions. - Another good question. Based on my "git blame" detective work,

pairwise_special_metricwas introduced in a change on 20 Nov 2018 which should have seen it incorporated into version 0.3.7. Since then its signature has remained compatible (with the only change being the addition of thekwdsargument). scanpy'srequirements.txtalready hasumap-learnset to a minimum version of 0.3.10 so I believe we're good on that front.

Hi @dwnorton,

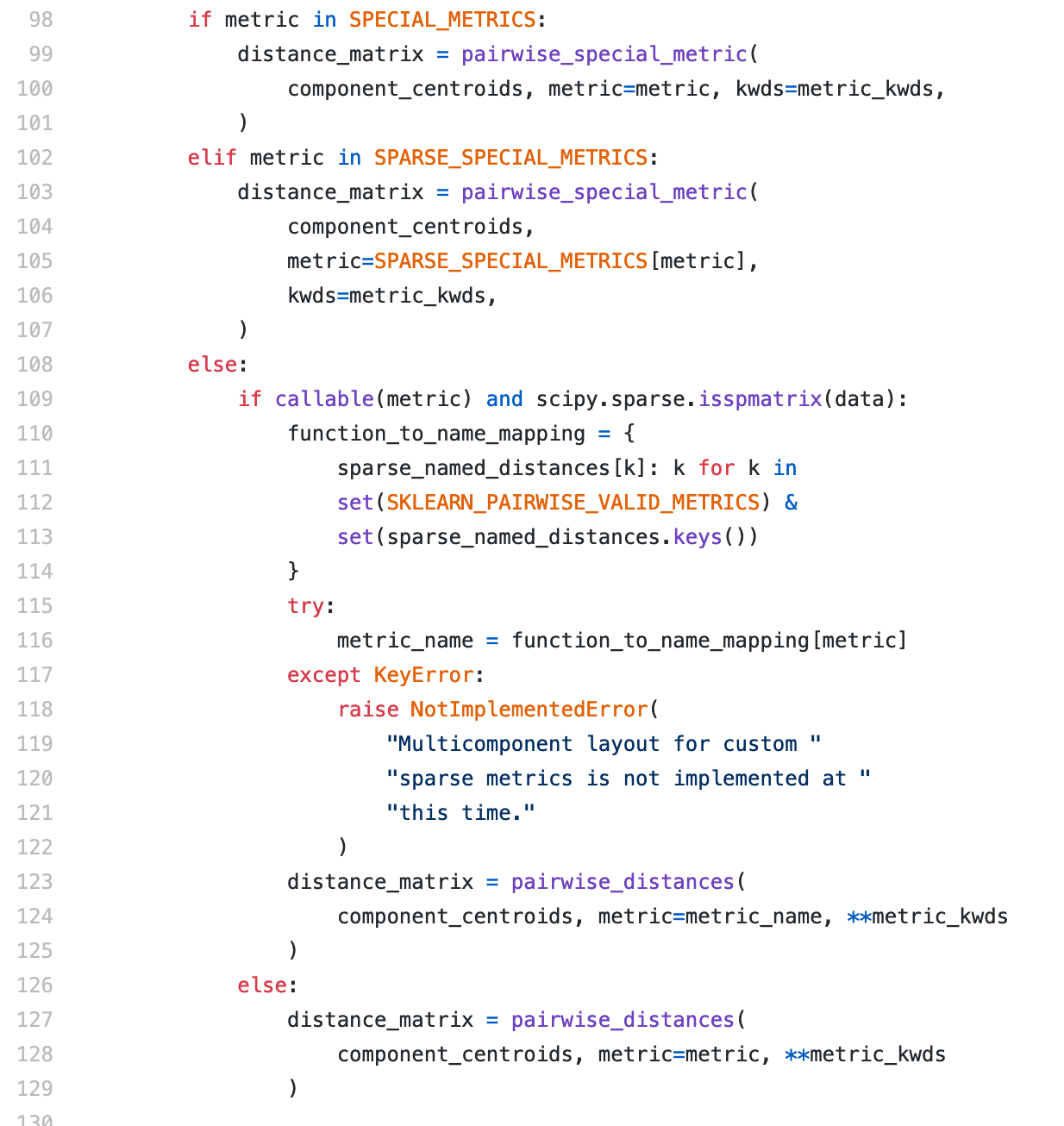

Sorry for the delay. I can see now that there are a few changes about this on the UMAP side:

Few questions:

- Can we improve the sparse array handling (e.g. mimicking UMAP e.g. using

SPARSE_SPECIAL_METRICS) - Can we also use the

SKLEARN_PAIRWISE_VALID_METRICSinstead of catching the ValueError? Would that be safer? - If we start using these keywords, do we need to change any of the requirements of scanpy?

Would it be easier to just use NNDescent's handling of distances here?

@dwnorton seems fine, but could you please commit anything here to trigger ci?

@ivirshup do we want to merge this or replace the current neighbors computation method with nndescent?

Hello. Sorry I have been silent but I haven't used scanpy for a few months and thought there was nothing more for me to do with this pull request.

@Koncopd: the commit I made in September last year (e4483e9) triggered the CI and passed the tests. Is there something further you need me to do?

Thanks.

Long term, I'd prefer to just use pynndescent since it would be a more simple implementation. That could change some results. What do you think?

/AzurePipelines run

Azure Pipelines successfully started running 1 pipeline(s).

Codecov Report

:exclamation: No coverage uploaded for pull request base (

master@62bb643). Click here to learn what that means. The diff coverage is74.48%.

@@ Coverage Diff @@

## master #1413 +/- ##

=========================================

Coverage ? 71.34%

=========================================

Files ? 92

Lines ? 11186

Branches ? 0

=========================================

Hits ? 7981

Misses ? 3205

Partials ? 0

| Impacted Files | Coverage Δ | |

|---|---|---|

| scanpy/__main__.py | 0.00% <0.00%> (ø) |

|

| scanpy/plotting/_tools/__init__.py | 76.74% <ø> (ø) |

|

| scanpy/plotting/_tools/paga.py | 70.62% <ø> (ø) |

|

| scanpy/plotting/_tools/scatterplots.py | 86.95% <ø> (ø) |

|

| scanpy/plotting/_utils.py | 54.61% <ø> (ø) |

|

| scanpy/plotting/palettes.py | 52.77% <ø> (ø) |

|

| scanpy/preprocessing/__init__.py | 100.00% <ø> (ø) |

|

| scanpy/preprocessing/_combat.py | 90.69% <ø> (ø) |

|

| scanpy/preprocessing/_deprecated/__init__.py | 88.88% <ø> (ø) |

|

| ...preprocessing/_deprecated/highly_variable_genes.py | 94.18% <ø> (ø) |

|

| ... and 52 more |

@ivirshup i would also prefer pynndescent. I can write it, but i need to look and check that there are no potential problems with this replacement.

@Koncopd when we talked about this last there were concerns about backwards reproducibility. I'm wondering if this logic would fix that:

- If the dataset is "small" and the metric is defined by scikit-learn, compute complete distances

- For all other cases use pynndescent

Would this be sufficient to keep results the same, or do we compute dense distances for the more esoteric metrics now?

Yes, we can do like this. "do we compute dense distances for the more esoteric metrics now?" - actually i am not sure, it needs to be checked.