linklist2

First of all, thanks for your good work! How can I use the code? Could you please give me an example of how to run these .py files?

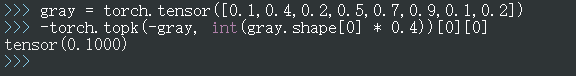

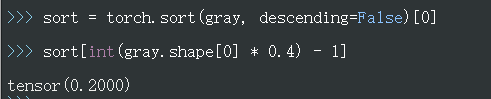

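`value = -torch.topk(-gray, int(gray.shape[0] * 0.4))[0][0]` Hello, this line computes the minimum of `gray`, not the value at the 40% position. An example is shown in the figure below. I think writing it as follows would better match the paper's description of the darkest 40% of pixel values: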
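The behaviour reported here can be checked with a small sketch. The fix proposed in the issue was given as a screenshot and is not reproduced, so the code below is only one possible reading: it assumes `gray` is a 1-D tensor of pixel intensities (the sample values are made up) and that the intended threshold is the k-th smallest value, where k is 40% of the pixel count. `torch.topk` returns its values sorted in descending order, so index `[0]` on `-gray` is the negated minimum, while the last index is the negated k-th smallest value.

```python
import torch

# Hypothetical 1-D tensor standing in for the flattened `gray` image.
gray = torch.tensor([0.9, 0.1, 0.5, 0.3, 0.7, 0.2, 0.8, 0.4, 0.6, 1.0])
k = int(gray.shape[0] * 0.4)  # number of darkest pixels to consider

# topk on -gray yields the k largest negated values, sorted descending,
# i.e. the k smallest values of gray in ascending order once negated back.
neg_topk = torch.topk(-gray, k).values

original = -neg_topk[0]    # what the quoted line computes: the minimum of gray
threshold = -neg_topk[-1]  # the k-th smallest value of gray

# torch.kthvalue returns the k-th smallest element directly (k is 1-based).
kth = torch.kthvalue(gray, k).values

print(original.item(), threshold.item(), kth.item())
```

Taking the last element of the `topk` result and calling `torch.kthvalue` should agree; which of the two is preferable depends on whether the other k-1 dark values are needed later.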

First of all, thanks for your work. However, ChipNet seems to use only masks; no parameters are actually removed. How can I obtain the actual compression ratio of the model?
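One way to estimate a compression ratio from masks alone, without physically removing weights, is to count the parameters that would remain if every masked-out channel were dropped. The sketch below is not ChipNet's API: the function name, the `(out_channels, kernel_size)` layer description, and the 0/1 mask lists are all hypothetical, and it assumes a plain chain of convolution layers with biases and batch-norm parameters ignored.

```python
def count_conv_params(layers, masks, in_channels=3):
    """Return (dense, pruned) weight counts for a chain of conv layers.

    layers: list of (out_channels, kernel_size) tuples (hypothetical format).
    masks:  list of 0/1 lists, one per layer, marking which output channels are kept.
    """
    dense = pruned = 0
    prev_dense = prev_kept = in_channels
    for (c_out, k), mask in zip(layers, masks):
        kept = sum(mask)
        dense += prev_dense * c_out * k * k
        # A pruned output channel also removes an input channel of the next layer.
        pruned += prev_kept * kept * k * k
        prev_dense, prev_kept = c_out, kept
    return dense, pruned


# Made-up two-layer example: half of the channels are masked out in each layer.
layers = [(4, 3), (8, 3)]
masks = [[1, 1, 0, 0], [1, 0, 1, 0, 1, 0, 1, 0]]
dense, pruned = count_conv_params(layers, masks)
print(dense, pruned, dense / pruned)
```

Residual connections, grouped convolutions, or fully connected heads would need their own accounting, so this gives only a rough figure.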

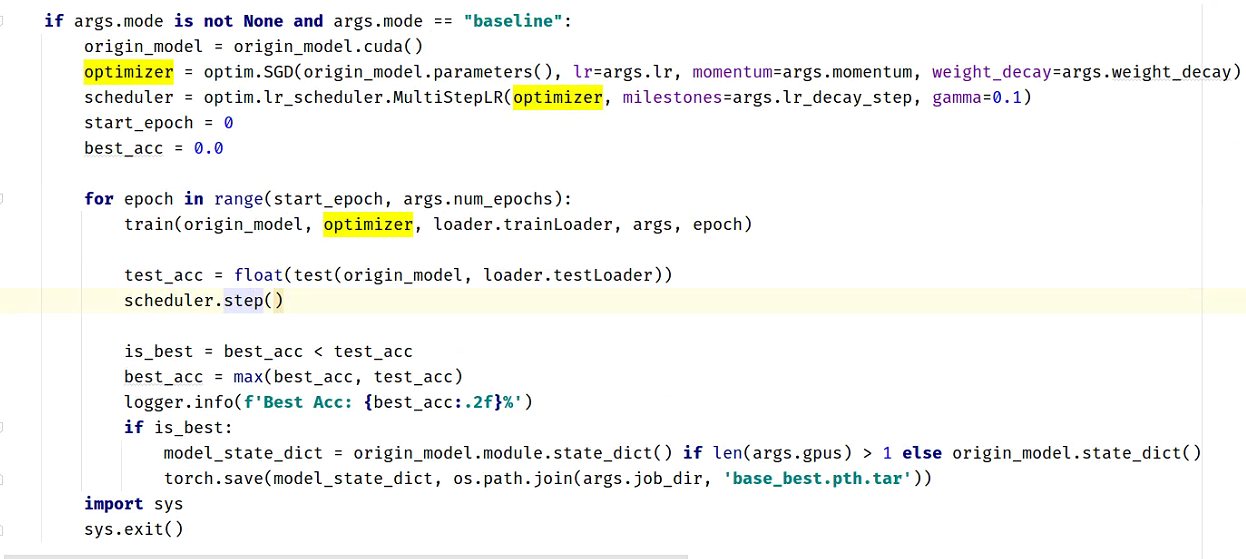

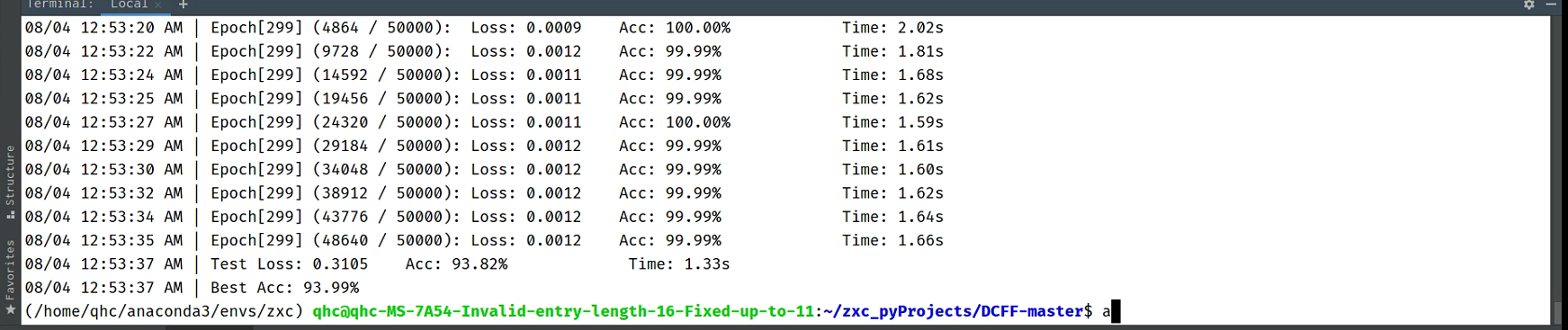

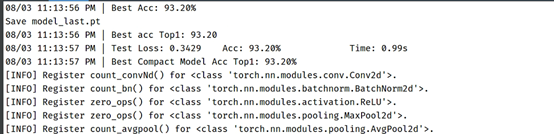

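Hello, I have a question. After adding the following code on my local machine, training originVGG reaches an accuracy as high as 94.0x%:

Is this a reasonable value? Following the steps in the README, training compact vgg only reaches 93.52%. I then added the following code to make the runs reproducible:

```
torch.manual_seed(0)
torch.cuda.manual_seed(0)
torch.backends.cudnn.deterministic = True
torch.backends.cudnn.benchmark = False
```

With this, originVGG still reaches 93.99%, while compact vgg drops to 93.20%.

What should I do to reach the results reported in the paper?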

```
def get_wav():
    try:
        # Get the user identifier (e.g. from the JSON body of the POST request)
        data = request.json
        user = data.get('user', 'User')
        # Fetch the latest data from the given address via an HTTP GET request
        course_id = data.get('course_id', 1164)
        headers = {
            'easegen-api-key': 'ak_SzEhMFPTKjIQBhGVmkle'
        }
        response = requests.get(
            "http://36.103.251.108:48080/admin-api/digitalcourse/courses/getCourseText",...
```