Kevin Musgrave

Yes they will be considered positive samples. So if you're using it for MoCo you have to keep incrementing your labels so that no positive pairs are formed between the...
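A minimal sketch of the label bookkeeping described above, in plain Python. The helper name `MoCoLabelCounter` and its method are hypothetical, not part of pytorch-metric-learning. The assumption here is that each query and its key share one label, and that labels are never reused across batches, so an embedding stored in the memory bank can only be a positive for its own pair.

```python
from typing import List, Tuple


class MoCoLabelCounter:
    """Hypothetical helper: hands out labels that never repeat across batches."""

    def __init__(self) -> None:
        self.next_label = 0  # first label that has not been used yet

    def next_batch(self, batch_size: int) -> Tuple[List[int], List[int]]:
        """Return (query_labels, key_labels) for one batch.

        Query i and key i get the same label, so they form a positive pair.
        Every label is new, so nothing from earlier batches matches them.
        """
        start = self.next_label
        labels = list(range(start, start + batch_size))
        self.next_label += batch_size  # keep incrementing across batches
        return labels, list(labels)


counter = MoCoLabelCounter()
q1, k1 = counter.next_batch(4)  # labels for the first batch
q2, k2 = counter.next_batch(4)  # labels for the second batch, all new
```

In a training loop, these lists would be converted to tensors and passed as the labels for the query and key embeddings.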

That isn't implemented in `CrossBatchMemory`. I suppose I could add it for convenience. Maybe it could be used like this:

```python
from pytorch_metric_learning.losses import ContrastiveLoss, CrossBatchMemory

loss_fn_xbm = CrossBatchMemory(ContrastiveLoss(), embedding_size,...
```

Link to [paper](https://arxiv.org/pdf/1903.03238.pdf) as mentioned [here](https://github.com/KevinMusgrave/pytorch-metric-learning/issues/285)

@elanmart Thanks for your interest in contributing! I think this one (DynamicSoftMarginLoss) or [P2SGrad](https://github.com/KevinMusgrave/pytorch-metric-learning/issues/9#issue-567243436) would be good additions. But really, any new additions are appreciated, so if these ones are...

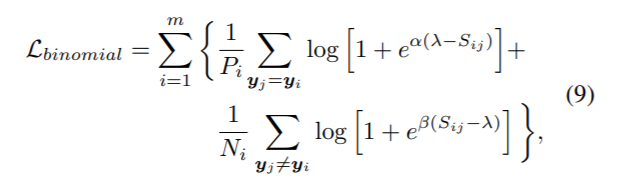

The binomial deviance loss would also be a good addition, and would probably be an easier loss to start with. Here's the equation (taken from the [multi-similarity paper](https://arxiv.org/pdf/1904.06627.pdf))
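For anyone picking this up, here is a rough plain-Python sketch of the binomial deviance loss, following the form given in the multi-similarity paper as best I can tell: for each anchor, average `log(1 + exp(alpha * (lam - S_ij)))` over its positives and `log(1 + exp(beta * (S_ij - lam)))` over its negatives, then sum over anchors. The function name, the default hyperparameter values, and the choice to exclude the anchor itself from its positives are all assumptions made for illustration. A real implementation would be vectorized in PyTorch and fit the library's loss base classes.

```python
import math
from typing import List, Sequence


def binomial_deviance_loss(
    sim: Sequence[Sequence[float]],
    labels: Sequence[int],
    alpha: float = 2.0,  # illustrative value, not a tuned default
    beta: float = 2.0,   # illustrative value, not a tuned default
    lam: float = 0.5,    # similarity margin, illustrative value
) -> float:
    """Sum over anchors of the mean positive term plus the mean negative term."""
    m = len(labels)
    total = 0.0
    for i in range(m):
        # Similarities to same-class samples (assumption: skip the anchor itself).
        pos: List[float] = [
            sim[i][j] for j in range(m) if j != i and labels[j] == labels[i]
        ]
        # Similarities to samples of any other class.
        neg: List[float] = [sim[i][j] for j in range(m) if labels[j] != labels[i]]
        if pos:
            # Penalize positives whose similarity falls below the margin.
            total += sum(math.log1p(math.exp(alpha * (lam - s))) for s in pos) / len(pos)
        if neg:
            # Penalize negatives whose similarity rises above the margin.
            total += sum(math.log1p(math.exp(beta * (s - lam))) for s in neg) / len(neg)
    return total


labels = [0, 0, 1, 1]
# Every similarity sits exactly on the margin, so every term equals log(2).
on_margin = [[0.5] * 4 for _ in range(4)]
# Positives at similarity 1.0 and negatives at 0.0 (well separated).
separated = [[1.0 if labels[i] == labels[j] else 0.0 for j in range(4)] for i in range(4)]
# The reverse: positives at 0.0 and negatives at 1.0.
confused = [[0.0 if labels[i] == labels[j] else 1.0 for j in range(4)] for i in range(4)]

loss_on_margin = binomial_deviance_loss(on_margin, labels)
loss_separated = binomial_deviance_loss(separated, labels)
loss_confused = binomial_deviance_loss(confused, labels)
```

The loss should be lower for the well-separated similarity matrix than for the confused one, which is a quick sanity check for a PyTorch port.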

Maybe consider switching from mkdocs to docusaurus: https://docusaurus.io/docs/versioning

Versioning is now integrated with material for mkdocs: https://squidfunk.github.io/mkdocs-material/setup/setting-up-versioning/

Could you provide a snippet of code that produces the error?

@cwkeam Do you remember how this part works? https://github.com/KevinMusgrave/pytorch-metric-learning/blob/58247798ca9bf62ff49874e5cd07c41424e64fe9/src/pytorch_metric_learning/losses/centroid_triplet_loss.py#L124

It fails this test that I've added to the dev branch: https://github.com/KevinMusgrave/pytorch-metric-learning/blob/3930db293cd2c71181ffb776cc71b5e8a7d1f502/tests/losses/test_centroid_triplet_loss.py#L20-L24

The error message:

```
Traceback (most recent call last):...
```

Thanks @cwkeam, I think raising a ValueError makes sense.