chunjun


A data integration framework

The client module does not currently support authentication mechanisms such as Kerberos.

fix: HdfsOrcOutputFormat can't get fullColumnType or Name from HdfsConf https://github.com/DTStack/chunjun/issues/987

{ "job": { "content": [{ "reader": { "name": "sqlservercdcreader", "parameter": { "cat": "insert,update,delete", "databaseName": "test", "password": "Admin_123,", "pavingData": false, "splitUpdate": false, "tableList": ["test.test_aa"], "url": "jdbc:sqlserver://192.168.1.211:1433;DatabaseName=test", "username": "sa" } },...

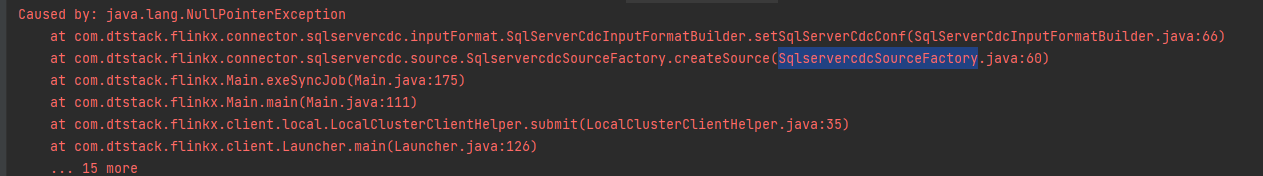

**Describe the bug** Testing with version 1.12.4, a task that previously ran normally now fails with the following error:

```
2022-06-22 15:15:49.849 [flink-akka.actor.default-dispatcher-3] INFO org.apache.flink.runtime.rpc.akka.AkkaRpcService - Stopped Akka RPC service.
Exception in thread "main" org.apache.flink.runtime.client.JobExecutionException: Job execution failed.
	at org.apache.flink.runtime.jobmaster.JobResult.toJobExecutionResult(JobResult.java:144)
	at org.apache.flink.runtime.minicluster.MiniCluster.executeJobBlocking(MiniCluster.java:811)
	at com.dtstack.chunjun.environment.MyLocalStreamEnvironment.execute(MyLocalStreamEnvironment.java:174)
...
```

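**Describe the bug** The batchSize setting in the JSON job configuration for writing to Doris has no effect: while the task runs, the parameter is shown as 1. When 501 records are synchronized the task fails, because imports are issued too frequently for Doris to keep up with merging them.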
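To illustrate why an effective batchSize of 1 matters here, the following is a minimal sketch of size-based batching. It is not the Doris connector's code: `BatchBuffer`, its constructor, and `flushCount()` are hypothetical names introduced only for this illustration, and the sink is a plain callback standing in for one import request.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical helper, not part of ChunJun: buffers records and hands them
// to the sink in groups of at most batchSize.
final class BatchBuffer<T> {
    private final int batchSize;
    private final Consumer<List<T>> sink;
    private final List<T> buffer = new ArrayList<>();
    private int flushCount = 0;

    BatchBuffer(int batchSize, Consumer<List<T>> sink) {
        if (batchSize < 1) {
            throw new IllegalArgumentException("batchSize must be >= 1");
        }
        this.batchSize = batchSize;
        this.sink = sink;
    }

    // Adds one record and flushes as soon as the buffer reaches batchSize.
    void add(T record) {
        buffer.add(record);
        if (buffer.size() >= batchSize) {
            flush();
        }
    }

    // Sends any buffered records to the sink; a no-op when the buffer is empty.
    void flush() {
        if (buffer.isEmpty()) {
            return;
        }
        sink.accept(new ArrayList<>(buffer));
        buffer.clear();
        flushCount++;
    }

    // Number of times the sink was invoked, i.e. the number of import requests.
    int flushCount() {
        return flushCount;
    }
}
```

Under these assumptions, 501 records with a batch size of 1 produce 501 separate sink calls, while a batch size of 100 produces 6 (five full batches plus a final flush of one record).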

**Describe the bug** I want to use the inceptor connector. Running the following command to compile produces an error:

```
$ mvn clean package -DskipTests -P tdh
```

...

In the SQL that the logminer plugin reads from the logs, Chinese text is encoded with UNISTR. When the sink is Oracle, the Oracle unistr function call is written to the target as a literal string instead of being evaluated.
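One possible way to handle this, sketched below, is to decode the UNISTR argument back into ordinary text before the value is bound for writing. This is only an illustration, not the logminer plugin's implementation: `UnistrDecoder` and `decode` are hypothetical names, and the sketch assumes the whole value is a single `UNISTR('...')` call in which `\XXXX` is a four-digit hexadecimal UTF-16 code unit and `\\` is a literal backslash.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper, not part of ChunJun.
final class UnistrDecoder {
    // Matches a value that consists of exactly one UNISTR('...') call.
    private static final Pattern UNISTR_CALL = Pattern.compile(
            "^\\s*UNISTR\\('(.*)'\\)\\s*$",
            Pattern.CASE_INSENSITIVE | Pattern.DOTALL);

    // Returns the decoded text, or the input unchanged if it is not a UNISTR call.
    static String decode(String raw) {
        Matcher m = UNISTR_CALL.matcher(raw);
        if (!m.matches()) {
            return raw;
        }
        // Two single quotes inside an Oracle string literal stand for one quote.
        String arg = m.group(1).replace("''", "'");
        StringBuilder out = new StringBuilder();
        int i = 0;
        while (i < arg.length()) {
            char c = arg.charAt(i);
            if (c == '\\' && i + 1 < arg.length() && arg.charAt(i + 1) == '\\') {
                out.append('\\'); // escaped backslash
                i += 2;
            } else if (c == '\\' && i + 4 < arg.length() && isHex(arg, i + 1, i + 5)) {
                // Backslash followed by four hex digits: one UTF-16 code unit.
                out.append((char) Integer.parseInt(arg.substring(i + 1, i + 5), 16));
                i += 5;
            } else {
                out.append(c);
                i++;
            }
        }
        return out.toString();
    }

    // True if every character in [from, to) is a hexadecimal digit.
    private static boolean isHex(String s, int from, int to) {
        for (int k = from; k < to; k++) {
            if (Character.digit(s.charAt(k), 16) < 0) {
                return false;
            }
        }
        return true;
    }
}
```

Values that mix UNISTR calls with concatenation (`||`) or other expressions are outside the scope of this sketch and would pass through unchanged.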

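I am using sync for data synchronization, mysql -> oracle, with different schema names on the two sides, and the job fails with an error that the table cannot be found. On analysis, in the getOrCreateFieldNamedPstmt method of the PreparedStmtProxy class, both database and tableName are taken from the source database, yet the table is then looked up in the target data source. The user-configured values should be used here instead (if none are configured, the current schema could be the default). Please verify. Thanks.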
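The fallback order proposed above can be sketched as follows. This is not the actual PreparedStmtProxy code: `TargetTableResolver`, its methods, and all parameter names are hypothetical and exist only to show the suggested precedence (user-configured value first, then a default).

```java
// Hypothetical helper, not part of ChunJun.
final class TargetTableResolver {

    // Prefers the schema configured on the sink; falls back to the
    // connection's current schema when nothing is configured.
    static String resolveSchema(String configuredSchema, String currentSchema) {
        return isBlank(configuredSchema) ? currentSchema : configuredSchema;
    }

    // Prefers the table configured on the sink; falls back to the table
    // name carried by the source record.
    static String resolveTable(String configuredTable, String sourceTable) {
        return isBlank(configuredTable) ? sourceTable : configuredTable;
    }

    // Builds the schema-qualified name to look up in the target data source.
    // The source database name is deliberately not used here.
    static String qualifiedName(String configuredSchema, String configuredTable,
                                String sourceTable, String currentSchema) {
        return resolveSchema(configuredSchema, currentSchema)
                + "." + resolveTable(configuredTable, sourceTable);
    }

    private static boolean isBlank(String s) {
        return s == null || s.trim().isEmpty();
    }
}
```

The point of the design is that the source database name never reaches the lookup against the target, so differing schema names on the two sides no longer cause a miss.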