Intel MPI Support

What happened: I attempted to use Intel MPI with the Volcano MPI integration, but the MPI launcher cannot create the Hydra proxy on the worker nodes.

Commands and output:

> for host in $(cat /etc/volcano/worker.host); do ssh $host date; while test $? -gt 0; do echo "Could not reach host '$host', trying again in 5s."; sleep 5; ssh $host date; done; echo "$host is ready."; done;

Fri Jul 29 15:11:48 UTC 2022

mpi-test-worker-0.mpi-test is ready.

> mpirun -ppn 1 mpi_test_program;

[mpiexec@mpi-test-launcher-0] Launch arguments: /usr/bin/ssh -q -x mpi-test-worker-0.mpi-test /opt/intel/oneapi/mpi/2021.6.0//bin//hydra_bstrap_proxy --upstream-host mpi-test-launcher-0 --upstream-port 42983 --pgid 0 --launcher ssh --launcher-number 0 --base-path /opt/intel/oneapi/mpi/2021.6.0//bin/ --tree-width 16 --tree-level 1 --time-left -1 --launch-type 2 --debug --proxy-id 0 --node-id 0 --subtree-size 1 /opt/intel/oneapi/mpi/2021.6.0//bin//hydra_pmi_proxy --usize -1 --auto-cleanup 1 --abort-signal 9

[mpiexec@mpi-test-launcher-0] check_exit_codes (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:117): unable to run bstrap_proxy on mpi-test-worker-0.mpi-test (pid 247, exit code 65280)

[mpiexec@mpi-test-launcher-0] poll_for_event (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:159): check exit codes error

[mpiexec@mpi-test-launcher-0] HYD_dmx_poll_wait_for_proxy_event (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:212): poll for event error

[mpiexec@mpi-test-launcher-0] HYD_bstrap_setup (../../../../../src/pm/i_hydra/libhydra/bstrap/src/intel/i_hydra_bstrap.c:1061): error waiting for event

[mpiexec@mpi-test-launcher-0] HYD_print_bstrap_setup_error_message (../../../../../src/pm/i_hydra/mpiexec/intel/i_mpiexec.c:1027): error setting up the bootstrap proxies

[mpiexec@mpi-test-launcher-0] Possible reasons:

[mpiexec@mpi-test-launcher-0] 1. Host is unavailable. Please check that all hosts are available.

[mpiexec@mpi-test-launcher-0] 2. Cannot launch hydra_bstrap_proxy or it crashed on one of the hosts. Make sure hydra_bstrap_proxy is available on all hosts and it has right permissions.

[mpiexec@mpi-test-launcher-0] 3. Firewall refused connection. Check that enough ports are allowed in the firewall and specify them with the I_MPI_PORT_RANGE variable.

[mpiexec@mpi-test-launcher-0] 4. Ssh bootstrap cannot launch processes on remote host. Make sure that passwordless ssh connection is established across compute hosts.

[mpiexec@mpi-test-launcher-0] You may try using -bootstrap option to select alternative launcher.

Some notes about the possible reasons:

- The host is definitely available, I can ssh directly and did in the first command.

- The executable is available on all the hosts. It could have crashed, but I did not see any output on the workers indicating that.

- All ports should be open. OpenMPI works fine with the same spec/template.

- Passwordless ssh works, again the first command tested it.
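One more observation on the output above: the reported "exit code 65280" looks like a raw wait status rather than a plain exit code. The sketch below decodes it, assuming the common Linux encoding (exit code in bits 8-15, terminating signal in the low 7 bits) and assuming Hydra prints the status unmodified; `decode_wait_status` is a hypothetical helper name.

```shell
# Decode a raw wait status, assuming the usual Linux layout:
# bits 8-15 hold the exit code, the low 7 bits hold the signal number.
decode_wait_status() {
    status=$1
    echo "exit_code=$(( (status >> 8) & 255 )) signal=$(( status & 127 ))"
}

decode_wait_status 65280   # prints: exit_code=255 signal=0
```

If that reading is right, the ssh command exited with 255. ssh returns 255 either when ssh itself fails or when the remote command exits with 255, so this value alone does not distinguish between the two; the sshd log below is what shows whether the session was actually established.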

Finally, on the worker node, sshd gives me the following output for the connection corresponding to the mpirun command:

debug1: sshd version OpenSSH_8.9, OpenSSL 3.0.2 15 Mar 2022

debug1: private host key #0: <key>

debug1: private host key #1: <key>

debug1: private host key #2: <key>

debug1: rexec_argv[0]='/usr/sbin/sshd'

debug1: rexec_argv[1]='-Dde'

debug1: Set /proc/self/oom_score_adj from -997 to -1000

debug1: Bind to port 22 on 0.0.0.0.

Server listening on 0.0.0.0 port 22.

debug1: Bind to port 22 on ::.

Server listening on :: port 22.

debug1: Server will not fork when running in debugging mode.

debug1: rexec start in 5 out 5 newsock 5 pipe -1 sock 8

debug1: sshd version OpenSSH_8.9, OpenSSL 3.0.2 15 Mar 2022

debug1: private host key #0: <key>

debug1: private host key #1: <key>

debug1: private host key #2: <key>

debug1: inetd sockets after dupping: 3, 3

Connection from 10.42.16.103 port 55206 on 10.42.5.74 port 22 rdomain ""

debug1: Local version string SSH-2.0-OpenSSH_8.9p1 Ubuntu-3

debug1: Remote protocol version 2.0, remote software version OpenSSH_8.9p1 Ubuntu-3

debug1: compat_banner: match: OpenSSH_8.9p1 Ubuntu-3 pat OpenSSH* compat 0x04000000

debug1: permanently_set_uid: 105/65534 [preauth]

debug1: list_hostkey_types: rsa-sha2-512,rsa-sha2-256,ecdsa-sha2-nistp256,ssh-ed25519 [preauth]

debug1: SSH2_MSG_KEXINIT sent [preauth]

debug1: SSH2_MSG_KEXINIT received [preauth]

debug1: kex: algorithm: curve25519-sha256 [preauth]

debug1: kex: host key algorithm: ssh-ed25519 [preauth]

debug1: kex: client->server cipher: [email protected] MAC: <implicit> compression: none [preauth]

debug1: kex: server->client cipher: [email protected] MAC: <implicit> compression: none [preauth]

debug1: expecting SSH2_MSG_KEX_ECDH_INIT [preauth]

debug1: SSH2_MSG_KEX_ECDH_INIT received [preauth]

debug1: rekey out after 134217728 blocks [preauth]

debug1: SSH2_MSG_NEWKEYS sent [preauth]

debug1: Sending SSH2_MSG_EXT_INFO [preauth]

debug1: expecting SSH2_MSG_NEWKEYS [preauth]

debug1: SSH2_MSG_NEWKEYS received [preauth]

debug1: rekey in after 134217728 blocks [preauth]

debug1: KEX done [preauth]

debug1: userauth-request for user dev service ssh-connection method none [preauth]

debug1: attempt 0 failures 0 [preauth]

...

debug1: attempt 1 failures 0 [preauth]

debug1: userauth_pubkey: publickey test pkalg rsa-sha2-512 pkblob <key> [preauth]

debug1: temporarily_use_uid: 999/999 (e=0/0)

debug1: trying public key file /home/dev/.ssh/authorized_keys

debug1: fd 4 clearing O_NONBLOCK

debug1: /home/dev/.ssh/authorized_keys:1: matching key found: RSA <key>

debug1: /home/dev/.ssh/authorized_keys:1: key options: agent-forwarding port-forwarding pty user-rc x11-forwarding

Accepted key <key> found at /home/dev/.ssh/authorized_keys:1

debug1: restore_uid: 0/0

Postponed publickey for dev from 10.42.16.103 port 55206 ssh2 [preauth]

debug1: userauth-request for user dev service ssh-connection method [email protected] [preauth]

debug1: attempt 2 failures 0 [preauth]

debug1: temporarily_use_uid: 999/999 (e=0/0)

debug1: trying public key file /home/dev/.ssh/authorized_keys

debug1: fd 4 clearing O_NONBLOCK

debug1: /home/dev/.ssh/authorized_keys:1: matching key found: RSA <key>

debug1: /home/dev/.ssh/authorized_keys:1: key options: agent-forwarding port-forwarding pty user-rc x11-forwarding

Accepted key <key> found at /home/dev/.ssh/authorized_keys:1

debug1: restore_uid: 0/0

debug1: auth_activate_options: setting new authentication options

debug1: do_pam_account: called

Accepted publickey for dev from 10.42.16.103 port 55206 ssh2: RSA <key>

debug1: monitor_child_preauth: user dev authenticated by privileged process

debug1: auth_activate_options: setting new authentication options [preauth]

debug1: monitor_read_log: child log fd closed

debug1: PAM: establishing credentials

User child is on pid 217

debug1: SELinux support disabled

debug1: PAM: establishing credentials

debug1: permanently_set_uid: 999/999

debug1: rekey in after 134217728 blocks

debug1: rekey out after 134217728 blocks

debug1: ssh_packet_set_postauth: called

debug1: active: key options: agent-forwarding port-forwarding pty user-rc x11-forwarding

debug1: Entering interactive session for SSH2.

debug1: server_init_dispatch

debug1: server_input_channel_open: ctype session rchan 0 win 2097152 max 32768

debug1: input_session_request

debug1: channel 0: new [server-session]

debug1: session_new: session 0

debug1: session_open: channel 0

debug1: session_open: session 0: link with channel 0

debug1: server_input_channel_open: confirm session

debug1: server_input_global_request: rtype [email protected] want_reply 0

debug1: server_input_channel_req: channel 0 request exec reply 1

debug1: session_by_channel: session 0 channel 0

debug1: session_input_channel_req: session 0 req exec

Starting session: command for dev from 10.42.16.103 port 55206 id 0

debug1: Received SIGCHLD.

debug1: session_by_pid: pid 218

debug1: session_exit_message: session 0 channel 0 pid 218

debug1: session_exit_message: release channel 0

debug1: session_by_channel: session 0 channel 0

debug1: session_close_by_channel: channel 0 child 0

Close session: user dev from 10.42.16.103 port 55206 id 0

debug1: channel 0: free: server-session, nchannels 1

Received disconnect from 10.42.16.103 port 55206:11: disconnected by user

Disconnected from user dev 10.42.16.103 port 55206

debug1: do_cleanup

debug1: temporarily_use_uid: 999/999 (e=999/999)

debug1: restore_uid: (unprivileged)

debug1: do_cleanup

debug1: PAM: cleanup

debug1: PAM: closing session

debug1: PAM: deleting credentials

debug1: temporarily_use_uid: 999/999 (e=0/0)

debug1: restore_uid: 0/0

debug1: audit_event: unhandled event 12

Additional Information:

Relevant environment variables:

env:
  - name: I_MPI_HYDRA_HOST_FILE
    value: /etc/volcano/worker.host
  - name: I_MPI_FABRICS
    value: ofi_rxm;tcp
  - name: I_MPI_DEBUG
    value: "1000"

I also tried adding the NET_BIND_SERVICE capability to the worker pods, which was motivated by an example for Kubeflow, but that had no effect.

securityContext:
  capabilities:
    add:
      - NET_BIND_SERVICE

(source: https://github.com/kubeflow/mpi-operator/commit/990bf1c39dc641c65f9f56f2e9d2fbf1d9c31a81#diff-54bcd2533c0a0b2cea3564445c4462f46c0e3d0881affa627e69eb3237c7ca03)

I was looking at the implementation for a different project (kubeflow) that supports MPI, and some comments mention:

// The Intel implementation requires workers to communicate with the

// launcher through its hostname. For that, we create a Service which

// has the same name as the launcher's hostname.

(source: https://github.com/kubeflow/mpi-operator/commit/990bf1c39dc641c65f9f56f2e9d2fbf1d9c31a81#diff-c8a6d890af37a490e4a62a2fdc60f23f417d64858196413b84b53821c54f9c49)

Perhaps that is applicable here?
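A quick way to test that idea is to check whether the launcher's hostname resolves at all from inside a worker. This is a minimal sketch: `check_resolves` is a hypothetical helper, and it assumes `getent` is available in the image (it normally is on glibc-based images such as Ubuntu).

```shell
# Report whether a hostname resolves through the system resolver
# (the same lookup path that /etc/hosts and cluster DNS feed into).
check_resolves() {
    if getent hosts "$1" >/dev/null 2>&1; then
        echo "$1: resolves"
    else
        echo "$1: does NOT resolve"
        return 1
    fi
}

# Example, run on a worker:
#   check_resolves mpi-test-launcher-0
```

The hostname to test is the one passed as `--upstream-host` in the launch arguments above (`mpi-test-launcher-0`). If it does not resolve on the worker, the proxy has no way to reach the launcher by name.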

Environment:

- Volcano Version: uncertain, I will ask the maintainer of the kubernetes cluster

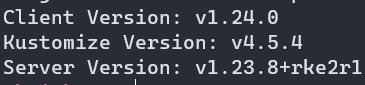

- Kubernetes version (use kubectl version): v4.5.4

- Cloud provider or hardware configuration: closed cluster

- OS (e.g. from /etc/os-release): Ubuntu 22.04

Closing Remarks: Any help would be greatly appreciated. If required, I will find time to set up a test repo with an image with Intel MPI. I will not have time for a while though.

Excuse me, are you sure the version of K8s you provided is correct?

My apologies, I think I grabbed Kustomize's version accidentally. Here is the version:

Well, I have an update. Some colleagues more skilled in Kubernetes than I worked with me to take a look at what Kubeflow was doing to support Intel MPI, and we found a workaround for now, though support for Intel MPI would ideally be built into Volcano. A couple of important points:

- For Intel MPI, the launcher container SSHes into the workers (those listed in the host file generated by Volcano) and starts the Hydra proxy.

- The Hydra proxy then tries to connect back to the launcher using its hostname, not its IP (see the `--upstream-host mpi-test-launcher-0` argument in the launch command above), presumably to relay stdout and stderr to the launcher process.

- Volcano creates a DNS entry for each worker, but not for the launcher container. This is what causes Intel MPI to hang until the timeout is hit.

- Adding a Service to the YAML file that specifies the Volcano job works around the issue, because it creates a DNS entry for the launcher container. For example:

apiVersion: v1
kind: Service
metadata:
  # It is important that this service match the name of the launcher pod exactly
  name: <volcano job name>-launcher-0
spec:
  # Don't create an IP address for this service (the launcher container already has one that we want to use).
  # Just creates the DNS record in this case.
  clusterIP: None
  selector:
    volcano.sh/job-name: <volcano job name>
    volcano.sh/task-spec: launcher
---
<volcano job yaml goes here>

Ideally, Volcano would create this service under the hood, which is how Kubeflow supports Intel MPI. However, I am not knowledgeable enough in this area to add this myself quickly.
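Until then, the Service from the example above can be generated per job instead of edited by hand. This is only a sketch of the workaround, not something Volcano provides: `render_launcher_service` is a hypothetical helper, and it assumes the task is named `launcher` with a single replica, so that the pod is called `<volcano job name>-launcher-0`.

```shell
# Print a headless Service manifest for the launcher pod of a Volcano job.
# Assumption: the launcher task is named "launcher" and has one replica,
# so the pod (and therefore the Service) is named <job name>-launcher-0.
render_launcher_service() {
    job_name=$1
    cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${job_name}-launcher-0
spec:
  clusterIP: None
  selector:
    volcano.sh/job-name: ${job_name}
    volcano.sh/task-spec: launcher
EOF
}

render_launcher_service mpi-test
```

The output can be piped straight into the cluster with `render_launcher_service mpi-test | kubectl apply -f -` before submitting the job.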

@Thor-wl any update?

Hello 👋 Looks like there was no activity on this issue for last 90 days. Do you mind updating us on the status? Is this still reproducible or needed? If yes, just comment on this PR or push a commit. Thanks! 🤗 If there will be no activity for 60 days, this issue will be closed (we can always reopen an issue if we need!).

Closing for now as there was no activity for last 60 days after marked as stale, let us know if you need this to be reopened! 🤗