Draft: Off-main-thread compilation and other performance improvements

I'm investigating use of worker threads to keep the server responsive when compiling large projects. As a first iteration on a solution, we create a worker thread and a temporary Vala.CodeContext to compile the updated build target. Then we replace the old code context when we're done.
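A minimal sketch of the build-then-swap idea described above, written in Python rather than Vala to keep it short. `CodeContext`, `Server`, and `rebuild_async` are hypothetical stand-ins, not VLS code, and the "compilation" is faked with a word split:

```python
import threading


class CodeContext:
    """Hypothetical stand-in for a compiled Vala.CodeContext."""

    def __init__(self, sources):
        self.sources = dict(sources)
        # Pretend "compilation" derives a sorted symbol table from the sources.
        self.symbols = sorted(
            word for text in self.sources.values() for word in text.split()
        )


class Server:
    """Answers requests from the current context while a new one is built."""

    def __init__(self, sources):
        self._lock = threading.Lock()
        self._context = CodeContext(sources)  # context used to answer requests
        self._generation = 0                  # bumped on every requested rebuild

    def current_context(self):
        with self._lock:
            return self._context

    def rebuild_async(self, sources):
        """Compile `sources` on a worker thread, then swap the result in."""
        with self._lock:
            self._generation += 1
            generation = self._generation

        def work():
            fresh = CodeContext(sources)  # slow step, runs off the main thread
            with self._lock:
                # Drop the result if a newer rebuild was requested meanwhile.
                if generation == self._generation:
                    self._context = fresh

        worker = threading.Thread(target=work, daemon=True)
        worker.start()
        return worker
```

In this sketch the old and the new context are both alive until the swap, and requests keep being answered from the old one in the meantime.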

Additionally, I've converted all the LSP response handlers to async code. This will also keep the server responsive when launching subprocesses and performing I/O.

Problems:

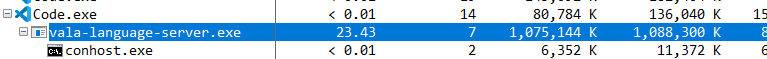

- Memory consumption increases with this solution: VLS on Geary now shoots up to 3.5 GB, which is why this is still a draft.

- It may be possible to avoid recompiling the entire code context in many situations. I need to look into `fast-vapi` and keeping a code context per source file.

TODO:

- [ ] fix increased memory usage

- [ ] fix server's copy of the text document getting out-of-sync with the client, particularly when using undo

Closes #223 Closes #114

-

The experience is good enough, thanks for your hard work. Auto-completion of the project's data is correct and quick.

-

Kangaroo project memory usage

- The project has 421 compilation objects.

- VLS uses about 750 MB of memory during any operation, and about 1 GB with auto-completion. It runs 5-8 threads and normally stays at 6.

-

Response speed with libgda 6.0 and Json-glib: after typing `Gda.`, it takes 10 seconds to show the completion list; `Json.` (Json-glib) takes 9 seconds, and Gee takes 1 second.

Maybe we could do the following:

Return the first page of members immediately for global auto-completion, then return more, and more precise, members once a prefix string is typed. For example:

Input `Gda.`: return 10 members immediately (the count could perhaps be set by the user).

Input `Gda.a`: return all members with the prefix `a`; if the number of matching members is less than some threshold, ignore case.
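A rough sketch of that idea, in Python for brevity rather than Vala. The function name `complete` and the parameters `page_size` and `min_matches` are hypothetical, and the default values are placeholders, not measured settings:

```python
def complete(members, prefix="", page_size=10, min_matches=5):
    """Return completion candidates for `prefix` among `members`."""
    if not prefix:
        # No prefix yet (e.g. right after "Gda."): return only the first page.
        return members[:page_size]
    exact = [m for m in members if m.startswith(prefix)]
    if len(exact) >= min_matches:
        return exact
    # Too few case-sensitive matches: retry ignoring case.
    folded = prefix.casefold()
    return [m for m in members if m.casefold().startswith(folded)]
```

For example, with `members = ["Axis", "Batch", "a_b", "append"]`, `complete(members, page_size=2)` returns the first two entries, and `complete(members, "a", min_matches=3)` falls back to case-insensitive matching and also returns `"Axis"`. On the protocol side, an LSP `CompletionList` has an `isIncomplete` flag that could mark the first page as partial, so the client asks again as the user keeps typing.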

This PR should be superseded by new work on parallelizing the compiler and language server.