KeyError: 'masks' at batch variable.

Search before asking

- [X] I have searched the YOLOv8 issues and discussions and found no similar questions.

Question

Traceback (most recent call last):

File "/usr/local/bin/yolo", line 8, in <module>

sys.exit(entrypoint())

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/configs/__init__.py", line 209, in entrypoint

func(cfg)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/v8/segment/train.py", line 154, in train

model.train(**vars(cfg))

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/engine/model.py", line 203, in train

self.trainer.train()

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/engine/trainer.py", line 181, in train

self._do_train(int(os.getenv("RANK", -1)), world_size)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/engine/trainer.py", line 299, in _do_train

self.loss, self.loss_items = self.criterion(preds, batch)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/v8/segment/train.py", line 44, in criterion

return self.compute_loss(preds, batch)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/v8/segment/train.py", line 93, in __call__

masks = batch["masks"].to(self.device).float()

KeyError: 'masks'

This error happened when I ran this command:

!yolo task=segment mode=train model=yolov8s-seg.pt data={dataset.location}/data.yaml v5loader=True epochs=100 imgsz=640 batch=32 pretrained=True optimizer=Adam

I then tried print(batch.keys()):

dict_keys(['ori_shape', 'ratio_pad', 'im_file', 'img', 'cls', 'bboxes', 'batch_idx'])

and print(batch)

{'ori_shape': [None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None], 'ratio_pad': [None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None, None], 'im_file': ['/content/datasets/ins_seg-1/train/images/000405_jpg.rf.4f5e46f24a0d06a2176512b97207aedd.jpg', '/content/datasets/ins_seg-1/train/images/000343_jpg.rf.53ee9fc68f89d8147729946bab9341c3.jpg', '/content/datasets/ins_seg-1/train/images/000320_jpg.rf.8ca08c6674a0595ca94213d53e0e0bfb.jpg', '/content/datasets/ins_seg-1/train/images/000380_jpg.rf.ba7e5bf2d8be13353068d9a886f1c8ee.jpg', '/content/datasets/ins_seg-1/train/images/000325_jpg.rf.8262e400e82999146e0449ab883324fb.jpg', '/content/datasets/ins_seg-1/train/images/000300_jpg.rf.13b42497394879c39ba696832271753e.jpg', '/content/datasets/ins_seg-1/train/images/000356_jpg.rf.469c2ace233806715b10b70db662b4b2.jpg', '/content/datasets/ins_seg-1/train/images/000065_jpg.rf.9ae5d64cd031207a67f8ff81f64871e1.jpg', '/content/datasets/ins_seg-1/train/images/000345_jpg.rf.02a4abca260db1c5bfa640fec1fa98b9.jpg', '/content/datasets/ins_seg-1/train/images/000346_jpg.rf.c6d794b35a165576bbfc04b1c913426f.jpg', '/content/datasets/ins_seg-1/train/images/000371_jpg.rf.65892f0c34b6d7d354272c36d5440acb.jpg', '/content/datasets/ins_seg-1/train/images/000458_jpg.rf.d633c7d640c706821a8b9176cd9d56e3.jpg', '/content/datasets/ins_seg-1/train/images/000008_jpg.rf.70b63d6bac391c62aaf479a7cebbc655.jpg', '/content/datasets/ins_seg-1/train/images/000092_jpg.rf.d604e7ff4ed443774c338141235a9024.jpg', '/content/datasets/ins_seg-1/train/images/000000_jpg.rf.a8c05ce8d0700f351b7fc8ffcbcfbfac.jpg', '/content/datasets/ins_seg-1/train/images/000257_jpg.rf.10fb4b6a5d4834086ce6b14db1893eab.jpg', 
'/content/datasets/ins_seg-1/train/images/000406_jpg.rf.79abd6e45f8e40f33bc6b816b469a0de.jpg', '/content/datasets/ins_seg-1/train/images/000080_jpg.rf.58f2e098f59a4baa998541d11a9cd72d.jpg', '/content/datasets/ins_seg-1/train/images/000306_jpg.rf.b71d68a0919b57938cc913c39c03c2ec.jpg', '/content/datasets/ins_seg-1/train/images/000024_jpg.rf.bd6cecf7c2921f1e6ebd02e87c397cc5.jpg', '/content/datasets/ins_seg-1/train/images/000212_jpg.rf.f16f3f3fb31f7f36ab98e17004a37975.jpg', '/content/datasets/ins_seg-1/train/images/000286_jpg.rf.e074960be98989035b30f6350316400f.jpg', '/content/datasets/ins_seg-1/train/images/000344_jpg.rf.d1773ee2f442c39092396605c3a63e1e.jpg', '/content/datasets/ins_seg-1/train/images/000439_jpg.rf.32305dcdd354c3a97b5b3842b9c20ab4.jpg', '/content/datasets/ins_seg-1/train/images/000168_jpg.rf.44d1b982c64c7a8a6d4a9db7e9584ddb.jpg', '/content/datasets/ins_seg-1/train/images/000133_jpg.rf.fe8434ef054659d7ad72db10fd23576e.jpg', '/content/datasets/ins_seg-1/train/images/000065_jpg.rf.9d18e97be4311824f3a766bf722a29af.jpg', '/content/datasets/ins_seg-1/train/images/000242_jpg.rf.fb7ccdbe3e5b0d8fb7b35fda816777c4.jpg', '/content/datasets/ins_seg-1/train/images/000304_jpg.rf.cca88d0ee7bf9471b54fae289dacac97.jpg', '/content/datasets/ins_seg-1/train/images/000412_jpg.rf.dda3ebc459ecd96a0b774d23f2d144ab.jpg', '/content/datasets/ins_seg-1/train/images/000161_jpg.rf.d607dcd00f5d282da6457edd0a68e985.jpg', '/content/datasets/ins_seg-1/train/images/000143_jpg.rf.c1f3ef5086827abe850efe022e8dd84b.jpg'], 'img': tensor([[[[0.53725, 0.58824, 0.68235, ..., 0.26275, 0.25882, 0.25882],

[0.52157, 0.54510, 0.62353, ..., 0.22353, 0.21961, 0.21961],

[0.49804, 0.48235, 0.54510, ..., 0.24706, 0.24706, 0.24706],

...,

[0.75686, 0.72157, 0.72549, ..., 0.66275, 0.60392, 0.64314],

[0.79216, 0.72549, 0.73333, ..., 0.66667, 0.68235, 0.70980],

[0.81176, 0.75294, 0.74510, ..., 0.56471, 0.72549, 0.81961]],

[[0.52549, 0.58431, 0.67451, ..., 0.40784, 0.40392, 0.40392],

[0.50980, 0.54118, 0.61961, ..., 0.37255, 0.36471, 0.36471],

[0.48627, 0.47843, 0.54118, ..., 0.39216, 0.39216, 0.39216],

...,

[0.76078, 0.72549, 0.72941, ..., 0.68627, 0.61569, 0.65098],

[0.79608, 0.72941, 0.73725, ..., 0.69020, 0.69804, 0.72157],

[0.81176, 0.75294, 0.74902, ..., 0.58824, 0.74510, 0.83529]],

[[0.52941, 0.59216, 0.68627, ..., 0.49412, 0.49020, 0.49020],

[0.51373, 0.54902, 0.62745, ..., 0.45882, 0.45098, 0.45098],

[0.49020, 0.48627, 0.55294, ..., 0.47843, 0.47843, 0.47843],

...,

[0.75686, 0.72941, 0.73333, ..., 0.52549, 0.45490, 0.49804],

[0.79216, 0.73333, 0.74118, ..., 0.53725, 0.54902, 0.56863],

[0.81176, 0.75294, 0.75294, ..., 0.44706, 0.60784, 0.69804]]],

[[[0.00000, 0.00000, 0.00000, ..., 0.62745, 0.63137, 0.62745],

[0.00000, 0.00000, 0.00000, ..., 0.61961, 0.62353, 0.62745],

[0.00000, 0.00000, 0.00000, ..., 0.63137, 0.63529, 0.63529],

...,

[0.62353, 0.63137, 0.61569, ..., 0.10196, 0.10980, 0.10980],

[0.61569, 0.62353, 0.62353, ..., 0.10588, 0.11765, 0.11765],

[0.61569, 0.61569, 0.63137, ..., 0.10588, 0.11765, 0.12157]],

[[0.00000, 0.00000, 0.00000, ..., 0.62745, 0.63137, 0.63137],

[0.00000, 0.00000, 0.00000, ..., 0.61961, 0.62353, 0.63137],

[0.00000, 0.00000, 0.00392, ..., 0.63137, 0.63529, 0.63529],

...,

[0.61961, 0.62745, 0.60784, ..., 0.09804, 0.10196, 0.10196],

[0.61176, 0.61569, 0.61569, ..., 0.09804, 0.10980, 0.10980],

[0.61176, 0.60784, 0.62353, ..., 0.09804, 0.10980, 0.11373]],

[[0.00000, 0.00000, 0.00000, ..., 0.63137, 0.63529, 0.62745],

[0.00000, 0.00000, 0.00000, ..., 0.62353, 0.62745, 0.62745],

[0.00000, 0.00000, 0.00000, ..., 0.63529, 0.63922, 0.63922],

...,

[0.61569, 0.62353, 0.60000, ..., 0.07843, 0.08627, 0.08627],

[0.60784, 0.61176, 0.60784, ..., 0.08627, 0.09804, 0.09804],

[0.60784, 0.60000, 0.61569, ..., 0.08627, 0.09804, 0.10196]]],

[[[0.31373, 0.31373, 0.31373, ..., 0.12157, 0.11373, 0.44314],

[0.31373, 0.31373, 0.31373, ..., 0.11765, 0.11765, 0.11373],

[0.31373, 0.31373, 0.31373, ..., 0.10196, 0.10588, 0.10196],

...,

[0.31373, 0.31373, 0.31373, ..., 0.09804, 0.09804, 0.09804],

[0.31373, 0.31373, 0.31373, ..., 0.09804, 0.09804, 0.09804],

[0.31373, 0.31373, 0.31373, ..., 0.09804, 0.09804, 0.09804]],

[[0.31373, 0.31373, 0.31373, ..., 0.21176, 0.20392, 0.51765],

[0.31373, 0.31373, 0.31373, ..., 0.20784, 0.20784, 0.20392],

[0.31373, 0.31373, 0.31373, ..., 0.19216, 0.19608, 0.19216],

...,

[0.31373, 0.31373, 0.31373, ..., 0.12549, 0.12549, 0.12549],

[0.31373, 0.31373, 0.31373, ..., 0.12549, 0.12157, 0.12549],

[0.31373, 0.31373, 0.31373, ..., 0.12549, 0.12549, 0.12157]],

[[0.31373, 0.31373, 0.31373, ..., 0.27451, 0.26667, 0.54118],

[0.31373, 0.31373, 0.31373, ..., 0.27059, 0.27059, 0.26667],

[0.31373, 0.31373, 0.31373, ..., 0.25490, 0.25882, 0.25490],

...,

[0.31373, 0.31373, 0.31373, ..., 0.14510, 0.14510, 0.13725],

[0.31373, 0.31373, 0.31373, ..., 0.14510, 0.14118, 0.13725],

[0.31373, 0.31373, 0.31373, ..., 0.14510, 0.14510, 0.14118]]],

...,

[[[0.59608, 0.58431, 0.61176, ..., 0.21176, 0.21176, 0.21176],

[0.60784, 0.60000, 0.61176, ..., 0.21176, 0.21176, 0.21176],

[0.60000, 0.60392, 0.61176, ..., 0.21176, 0.21176, 0.21176],

...,

[0.18039, 0.18039, 0.17647, ..., 0.14118, 0.16078, 0.20392],

[0.17647, 0.18039, 0.17647, ..., 0.15686, 0.16863, 0.21176],

[0.17647, 0.17647, 0.17647, ..., 0.20392, 0.21961, 0.26275]],

[[0.59608, 0.58431, 0.61176, ..., 0.21176, 0.21176, 0.21176],

[0.60784, 0.60000, 0.61176, ..., 0.21176, 0.21176, 0.21176],

[0.60000, 0.60392, 0.61176, ..., 0.21176, 0.21176, 0.21176],

...,

[0.18431, 0.18431, 0.18039, ..., 0.12157, 0.14118, 0.18824],

[0.18039, 0.18431, 0.18039, ..., 0.13725, 0.14902, 0.19608],

[0.18039, 0.18039, 0.18039, ..., 0.18431, 0.20000, 0.24314]],

[[0.62745, 0.61569, 0.65098, ..., 0.21176, 0.21176, 0.21176],

[0.63922, 0.63137, 0.65098, ..., 0.20784, 0.20784, 0.20784],

[0.63137, 0.63529, 0.65098, ..., 0.20392, 0.20392, 0.20392],

...,

[0.16078, 0.16078, 0.15686, ..., 0.10196, 0.12549, 0.17647],

[0.15686, 0.16078, 0.15686, ..., 0.11373, 0.13333, 0.18431],

[0.15686, 0.15686, 0.15686, ..., 0.16078, 0.18431, 0.23137]]],

[[[0.55294, 0.50588, 0.44314, ..., 0.56471, 0.54118, 0.51373],

[0.52941, 0.44314, 0.40784, ..., 0.48235, 0.49412, 0.52549],

[0.56078, 0.45882, 0.41176, ..., 0.55686, 0.54118, 0.51765],

...,

[0.47843, 0.47451, 0.45882, ..., 0.63922, 0.61569, 0.58824],

[0.50196, 0.49804, 0.47059, ..., 0.64314, 0.62745, 0.61176],

[0.49020, 0.49412, 0.47843, ..., 0.65882, 0.64706, 0.65098]],

[[0.61961, 0.56471, 0.49412, ..., 0.56078, 0.53725, 0.50980],

[0.60000, 0.51373, 0.47059, ..., 0.47843, 0.49020, 0.52157],

[0.63529, 0.53725, 0.48235, ..., 0.55294, 0.53725, 0.51373],

...,

[0.40392, 0.39216, 0.37647, ..., 0.74510, 0.72157, 0.69412],

[0.42745, 0.41569, 0.38824, ..., 0.76863, 0.75294, 0.73333],

[0.40784, 0.41176, 0.39608, ..., 0.80000, 0.78824, 0.77647]],

[[0.63529, 0.56078, 0.48235, ..., 0.53333, 0.50588, 0.48235],

[0.61961, 0.51765, 0.45490, ..., 0.45098, 0.46275, 0.49412],

[0.64706, 0.52549, 0.45882, ..., 0.52549, 0.50588, 0.48627],

...,

[0.34510, 0.32941, 0.31373, ..., 0.34902, 0.32549, 0.30588],

[0.36863, 0.35294, 0.32549, ..., 0.35686, 0.34118, 0.32941],

[0.35294, 0.34902, 0.33333, ..., 0.37647, 0.36471, 0.35686]]],

[[[0.54510, 0.54510, 0.54510, ..., 0.63137, 0.63922, 0.64314],

[0.53725, 0.54118, 0.54902, ..., 0.63137, 0.63922, 0.64706],

[0.53333, 0.53725, 0.54118, ..., 0.63529, 0.64314, 0.65098],

...,

[0.50588, 0.42745, 0.40392, ..., 0.27059, 0.27451, 0.27451],

[0.52157, 0.52157, 0.52157, ..., 0.26667, 0.27059, 0.27059],

[0.52157, 0.52157, 0.52549, ..., 0.25882, 0.25882, 0.25882]],

[[0.54902, 0.54902, 0.54902, ..., 0.63529, 0.64314, 0.65098],

[0.54118, 0.54510, 0.55294, ..., 0.63529, 0.64314, 0.65490],

[0.53725, 0.54118, 0.54510, ..., 0.63922, 0.64706, 0.65882],

...,

[0.51373, 0.43529, 0.41176, ..., 0.42353, 0.42745, 0.42745],

[0.52549, 0.52549, 0.52549, ..., 0.41569, 0.41961, 0.41961],

[0.52549, 0.52549, 0.52941, ..., 0.40784, 0.40784, 0.40784]],

[[0.52941, 0.52941, 0.52941, ..., 0.65490, 0.66275, 0.67059],

[0.52157, 0.52549, 0.53333, ..., 0.65490, 0.66275, 0.67451],

[0.51765, 0.52157, 0.52549, ..., 0.65882, 0.66667, 0.67843],

...,

[0.53333, 0.45098, 0.42745, ..., 0.51373, 0.51765, 0.51765],

[0.54118, 0.54118, 0.54118, ..., 0.50588, 0.50588, 0.50588],

[0.53725, 0.54118, 0.54118, ..., 0.49804, 0.49804, 0.49804]]]], device='cuda:0'), 'cls': tensor([[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.],

[0.]]), 'bboxes': tensor([[0.24502, 0.19796, 0.05981, 0.35918],

[0.93919, 0.68271, 0.03262, 0.17661],

[0.38525, 0.78009, 0.76839, 0.43958],

[0.03903, 0.51653, 0.07560, 0.95727],

[0.46849, 0.10881, 0.02912, 0.21663],

[0.57778, 0.71162, 0.20071, 0.57507],

[0.37344, 0.70940, 0.20318, 0.57217],

[0.17957, 0.71104, 0.20893, 0.57607],

[0.09395, 0.81392, 0.07376, 0.36860],

[0.93172, 0.71576, 0.13594, 0.56750],

[0.70352, 0.08813, 0.02699, 0.17597],

[0.35744, 0.14137, 0.07873, 0.24320],

[0.26507, 0.07555, 0.04463, 0.15037],

[0.71333, 0.56531, 0.06865, 0.49777],

[0.11974, 0.47548, 0.07926, 0.40351],

[0.90751, 0.51335, 0.18450, 0.96049],

[0.46816, 0.15832, 0.10906, 0.31445],

[0.66972, 0.73596, 0.15902, 0.52646],

[0.27731, 0.36994, 0.09382, 0.65930],

[0.52503, 0.37679, 0.08261, 0.55033],

[0.07242, 0.84933, 0.05333, 0.29948],

[0.70756, 0.84883, 0.10577, 0.29848],

[0.58947, 0.39743, 0.82081, 0.68987],

[0.58411, 0.90657, 0.16034, 0.18612],

[0.07817, 0.19322, 0.15598, 0.38040],

[0.76486, 0.11588, 0.06338, 0.23103],

[0.11703, 0.79739, 0.08353, 0.40499],

[0.69307, 0.69134, 0.61335, 0.61549],

[0.07879, 0.49896, 0.15711, 0.99515],

[0.80149, 0.20461, 0.02891, 0.18928],

[0.23036, 0.23682, 0.05312, 0.28356],

[0.57695, 0.69632, 0.12954, 0.46396],

[0.63428, 0.69545, 0.10575, 0.47268],

[0.69174, 0.69588, 0.08666, 0.46484],

[0.75449, 0.69772, 0.06521, 0.46466],

[0.81762, 0.69981, 0.06612, 0.46397],

[0.87434, 0.69914, 0.09477, 0.46532],

[0.93273, 0.70197, 0.12056, 0.46662],

[0.36472, 0.70270, 0.08012, 0.32831],

[0.20398, 0.25642, 0.06903, 0.51041],

[0.26833, 0.82635, 0.11534, 0.34537],

[0.04193, 0.10880, 0.08367, 0.21612],

[0.08311, 0.97440, 0.04024, 0.04988],

[0.81688, 0.05605, 0.02298, 0.11074],

[0.86935, 0.22359, 0.06415, 0.43682],

[0.62041, 0.33906, 0.07520, 0.50199],

[0.44034, 0.31764, 0.02529, 0.22586],

[0.15484, 0.09691, 0.07351, 0.19341],

[0.60553, 0.04574, 0.04856, 0.09110],

[0.59956, 0.65729, 0.14773, 0.63702],

[0.49136, 0.43822, 0.05732, 0.21754],

[0.52522, 0.67351, 0.02121, 0.10446],

[0.39341, 0.43951, 0.08678, 0.21721],

[0.36359, 0.82106, 0.02712, 0.09340],

[0.88503, 0.17864, 0.06435, 0.35702],

[0.61112, 0.93148, 0.03578, 0.13515],

[0.29908, 0.10446, 0.09112, 0.20831],

[0.23522, 0.73639, 0.14624, 0.27795],

[0.02817, 0.80638, 0.05578, 0.38163],

[0.67763, 0.72429, 0.25277, 0.55056],

[0.70671, 0.50097, 0.29029, 0.99716],

[0.55339, 0.38378, 0.16555, 0.67717],

[0.07807, 0.29735, 0.15610, 0.57715],

[0.99628, 0.85936, 0.00732, 0.17710],

[0.42720, 0.20527, 0.03954, 0.21529],

[0.03816, 0.17090, 0.07627, 0.33931],

[0.56898, 0.64122, 0.17786, 0.54621],

[0.96177, 0.05077, 0.05229, 0.09911],

[0.39116, 0.06762, 0.05904, 0.13465],

[0.26009, 0.63568, 0.13823, 0.70242],

[0.63878, 0.23760, 0.38904, 0.46504],

[0.41803, 0.68350, 0.35058, 0.63255],

[0.28358, 0.16830, 0.04085, 0.33366],

[0.92216, 0.92012, 0.04072, 0.15814]]), 'batch_idx': tensor([ 0., 0., 0., 1., 2., 2., 2., 2., 2., 2., 3., 3., 3., 3., 3., 4., 5., 5., 6., 6., 6., 6., 7., 7., 8., 8., 8., 8., 9., 10., 10., 10., 10., 10., 10., 10., 10., 10., 10., 12., 12., 13., 13., 14., 15., 16., 16., 17., 17., 17., 17., 17., 17., 17., 18., 18., 19., 19., 20., 20., 22., 23.,

24., 24., 26., 26., 26., 27., 27., 27., 28., 29., 31., 31.])}

Can somebody tell me whether this error comes from my data or my code, and how to fix it? Thank you so much!

Additional

I ran it via this Colab: https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/train-yolov8-instance-segmentation-on-custom-dataset.ipynb#scrollTo=D2YkphuiaE7_

I just replaced the dataset with my own and adjusted the training command.

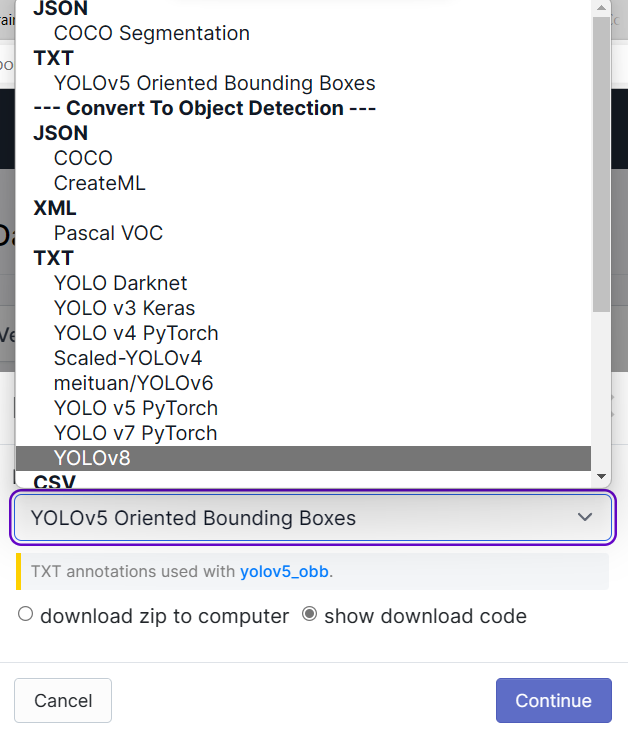

Is your dataset detection or segmentation?

I labelled it via Roboflow and chose the instance segmentation task. Once I finished labelling, I exported the dataset in YOLOv8 format:

followed by:

@rungrodkspeed hi, v5loader=True only supports detection, please set it to False if you're going to train segmentation. :)
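For anyone debugging the same thing, a quick way to see the loader mismatch is to compare the batch keys against what the segmentation loss reads. This is only a minimal sketch: `missing_segment_keys` and `ASSUMED_SEGMENT_KEYS` are hypothetical names, not part of ultralytics, and the only key confirmed by the traceback above is "masks"; the rest of the set is an assumption based on the printed batch.

```python
# Keys the segmentation loss is ASSUMED to need. Only "masks" is confirmed
# by the traceback in this issue; the others are taken from the printed batch.
ASSUMED_SEGMENT_KEYS = {"img", "cls", "bboxes", "batch_idx", "masks"}


def missing_segment_keys(batch):
    """Return the assumed-required keys that the batch dict does not contain."""
    return sorted(ASSUMED_SEGMENT_KEYS - set(batch))


# Keys copied from the print(batch.keys()) output reported above.
reported_keys = ["ori_shape", "ratio_pad", "im_file", "img", "cls", "bboxes", "batch_idx"]
batch = {key: None for key in reported_keys}

print(missing_segment_keys(batch))  # ['masks']
```

A batch built by a detection-only loader has no "masks" entry, which is consistent with the KeyError in the first traceback.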

@Laughing-q With v5loader=False, this error happens instead:

Traceback (most recent call last):

File "/usr/local/bin/yolo", line 8, in <module>

sys.exit(entrypoint())

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/cfg/__init__.py", line 237, in entrypoint

func(cfg)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/v8/segment/train.py", line 151, in train

model.train(**vars(cfg))

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/engine/model.py", line 201, in train

self.trainer.train()

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/engine/trainer.py", line 182, in train

self._do_train(int(os.getenv("RANK", -1)), world_size)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/engine/trainer.py", line 283, in _do_train

for i, batch in pbar:

File "/usr/local/lib/python3.8/dist-packages/tqdm/std.py", line 1195, in __iter__

for obj in iterable:

File "/usr/local/lib/python3.8/dist-packages/torch/utils/data/dataloader.py", line 628, in __next__

data = self._next_data()

File "/usr/local/lib/python3.8/dist-packages/torch/utils/data/dataloader.py", line 1333, in _next_data

return self._process_data(data)

File "/usr/local/lib/python3.8/dist-packages/torch/utils/data/dataloader.py", line 1359, in _process_data

data.reraise()

File "/usr/local/lib/python3.8/dist-packages/torch/_utils.py", line 543, in reraise

raise exception

ValueError: Caught ValueError in DataLoader worker process 0.

Original Traceback (most recent call last):

File "/usr/local/lib/python3.8/dist-packages/torch/utils/data/_utils/worker.py", line 302, in _worker_loop

data = fetcher.fetch(index)

File "/usr/local/lib/python3.8/dist-packages/torch/utils/data/_utils/fetch.py", line 58, in fetch

data = [self.dataset[idx] for idx in possibly_batched_index]

File "/usr/local/lib/python3.8/dist-packages/torch/utils/data/_utils/fetch.py", line 58, in <listcomp>

data = [self.dataset[idx] for idx in possibly_batched_index]

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/data/base.py", line 180, in __getitem__

return self.transforms(self.get_label_info(index))

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/data/augment.py", line 48, in __call__

data = t(data)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/data/augment.py", line 48, in __call__

data = t(data)

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/data/augment.py", line 361, in __call__

i = self.box_candidates(box1=instances.bboxes.T,

File "/usr/local/lib/python3.8/dist-packages/ultralytics/yolo/data/augment.py", line 375, in box_candidates

return (w2 > wh_thr) & (h2 > wh_thr) & (w2 * h2 / (w1 * h1 + eps) > area_thr) & (ar < ar_thr) # candidates

ValueError: operands could not be broadcast together with shapes (6,) (7,)
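To illustrate what this ValueError means, here is a minimal NumPy sketch. The return expression is copied from the traceback above; the rest of `box_candidates` (argument defaults, how widths and heights are derived from (4, n) xyxy arrays) and the `make_boxes` helper are assumptions for illustration, not the actual ultralytics code. The point is only that the function compares boxes before and after augmentation element by element, so the two arrays must hold the same number of boxes.

```python
import numpy as np


def box_candidates(box1, box2, wh_thr=2, ar_thr=100, area_thr=0.1, eps=1e-16):
    # box1: boxes before augmentation, box2: boxes after; both (4, n) in xyxy order.
    # Defaults and the width/height computation are assumptions.
    w1, h1 = box1[2] - box1[0], box1[3] - box1[1]
    w2, h2 = box2[2] - box2[0], box2[3] - box2[1]
    ar = np.maximum(w2 / (h2 + eps), h2 / (w2 + eps))  # aspect ratio
    # Return expression as shown in the traceback.
    return (w2 > wh_thr) & (h2 > wh_thr) & (w2 * h2 / (w1 * h1 + eps) > area_thr) & (ar < ar_thr)


def make_boxes(n):
    # Hypothetical helper: n identical 10x10 boxes, shape (4, n).
    return np.tile(np.array([[0.0], [0.0], [10.0], [10.0]]), (1, n))


# Same number of boxes on both sides: works and keeps every box.
same_count = box_candidates(make_boxes(6), make_boxes(6))

# 7 boxes before augmentation, 6 after: the element-wise division cannot broadcast.
try:
    box_candidates(make_boxes(7), make_boxes(6))
    error_message = None
except ValueError as exc:
    error_message = str(exc)

print(error_message)
```

One plausible reading, consistent with the mixed-label discussion later in this thread, is that the number of boxes and the number of segments for an image did not match, but that is an inference rather than something the traceback proves.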

;(

Same error, @AyushExel you can use this dataset

https://app.roboflow.com/roboflow-100/cables-nl42k/1

It's supposed to be for OD. I am running v8 for OD, so I'm not sure how or why this is linked to segmentation.

Okay, thanks for reporting. @Laughing-q @glenn-jocher this seems reproducible in the Colab. We need to take a deeper look at the differences between the dataloaders. @FrancescoSaverioZuppichini btw, were you able to train on this dataset in the yolov5 repo?

Yes I was, happy to help btw since this is a priority for us

@AyushExel I'd like to ask what happens if I have a mixed dataset (bboxes and segments) and I train yolov8 for object detection on it.

@FrancescoSaverioZuppichini v8 should train detection models on such a dataset, but training segmentation models on it should cause problems. v5 failed silently in this case. We're addressing that issue here - https://github.com/ultralytics/ultralytics/pull/598

@AyushExel Thanks for the response 🙏 Not sure I got this, when I have mixed bboxes and segments/polygons what is the behaviour of yolov8 and/or yolov5? Are the segments converted to bboxes?

@FrancescoSaverioZuppichini dataset - Mixed boxes and segments -> let's call it mixed dataset

YOLOv5:

- Current behaviour for detection: should work.
- Current behaviour for segmentation: should work, but not as expected. The mixed dataset will fail silently without throwing errors. This is not desired.
- Desired behaviour: in case the dataset is mixed, don't support segmentation training. Addressed here - https://github.com/ultralytics/yolov5/pull/10820

Ultralytics Repo:

- Current behaviour for detection: should work.
- Current behaviour for segmentation: doesn't work. The mixed dataset will fail explicitly, throwing errors. But it's not handled properly, so it fails after some time into training.
- Desired behaviour: in case the dataset is mixed, don't support segmentation training and force training detection only with a warning. Change addressed here - https://github.com/ultralytics/ultralytics/pull/598

I don't think that this is the ideal behavior. The training should just fail for segmentation, but we're getting multiple users with errors related to mixed labels, so until we figure out the source, this seems to be the right compromise. @Laughing-q please add if I missed/misrepresented something.

@AyushExel thanks for the detailed reply, makes sense! I totally agree with your opinion about segmentation.

For detection, are segments converted to bboxes before data augmentation?

@FrancescoSaverioZuppichini Segment labels, when available, are used to augment the detection labels better. They are used for more accurate copy-paste augmentations. Some details here https://github.com/ultralytics/yolov5/pull/3845 (There is a better issue thread but I can't find it. I'm on my phone now; I'll search for it when I'm back on my system.) I'm not sure whether they're converted to boxes, though, and I might be wrong as I didn't implement the augmentation pipeline. @Laughing-q might be able to confirm.

EDIT: here's the copyPaste augmentation implementation https://github.com/ultralytics/ultralytics/blob/936414c615bb8635f081b36b98641aac0ed17e64/ultralytics/yolo/data/augment.py#L495

@AyushExel Thanks! I just saw this. @AyushExel @FrancescoSaverioZuppichini I corrected some details here. :)

dataset - Mixed boxes and segments -> let's call it mixed dataset

YOLOv5:

- Current behaviour for detection && segmentation: should work, but not as expected. The mixed dataset will fail silently without throwing errors. This is not desired.
- Desired behaviour: in case the dataset is mixed, don't support segmentation training. Addressed here - https://github.com/ultralytics/yolov5/pull/10820

Ultralytics Repo:

- Current behaviour for detection && segmentation: doesn't work. The mixed dataset will fail explicitly, throwing errors. But it's not handled properly, so it fails after some time into training.
- Desired behaviour: in case the dataset is mixed, don't support segmentation training and force training detection only with a warning. Change addressed here - https://github.com/ultralytics/ultralytics/pull/598

I don't think that this is the ideal behavior. The training should just fail for segmentation, but we're getting multiple users with errors related to mixed labels, so until we figure out the source, this seems to be the right compromise.
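If you want to check whether your own dataset is "mixed" in this sense before training, a rough sketch along these lines may help. It assumes the usual YOLO text label layout, where a box line is `class x_center y_center width height` (5 fields) and a segment line is `class x1 y1 x2 y2 ...` with at least three points (an odd field count of 7 or more). `label_kind` and `is_mixed` are hypothetical helpers, not ultralytics functions.

```python
def label_kind(line):
    """Classify one YOLO label line as 'box', 'segment', or 'invalid'."""
    fields = line.split()
    if len(fields) == 5:
        return "box"  # class id + x_center, y_center, width, height
    if len(fields) >= 7 and len(fields) % 2 == 1:
        return "segment"  # class id + at least three (x, y) polygon points
    return "invalid"


def is_mixed(lines):
    """True if the label lines contain both box and segment annotations."""
    kinds = {label_kind(line) for line in lines if line.strip()}
    return {"box", "segment"} <= kinds


# Made-up label lines for illustration only.
box_line = "0 0.5 0.5 0.2 0.3"
segment_line = "1 0.1 0.1 0.4 0.1 0.4 0.6 0.1 0.6"

print(is_mixed([box_line, segment_line]))  # True
print(is_mixed([box_line, box_line]))      # False
```

To scan a real dataset you would read every `.txt` file under the labels folder and pass all of its lines through `is_mixed`.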

Also, segment labels are used to augment the detection labels better when segment labels are available. They are used for more accurate random_perspective and to support copy-paste augmentations. The segment labels are converted to bboxes after copy-paste and random_perspective. There's an example picture somewhere in yolov5's issues showing that augmentations with segment labels are more accurate, but I can't find it either.😂
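As a rough idea of what "segments converted to bboxes" means, here is a minimal sketch that takes the tightest axis-aligned box around a polygon. `segment_to_xywh` is a hypothetical helper written for this comment; it is not the ultralytics implementation, which may clip or filter points differently.

```python
def segment_to_xywh(points):
    """Tightest axis-aligned box around a polygon.

    points: list of (x, y) pairs, e.g. normalized to [0, 1].
    Returns (x_center, y_center, width, height).
    """
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    x_min, x_max = min(xs), max(xs)
    y_min, y_max = min(ys), max(ys)
    return (
        (x_min + x_max) / 2,
        (y_min + y_max) / 2,
        x_max - x_min,
        y_max - y_min,
    )


# Made-up triangle for illustration.
triangle = [(0.2, 0.2), (0.6, 0.2), (0.4, 0.8)]
box = segment_to_xywh(triangle)
print(box)
```

Because the box is recomputed from the transformed polygon points, it can fit the object more tightly after a perspective warp than a box obtained by warping the original box corners.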

@rungrodkspeed closing the issue as it has been solved in #598. Segmentation training now works correctly with v5loader=False. Feel free to reopen it if you have related issues. :)

@Laughing-q copy that, thanks for the amazing work :hugs: