YOLO OBB: poor orientation on squares, proposal for fixing the isotropic bounding box issue

Search before asking

- [x] I have searched the Ultralytics YOLO issues and found no similar bug report.

Ultralytics YOLO Component

Train

Bug

Hi @glenn-jocher,

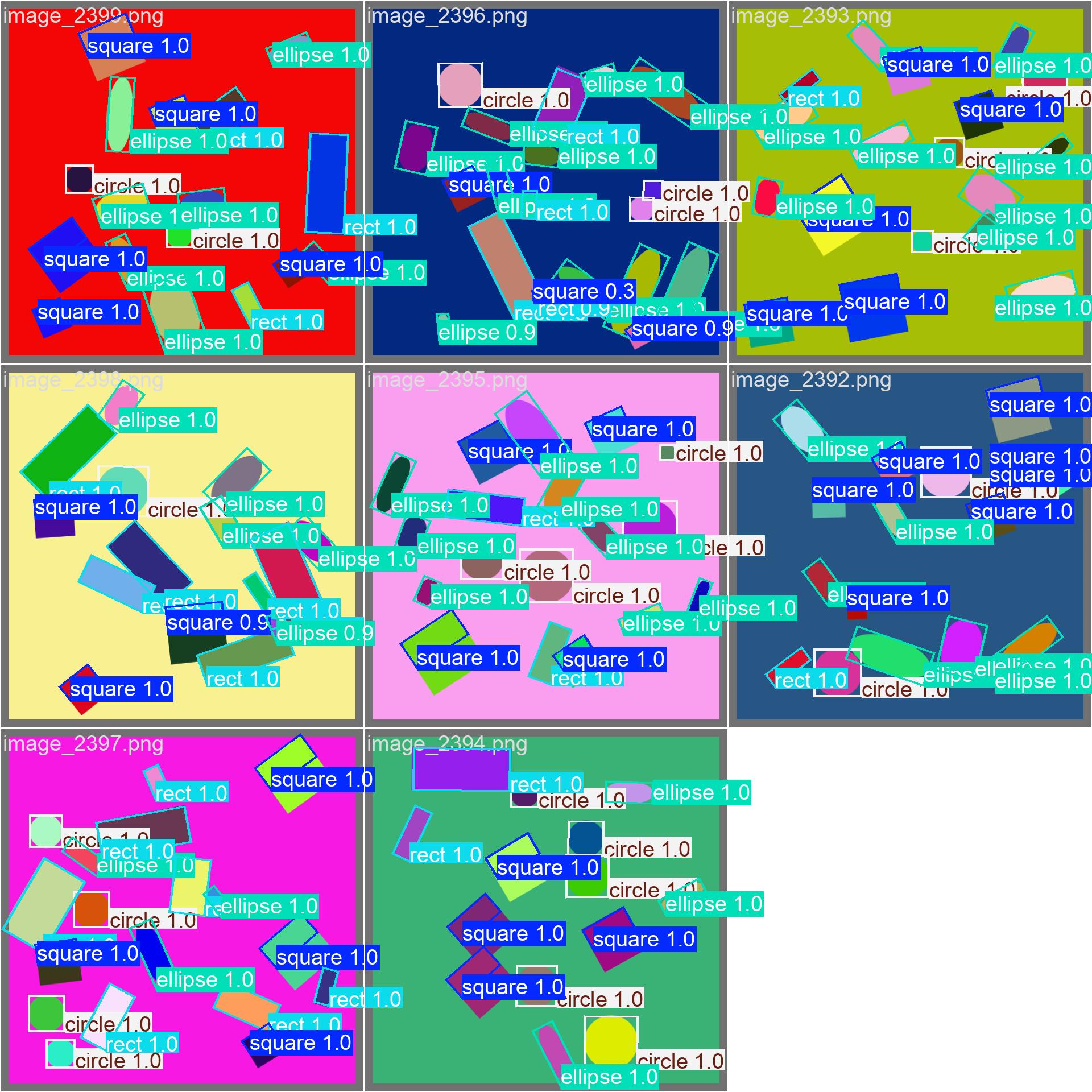

I evaluated YOLO11 OBB training on shapes (square, rectangle, circle, ellipse) using 100% synthetic data. Judging from the label annotations, the images and labels are correct:

I've also limited the rotation angle for squares to (pi/4 ... pi/2] and for circles to zero, to avoid symmetry-related issues.
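Why squares need this restriction can be seen from the xywhr → four-corner conversion itself. The NumPy sketch below is written from the standard rotation formula and is only assumed to behave like xywhr2xyxyxyxy(); `xywhr_to_corners` and `same_corner_set` are hypothetical helpers. For a square, θ and θ + π/2 produce the same four corners (just listed in a different order), while for a rectangle they do not.

```python
import numpy as np


def xywhr_to_corners(x, y, w, h, theta):
    """Hypothetical helper: return the 4 corners (4x2 array) of a rotated box."""
    c, s = np.cos(theta), np.sin(theta)
    # Half-extent vectors along the box's width and height axes
    vw = np.array([w / 2 * c, w / 2 * s])
    vh = np.array([-h / 2 * s, h / 2 * c])
    ctr = np.array([x, y])
    return np.stack([ctr + vw + vh, ctr + vw - vh, ctr - vw - vh, ctr - vw + vh])


def same_corner_set(p, q, tol=1e-9):
    """True if every corner of p coincides with some corner of q (order ignored)."""
    d = np.linalg.norm(p[:, None, :] - q[None, :, :], axis=-1)
    return bool(np.all(d.min(axis=1) < tol))


theta = 0.3
sq_a = xywhr_to_corners(50, 50, 10, 10, theta)
sq_b = xywhr_to_corners(50, 50, 10, 10, theta + np.pi / 2)
rect_a = xywhr_to_corners(50, 50, 20, 10, theta)
rect_b = xywhr_to_corners(50, 50, 20, 10, theta + np.pi / 2)

print(same_corner_set(sq_a, sq_b))      # True: the square looks identical
print(same_corner_set(rect_a, rect_b))  # False: the rectangle does not
```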

With labels created by the built-in xywhr2xyxyxyxy(), the training results look good for the other shapes, but the predicted orientations of squares are quite poor:

Interestingly, after I chose to label only the left half of each square (rotated by pi/4 ... pi/2, so it looks like the top half), training went unexpectedly well:

Regarding rotation, here is why the top half appears selected in the images:

In a standard (geometric) coordinate system (x →, y ↑, origin at the center), a rotation built from sin and cos with a positive angle is counter-clockwise (↺).

In a computer-graphics coordinate system (x →, y ↓, origin at the top-left), the y-axis is flipped, so the same rotation with a positive angle appears clockwise (↻).
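The rotation formula is the same in both systems; only the direction of the y-axis differs. This small NumPy sketch rotates the point (1, 0) by +90° with the standard rotation matrix; the numbers are identical in both systems, and the comments explain why the visual direction differs.

```python
import numpy as np


def rotate(point, theta):
    """Rotate a 2D point about the origin with [[cos, -sin], [sin, cos]]."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]]) @ np.asarray(point, dtype=float)


p = rotate([1.0, 0.0], np.pi / 2)
print(np.round(p, 6))  # [0. 1.]

# Geometric axes (y up): (0, 1) lies above the origin, so +x -> +y is counter-clockwise.
# Image axes (y down): (0, 1) lies below the origin, so the same turn looks clockwise.
```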

Edit:

I saw the discussion of regularize_rboxes in the OBB Datasets Overview. Can we disable the h <-> w swap somehow? For squares, h and w are approximately equal, so the swap will happen in about 50% of cases, possibly causing the square-related issue. Since we use cv2's minAreaRect, shouldn't the swap be unnecessary?
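To illustrate the concern, here is a minimal sketch assuming a *long-edge* convention (swap w and h whenever w < h, add π/2 to the angle, then wrap into [0, π)). `long_edge_regularize` is a hypothetical helper and is not claimed to match regularize_rboxes in any particular Ultralytics version. Two near-square boxes that differ only by tiny size jitter end up with angles π/2 apart.

```python
import math


def long_edge_regularize(w, h, theta):
    """Hypothetical long-edge convention: force w >= h, adjust theta, wrap to [0, pi)."""
    if w < h:
        w, h, theta = h, w, theta + math.pi / 2
    return w, h, theta % math.pi


# Same angle, sizes differ only by 0.001 px
box_a = long_edge_regularize(10.001, 10.0, 0.2)
box_b = long_edge_regularize(10.0, 10.001, 0.2)
print(box_a)  # (10.001, 10.0, 0.2)
print(box_b)  # w/h swapped and angle 0.2 + pi/2 (about 1.7708)
```

For an exact square both results describe the same box, so the angle target effectively jumps by π/2 depending on label noise.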

Edit2:

I've checked: regularize_rboxes is not used during training, so it cannot affect this issue.

Edit3: The following line is probably causing the issue (link):

```python
return a * cos2 + b * sin2, a * sin2 + b * cos2, (a - b) * cos * sin
```

This term becomes insensitive to $\theta$ when a and b get close to each other. After applying a fix, all squares train correctly as well!
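The insensitivity follows directly from the Gaussian form. Writing the rotated covariance as $\Sigma = R(\theta)\,\mathrm{diag}(a, b)\,R(\theta)^\top$ (with $a = w^2/12$, $b = h^2/12$) reproduces exactly the three returned terms, and both $\Sigma$ and its derivative with respect to $\theta$ lose all angle information when $a = b$:

$$
R(\theta) = \begin{pmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix},\qquad
\Sigma = \begin{pmatrix} a\cos^2\theta + b\sin^2\theta & (a-b)\cos\theta\sin\theta \\ (a-b)\cos\theta\sin\theta & a\sin^2\theta + b\cos^2\theta \end{pmatrix}
$$

$$
\frac{\partial \Sigma}{\partial \theta} = (a-b)\begin{pmatrix} -\sin 2\theta & \cos 2\theta \\ \cos 2\theta & \sin 2\theta \end{pmatrix},
\qquad a = b \;\Rightarrow\; \Sigma = a I \ \text{for every } \theta .
$$

So any signal reaching $\theta$ through $\Sigma$ is scaled by $a - b$ and vanishes for squares; this holds for the Gaussian representation itself, not only for one implementation of it.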

I'm not sure whether only this implementation is incorrect or whether the original paper itself has a flaw.

Edit4:

Yup, the reference implementation linked from the paper has the same issue (link):

```python
import torch


def gbb_form(boxes):
    # (x, y, w, h, angle) -> (x, y, w^2/12, h^2/12, angle): Gaussian variances of a uniform box
    return torch.cat((boxes[:, :2], torch.pow(boxes[:, 2:4], 2) / 12, boxes[:, 4:]), 1)


def rotated_form(a_, b_, angles):
    # Entries of the rotated covariance R diag(a_, b_) R^T
    a = a_ * torch.pow(torch.cos(angles), 2.0) + b_ * torch.pow(torch.sin(angles), 2.0)
    b = a_ * torch.pow(torch.sin(angles), 2.0) + b_ * torch.pow(torch.cos(angles), 2.0)
    # Equals (a_ - b_) * cos * sin, so it is zero for every angle when a_ == b_
    c = a_ * torch.cos(angles) * torch.sin(angles) - b_ * torch.sin(angles) * torch.cos(angles)
    return a, b, c


def probiou_loss(pred, target, eps=1e-3, mode="l1"):
    """
    pred -> a matrix [N,5](x,y,w,h,angle - in radians) containing ours predicted box ;in case of HBB angle == 0
    target -> a matrix [N,5](x,y,w,h,angle - in radians) containing ours target box ;in case of HBB angle == 0
    eps -> threshold to avoid infinite values
    mode -> ('l1' in [0,1] or 'l2' in [0,inf]) metrics according our paper
    """
    gbboxes1 = gbb_form(pred)
    gbboxes2 = gbb_form(target)

    x1, y1, a1_, b1_, c1_ = gbboxes1[:, 0], gbboxes1[:, 1], gbboxes1[:, 2], gbboxes1[:, 3], gbboxes1[:, 4]
    x2, y2, a2_, b2_, c2_ = gbboxes2[:, 0], gbboxes2[:, 1], gbboxes2[:, 2], gbboxes2[:, 3], gbboxes2[:, 4]

    # For squares (a_ == b_) these terms no longer depend on the angle c1_ / c2_
    a1, b1, c1 = rotated_form(a1_, b1_, c1_)
    a2, b2, c2 = rotated_form(a2_, b2_, c2_)
    # ... (remainder of probiou_loss not included in this excerpt)
```
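A quick numerical check of the excerpt above: the sketch below is a NumPy transcription of `rotated_form` (NumPy instead of torch only to keep it dependency-light; `spread` is a hypothetical helper). Sweeping the angle shows that a square's (a, b, c) terms do not change at all, while a rectangle's do.

```python
import numpy as np


def rotated_form(a_, b_, angles):
    """NumPy transcription of rotated_form: entries of R diag(a_, b_) R^T."""
    cos, sin = np.cos(angles), np.sin(angles)
    a = a_ * cos**2 + b_ * sin**2
    b = a_ * sin**2 + b_ * cos**2
    c = a_ * cos * sin - b_ * sin * cos
    return a, b, c


def spread(values):
    """Range (max - min) of a term over all tested angles."""
    return float(values.max() - values.min())


angles = np.linspace(0.0, np.pi, 7)

# Square, w = h = 10: gbb_form gives a_ = b_ = 10**2 / 12
sq = rotated_form(100 / 12, 100 / 12, angles)
print([spread(t) for t in sq])  # all ~0: (a, b, c) carry no angle information

# Rectangle, w = 20, h = 10: the terms clearly change with the angle
rect = rotated_form(400 / 12, 100 / 12, angles)
print([spread(t) for t in rect])
```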

[Image: isotropic issues on square OBB]

Environment

```
Ultralytics 8.3.240 🚀 Python-3.12.9 torch-2.9.1+cu128 CUDA:0 (Tesla T4, 15931MiB)
Setup complete ✅ (8 CPUs, 54.9 GB RAM, 57.5/122.9 GB disk)

OS                  Linux-6.14.0-1017-azure-x86_64-with-glibc2.35
Environment         Docker
Python              3.12.9
Install             git
Path                /workspace/ultralytics/ultralytics
RAM                 54.87 GB
Disk                57.5/122.9 GB
CPU                 AMD EPYC 7V12 64-Core Processor
CPU count           8
GPU                 Tesla T4, 15931MiB
GPU count           1
CUDA                12.8

numpy               ✅ 2.2.6>=1.23.0
matplotlib          ✅ 3.10.8>=3.3.0
opencv-python       ✅ 4.12.0.88>=4.6.0
pillow              ✅ 12.0.0>=7.1.2
pyyaml              ✅ 6.0.3>=5.3.1
requests            ✅ 2.32.5>=2.23.0
scipy               ✅ 1.16.3>=1.4.1
torch               ✅ 2.9.1>=1.8.0
torch               ✅ 2.9.1!=2.4.0,>=1.8.0; sys_platform == "win32"
torchvision         ✅ 0.24.1>=0.9.0
psutil              ✅ 7.1.3>=5.8.0
polars              ✅ 1.36.1>=0.20.0
ultralytics-thop    ✅ 2.0.18>=2.0.18
```

Minimal Reproducible Example

See details above. This was also discussed in a previous, already closed issue (link).

Related experiments are in the yolo-shapes repository.

Additional

No response

Are you willing to submit a PR?

- [x] Yes I'd like to help by submitting a PR!

👋 Hello @Scherlac, thank you for your detailed report and for digging so deep into the OBB square/isotropic edge case 🚀🧩 This is an automated response, and an Ultralytics engineer will also assist soon.

We recommend a visit to the Docs for new users where you can find many Python and CLI usage examples and where many of the most common questions may already be answered.

If this is a 🐛 Bug Report, please provide a minimum reproducible example to help us debug it. Since you’ve shared investigation details and screenshots, it would still really help to include an explicit MRE that we can run end-to-end (single script + exact command) that reproduces the square-orientation behavior on a fresh clone, ideally with a tiny synthetic dataset subset and fixed seeds.

If this is a custom training ❓ Question, please provide as much information as possible, including dataset image examples and training logs, and verify you are following our Tips for Best Training Results.

Join the Ultralytics community where it suits you best. For real-time chat, head to Discord 🎧. Prefer in-depth discussions? Check out Discourse. Or dive into threads on our Subreddit to share knowledge with the community.

Upgrade

Upgrade to the latest ultralytics package including all requirements in a Python>=3.8 environment with PyTorch>=1.8 to verify your issue is not already resolved in the latest version:

pip install -U ultralytics

Environments

YOLO may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

- Notebooks with free GPU

- Google Cloud Deep Learning VM. See GCP Quickstart Guide

- Amazon Deep Learning AMI. See AWS Quickstart Guide

- Docker Image. See Docker Quickstart Guide

Status

If this badge is green, all Ultralytics CI tests are currently passing. CI tests verify correct operation of all YOLO Modes and Tasks on macOS, Windows, and Ubuntu every 24 hours and on every commit.

To help the team quickly validate and triage the square orientation/isotropy behavior you’re describing (and your suspected hotspot in metrics), please add the following to the issue if possible:

- Exact training command (or Python snippet) you ran for YOLO11 OBB, including all args (imgsz, epochs, batch, device, seed, augment settings) 📌

- A tiny attached/linked dataset subset sufficient to reproduce (a handful of images + labels), or steps to generate the synthetic data deterministically

- Expected vs actual outputs with the same val_batch0_pred.jpg/val_batch0_labels.jpg artifacts you already shared (perfect)

- If you have a local patch, sharing the minimal diff (or pointing to the exact file/lines changed) will help an engineer review quickly before you open a PR 🙏
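For the "generate the synthetic data deterministically" item, a minimal sketch, assuming the documented YOLO OBB label format (`class x1 y1 x2 y2 x3 y3 x4 y4`, coordinates normalized to [0, 1]). The function names, image size, and size ranges are illustrative assumptions, not the reporter's yolo-shapes generator, and image rendering is omitted. A fixed seed makes every run emit identical labels.

```python
import math
import random


def square_obb_label(rng, img_size=640, cls=0):
    """Hypothetical generator: one square as a YOLO OBB label line."""
    side = rng.uniform(40, 120)
    # Keep the centre one side length from the border so all corners stay inside
    cx = rng.uniform(side, img_size - side)
    cy = rng.uniform(side, img_size - side)
    # Angle drawn from the pi/4 ... pi/2 range used for squares in this report
    theta = rng.uniform(math.pi / 4, math.pi / 2)
    c, s = math.cos(theta), math.sin(theta)
    half = side / 2
    # Half-extent vectors along the two (equal) edges of the square
    vw = (half * c, half * s)
    vh = (-half * s, half * c)
    corners = [
        (cx + vw[0] + vh[0], cy + vw[1] + vh[1]),
        (cx + vw[0] - vh[0], cy + vw[1] - vh[1]),
        (cx - vw[0] - vh[0], cy - vw[1] - vh[1]),
        (cx - vw[0] + vh[0], cy - vw[1] + vh[1]),
    ]
    coords = [v / img_size for pt in corners for v in pt]
    return f"{cls} " + " ".join(f"{v:.6f}" for v in coords)


def make_labels(seed, n=3):
    """Same seed -> identical label lines."""
    rng = random.Random(seed)
    return [square_obb_label(rng) for _ in range(n)]


labels = make_labels(seed=0)
print("\n".join(labels))
```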

There was a PR for this before: https://github.com/ultralytics/ultralytics/pull/16851

However, it decreased performance on DOTA, so it wasn't merged.

The PR you mention seems to be quite different from my proposal.

Wouldn't it make sense to run some performance benchmarks?

I'm not sure what is behind all the currently failing CI checks. Almost all tests pass in my local environment. I'm about to check what might be failing.

Any hints on the failing benchmarks?

The CI fails because the changes cause lower mAP during validation of the pretrained YOLO11n OBB model on DOTA8.

A PR should not decrease mAP.

How large is the decrease? Can you link the location of the test? (The source code, the pipeline to check, and the expected values would be nice.)

It's shown in the CI logs:

https://github.com/ultralytics/ultralytics/actions/runs/20414707682/job/58656628491?pr=23012#step:12:1866

The test is here. The threshold is passed via the `verbose` argument:

https://github.com/ultralytics/ultralytics/blob/9fb990d1a2c38ed0bacd2d2b37f8309ee60028a5/.github/workflows/ci.yml#L145

Just to clarify:

I should not worry about errors like:

ERROR ❌ Benchmark failure for Axelera: inference not supported on CPU

But I should worry about ones like:

```
assert all(x > floor for x in metrics if not np.isnan(x)), f"Benchmark failure: metric(s) < floor {floor}"
       ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
AssertionError: Benchmark failure: metric(s) < floor 0.597
Error: Process completed with exit code 1.
```

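And about the changes in the benchmark table:

From:

| # | Format | Status | Size (MB) | mAP50-95 | Inference time (ms/im) | FPS |
|---|--------|--------|-----------|----------|------------------------|-----|
| 1 | PyTorch | ✅ | 5.5 | 0.6504 | 20.93 | 47.78 |
| 2 | TorchScript | ✅ | 10.6 | 0.5981 | 39.62 | 25.24 |
| 3 | ONNX | ✅ | 10.3 | 0.5981 | 8.49 | 117.77 |

To:

| # | Format | Status | Size (MB) | mAP50-95 | Inference time (ms/im) | FPS |
|---|--------|--------|-----------|----------|------------------------|-----|
| 1 | PyTorch | ✅ | 5.5 | 0.5479 | 29.79 | 33.56 |
| 2 | TorchScript | ✅ | 10.6 | 0.5772 | 42.54 | 23.51 |
| 3 | ONNX | ✅ | 10.3 | 0.3402 | 9.8 | 102.0 |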

Yes, ignore the “inference not supported” lines from optional backends. The PR is failing on the hard gate `Benchmark failure: metric(s) < floor`: CI runs Benchmark mode with `verbose` set to a float minimum-metric floor and asserts that the exported-format metrics stay above it (see the benchmarks.py floor check and the Benchmark mode docs).

```bash
yolo benchmark model=yolo11n-obb.pt data=dota8.yaml imgsz=640 verbose=0.597
```
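The gate in the quoted assertion can be reproduced in plain Python with the mAP values from the From/To benchmark rows above (only the three formats quoted there; the real benchmark covers more). `passes_floor` is a hypothetical name, and `math.isnan` stands in for the `np.isnan` used in the CI assertion.

```python
import math


def passes_floor(metrics, floor):
    """Same rule as the quoted assertion: every non-NaN metric must exceed the floor."""
    return all(x > floor for x in metrics if not math.isnan(x))


floor = 0.597
before = [0.6504, 0.5981, 0.5981]  # PyTorch, TorchScript, ONNX before the change
after = [0.5479, 0.5772, 0.3402]   # same formats with the proposed change

print(passes_floor(before, floor))  # True
print(passes_floor(after, floor))   # False
```

Note that TorchScript and ONNX cleared the floor by only 0.0011 before the change, so even a small metric drop in those exports trips the gate.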

Are there any optimizations in YOLO26 that address the poor performance of YOLO OBB on square objects?

For true squares the rotation is fundamentally ambiguous (when w≈h, many angles represent the same box and the rotation term becomes weak/unstable), so there isn’t a YOLO26-specific “square optimization” beyond enforcing a consistent labeling convention (fixed corner ordering in xyxyxyxy, or a tiny ε perturbation to break w==h ties) and evaluating with OBB/polygon IoU rather than raw angle; see the supported angle constraints in the OBB task docs.
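If an angle-based check is still wanted next to polygon IoU, a symmetry-aware angle error avoids penalizing equivalent square orientations. This is a hypothetical helper (`symmetric_angle_error`), not part of Ultralytics: angles are compared modulo the shape's rotational period, π for rectangles and π/2 for squares.

```python
import math


def symmetric_angle_error(pred, target, period):
    """Smallest angle difference when angles are equivalent modulo `period`.

    period=math.pi for rectangles (180-degree symmetry),
    period=math.pi / 2 for squares (90-degree symmetry).
    """
    d = (pred - target) % period
    return min(d, period - d)


target = 0.1
pred = 0.1 + math.pi / 2  # a quarter turn away

print(abs(pred - target))                                # naive error: about 1.571 rad
print(symmetric_angle_error(pred, target, math.pi / 2))  # ~0: identical square
print(symmetric_angle_error(pred, target, math.pi))      # about 1.571 rad for a rectangle
```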

You can try YOLO26-OBB. There's a new angle loss.

@Y-T-G Is the metric calculation different in YOLO26?

The main issue is in the `_get_covariance_matrix` function, where a and b become about the same for squares.
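To make "a and b become about the same" concrete, this NumPy sketch measures how strongly the covariance terms react to the angle as h approaches w. It re-derives the terms from the returned expression with a = w²/12, b = h²/12; `covariance_terms` and `angle_sensitivity` are hypothetical names, not the Ultralytics function. The sensitivity is proportional to a − b, so the angle signal fades gradually for near-squares rather than only at exact equality.

```python
import numpy as np


def covariance_terms(w, h, theta):
    """(a, b, c) of the rotated Gaussian covariance, with a_ = w^2/12 and b_ = h^2/12."""
    a_, b_ = w**2 / 12, h**2 / 12
    cos, sin = np.cos(theta), np.sin(theta)
    return np.array([a_ * cos**2 + b_ * sin**2, a_ * sin**2 + b_ * cos**2, (a_ - b_) * cos * sin])


def angle_sensitivity(w, h, theta=0.3, eps=1e-6):
    """Norm of d(a, b, c)/d(theta), estimated with a central finite difference."""
    diff = covariance_terms(w, h, theta + eps) - covariance_terms(w, h, theta - eps)
    return float(np.linalg.norm(diff / (2 * eps)))


for h in (10.0, 15.0, 19.0, 19.9, 20.0):
    print(f"w=20, h={h:5.1f}: |dSigma/dtheta| = {angle_sensitivity(20.0, h):.4f}")
```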

@glenn-jocher Still no regression test. Sorry, no ETA.

You mean mAP? It should be the same.

There's an angle loss in YOLO26. I think it might fix this issue.