tokio: add io_uring support

Motivation

This PR explores one way to add io_uring support to the tokio runtime. In the following sections I'll briefly explain some of the challenges and performance implications of integrating io_uring with the tokio runtime in this way.

tokio::platform::linux::uring

The tokio-uring crate is placed as a module at tokio::platform::linux::uring, and all module paths inside have been updated to match the new path. The tokio_uring::runtime module is removed and the io_uring driver is integrated with the tokio runtime. The inclusion of this module in the module tree is conditioned on the uring feature flag.

The single io_uring instance and its operations slab are protected by a mutex; this can be improved in the future by creating a thread-per-core runtime or using a sharded mutex for the multi-threaded runtime.
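To make the locking arrangement concrete, here is a minimal sketch of a driver whose ring state and operation table sit behind one mutex. It uses only the standard library; `Inner`, `SharedDriver`, `submit_op`, and `in_flight` are hypothetical names for illustration and are not the types used in this PR, and a `HashMap` stands in for the slab.

```rust
use std::collections::HashMap;
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical stand-in for the state guarded by the single mutex:
/// queued submission entries plus the table of in-flight operations.
#[derive(Default)]
struct Inner {
    /// Keys of entries queued but not yet handed to the kernel.
    pending_sqes: Vec<u64>,
    /// In-flight operations, keyed the way a slab index would be.
    ops: HashMap<u64, &'static str>,
    next_key: u64,
}

/// Cloneable handle; every clone contends on the same lock.
#[derive(Clone, Default)]
struct SharedDriver {
    inner: Arc<Mutex<Inner>>,
}

impl SharedDriver {
    /// Registers an operation and queues its entry under the lock.
    fn submit_op(&self, name: &'static str) -> u64 {
        let mut inner = self.inner.lock().unwrap();
        let key = inner.next_key;
        inner.next_key += 1;
        inner.ops.insert(key, name);
        inner.pending_sqes.push(key);
        key
    }

    fn in_flight(&self) -> usize {
        self.inner.lock().unwrap().ops.len()
    }
}

/// Four threads submit 100 operations each through the same mutex.
fn example() -> usize {
    let driver = SharedDriver::default();
    let handles: Vec<_> = (0..4)
        .map(|_| {
            let d = driver.clone();
            thread::spawn(move || {
                for _ in 0..100 {
                    d.submit_op("read");
                }
            })
        })
        .collect();
    for h in handles {
        h.join().unwrap();
    }
    driver.in_flight()
}
```

Every submission from every worker thread serializes on `inner`, which is the contention the thread-per-core and sharded-mutex ideas are meant to reduce.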

Other changes have been made to the driver to prepare it for integration into the tokio runtime.

uring feature flag

A new feature flag called uring is added to Cargo.toml along with its dependencies. Enabling this feature flag allows the user to use uring operations inside any tokio runtime.

Inclusion of uring::Driver in the runtime::Driver

io_uring_driver is added alongside time_driver in the tokio runtime driver. The runtime driver now also creates an instance of uring::Driver and returns its handle together with the handles of the other drivers, bundled in a runtime::driver::Resources instance. Associated functions have been added to runtime::Context to allow various components of the runtime to interact with the current Context's io_uring driver.

Integration with mio and the io driver

The uring driver now uses the io driver's handle to register itself with the io driver, and it deregisters itself when dropped. The io driver now flushes the io_uring submission queue entries before waiting on mio::poll::Poll for mio events.

After waking up, the io driver checks the read-readiness status of the io_uring driver and, if the io_uring driver is read-ready, ticks it, dispatching the resources associated with each completion queue entry.
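The ordering described in this section (flush, wait, check readiness, tick) can be sketched with a mock ring. This is only an illustration under stated assumptions: `MockUring`, `turn`, and the `wait_for_events` closure are hypothetical, the closure stands in for the blocking mio poll call, and the mock pretends the kernel completes every submitted entry immediately.

```rust
/// Hypothetical mock of the uring driver; not the type used in this PR.
#[derive(Default)]
struct MockUring {
    /// Entries queued by tasks but not yet submitted.
    queued: Vec<u64>,
    /// Entries handed to the (pretend) kernel, treated as already completed.
    submitted: Vec<u64>,
}

impl MockUring {
    fn push(&mut self, user_data: u64) {
        self.queued.push(user_data);
    }

    /// Hands all queued entries to the kernel; returns how many were flushed.
    fn flush(&mut self) -> usize {
        let n = self.queued.len();
        self.submitted.append(&mut self.queued);
        n
    }

    /// Mock readiness: readable whenever completions are waiting.
    fn is_read_ready(&self) -> bool {
        !self.submitted.is_empty()
    }

    /// Dispatches every waiting completion; returns how many were handled.
    fn tick(&mut self) -> usize {
        self.submitted.drain(..).count()
    }
}

/// One turn of the io driver in the order described above.
fn turn(uring: &mut MockUring, wait_for_events: impl FnOnce()) -> usize {
    uring.flush(); // 1. make sure the kernel has seen every queued entry
    wait_for_events(); // 2. block until some event source is ready
    if uring.is_read_ready() {
        uring.tick() // 3. dispatch completions
    } else {
        0
    }
}

fn example() -> (usize, usize) {
    let mut uring = MockUring::default();
    uring.push(1);
    uring.push(2);
    let first = turn(&mut uring, || {});
    let second = turn(&mut uring, || {});
    (first, second)
}
```

In this mock the first turn dispatches both entries and the second turn finds nothing to do. If `flush` were skipped, the first turn would wait with two entries the kernel never saw.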

Hooks in the runtime

A new parameter called io_uring_interval is added to the runtime. In the maintenance function, after every io_uring_interval ticks, the submission queue of the io_uring driver is flushed.

Flush before parking

All threads must flush the submission queue entries before going to sleep. This is needed to avoid situations where the runtime hangs because there are SQEs stuck in the submission queue (the kernel has not been notified about them).
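The two hooks described above, the periodic flush and the flush before parking, can be sketched together as follows. The `Worker` and `MockUring` types, their fields, and the way the tick counter is advanced are assumptions made for illustration; only the io_uring_interval name comes from this PR.

```rust
/// Hypothetical mock of the submission queue; counts queued entries only.
#[derive(Default)]
struct MockUring {
    sq_len: usize,
}

impl MockUring {
    fn push(&mut self) {
        self.sq_len += 1;
    }

    /// Pretends to notify the kernel about every queued entry.
    fn flush(&mut self) {
        self.sq_len = 0;
    }
}

/// Hypothetical worker thread state.
struct Worker {
    tick: u32,
    io_uring_interval: u32,
    uring: MockUring,
}

impl Worker {
    /// Runs one task (which queues one entry here) and advances the tick.
    fn run_task(&mut self) {
        self.uring.push();
        self.tick = self.tick.wrapping_add(1);
        if self.tick % self.io_uring_interval == 0 {
            self.maintenance();
        }
    }

    /// Periodic hook: flush every `io_uring_interval` ticks.
    fn maintenance(&mut self) {
        self.uring.flush();
    }

    /// Flush unconditionally before sleeping so no entry is left
    /// unsubmitted while the thread is parked.
    fn park(&mut self) {
        self.uring.flush();
        // A real worker would block here until woken.
    }
}

/// Returns the queue length just before and just after parking.
fn example() -> (usize, usize) {
    let mut w = Worker {
        tick: 0,
        io_uring_interval: 4,
        uring: MockUring::default(),
    };
    for _ in 0..10 {
        w.run_task();
    }
    let before_park = w.uring.sq_len;
    w.park();
    (before_park, w.uring.sq_len)
}
```

With an interval of 4 and 10 tasks, the periodic flush fires at ticks 4 and 8, leaving two entries queued. Those are the entries that would be stuck if the worker went to sleep without the flush in `park`.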

Tests

- All tokio-uring tests are passing using #[tokio::test]. The uring_fs_file test is non-deterministic and sometimes fails. This behavior is similar to the original fs_file test in the tokio-uring crate.
- All tokio tests are passing with and without the uring feature flag.

Performance

The following code is used to compare threadpool-based fs operations with io_uring fs operations.

tokio bench

use std::time::Instant;

use tokio::{

fs::File,

io::{AsyncReadExt, AsyncWriteExt},

task::JoinHandle,

};

async fn compute() {

let handles: Vec<JoinHandle<_>> = (0..1000)

.map(|_| {

tokio::spawn(async move {

let mut buffer = [0; 10];

{

let mut dev_urandom = File::open("/dev/urandom").await.unwrap();

dev_urandom.read(&mut buffer).await.unwrap();

}

let mut dev_null = File::create("/dev/null").await.unwrap();

dev_null.write(&buffer).await.unwrap();

})

})

.collect();

for handle in handles {

handle.await.unwrap();

}

}

#[tokio::main]

async fn main() {

// warmup

compute().await;

let before = Instant::now();

for _ in 0..10 {

compute().await;

}

let elapsed = before.elapsed();

println!(

"{:?} total, {:?} avg per iteration",

elapsed,

elapsed / 10 // the timed loop above runs 10 iterations

);

}

uring bench

use std::time::Instant;

use tokio::task::JoinHandle;

use tokio::platform::linux::uring::fs::File as UringFile;

async fn compute() {

let handles: Vec<JoinHandle<_>> = (0..1000)

.map(|_| {

tokio::spawn(async move {

let mut buffer = vec![0; 10];

{

let dev_urandom = UringFile::open("/dev/urandom").await.unwrap();

let (_res, buf) = dev_urandom.read_at(buffer, 0).await;

buffer = buf;

}

let dev_null = UringFile::create("/dev/null").await.unwrap();

dev_null.write_at(buffer, 0).await.0.unwrap();

})

})

.collect();

let mut _count = 0;

for handle in handles {

handle.await.unwrap();

_count += 1;

}

}

#[tokio::main]

async fn main() {

// warmup

compute().await;

let before = Instant::now();

for _ in 0..10 {

compute().await;

}

let elapsed = before.elapsed();

println!(

"{:?} total, {:?} avg per iteration",

elapsed,

elapsed / 10 // the timed loop above runs 10 iterations

);

}

The code is taken from this GitHub gist.

Release Build

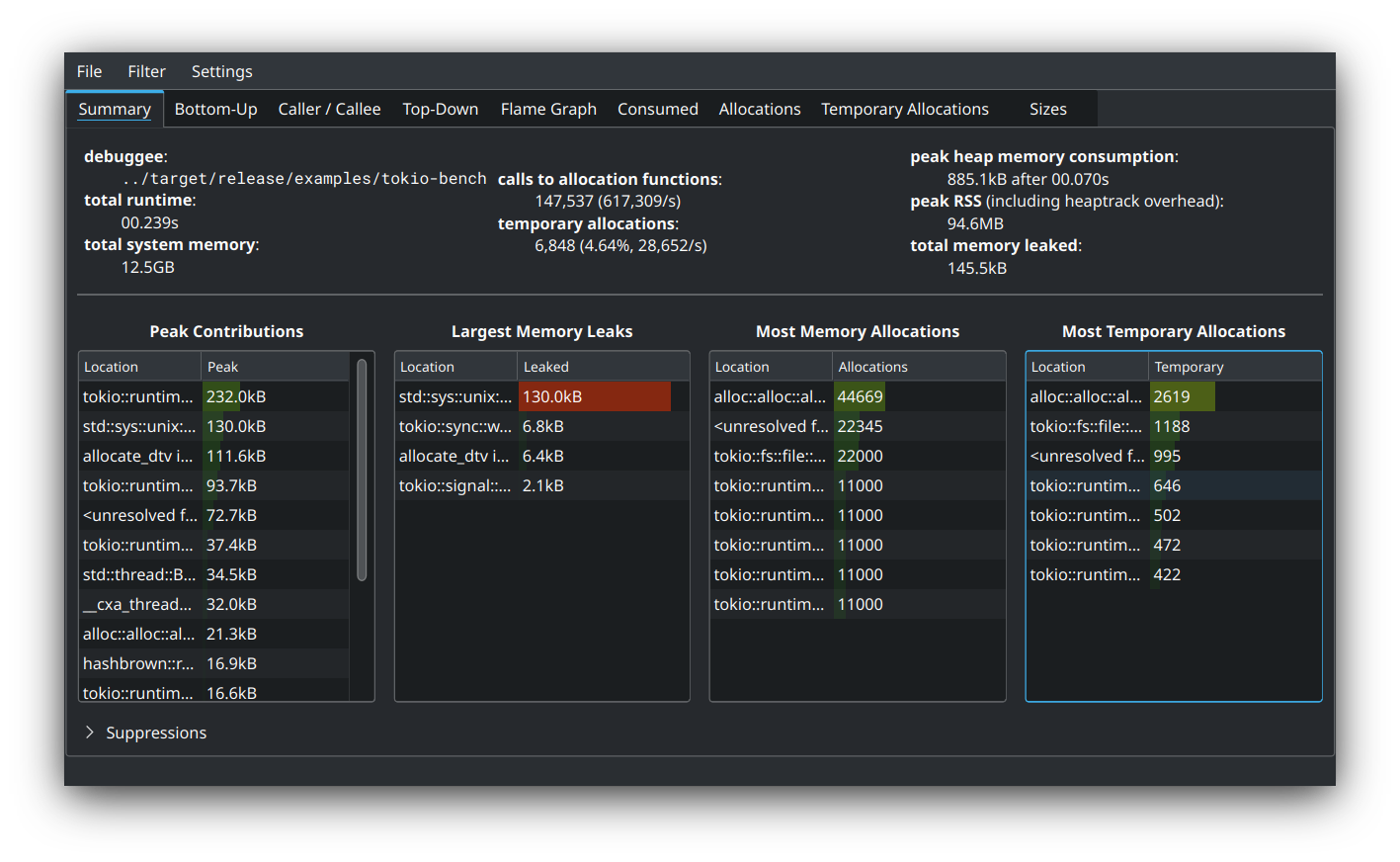

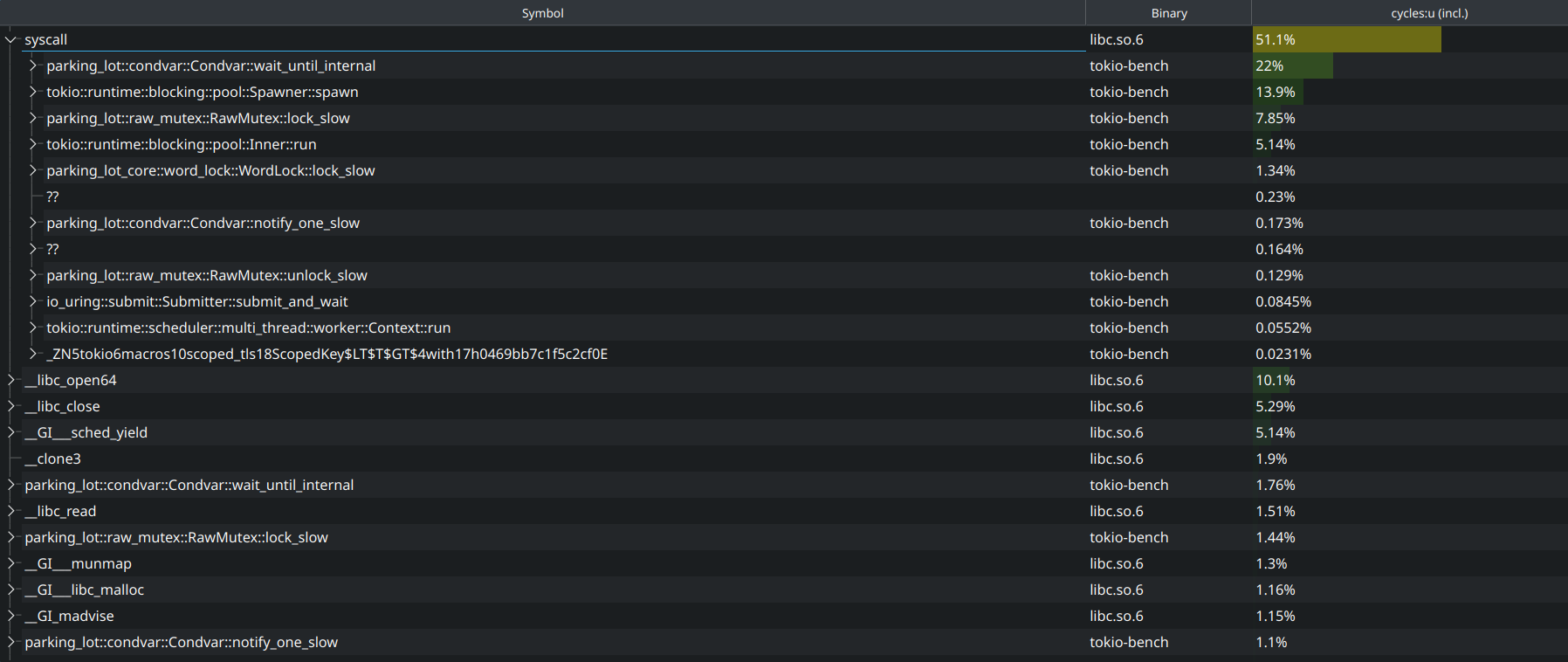

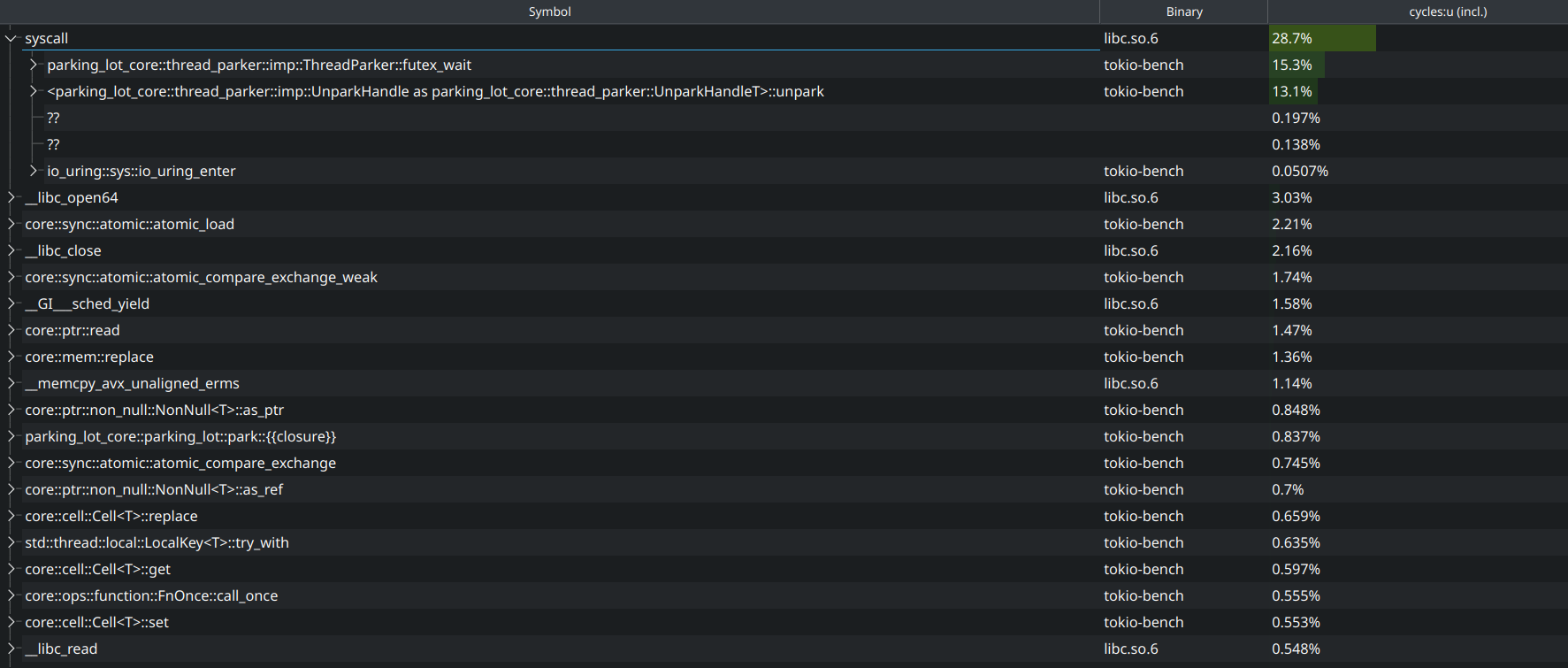

tokio-bench

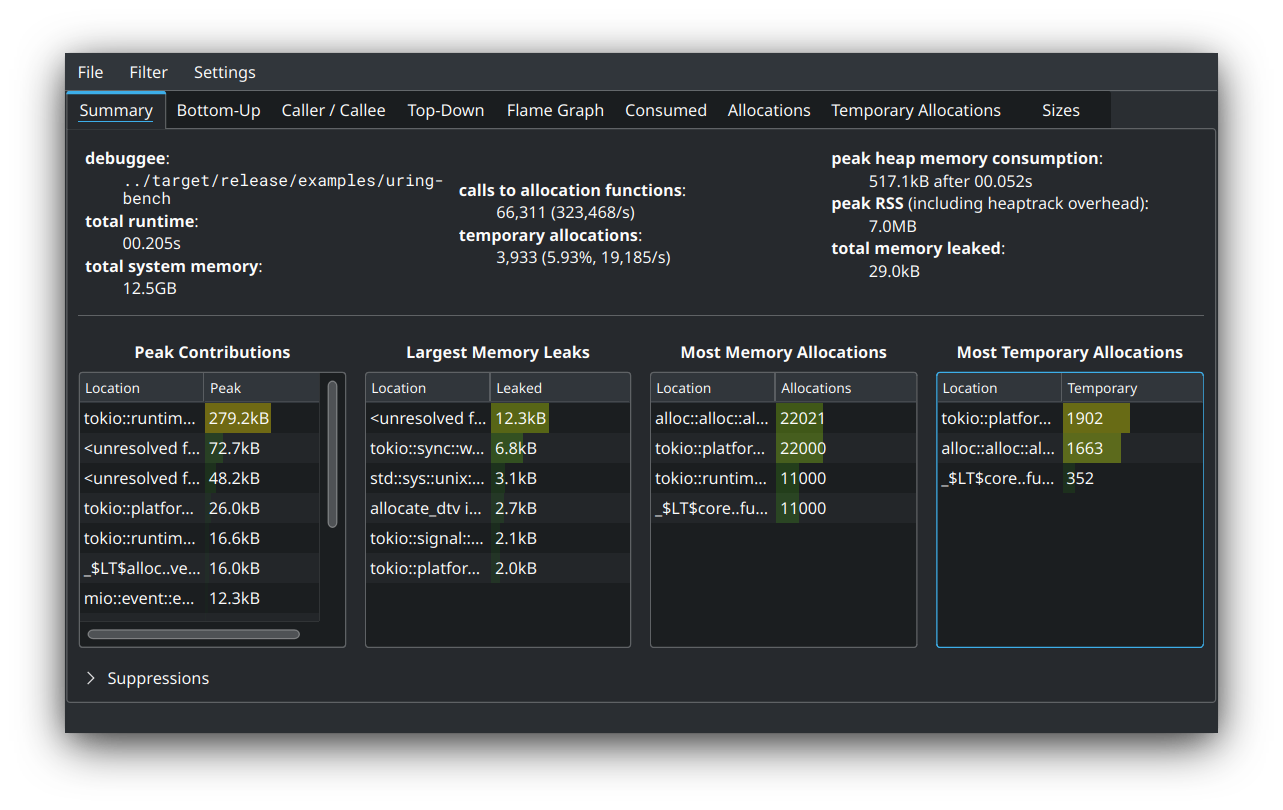

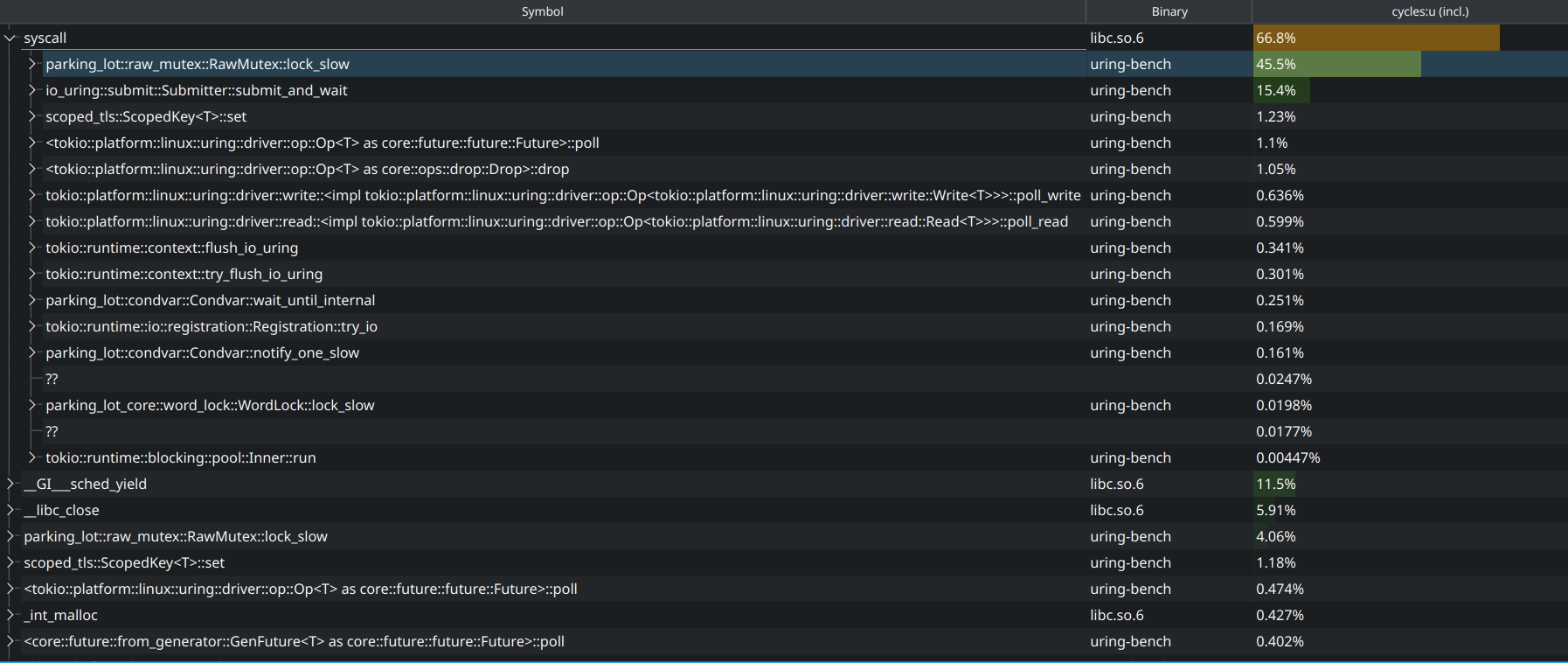

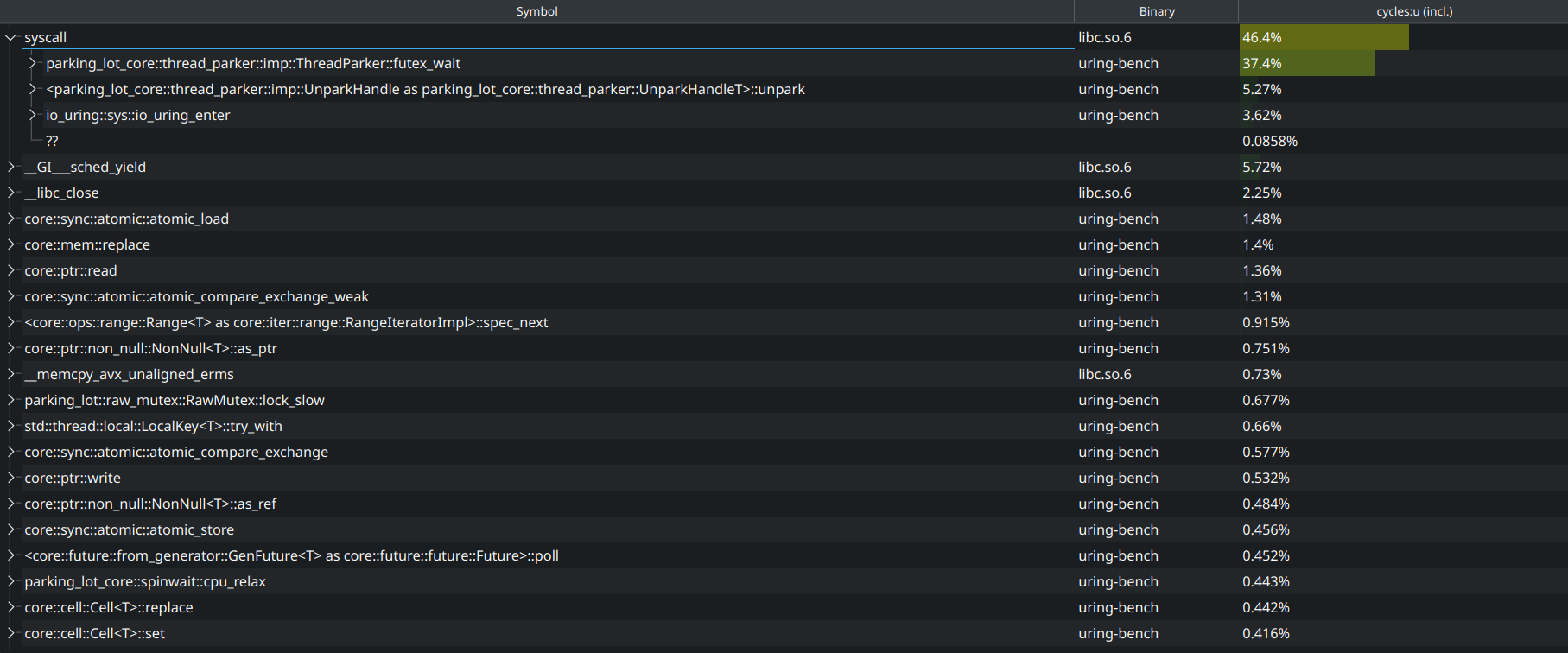

uring-bench

Debug Build

tokio-bench

uring-bench

Software used:

In the debug build, both perform basically the same. In the release build, however, tokio performs better by about 30 ms. This benchmark is an extreme case where all threads do only file I/O and constantly try to acquire the uring driver's heavily contested mutex to submit their operations. As stated above, this may be improved in the future by creating a thread-per-core runtime or using a sharded mutex for the multi-threaded runtime. In the latter approach, one may create several io_uring instances, each behind a mutex, then use something like sharded_slab or sharded_mutex and instruct each thread to quickly find the least contested uring to submit its operation into.
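As a rough illustration of the sharded idea, the sketch below keeps several values, each behind its own mutex, and lets a caller take the first one that is not currently locked. It is a simplification built on `std::sync::Mutex::try_lock` rather than on sharded_slab or sharded_mutex, it picks the first free shard rather than measuring which is least contested, and `Shards` and `lock_any` are hypothetical names.

```rust
use std::sync::{Mutex, MutexGuard};

/// Hypothetical set of independently locked shards; in the PR's setting
/// each `T` would be one io_uring instance with its operation table.
struct Shards<T> {
    shards: Vec<Mutex<T>>,
}

impl<T> Shards<T> {
    fn new(items: Vec<T>) -> Self {
        assert!(!items.is_empty(), "at least one shard is required");
        Self {
            shards: items.into_iter().map(Mutex::new).collect(),
        }
    }

    /// Starting at `hint` (for example a per-thread index), returns the
    /// first shard whose lock is free. If every shard is busy, blocks on
    /// the hinted shard instead of spinning.
    fn lock_any(&self, hint: usize) -> (usize, MutexGuard<'_, T>) {
        let n = self.shards.len();
        for offset in 0..n {
            let idx = (hint + offset) % n;
            if let Ok(guard) = self.shards[idx].try_lock() {
                return (idx, guard);
            }
        }
        let idx = hint % n;
        (idx, self.shards[idx].lock().unwrap())
    }
}

/// Two submissions with the same hint land on different shards because
/// the first guard is still held when the second lock is requested.
fn example() -> (usize, usize) {
    let rings = Shards::new(vec![Vec::<u64>::new(), Vec::new()]);
    let (first, mut g0) = rings.lock_any(0);
    g0.push(1);
    let (second, mut g1) = rings.lock_any(0);
    g1.push(2);
    (first, second)
}
```

A thread whose preferred shard is busy moves on to a neighbour instead of waiting, so submissions spread across rings under load. Completions would then have to be routed back per ring, which this sketch does not address.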

After ironing out the fs operations in platform::linux::uring and improving the documentation, it is possible to have tokio::fs transparently use uring::fs conditioned on the uring feature flag. Maybe something like the following:

cfg_io_uring! {

pub use platform::linux::uring::fs::File;

}

cfg_not_io_uring! {

pub use self::file::File;

}
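For readers unfamiliar with this style of macro, here is one way such a pair could be defined. This is a sketch under assumptions: the exact cfg predicate (the uring feature combined with a Linux target) is a guess, and `file_backend` is a hypothetical function used only to show which branch is compiled in.

```rust
/// Keeps the wrapped items only when the `uring` feature is enabled on
/// Linux. The predicate is an assumption made for this sketch.
macro_rules! cfg_io_uring {
    ($($item:item)*) => {
        $(
            #[cfg(all(feature = "uring", target_os = "linux"))]
            $item
        )*
    };
}

/// Keeps the wrapped items in every other configuration.
macro_rules! cfg_not_io_uring {
    ($($item:item)*) => {
        $(
            #[cfg(not(all(feature = "uring", target_os = "linux")))]
            $item
        )*
    };
}

cfg_io_uring! {
    /// Hypothetical marker for the io_uring-backed implementation.
    pub fn file_backend() -> &'static str {
        "io_uring"
    }
}

cfg_not_io_uring! {
    /// Hypothetical marker for the thread-pool-backed implementation.
    pub fn file_backend() -> &'static str {
        "thread pool"
    }
}
```

Exactly one definition of `file_backend` survives compilation, so callers see a single name regardless of the feature flag, which is the property a transparent tokio::fs switch would rely on.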

I'm waiting for @carllerche to finish his refactoring, but I'll be doing my own version of this as well.

I have some different views on how to manage the driver. I'm likely going to either modify or abstract over Mio in order to avoid the dual-driver structure here. I'm also looking to go for a driver-per-core structure with a shared registration table rather than sharing the driver, as uring's design heavily favors driver-per-core semantics.

As I've said at https://github.com/tokio-rs/tokio-uring/discussions/109, I strongly suggest making some types, e.g. IoBuf, not specific to tokio-uring or Linux.