Support for month, year and time zones in time_bucket

Time_bucket feature works great but why can't we use SELECT time_bucket('1 month', date)...

I would like to know how to get month bucket and even yearly buckets?

time_bucket is designed for regular intervals like days, hours, minutes. As months and years are variable time units, the function does not support them. A separate function that will deal with irregular time units is being considered, but for now, if you are trying to get a month long interval, you can use 30 days. Alternatively, you can use the PostgreSQL date_trunc function which offers a less powerful version of the same functionality, but takes months and years (and decades and millenia...) as parameters.

@solugebefola date_trunc does not support Offsets as time_bucket do.

(Ex: make month starting on the 5th)

Do you know a workaround ?

@mmouterde if a "month" starts on the 5th of a calendar month, wouldn't it be sufficient to use time_bucket("30 days", time)?

On topic, time_bucket("month", time) would actually be nice, but would perhaps require either parsing the string representation of a time point, enums with set "month name = days" pairs or some other such approach.

unfortunately, time_bucket("30 days", time) can't fit my needs.

If I extract the month from the date '02/05/2018' that give May, I don't thinks it's possible to group this point in the January bucket..

Yep I probably have to use a such month/DayInMonth map and same CASE WHEN :/

@mmouterde yes, a trigger would also help with that, I think!

Hey, any updates on this issue.

When I used time_bucket('30 days') I got the weird results from the database table.

For now, even 30 days isn't working fine or me.

The docs should really mention that it works only up to days.

When the docs say "more powerful version", we expect this command to do everything date_trunc can do plus some additional features.

I also would like to see this working for at least up to a year, including months and quarters.

I would love this feature. Its important to create monthly values when doing reporting.

Adding my voice to the pile. At a technical level, I can appreciate why this functionality doesn't exist. At a practical level, though, I don't understand it at all. This lack of functionality has the effect of making timescaledb more of a hassle than it's worth, since I have to write my own code to handle months anyway. A separate function or wrapper could do wonders..

Please implement this functionality!

Yep, it's essential to have months and years, taking into account variable month lengths.

In the meantime I suggest use something like this to concat the year and month from a datetime and group by that: SELECT CONCAT( EXTRACT(year FROM datetime), '-', LPAD(EXTRACT(month FROM datetime)::text, 2, '0')) AS period, FROM table GROUP BY period

Gives: 2018-01 2018-02 2018-03 ...

@cryptoflipper Does this give different results, than the postgres trunc function?

Also I wonder, if the time_bucket function could just delegate to trunc when month, etc are specified (and maybe throw an error when the interval is not 1 in this case)

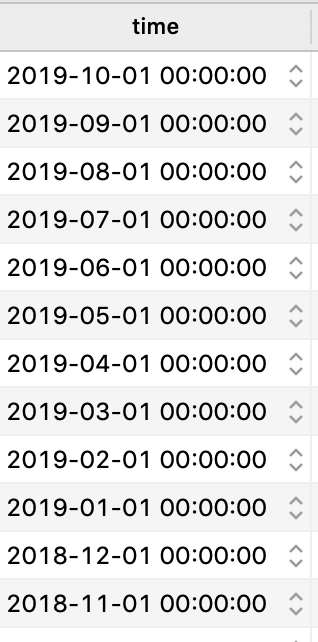

I'm getting these results with date_trunc in postgres 11, seems good to me or am I missing something

I think also a fairly big argument that hasn't yet been discussed on this issue is that date_trunc obviously does not support gap filling like you can get with the time_bucket_gapfill method available with TimescaleDB.

Is there anything in the roadmap for looking into adding this variable width time lengths/buckets? Or is this not something the team is looking at any time soon.

hey @alsotop thanks for commenting I am just trying to wrap my head around what you are asking when you say variable width time buckets? Could you give me an example? Apologies for being a little slow on the uptake I just want to make sure I understand!

@bboule Sorry for the confusion, I just meant variable width time buckets as in "months, quarters, years, etc" that are not currently supported since they're not regular intervals (i.e. September is only 30 days, but October is 31 days).

Ahh I see I was not making the connection thank you!! So we are doing some product planning now and I think this makes sense... Keep an eye on this issue as I hope to set a milestone (release target) for this in the next week or so as we work through the backlog... but this indeed does seem to have some momentum... if anyone on the thread is willing to share can you tell me a bit about your timescale use case that drives this (this is more for my own curiosity not as any sort of justification). @alsotop thanks for clearing that up sometimes I am a little slow on the uptake :)

Here is our use case:

We are using timescale to store environmental sensor data (for example, data from a weather station). It makes sense to compare these timeseries year-by-year to see trends in the seasonal statistics. For example, if we have a 20-year water temp timeseries, we calculate the average/min/max air temp in the month of March across all years, then when the user picks a specific year, they can compare that timeseries to the historic stats.

This chart shows that 2019 was one of the warmest years ever at this station. The gray dotted line is the average per month across all years, and the grey envelope shows the min/max. The blue line is the currently selected year.

This chart shows that 2019 was one of the warmest years ever at this station. The gray dotted line is the average per month across all years, and the grey envelope shows the min/max. The blue line is the currently selected year.

Currently we pull data from the db, calculate the statistics in python code, for weekly, monthly, and seasonal bins, and store those results. We're using pandas, which offers a great range of frequency options -- for example we use Q-NOV for our seasonal bins (winter=Nov,Dec,Jan; spring=Feb,Mar,Apr; etc). I tried doing this in timescale, but quickly ran into this issue and have been tracking it since.

But here's the thing: we are currently calculating stats one timeseries at a time. I see timescale coming in here and offering ways to quickly calculate stats like this across timeseries -- for example, all water temp sensors in the gulf of mexico. That would be really powerful! And it's the kind of thing that would be very simple with postgis/timescale, compared to doing that with python/pandas.

@bboule thanks for diving into this.

My use case is creating Timescale Continuous Aggregates based on time_bucket in monthly and yearly resolutions, and have them aligned with 1st-of-month and 1st-of-year. I tried to accommodate my use case to doing time_bucket('30 days' ... ) but as I ended up with mis-aligned buckets, I resorted to PostgreSQL's date_trunc. This also means I cannot manage my materialized view through Timescale, and it's a shame as Timescale does a great job in managing materialized views.

@bboule we use timescale db for candle stick information. A monthly candle stick view is used a lot.

Hey guys this is really good stuff I really appreciate everyone taking the time to comment and share how this fits into the specific use cases (great learning for me as well)... I will make sure we have a goal for this as part of our planning within the next week or so via setting a milestone and will also add a comment as to some target dates as we work through this!! Again THANK YOU guys and stay tuned!!!

As stated in 2nd comment, time_bucket is designed for regular intervals. However, how will it behave in the context of leap seconds? Maybe with introduction of new functionality for variable width intervals, the behaviour could be documented.

Those that need this capability, while TimescaleDB works on this issue, can use this function that I wrote for my own project.

CREATE OR REPLACE FUNCTION tools.time_bucket (

bucket_width interval,

ts timestamptz

)

RETURNS TIMESTAMP WITH TIME ZONE AS

$body$

/*

millennium = 1000 years

century = 100 years

decade = 10 years

year = x year

months = x months

You may use any combination of years and months.

Example: 1 year 6 months aka 1.5 years

*/

DECLARE

origin_ts timestamptz := '0001-01-01T00:00:00Z';

months integer;

bucket_months integer;

bucket_month integer;

BEGIN

IF bucket_width >= '1 month'::interval THEN

bucket_months :=

(EXTRACT(MONTH FROM bucket_width) + -- months

(EXTRACT(YEAR FROM bucket_width) * 12)); -- years

months := (((EXTRACT(YEAR FROM ts)-EXTRACT(YEAR FROM origin_ts))*12)+EXTRACT(MONTH FROM ts)-1);

bucket_month := floor(months/bucket_months)*bucket_months;

RETURN make_timestamptz(

(EXTRACT(YEAR FROM origin_ts)+floor(bucket_month/12))::integer, -- year

(bucket_month%12)+1, -- month

1, --day

0, -- hour

0, -- minute

0 --second

);

ELSE

RETURN public.time_bucket(bucket_width, ts);

END IF;

END;

$body$

LANGUAGE 'plpgsql'

IMMUTABLE

RETURNS NULL ON NULL INPUT

SECURITY INVOKER;

I found a bug my my code, if you set a local timezone other than UTC. I have updated the function to correct this issue. I have now also included a timestamp and date version of this function.

timestaptz version of the function

bucket_width interval,

ts timestamptz,

"offset" interval = '00:00:00'::interval,

origin timestamptz = '0001-01-01 00:00:00+00'::timestamptz

)

RETURNS TIMESTAMPTZ AS

$body$

/*

millenium = 1000 years

century = 100 years

decade = 10 years

1.5 years aka 1 year 6 months or 18 months

year aka 12 months

half year aka 6 months

quarter aka 3 months

months = months

*/

DECLARE

months integer;

bucket_months integer;

bucket_month integer;

BEGIN

IF EXTRACT(MONTH FROM bucket_width) >= 1 OR EXTRACT(YEAR FROM bucket_width) >= 1 THEN

origin := origin + ((0-date_part('timezone_hour', now()))::text || ' hours')::interval;

bucket_months :=

(EXTRACT(MONTH FROM bucket_width) + -- months

(EXTRACT(YEAR FROM bucket_width) * 12)); -- years

months := (((EXTRACT(YEAR FROM ts)-EXTRACT(YEAR FROM origin))*12)+EXTRACT(MONTH FROM ts)-1);

bucket_month := floor(months/bucket_months)*bucket_months;

RETURN make_timestamptz(

(EXTRACT(YEAR FROM origin)+floor(bucket_month/12))::integer, -- year

(bucket_month%12)+1, -- month

1, --day

0, -- hour

0, -- minute

0, --second

'Z');

ELSE

CASE

WHEN "offset" > '0s'::interval THEN

RETURN public.time_bucket(bucket_width, ts-"offset") + "offset";

WHEN origin <> '0001-01-01T00:00:00Z'::timestamptz THEN

RETURN public.time_bucket(bucket_width, ts, origin);

ELSE

RETURN public.time_bucket(bucket_width, ts);

END CASE;

END IF;

END;

$body$

LANGUAGE 'plpgsql'

IMMUTABLE

RETURNS NULL ON NULL INPUT

SECURITY INVOKER;

timestamp version of the function

CREATE OR REPLACE FUNCTION tools.time_bucket (

bucket_width interval,

ts timestamp,

"offset" interval = '00:00:00'::interval,

origin timestamp = '0001-01-01 00:00:00'::timestamp

)

RETURNS TIMESTAMP AS

$body$

/*

millenium = 1000 years

century = 100 years

decade = 10 years

1.5 years aka 1 year 6 months or 18 months

year aka 12 months

half year aka 6 months

quarter aka 3 months

months = months

*/

DECLARE

months integer;

bucket_months integer;

bucket_month integer;

BEGIN

IF EXTRACT(MONTH FROM bucket_width) >= 1 OR EXTRACT(YEAR FROM bucket_width) >= 1 THEN

bucket_months :=

(EXTRACT(MONTH FROM bucket_width) + -- months

(EXTRACT(YEAR FROM bucket_width) * 12)); -- years

months := (((EXTRACT(YEAR FROM ts)-EXTRACT(YEAR FROM origin))*12)+EXTRACT(MONTH FROM ts)-1);

bucket_month := floor(months/bucket_months)*bucket_months;

RETURN make_timestamp(

(EXTRACT(YEAR FROM origin)+floor(bucket_month/12))::integer, -- year

(bucket_month%12)+1, -- month

1, --day

0, -- hour

0, -- minute

0 --second

);

ELSE

CASE

WHEN "offset" > '0s'::interval THEN

RETURN public.time_bucket(bucket_width, ts-"offset") + "offset";

WHEN origin <> '0001-01-01T00:00:00'::timestamp THEN

RETURN public.time_bucket(bucket_width, ts, origin);

ELSE

RETURN public.time_bucket(bucket_width, ts);

END CASE;

END IF;

END;

$body$

LANGUAGE 'plpgsql'

IMMUTABLE

RETURNS NULL ON NULL INPUT

SECURITY INVOKER;

date version of the function

CREATE OR REPLACE FUNCTION tools.time_bucket (

bucket_width interval,

ts date,

"offset" interval = '00:00:00'::interval,

origin date = '0001-01-01'::date

)

RETURNS DATE AS

$body$

/*

millenium = 1000 years

century = 100 years

decade = 10 years

1.5 years aka 1 year 6 months or 18 months

year aka 12 months

half year aka 6 months

quarter aka 3 months

months = months

*/

DECLARE

months integer;

bucket_months integer;

bucket_month integer;

BEGIN

IF EXTRACT(MONTH FROM bucket_width) >= 1 OR EXTRACT(YEAR FROM bucket_width) >= 1 THEN

bucket_months :=

(EXTRACT(MONTH FROM bucket_width) + -- months

(EXTRACT(YEAR FROM bucket_width) * 12)); -- years

months := (((EXTRACT(YEAR FROM ts)-EXTRACT(YEAR FROM origin))*12)+EXTRACT(MONTH FROM ts)-1);

bucket_month := floor(months/bucket_months)*bucket_months;

RETURN make_date(

(EXTRACT(YEAR FROM origin)+floor(bucket_month/12))::integer, -- year

(bucket_month%12)+1, -- month

1 --day

);

ELSE

CASE

WHEN "offset" > '0s'::interval THEN

RETURN public.time_bucket(bucket_width, ts-"offset") + "offset";

WHEN origin <> '0001-01-01'::date THEN

RETURN public.time_bucket(bucket_width, ts, origin);

ELSE

RETURN public.time_bucket(bucket_width, ts);

END CASE;

END IF;

END;

$body$

LANGUAGE 'plpgsql'

IMMUTABLE

RETURNS NULL ON NULL INPUT

SECURITY INVOKER;

+1 to the use case presented by @aguformoso - time_bucket('month') and time_bucket('year') support with CREATE VIEW for continuous aggregates. Practically every query we need to run is for monthly, yearly, or all-time data, and it's horribly inefficient to be querying at SELECT time from daily values only.

@LloydAlbin thanks! and do you have gapfill version?

I'm also keen to see efficient calendar month bucketing ( and cal/years but cal months are more applicable to us). Often from a reporting pov people care about calendar months not 30 day intervals. I can fully understand why days / fixed time periods are only supported technically, however if you are chunking by time anyway, it doesn't sound like a particularly unsolveable problem and I think there would be a lot of interest.

Any suggestions on the most efficient way to do a gapfill with @LloydAlbin's version? I've been using a CTE with generate_series and JOINing them, but I feel like there's probably a better way.

The CTE is the fastest way within Postgres itself. The TimescaleDB extension does the gapfill faster than the CTE as I have tested both for doing the time periods that TimescaleDB supports. For the time periods that TimescaleDB does not support, then the CTE will be faster as you TimescaleDB would have to be generating a lot of extra date/time’s that will later on be thrown away during the conversion from day to month or day to year, etc. The best solution would be to update the TimescaleDB extension to support the month, year, etc time_bucket long with their respective gapfill’s. I just have not had any free time to do this myself and submit it as a patch. My code was just to prove it was theoretically possible to do, since the TimescaleDB people said it was impossible, and should be rewritten into C to be used in the TimescaleDB extension.

From: Ricky [email protected] Sent: Thursday, March 26, 2020 11:18 PM To: timescale/timescaledb [email protected] Cc: Albin, Lloyd P [email protected]; Mention [email protected] Subject: Re: [timescale/timescaledb] support for month, year time_bucket? (#414)

Any suggestions on the most efficient way to do a gapfill with @LloydAlbinhttps://urldefense.proofpoint.com/v2/url?u=https-3A__github.com_LloydAlbin&d=DwMCaQ&c=eRAMFD45gAfqt84VtBcfhQ&r=Xh-FbvkmuY2wtq98eUcUtlVLZcPEI5HL7TuqKWjcsAI&m=uNciOTqURc49owJpc0QYzoqNnzSz2phPMcrppsMokG0&s=jPLLIIlYFxtdiqtWAKff484LxTlxTwA-k6Qkikyo7SA&e='s version? I've been using a CTE with generate_series and JOINing them, but I feel like there's probably a better way.

— You are receiving this because you were mentioned. Reply to this email directly, view it on GitHubhttps://urldefense.proofpoint.com/v2/url?u=https-3A__github.com_timescale_timescaledb_issues_414-23issuecomment-2D604834554&d=DwMCaQ&c=eRAMFD45gAfqt84VtBcfhQ&r=Xh-FbvkmuY2wtq98eUcUtlVLZcPEI5HL7TuqKWjcsAI&m=uNciOTqURc49owJpc0QYzoqNnzSz2phPMcrppsMokG0&s=3CJs4R2C3omiqRE7FKzoS8OkHg8L6pSJmew7QRpcA3o&e=, or unsubscribehttps://urldefense.proofpoint.com/v2/url?u=https-3A__github.com_notifications_unsubscribe-2Dauth_ACB4JQ5EE2SRJICZYKYGGBTRJRAHZANCNFSM4EOS33WA&d=DwMCaQ&c=eRAMFD45gAfqt84VtBcfhQ&r=Xh-FbvkmuY2wtq98eUcUtlVLZcPEI5HL7TuqKWjcsAI&m=uNciOTqURc49owJpc0QYzoqNnzSz2phPMcrppsMokG0&s=ynU2RNtFe1kldhKBBWi4DOtmcO1Ww0iLn9xtQBiCYN0&e=.

I will make sure we have a goal for this as part of our planning within the next week or so via setting a milestone and will also add a comment as to some target dates as we work through this!! Again THANK YOU guys and stay tuned!!!

Has there been any progress in adding this feature to a milestone ? The quoted text above was from mid-January. Apologies in advance for the noise if this feature is already scheduled to a milestone and I could not find it.