serving

A flexible, high-performance serving system for machine learning models

## Feature Request ### Describe the problem the feature is intended to solve Our team is struggling with the accumulation of metrics volume, which forces us to perform regular restarts of tf-serving...

We just realized that we were spending quite a bit of money merely polling for version updates of our models, which are hosted in GCS. It was doing a...
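To see why polling can add up, here is a minimal back-of-the-envelope sketch. It assumes (hypothetically) that every poll costs at least one GCS list request per served model; the real number of requests per poll may be higher. TF Serving exposes a `--file_system_poll_wait_seconds` flag for the poll interval; we believe the default is 1 second, but check `tensorflow_model_server --help` for your version.

```python
# Rough estimate of how many filesystem polls TF Serving issues per day.
# Assumption (hypothetical): each poll costs at least one GCS list request
# per served model; actual request counts may be higher.

SECONDS_PER_DAY = 24 * 60 * 60


def polls_per_day(poll_interval_s: float, num_models: int) -> int:
    """Return the estimated number of GCS list requests per day."""
    if poll_interval_s <= 0:
        raise ValueError("poll_interval_s must be positive for periodic polling")
    polls_per_model = int(SECONDS_PER_DAY // poll_interval_s)
    return polls_per_model * num_models


if __name__ == "__main__":
    # Compare a few poll intervals for a hypothetical deployment of 10 models.
    for interval in (1, 60, 600):
        count = polls_per_day(interval, num_models=10)
        print(f"interval={interval:>4}s -> {count:,} requests/day")
```

Under these assumptions, a 1-second interval means 86,400 requests per model per day, while a 60-second interval cuts that by a factor of 60.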

Hi, in recent months I've been using Docker on Linux with the TensorFlow Serving GPU image. This week I was trying to run this image, which had always worked, using ports...

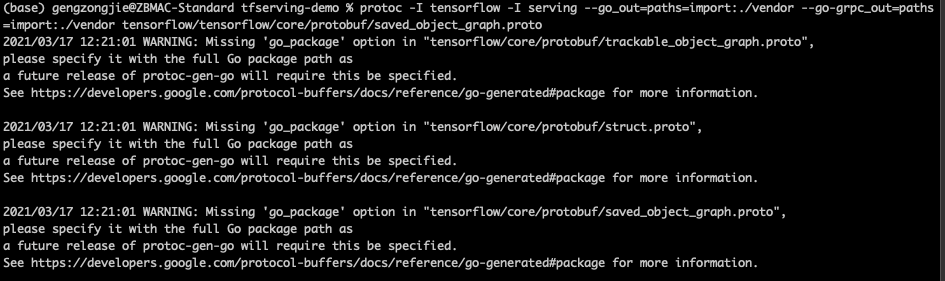

Almost all the proto files in serving lack the go_package option, which causes a lot of warnings when compiling them to generate Go code, like the following:
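The usual fix is to add an `option go_package = "<import path>";` line to each .proto file; the correct import path is project-specific and is not given in the report. A minimal sketch for finding which files still need it, assuming a local checkout of the repository (the directory argument is hypothetical):

```python
import re
import sys
from pathlib import Path

# Matches an `option go_package = ...` declaration at the start of a line,
# so commented-out lines such as `// option go_package` are not counted.
GO_PACKAGE_RE = re.compile(r"^\s*option\s+go_package\s*=", re.MULTILINE)


def lacks_go_package(proto_text: str) -> bool:
    """Return True if the .proto source declares no go_package option."""
    return GO_PACKAGE_RE.search(proto_text) is None


def find_protos_without_go_package(root: str) -> list:
    """List .proto files under `root` that lack a go_package option."""
    return sorted(
        str(path)
        for path in Path(root).rglob("*.proto")
        if lacks_go_package(path.read_text(encoding="utf-8"))
    )


if __name__ == "__main__":
    # Usage: python find_go_package.py path/to/serving
    for name in find_protos_without_go_package(sys.argv[1] if len(sys.argv) > 1 else "."):
        print(name)
```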

Please excuse me if this is the wrong place to post this. I'm posting it here because I think it's related to problems with documentation and examples, but if there's a...

### Describe the problem the feature is intended to solve We would like to serve fully-convolutional segmentation models whose input and output tensor sizes are flexible, but not identical. In...

I have model A and model B. I want to configure a pipeline that makes predictions using both A and B but exposes only one REST/gRPC API. How can I get...
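One way to present a single entry point is a thin client-side wrapper that calls TF Serving's REST predict endpoint (`POST /v1/models/<name>:predict` with an `{"instances": ...}` body, returning `{"predictions": ...}`) for model A and feeds the result to model B. This is a minimal sketch, assuming the host URL and the model names `model_a` and `model_b` (all hypothetical), and assuming B accepts A's predictions unchanged as its inputs. The `post` parameter lets you swap in a different transport, such as a test double.

```python
import json
import urllib.request
from typing import Callable

# Hypothetical server address; 8501 is the port commonly used for REST.
TF_SERVING_URL = "http://localhost:8501"

PostFn = Callable[[str, dict], dict]


def http_post_json(url: str, payload: dict) -> dict:
    """POST a JSON payload and decode the JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def predict(model_name: str, instances: list, post: PostFn = http_post_json) -> list:
    """Call the REST predict endpoint for one model and return its predictions."""
    url = f"{TF_SERVING_URL}/v1/models/{model_name}:predict"
    return post(url, {"instances": instances})["predictions"]


def predict_a_then_b(instances: list, post: PostFn = http_post_json) -> list:
    """Single entry point: feed model A's predictions into model B."""
    intermediate = predict("model_a", instances, post)
    return predict("model_b", intermediate, post)
```

This costs two round trips per request. If latency matters, an alternative worth considering is exporting A and B together as one SavedModel so the server runs both in a single call.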

I don’t have a problem when creating my own serving image in Docker with one model, but when I try to build a serving image with multiple models it doesn’t...
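Serving several models from one server is typically done with a model config file (a text-format `model_config_list`) passed via `--model_config_file`, rather than the single-model `MODEL_NAME` setup. A minimal sketch that writes such a file, assuming the model names and `/models/...` paths shown (both hypothetical; each base path must contain numbered version subdirectories):

```python
# Render a TF Serving model config file for several models.
# Model names and base paths below are hypothetical.


def render_model_config(models: dict) -> str:
    """Turn a {model name: base_path} mapping into a model_config_list block."""
    entries = []
    for name, base_path in models.items():
        entries.append(
            "  config {\n"
            f"    name: '{name}'\n"
            f"    base_path: '{base_path}'\n"
            "    model_platform: 'tensorflow'\n"
            "  }\n"
        )
    return "model_config_list {\n" + "".join(entries) + "}\n"


if __name__ == "__main__":
    config = render_model_config({
        "model_a": "/models/model_a",
        "model_b": "/models/model_b",
    })
    with open("models.config", "w", encoding="utf-8") as fh:
        fh.write(config)
    print(config)
```

The image would then copy both model directories and `models.config` into `/models` and start the server with `--model_config_file=/models/models.config`.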

The About section on GitHub shows the link www.tensorflow.org/serving, but the new link is https://www.tensorflow.org/tfx/guide/serving

Excuse me, how can I solve this slow-speed problem?

shape:(1, 32, 387, 1) data time: 0.005219221115112305 post time: 0.24771547317504883 end time: 0.2498164176940918
shape:(2, 32, 387, 1) data time: 0.0056378841400146484...
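In the reported numbers the "post" step (about 0.248 s) dominates the "data" step (about 0.005 s), so it helps to time each stage separately and normalize by batch size to check whether larger batches amortize the request overhead. A minimal sketch using a monotonic clock; `prepare` and `post` are hypothetical callables standing in for your own preprocessing and request code:

```python
import time
from typing import Callable, Dict


def time_stages(prepare: Callable[[], object], post: Callable[[object], object]) -> Dict[str, float]:
    """Time data preparation and the request separately with a monotonic clock."""
    start = time.perf_counter()
    payload = prepare()
    after_prepare = time.perf_counter()
    post(payload)
    end = time.perf_counter()
    return {
        "data_time": after_prepare - start,
        "post_time": end - after_prepare,
        "total_time": end - start,
    }


def per_sample(timings: Dict[str, float], batch_size: int) -> Dict[str, float]:
    """Divide each stage duration by the batch size to compare batch sizes fairly."""
    return {stage: seconds / batch_size for stage, seconds in timings.items()}
```

Running `time_stages` several times per batch size and discarding the first (warm-up) call gives a steadier picture than a single measurement.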