fix(postgres): correctly re-acquire connection while using pg-native

Unfortunately https://github.com/sequelize/sequelize/pull/14090 didn't resolve it as code could get into situation where all dead connections are considered as in use and sequelize-pool acquire() was stuck in infinite loop.

I have tested against local PostgreSQL database with pg-native plugin enabled.

Fixes https://github.com/sequelize/sequelize/issues/14086

I wish we could add a test to this to prevent future changes to the same, but it seems quite difficult to implement.

So I'm OK with the current changes.

I wish we could add a test to this to prevent future changes to the same, but it seems quite difficult to implement.

So I'm OK with the current changes.

Thank you. I am stress testing it in our UAT instance to make sure all connection handles will recover.

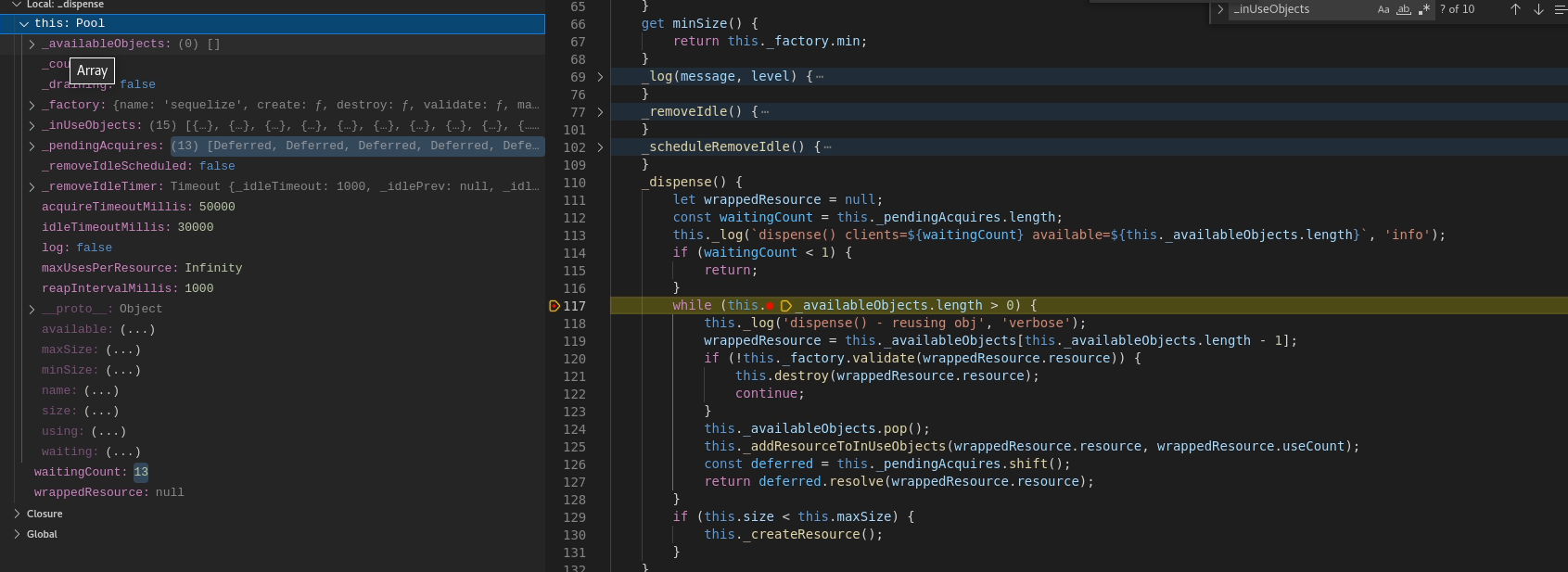

It is unbelievable, but even this fix doesn't fully resolve it yet ! I am thinking on what to do. The issue is that sequelize-pool uses internal _inUseObjects array.

If a situation happens that we have N parallel requests during connectivity issue, those N requests will timeout eventually, but still connection will stay inside _inUseObjects. I am looking into the code on how this can be fixed.

I was thinking about it and I think with current design the only solution is to call destroyAllNow() in case retry-as-promised loop times out. The current code only handles case when I am able to reacquire the connection within given time limit.

I have performed another round of testing. The sequelize-pool library always ends up with some connections inside _inuseobjects which will forever get stuck there. I also see my latest changes also lead to breakage of your integration tests.

I believe there is no other way than to introduce some mechanism in sequelize-pool so that when connection manager notifies library that he timed out trying to do the reacquire(), sequelize-pool would consider all connections in use as valid.

What would you propose as a solution?

Hell yeah I did it ! I have tested multiple scenarios and all are passing.

-

Let server connect to PostgreSQL. Make some parallel requests to force library use all connections in pool (15 for test). After that run tcpkill, make single request. Thanks to this request tcpkill will reset all connections. The ongoing request will eventually timeouts after timeout_period (in my case 55 seconds). After the timeout occurs, pool successfully recovers all 15 connections again.

-

Similar to test case 1), but try to run parallel requests during the time all connections are dead and timeout (55 seconds) didn't occur. As expected these queries will keep stacking up, BUT eventually after the timeout expires, new handles are re-acquired and requests will be processed !

@bl0cknumber , @WikiRik , @ephys I believe we are ready to merge it now.

This solution will destroy every connection, including potentially alive ones used by other queries, every time a query has an execution time that's too long, or the network slows down. I'm not super familiar with the connection code yet but this could cause a lot of stability issues, couldn't it?

From your research, it would seem that the issue is that broken connections are not removed from _inUseObjects Do we know why they're never removed?

Let me take a step back and explain the root cause of the issue. Sequelize-pool relies on implementation of create, destroy and validate functions provided by given driver. I can say with certainty that the driver for PostgreSQL doesn't provide a way to check whether a socket is alive or not. The only way how to tell that something is wrong is to try to execute a query. If I am able to receive a handle from pool by calling acquire(), I am okay and let me explain why. There are two situations:

-

The connection handle is alive In this case I will always fulfill the request either by completing it or timing out (depending on my timeout and retry settings)

-

Connection handle is dead The fix I provided in last request was to fix run() function for PostgreSQL to correctly mark connection as invalid, so that connectionManager.validate() function can distinguish between dead connections or not. https://github.com/brano543/sequelize/blob/main/src/dialects/postgres/query.js#L95 So after exception is thrown, we can check whether connection is still valid or not as done in this pull request https://github.com/brano543/sequelize/blob/main/src/sequelize.js#L658

The problem arises if my retry timeout expires before I am able to get the error from driver. Let me give a simple example. Imagine I have setup retry logic as follows: Timeout = 2.2 seconds Backoff = 1 second Query time = 500 ms

In this case the execution in case there are network issues will be as follows: 0...500 ms socket error, acquire would eventually destroy https://github.com/sequelize/sequelize-pool/blob/master/src/Pool.ts#L332 1500 ms .... 2000ms 2000ms ... timeout (2200ms) ... never throw error and never marking connection as _invalid, but what is worse, never even ending so connection stays indefinitely as running

Now each query like this since it times out, it will be consider from pool side as _objectsInuse. This way you will eventually end up in situation like in my screenshot. After that happens if you take a look at _dispense function https://github.com/sequelize/sequelize-pool/blob/master/src/Pool.ts#L327, it will be in infinite loop as there is no object available as all N connections are in use.

Hi! Haven't had the time to fully check this out, but do I understand correctly that it might be better to solve this in sequelize-pool? If so, maybe you could make a PR there?

Hi! Haven't had the time to fully check this out, but do I understand correctly that it might be better to solve this in sequelize-pool? If so, maybe you could make a PR there?

It won't be that easy to solve it just in sequelize-pool, because it has no way to tell that handle acquired by acquire() method is already dead and stuck inside running queue - _inUseObjects.

When you mentioned this, maybe this deadlock situation could be solved by introducing timeout value for in use connections https://github.com/sequelize/sequelize-pool/blob/master/src/Pool.ts#L405 and adding loop to check for timeout inside _inUseObjects as well https://github.com/sequelize/sequelize-pool/blob/master/src/Pool.ts#L264

I'm sorry for delaying this but this is something I want to properly understand before merging it

Is this an issue with pg-native only, or is pg impacted too?

You mentionned that the issue was that the retry timeout expired before the error is caught.

If I understand correctly, the error to catch is https://github.com/brano543/sequelize/blob/main/src/dialects/postgres/query.js#L88-L96

And the retry timeout is the one from retry-as-promised

But retry-as-promised isn't able to cancel the execution of Query.run, so in theory that catch block should eventually execute? Wouldnt the connection eventually be marked as _invalid?

If so, would it work to replace connection._invalid = true; with this.pool.destroy(connection); here?

Is this an issue with pg-native only, or is pg impacted too? Both are affected. Pg will either use JS implementation or native implemention, but both don't support autoreconnect feature.

You mentionned that the issue was that the retry timeout expired before the error is caught. Yes and the only way how to fix it is how I did in the pull request. If you check the current code of sequelize query() function. Retry-as-promise receives a callback https://github.com/sequelize/sequelize/blob/v6.17.0/src/sequelize.js#L624 function inside which we wait until query.run() finishes https://github.com/sequelize/sequelize/blob/v6.17.0/src/sequelize.js#L643.

Postgre version of query run is catching ECONNRESET exception for pg and SSL EOF and others in case of pg-native to mark connection as _invalid. https://github.com/sequelize/sequelize/blob/v6.17.0/src/dialects/postgres/query.js#L89

You are correct that replacing this line with destroy of connection is equivalent as what I have done in sequelize.query() which checks whether connection is still valid (in case of PostgreSQL it will compare _invalid flag and _ending flags) for all drivers. https://github.com/brano543/sequelize/blob/main/src/sequelize.js#L659

However initially in this pull request I have added only this part and it was not enough to solve the issue in case retry-as-promise timeout expires before callback finishes (the callback in which we set _invalid or we could even delete the connection).

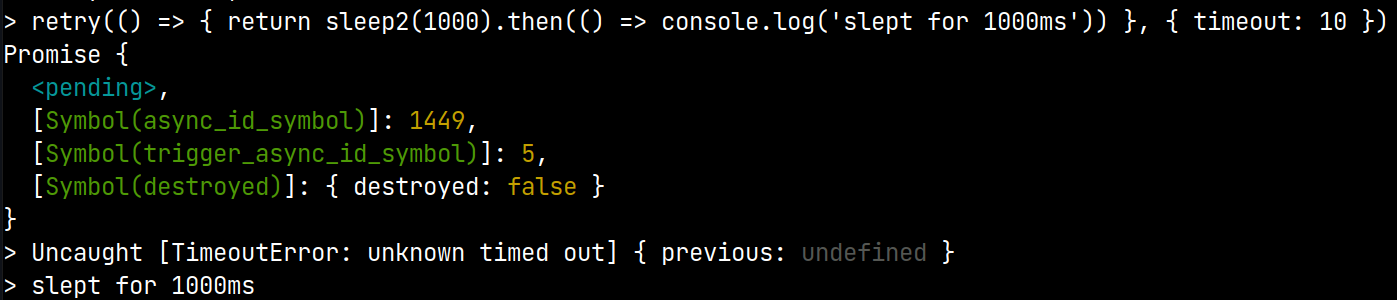

If you take a look at retry-as-promised timeout logic https://github.com/mickhansen/retry-as-promised/blob/master/index.js#L65 you can see it will reject if timeout expires.

What is happening in my test case is that after tcpkill kills connections to DB, sequelize-pool acquire will keep returning dead handle, so eventually we end up with "unknown timed out" and these connections will stay inside _inUsearray forever causing next calls to acquire() end up in infinite loop.

This solution will destroy every connection, including potentially alive ones used by other queries, every time a query has an execution time that's too long, or the network slows down. I'm not super familiar with the connection code yet but this could cause a lot of stability issues, couldn't it?

You are right. I was thinking about what you said. There are really two scenarios we need to distinguish:

- Long running query which just times out, but connection handle is still alive

- Query which times out because I am not able to acquire live handle and being stuck in a deadlock

I will test whether adding boolean variable to check whether we acquired connection or not https://github.com/brano543/sequelize/blob/main/src/sequelize.js#L640 will still work and adjust the pull request. This would mean that on timeout we would destroy connections if and only if I know for certain that I have not acquired connection before timing out.

retry-as-promise timeout expires before callback finishes

It does yes, but that doesn't mean the function was cancelled, as you can see here:

(retry is retry-as-promised, sleep2 is require('timers/promises').setTimeout)

The code that invalidates the query will still be called eventually

This pull request has been marked as maybe-abandoned and has not seen any activity for 14 days. It will be closed if no further activity occurs within the next 30 days. If you would still like to work on this, please comment to let us know and the label will be removed.

This pull request has been marked as maybe-abandoned and has not seen any activity for 14 days. It will be closed if no further activity occurs within the next 30 days. If you would still like to work on this, please comment to let us know and the label will be removed.

This pull request has been marked as maybe-abandoned and has not seen any activity for 14 days. It will be closed if no further activity occurs within the next 30 days. If you would still like to work on this, please comment to let us know and the label will be removed.