Consider validation of string lengths

This would cover the fairly frequent use case of checking lengths of inputs (particularly relevant for various IDs etc.)

The current solution using regex seems a bit clunky for such a simple problem.

I am imagining a function called something like col_strlen_[equal|between|gt(e)|lt(e)]() with its test_/expect_ equivalents.

Two aspects I am not clear on:

- what should the function return when given a column not of type character

- specifically, should it handle labels in factors?

The safe option would be to error out or record failures in all such cases.

What do you think about using preconditions? We can essentially mutate the target column and validate the column using the suite of already available functions. For checking an exact string length, we could make a sample table based on small_table:

library(tidyverse)

library(pointblank)

id_table <-

small_table %>%

select(id = b) %>%

distinct() %>%

bind_rows(

tibble(id = c("6-elwp-284", "1-lwp-909", "2-bnq-811000"))

)

id_table

# A tibble: 15 x 1

id

<chr>

1 1-bcd-345

2 5-egh-163

3 8-kdg-938

4 5-jdo-903

5 3-ldm-038

6 2-dhe-923

7 1-knw-093

8 5-boe-639

9 5-bce-642

10 2-dmx-010

11 7-dmx-010

12 3-dka-303

13 6-elwp-284

14 1-lwp-909

15 2-bnq-811000

and then use this:

create_agent(tbl = id_table, actions = action_levels(stop_at = 1)) %>%

col_vals_equal(

columns = vars(id),

value = 9,

preconditions = ~ . %>% mutate(id = nchar(id))

) %>%

interrogate()

── Interrogation Started - there is a single validation step ───────────

x Step 1: STOP condition met.

── Interrogation Completed ─────────────────────────

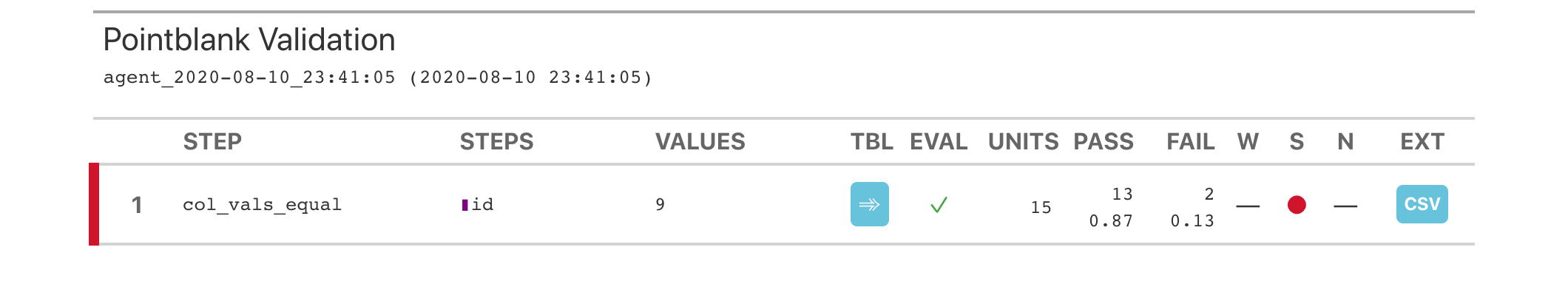

The report looks like this:

...where two test units are flagged as failures (rows 13 and 15).

There's both good and bad things with this methodology. Some good things: no proliferation of new functions, and, we can use validation functions on computed columns, aggregate data, etc. The bad: the nchar() function may not work in a database back end (or it might, it basically needs to be tested). Also, we are not checking whether the column is a character column or a numeric column (though, separate validation steps can do that).

If possible, I'd like to get your opinion on all of this. Thanks!

Many thanks - agree that yours is a workable and parsimonious solution in terms of not proliferating functions. I suspect it is really an empirical question: if something like "checking IDs in character columns" is a common enough use case (which it is for me but may not be for many others), then the accessibility of pointblank's API and readability of the resulting code achieved by a dedicated function might be worth it, together with some predictable handling of non-character columns.

On that last point:

-

nchars()throws an error on a factor but works on a numeric -

stringr::str_length()handles both by converting to character first -

col_vals_regex()also seems to convert numerics and strings to characters before validating)

See reprex below extending yours:

library(tidyverse)

library(pointblank)

id_table <-

small_table %>%

select(id = b) %>%

distinct() %>%

bind_rows(

tibble(id = c("6-elwp-284", "1-lwp-909", "2-bnq-811000"))

)

id_table_fct <- id_table %>%

mutate(id_factor = as.factor(id),

id_num = 1:n())

create_agent(id_table_fct, actions = action_levels(stop_at = 1)) %>%

col_vals_equal(

columns = vars(id),

value = 9,

preconditions = ~ . %>% mutate(id = nchar(id))

) %>%

col_vals_regex(id, "[0-9]{1}") %>% # all should pass

col_vals_regex(id, "[0-9]{10}") %>% # all should fail

col_vals_regex(id_factor, "[0-9]{1}") %>% # all should pass

col_vals_regex(id_factor, "[0-9]{10}") %>% # all should fail

col_vals_regex(id_num, "[0-9]{1}") %>% # all should pass

col_vals_regex(id_num, "[0-9]{10}") %>% # all should fail

interrogate()