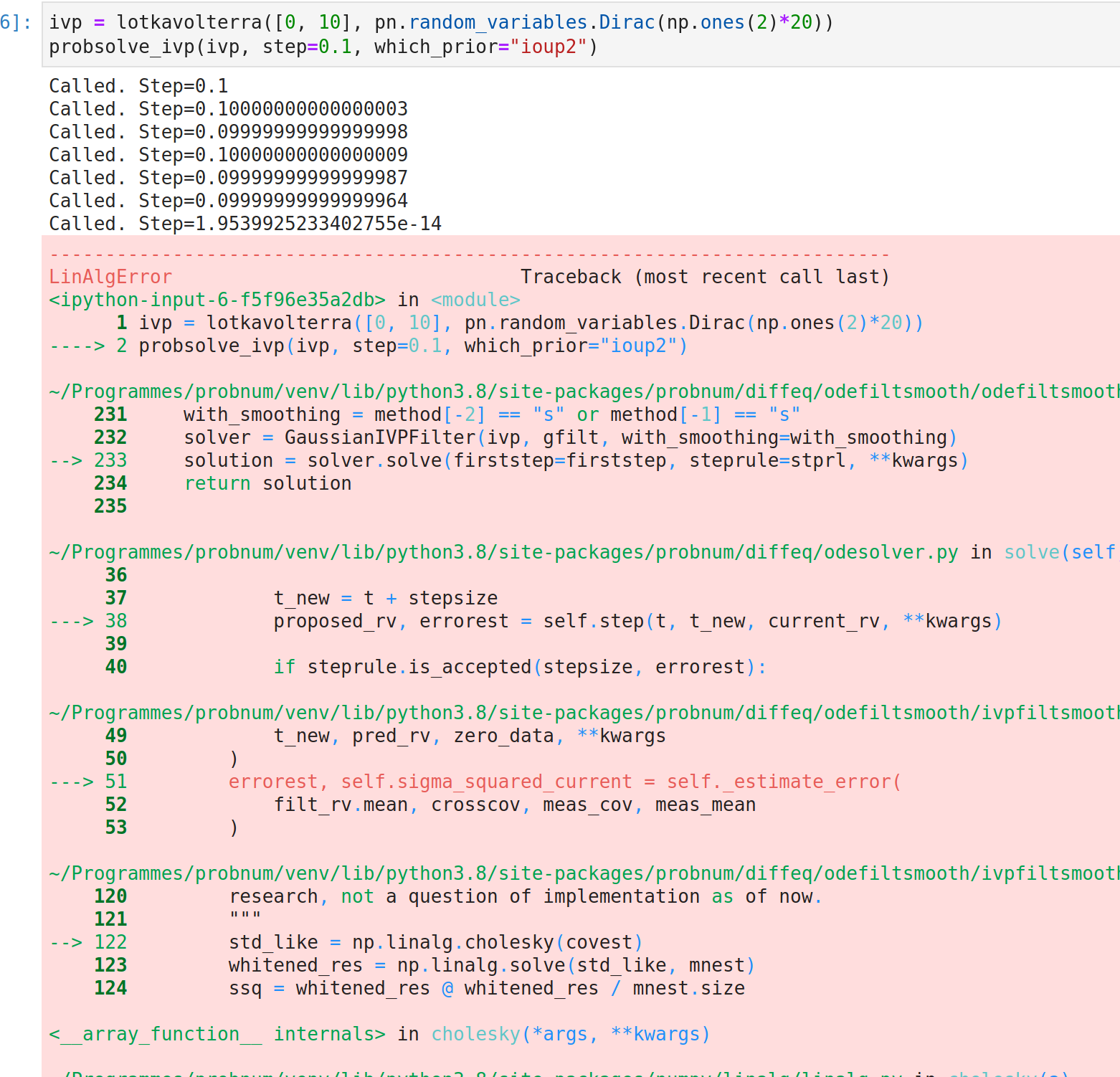

Fixed steps in ODE solver go beyond the domain due to round-off errors

Recently, it started raining LinAlgErrors (out of nowhere). I think I found the culprit.

In ODESolver.solve(), right before the end, it does

suggested_stepsize = self._suggest_step(stepsize, errorest, steprule)

stepsize = min(suggested_stepsize, self.ivp.tmax - t)

which leads to the fact that at the end of the time interval, extremely small values may happen:

As a result, the prediction steps break down because nothing is quite PSD anymore. Not sure what my favourite way of fixing this is. Thoughts, @nathanaelbosch ?

...yes, I am aware that the issue title is clickbait ;)

I'm also not sure about my "favourite way", but I can state what I used in some of my own code.

I would describe the method right now as "if the next step overshoots, then we reduce the step size such that we hit t_max exactly", but as you say, this leads to small steps. Instead I started doing "if the step after this one would be smaller than we'd like, then let's have a slightly larger step right now".

In code, right now we have

stepsize = min(suggested_stepsize, self.ivp.tmax - t)

and I describe something like

stepsize = suggested_stepsize if t+suggested_stepsize+minstep < self.ivp.tmax else self.ivp.tmax - t

with some new parameter minstep which should describe the minimum step we are willing to take.

From my own experience, this resolved some problems I ran into. But at the same time it does not feel ideal having to do a larger step than planned. Still, for the case of fixed steps, this last minuscule step is just a numerical artifact anyways, and increasing the previous step by 1e-10 or something should not alter the result in most cases.

Hmmm interesting. I think that is a valid approach. What I fixed in my own code currently (locally) is to just stop if the step is unpredictably small. To this end, I slightly rewrote the solve thing:

while stepsize > 0:

t_new = t + stepsize

proposed_rv, errorest = self.step(t, t_new, current_rv, **kwargs)

if steprule.is_accepted(stepsize, errorest):

self.num_steps += 1

t = t_new

current_rv = proposed_rv

times.append(t)

rvs.append(current_rv)

self.method_callback(

time=t_new, current_guess=proposed_rv, current_error=errorest

)

stepsize = self._new_step(stepsize, errorest, steprule, t)

odesol = self.postprocess(times=times, rvs=rvs)

return odesol

def _new_step(self, step, errorest, steprule, t):

"""

Suggests step according to steprule and warns if step is extremely small.

Raises

------

RuntimeWarning

If suggested step is smaller than :math:`10^{-15}`.

"""

suggested_step = steprule.suggest(step, errorest)

step = min(suggested_step, self.ivp.tmax - t)

if step < 1e-13:

warnmsg = f" Stepsize is almost zero: step={str(step)} (t={str(t)})"

warnings.warn(message=warnmsg, category=RuntimeWarning)

return -1.0

return step

It essentially keeps going while proposed steps are positive and if it is too small, it just says -1 and done. This isn't optimal either, but it somewhat felt natural to put this into the warning-check inside suggest step

Not sure how exactly, but it feels like this while step > 0 is kind of a proxy for while status is None: solver.step() which is what scipy does (https://github.com/scipy/scipy/blob/v1.5.2/scipy/integrate/_ivp/ivp.py#L156-L663)

How about, if a step that is larger than what is needed is proposed, this large step is done and the final point is evaluated with interpolation? This would be the smallest code change, no?

Yes that could work and sounds like a sensible thing to do :+1:

I could not reproduce this btw. Is still still an open problem?

I mean, we haven't fixed it. Though if the tests pass, it may have fixed itself somehow...

Shall we close this? It seems to have fixed itself.

Yes I'd also say so, we can always re-open if we get these errors again.