debugger: Message Queue Dumping not showing any pending operations

Hello my name is Josh Cottingham, and I work for Arm.

This is new issue with MPICH 4.1a1 and is not experienced with MPICH 4.0.2. Possibly due to a change in #5757, it appears as though even with a simple scenario our calls to mqs_pending_operation are always returning non zero (mqs_end_of_list) after the operation iterator is set up (even when there is definitely expected to be a pending in the queue).

Reproducer

#include <stdlib.h>

#include <stdio.h>

#include <string.h>

#include <mpi.h>

int main(int argc, char **argv)

{

int bufferSize = 137;

char buffer[bufferSize];

MPI_Status status;

int src, dest, receiver;

int rank, size;

int tag;

MPI_Init(&argc, &argv);

MPI_Comm_rank(MPI_COMM_WORLD, &rank);

MPI_Comm_size(MPI_COMM_WORLD, &size);

receiver = (rank&1) == 0;

if (receiver) {

src = rank + 1;

dest = rank;

tag = 1<<dest;

MPI_Recv(buffer, bufferSize, MPI_CHAR, src, tag, MPI_COMM_WORLD, &status); // [at receive]

printf("Rank %d message receieved from %d\n", rank, src);

}

printf("Rank %d\n", rank);

if (!receiver) {

src = rank;

dest = rank - 1;

tag = 1<<dest;

MPI_Ssend("Hi", 3, MPI_CHAR, dest, tag, MPI_COMM_WORLD); // [at send]

printf("Rank %d message sent to %d\n", rank, dest);

}

printf("Rank %d\n", rank);

MPI_Finalize();

return 0;

}

You can reproduce this issue using Arm DDT. Compile the above code and open with DDT with 2 processes. Run to the [at send] tag with DDT. Open the Message Queue (Tools->Message Queues) and notice that there is nothing reported in the queue.

Let us know if you having any trouble reproducing this issue and we can see how we can assist further, e.g by enabling extended debug logging? If you do not currently have a Arm Forge license let me know and we can sort you out with one.

Also worth noting that we were hitting a separate issue with MPICH 4.0.x. This was a bug in mqs_image_has_queues where offsets were wrong for MPIDI_Devreq_t (following an incorrect structure), which caused it to read from the wrong parts of memory and giving bad rank/tag values. This is no longer present with the changes in #5757. Not sure if there is a plan to release any more support releases for 4.0.x so haven't raised a separate ticket yet but can raise one if there are more releases planned for this branch.

I suspect we aren't tracking blocking operations with the changes to the message queue code. I'll try a fix today and tag you once I have something to try.

Not sure if there is a plan to release any more support releases for 4.0.x

@raffenet can confirm, but I believe we will have more support releases for 4.0.x, at least a last one right before we mark 4.1 as stable.

Thanks @hzhou, will wait till @raffenet confirms before raising that issue then.

Another bugfix release in 4.0.x is a good idea. I'll have to check whether fixing that bug in place is worth the effort, or if we can just backport the new queue dumping code as a whole.

@Josh-Cottingham-Arm I created #6133 to hopefully address this issue. Since it applies to the binding generation code, you need to run ./autogen.sh -do=gen_binding_c from the base source directory, then re-compile in order for the change to take effect.

Hey @raffenet , thanks for the quick response and sorry for the delay (was off on holiday). I tried the fix provided in #6133 (making sure to re-generate the c bindings) but it appears that the issue is still present and it always returns 2 (mqs_end_of_list).

Just for clarity, we were getting returns of mqs_ok with MPICH 4.0.2 (just that the returned info contained incorrect data because of the bug I previously mentioned).

Created #6141 for the 4.0.x bug

Hey @raffenet , thanks for the quick response and sorry for the delay (was off on holiday). I tried the fix provided in #6133 (making sure to re-generate the c bindings) but it appears that the issue is still present and it always returns 2 (mqs_end_of_list).

Just for clarity, we were getting returns of mqs_ok with MPICH 4.0.2 (just that the returned info contained incorrect data because of the bug I previously mentioned).

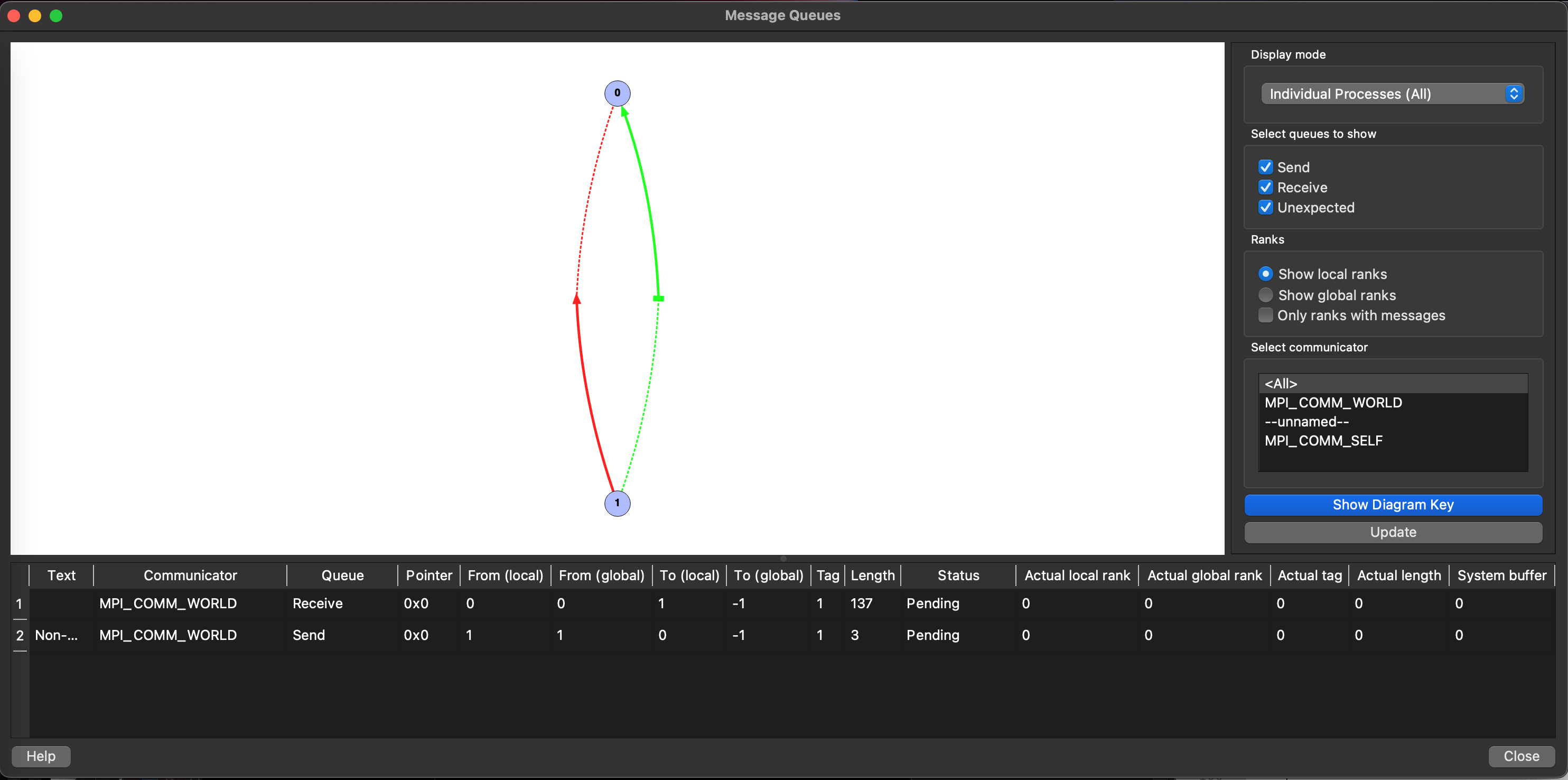

Here is what I see using the test program and my PR branch. Process 1 is paused at line 34, so the MPI_Ssend has not yet begun. Process 0 is paused inside the MPI_Recv, which is shown in the message queue info.

After stepping Process 0 into the MPI_Ssend far enough to expose the uncompleted request to the debugger:

Sorry for the long response, have not yet been able to pick up testing this again.

Perhaps the issue I was facing was due to me incorrectly building (I did regenerate the binding code with ./autogen.sh -do=gen_binding_c but couldn't build from source as was struggling with automake version mismatch). I instead patched the 4.1a1 source tar with this change and rebuilt.

One note that is interesting from the screenshots you sent is that it looks like we are still facing similar issues to what were described in https://github.com/pmodels/mpich/issues/6141. I would be interested in gathering some logs from you to help diagnose why these numbers do not look correct. Perhaps we can take that discussion offline to see if we can work through that together?

One note that is interesting from the screenshots you sent is that it looks like we are still facing similar issues to what were described in #6141. I would be interested in gathering some logs from you to help diagnose why these numbers do not look correct. Perhaps we can take that discussion offline to see if we can work through that together?

Which numbers in particular? To my knowledge, some information such as local-to-global rank translation has never been implemented in MPICH, so those fields can't be trusted. Tag and length look good. "Actual" values, I'll have to take a closer look at.

I do plan on getting the local-to-global translation working at some point, it just hasn't been very high priority.

Ah apologies, I was not aware that the local-to-global rank translations have not been implemented yet for MPICH. In that case please ignore that comment there, everything looks as expected.

Am still interested as to why when I tried the fix that I did not see the same. Out of curiosity does it show nothing in the Message Queue dialog without this fix (i.e. with 4.1.a1)?

Am still interested as to why when I tried the fix that I did not see the same. Out of curiosity does it show nothing in the Message Queue dialog without this fix (i.e. with 4.1.a1)?

That's interesting. My patch should only affect the visibility of the MPI_Ssend operation, as it was previously ignored by the debug interface. AFAICT, the blocking MPI_Recv should have been visible even in 4.1a1.

AFAICT, the blocking MPI_Recv should have been visible even in 4.1a1.

I have had a bit more time to investigate this and turns out we were building our MPICH packages with --with-device=ch3:sock and this seems to have been what has caused us to see the issue to begin with. Not sure this is really needed in our case anyway and using default settings appears to work fine.