Question about "gym.vector.SyncVectorEnv"

Hello,

I have a question regarding "gym.vector.SyncVectorEnv". The documentation gives this explanation: "where the different copies of the environment are executed sequentially".

My question is: what do you mean by sequentially? Do you mean that we execute one env per episode?

To clarify my question, let me explain what I am trying to do:

I am trying to use DDPG (Stable-Baselines3) to solve a problem.

I would like to know how to change the values sampled by the env in every episode, in a way that is reproducible.

For example, assume we have an env where we harvest energy, and the harvested energy is normally distributed. In every episode I will sample DIFFERENT values of the harvested energy. I would like to emphasize again that these values must be reproducible, so that I can compare the RL method to other methods.

PS: the custom env is already created, and in it I can change the sampled values in every episode (using a seed that takes the episode index "i" as input). My problem now is how to fit it into code that uses Gym and Stable-Baselines3.

I think "gym.vector.SyncVectorEnv" is my solution, but I am not sure.

Thank you. Best regards

Hi @Missourl, in this context "sequentially" basically just means we run a for loop over the environments. Within the sync vector env, the step looks similar to this:

    def step(self, actions):
        # Step each sub-environment in turn, one after another
        for env, action in zip(self.envs, actions):
            results = env.step(action)

There is additional grouping of the results to return the data in the expected format.
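To make the loop and the grouping step concrete, here is a minimal self-contained sketch. It is not the Gym source code: `ToyEnv` and `ToySyncVector` are hypothetical stand-ins written only for illustration, and the real `SyncVectorEnv` does more than this.

```python
import random


class ToyEnv:
    """Hypothetical stand-in for a Gym environment (not part of Gym)."""

    def __init__(self, seed):
        self.rng = random.Random(seed)  # private generator for this copy
        self.t = 0

    def step(self, action):
        self.t += 1
        obs = self.rng.random()      # toy observation
        reward = float(action)       # toy reward: echo the action
        done = self.t >= 3           # toy episode length of 3 steps
        return obs, reward, done, {}


class ToySyncVector:
    """Hypothetical wrapper that steps its environments one after another."""

    def __init__(self, envs):
        self.envs = envs

    def step(self, actions):
        # "Sequentially": a plain for loop over the environment copies
        results = [env.step(a) for env, a in zip(self.envs, actions)]
        # Grouping: turn a list of per-env tuples into per-field lists
        observations, rewards, dones, infos = zip(*results)
        return list(observations), list(rewards), list(dones), list(infos)


vec = ToySyncVector([ToyEnv(seed=i) for i in range(3)])
obs, rewards, dones, infos = vec.step([0, 1, 2])
```

Each call to `vec.step` advances all three copies by one time step, so the copies run side by side within an episode rather than one copy per episode.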

Could you clarify the second part of your question in light of this answer?

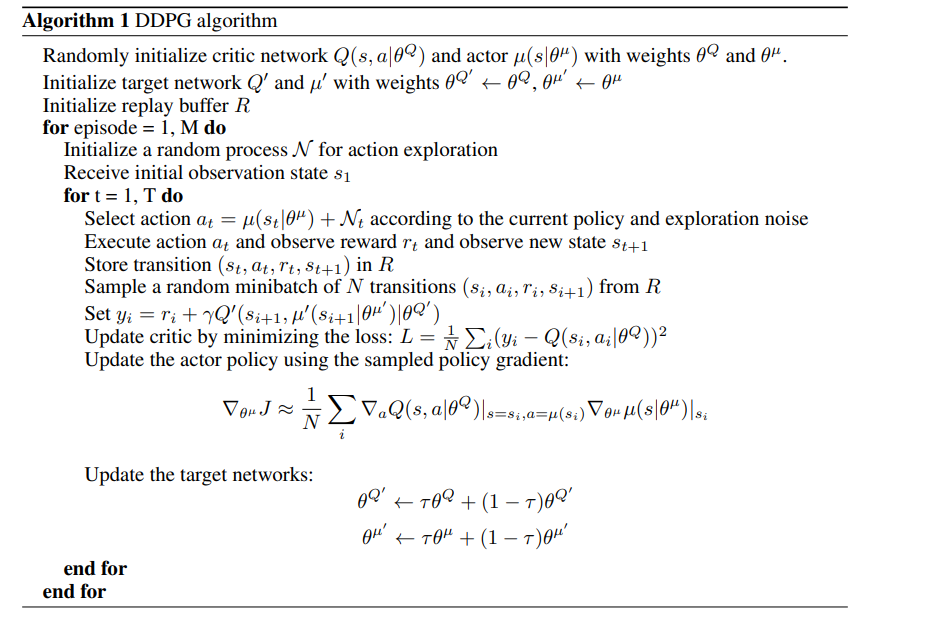

Thank you for your answer. I will try to explain with the help of the DDPG paper.

In the first loop (episode from 1 to M) I call random.seed(episode). The second loop (t from 1 to T) is implemented inside the env, so t is incremented automatically until it reaches T.
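The two loops described above can be sketched roughly as follows. This is only an illustration under assumptions: the values of `M` and `T`, the mean and standard deviation of the harvested energy, and the helper name `run_episodes` are all made up, and a dedicated `random.Random(episode)` generator is used here instead of the global `random.seed(episode)` so that nothing else in the program can disturb the sequence.

```python
import random

M = 3  # number of episodes (assumed value)
T = 4  # time steps per episode (assumed value)


def run_episodes(num_episodes, steps_per_episode):
    """Return the harvested-energy samples drawn in each episode."""
    history = []
    for episode in range(1, num_episodes + 1):
        # A generator seeded by the episode index: every episode gets its
        # own values, and the same index always yields the same values
        rng = random.Random(episode)
        # Inner loop over t = 1..T; mean 1.0 and std 0.2 are assumptions
        samples = [rng.gauss(1.0, 0.2) for _ in range(steps_per_episode)]
        history.append(samples)
    return history


first_run = run_episodes(M, T)
second_run = run_episodes(M, T)
```

Here `first_run` and `second_run` contain identical values, while the episodes within one run differ from each other, which is the property needed to compare RL against other methods on the same data.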

As already mentioned, my aim is to have reproducible values from my env, so I can compare RL to other algorithms.

I am not sure if I am being clear; if not, please tell me and I will try again.

Thank you. Best regards

Gym is agnostic to RL algorithms; its sole purpose is to provide an API for environment development.

Reading the pseudocode, my understanding is that "initialize a random process N for action exploration" is not the same as env.seed(episode). These are completely different random processes. The algorithm is agnostic to the environment's random process.
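As a rough illustration of why these are separate random processes, the sketch below keeps one generator for the environment and another for the exploration noise. The function name `rollout`, the base action of 0.5, and the distribution parameters are hypothetical and chosen only for this example; this is not how Gym or Stable-Baselines3 implement it.

```python
import random


def rollout(env_seed, noise_seed, steps=5):
    """Toy rollout with two independent random processes."""
    env_rng = random.Random(env_seed)      # the environment's randomness
    noise_rng = random.Random(noise_seed)  # exploration noise N on actions
    energies, actions = [], []
    for _ in range(steps):
        # The environment samples its harvested energy from its own generator
        energies.append(env_rng.gauss(1.0, 0.2))
        # The agent perturbs a fixed base action with exploration noise
        actions.append(0.5 + noise_rng.gauss(0.0, 0.1))
    return energies, actions


# Same environment seed, different exploration-noise seeds
energies_a, actions_a = rollout(env_seed=7, noise_seed=0)
energies_b, actions_b = rollout(env_seed=7, noise_seed=1)
```

With the same `env_seed`, the harvested-energy values are identical in both rollouts even though the exploration noise, and therefore the actions, differ.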

SyncVectorEnv is designed to help speed up code by running the environments in batches rather than one at a time.

No, I am not using random.seed() to implement "initialize a random process N for action exploration". I am using env.seed(episode) (call it episode or iteration) to train my agent for many epochs, with different but reproducible values from my env in each epoch.

But I got my answer: SyncVectorEnv is not what I am looking for.

Thanks a lot :)