evals

Word Count Eval (40% accuracy, 100+ samples)

Thank you for contributing an eval! ♥️

🚨 Please make sure your PR follows these guidelines. Failure to follow the guidelines below will result in the PR being closed automatically. Note that even if the criteria are met, that does not guarantee that the PR will be merged or that GPT-4 access will be granted. 🚨

PLEASE READ THIS:

In order for a PR to be merged, it must fail on GPT-4. We are aware that right now, users do not have access, so you will not be able to tell if the eval fails or not. Please run your eval with GPT-3.5-Turbo, but keep in mind that when we run the eval, if GPT-4 scores higher than 90%, we will likely reject it, since GPT-4 is already capable of completing the task.

We plan to roll out a way for users submitting evals to see the eval performance on GPT-4 soon. Stay tuned! Until then, you will not be able to see the eval performance on GPT-4. We encourage partial PRs with ~5-10 examples that we can run the evals on, sharing the results with you so you know how your eval does with GPT-4 before writing all 100 examples.

Eval details 📑

Eval name

Word Count Eval 127 samples. 40% accuracy.

Eval description

Given a set of popular movie phrases (generated by ChatGPT), this eval tests whether the model can count how many words are in each phrase. Some phrases are fairly short (fewer than 5 words), but even on those the model fails more often than not.

[2023-03-14 20:00:05,583] [data.py:78] Fetching word_count/samples.jsonl

[2023-03-14 20:00:05,587] [eval.py:30] Evaluating 127 samples

[2023-03-14 20:00:05,594] [eval.py:136] Running in threaded mode with 10 threads!

100%|██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 127/127 [00:08<00:00, 15.28it/s]

[2023-03-14 20:00:13,923] [record.py:320] Final report: {'accuracy': 0.4015748031496063}. Logged to /tmp/evallogs/230315030005OBO6TV34_gpt-3.5-turbo_word-count.jsonl

[2023-03-14 20:00:13,924] [oaieval.py:209] Final report:

[2023-03-14 20:00:13,924] [oaieval.py:211] accuracy: 0.4015748031496063

[2023-03-14 20:00:13,943] [record.py:309] Logged 381 rows of events to /tmp/evallogs/230315030005OBO6TV34_gpt-3.5-turbo_word-count.jsonl: insert_time=18.995ms
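
As a quick sanity check on the log above, the reported accuracy corresponds to 51 of the 127 samples being answered correctly. The snippet below is only illustrative arithmetic on the logged numbers; the variable names are made up for this example and are not part of the evals codebase.

```python
# Values copied from the run log above.
reported_accuracy = 0.4015748031496063
num_samples = 127

# Recover the number of correct answers implied by the reported accuracy.
num_correct = round(reported_accuracy * num_samples)
print(num_correct, "of", num_samples, "samples correct")

# The fraction reproduces the logged accuracy to floating-point precision.
assert abs(num_correct / num_samples - reported_accuracy) < 1e-12
```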

What makes this a useful eval?

Counting the number of words in a sentence is a very simple task for humans. It would be interesting to use this eval to find out when models develop the emergent ability to perform basic arithmetic.

These are very basic questions for a human, yet the model gets them wrong more often than not. I think this eval makes for a really solid baseline when comparing to human intelligence.

Criteria for a good eval ✅

Below are some of the criteria we look for in a good eval. In general, we are seeking cases where the model does not do a good job despite being capable of generating a good response (note that there are some things large language models cannot do, so those would not make good evals).

Your eval should be:

- [x] Thematically consistent: The eval should be thematically consistent. We'd like to see a number of prompts all demonstrating some particular failure mode. For example, we can create an eval on cases where the model fails to reason about the physical world.

- [x] Contains failures where a human can do the task, but either GPT-4 or GPT-3.5-Turbo could not.

- [x] Includes good signal around what is the right behavior. This means either a correct answer for `Basic` evals or the `Fact` Model-graded eval, or an exhaustive rubric for evaluating answers for the `Criteria` Model-graded eval.

- [x] Include at least 100 high quality examples (it is okay to only contribute 5-10 meaningful examples and have us test them with GPT-4 before adding all 100)

If there is anything else that makes your eval worth including, please document it below.

Unique eval value

Insert what makes your eval high quality that was not mentioned above. (Not required)

Eval structure 🏗️

Your eval should

- [x] Check that your data is in `evals/registry/data/{name}`

- [x] Check that your yaml is registered at `evals/registry/evals/{name}.jsonl`

- [x] Ensure you have the right to use the data you submit via this eval

(For now, we will only be approving evals that use one of the existing eval classes. You may still write custom eval classes for your own cases, and we may consider merging them in the future.)

Final checklist 👀

Submission agreement

By contributing to Evals, you are agreeing to make your evaluation logic and data available under the same MIT license as this repository. You must have adequate rights to upload any data used in an Eval. OpenAI reserves the right to use this data in future service improvements to our product. Contributions to OpenAI Evals will be subject to our usual Usage Policies (https://platform.openai.com/docs/usage-policies).

- [x] I agree that my submission will be made available under an MIT license and complies with OpenAI's usage policies.

Email address validation

If your submission is accepted, we will be granting GPT-4 access to a limited number of contributors. Access will be given to the email address associated with the merged pull request.

- [x] I acknowledge that GPT-4 access will only be granted, if applicable, to the email address used for my merged pull request.

Limited availability acknowledgement

We know that you might be excited to contribute to OpenAI's mission, help improve our models, and gain access to GPT-4. However, due to the requirements mentioned above and high volume of submissions, we will not be able to accept all submissions and thus not grant everyone who opens a PR GPT-4 access. We know this is disappointing, but we hope to set the right expectation before you open this PR.

- [x] I understand that opening a PR, even if it meets the requirements above, does not guarantee the PR will be merged nor GPT-4 access granted.

Submit eval

- [x] I have filled out all required fields in the evals PR form

- [x] (Ignore if not submitting code) I have run `pip install pre-commit; pre-commit install` and have verified that `black`, `isort`, and `autoflake` are running when I commit and push

Failure to fill out all required fields will result in the PR being closed.

Eval JSON data

Since we are using Git LFS, we are asking eval submitters to add in as many Eval Samples (at least 5) from their contribution here:

View evals in JSON

Eval

{"input": [{"role": "system", "content": "You are a word counter. Reply ONLY with the number of words in the message."}, {"role": "user", "content": "Are you not entertained?"}], "ideal": "4"}

{"input": [{"role": "system", "content": "You are a word counter. Reply ONLY with the number of words in the message."}, {"role": "user", "content": "As if!"}], "ideal": "2"}

{"input": [{"role": "system", "content": "You are a word counter. Reply ONLY with the number of words in the message."}, {"role": "user", "content": "As you wish."}], "ideal": "3"}

{"input": [{"role": "system", "content": "You are a word counter. Reply ONLY with the number of words in the message."}, {"role": "user", "content": "Be careful what you wish for."}], "ideal": "6"}

{"input": [{"role": "system", "content": "You are a word counter. Reply ONLY with the number of words in the message."}, {"role": "user", "content": "Bond. James Bond."}], "ideal": "3"}

Wow, this is really interesting. I tried a few with GPT-4, and it does indeed fail! Do you know what makes this task so difficult?

I'm sure others can speak more intelligently on this topic, but I think it exemplifies the "next-token-predictor" nature of GPT models. It's fascinating to me that GPT-4 can perform high-level logic tasks (indicating that there is at least some emergent behavior beyond just "predict next token") - yet somehow fails at a grade-school task.

I wonder what's so difficult about arithmetic. Perhaps just the way in which number tokens are weighted?

Semi-related HN discussion on GPT-2 being more than just a next-word predictor: https://news.ycombinator.com/item?id=34821414

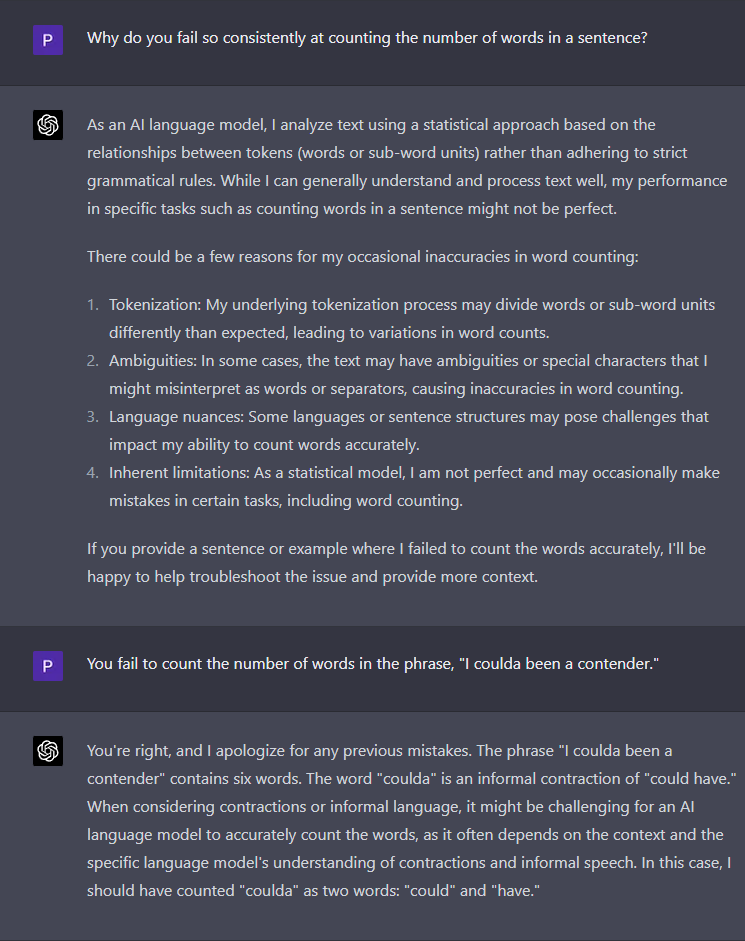

Some self-analysis by Chat GPT-4:

Cool eval, but I wonder if there are false negatives where the AI replies with the number spelled out in English (e.g. "four") instead of writing the digits? You could add multiple ideal values and use the fuzzy matcher to be sure.
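
A minimal sketch of that suggestion, assuming the sample format accepts a list of acceptable answers in `ideal` and that the eval is registered with a fuzzy-matching eval class (both are assumptions about the framework, not something confirmed in this thread). The helper `make_sample` and the `NUMBER_WORDS` table are hypothetical names written only for illustration.

```python
import json

SYSTEM_PROMPT = (
    "You are a word counter. Reply ONLY with the number of words in the message."
)

# Hypothetical lookup for spelling out small counts; extend as needed.
NUMBER_WORDS = {
    1: "one", 2: "two", 3: "three", 4: "four", 5: "five",
    6: "six", 7: "seven", 8: "eight", 9: "nine", 10: "ten",
}


def make_sample(phrase: str, count: int) -> dict:
    """Build one sample whose `ideal` lists the digit form and, if known, the word form."""
    ideal = [str(count)]
    if count in NUMBER_WORDS:
        ideal.append(NUMBER_WORDS[count])
    return {
        "input": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": phrase},
        ],
        "ideal": ideal,
    }


sample = make_sample("Are you not entertained?", 4)
print(json.dumps(sample))  # one JSONL line, ready to append to samples.jsonl
```

With both forms listed, a reply of "4" or "four" would count as correct, which separates genuine miscounts from formatting mismatches.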

@andrew-openai if you have a moment, would you mind taking a look at this? Thanks!

Just rebased on main, so we can run this in the CI (after your approval)!

Thanks for opening this PR. Counting is a well-known failure mode of the model due to a common underlying issue in LLMs. In its current form, this eval does not seem to expose any new gaps in our understanding of model performance. We also know that this could be solved by giving the model a code interpreter. For example, in this particular case the bash command `wc -w` will do the job.
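
To illustrate the code-interpreter point, here is a rough Python equivalent of `wc -w`, applied to the five sample phrases listed in this PR. It is a sketch, not part of the evals codebase: `count_words` is a hypothetical helper, and splitting on whitespace is assumed to match `wc -w` closely enough for these plain ASCII phrases.

```python
def count_words(text: str) -> int:
    """Count whitespace-separated tokens, roughly what `wc -w` reports."""
    return len(text.split())


# (phrase, ideal) pairs copied from the eval samples in this PR.
SAMPLES = [
    ("Are you not entertained?", "4"),
    ("As if!", "2"),
    ("As you wish.", "3"),
    ("Be careful what you wish for.", "6"),
    ("Bond. James Bond.", "3"),
]

for phrase, ideal in SAMPLES:
    counted = count_words(phrase)
    status = "ok" if str(counted) == ideal else "MISMATCH"
    print(f"{phrase!r}: counted={counted} ideal={ideal} {status}")
```

The same loop doubles as a cheap check that the `ideal` values in a samples file agree with a mechanical count.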

If you're still interested in writing an eval, we've noticed that these criteria make good evals. If you have any particular use case in mind for the model, can you come up with an eval that has some of these attributes?

- Multi-step reasoning

- Domain or Application specific

- Open-Ended responses

- Complex instructions

- The eval seems obvious, but tricks the model in a novel way

I'm closing this PR, but feel free to open a new one with the above suggestions.