text-generation-webui

AssertionError: Torch not compiled with CUDA enabled

Describe the bug

I fixed a few of the problems I had when installing this, but this error has kept popping up for the past couple of days and I badly need help (AssertionError: Torch not compiled with CUDA enabled).

Is there an existing issue for this?

- [X] I have searched the existing issues

Reproduction

https://www.youtube.com/watch?v=lb_lC4XFedU&ab_channel=Aitrepreneur

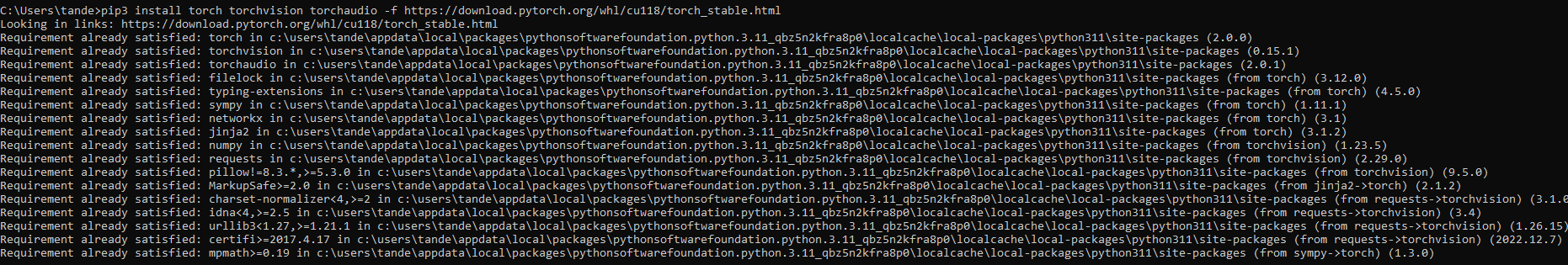

Screenshot

Logs

INFO:Gradio HTTP request redirected to localhost :)

bin C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\bitsandbytes\libbitsandbytes_cpu.dll

C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\bitsandbytes\cextension.py:33: UserWarning: The installed version of bitsandbytes was compiled without GPU support. 8-bit optimizers, 8-bit multiplication, and GPU quantization are unavailable.

warn("The installed version of bitsandbytes was compiled without GPU support. "

INFO:Loading PygmalionAI_pygmalion-6b...

Loading checkpoint shards: 100%|█████████████████████████████████████████████████████████| 2/2 [00:37<00:00, 18.69s/it]

Traceback (most recent call last):

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\text-generation-webui\server.py", line 872, in <module>

shared.model, shared.tokenizer = load_model(shared.model_name)

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\text-generation-webui\modules\models.py", line 90, in load_model

model = model.cuda()

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\torch\nn\modules\module.py", line 905, in cuda

return self._apply(lambda t: t.cuda(device))

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\torch\nn\modules\module.py", line 797, in _apply

module._apply(fn)

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\torch\nn\modules\module.py", line 797, in _apply

module._apply(fn)

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\torch\nn\modules\module.py", line 820, in _apply

param_applied = fn(param)

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\torch\nn\modules\module.py", line 905, in <lambda>

return self._apply(lambda t: t.cuda(device))

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\torch\cuda\__init__.py", line 239, in _lazy_init

raise AssertionError("Torch not compiled with CUDA enabled")

AssertionError: Torch not compiled with CUDA enabled

System Info

Processor AMD Ryzen 5 3600 6-Core Processor 3.59 GHz

Installed RAM 16.0 GB

System type 64-bit operating system, x64-based processor

Edition Windows 10 Home

Version 22H2

I also know others have had this issue, but their solutions didn't help me (https://github.com/open-mmlab/mmsegmentation/issues/1192) (https://github.com/oobabooga/text-generation-webui/discussions/351).

You did not install GPU support. I don't know what your GPU is, so it's hard to say whether it can be used at all. Either try installing again with GPU support, or run on the CPU with the --cpu flag (there is also an option for this in the web UI when you load a model).
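To confirm that diagnosis, you can ask PyTorch directly whether it sees a CUDA device. This is a minimal sketch, meant to be run with the Python interpreter from the installer's environment; the helper name `torch_cuda_status` is made up for illustration, while `torch.cuda.is_available()` and `torch.version.cuda` are standard PyTorch attributes.

```python
import importlib.util


def torch_cuda_status() -> str:
    """Describe the CUDA support of the PyTorch build in this environment."""
    # Avoid a hard failure if torch is missing from the active environment.
    if importlib.util.find_spec("torch") is None:
        return "torch is not installed in this environment"

    import torch

    # torch.version.cuda is None for CPU-only builds.
    if torch.cuda.is_available():
        return f"CUDA is available (torch.version.cuda = {torch.version.cuda})"
    return f"CUDA is not available (torch.version.cuda = {torch.version.cuda})"


print(torch_cuda_status())
```

If this reports that CUDA is not available, any call such as `model.cuda()` will raise the AssertionError shown in the logs, and running with --cpu is the way around it.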

Where do you add that flag? I don't want to make things worse. My GPU is an AMD Radeon RX 6600. I read that I can modify my code to use only the CPU for computation by removing the --cuda flag or any other references to CUDA, but that doesn't seem to work either. @LaaZa

I think you should use a GGML model instead. You are going to run into issues with memory otherwise.

TehVenom/Pygmalion-7b-4bit-Q4_1-GGML is a GGML model of Pygmalion-7B, which is the same model but with a different, better base model, LLaMA-7B. It should be a bit better than the original 6B. Alternatively, use a smaller variant of the normal Pygmalion, like 2.7B, though it is not going to be very good.

Any flags you have can be edited in webui.py, line 164:

run_cmd("python server.py --cpu --chat --model-menu", environment=True)

If you want to load the model in the web UI rather than beforehand, which also lets you change settings, remove --model-menu.
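To make the effect of those flags concrete, here is a small sketch that assembles the command string passed to `run_cmd`. The helper `build_launch_command` is hypothetical and not part of webui.py; only the three flags come from this thread.

```python
def build_launch_command(cpu: bool = True, chat: bool = True, model_menu: bool = True) -> str:
    """Assemble the server.py command line from the flags quoted in this thread."""
    parts = ["python", "server.py"]
    if cpu:
        parts.append("--cpu")         # run on the CPU instead of calling model.cuda()
    if chat:
        parts.append("--chat")        # start in chat mode
    if model_menu:
        parts.append("--model-menu")  # ask which model to load at startup
    return " ".join(parts)


# With the menu: the model is chosen in the console before the UI starts.
print(build_launch_command())
# Without the menu: the model is loaded later from the web UI.
print(build_launch_command(model_menu=False))
```

In practice you would simply edit the string on that line of webui.py by hand; the helper only shows which part of the string each flag occupies.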

Okay, sorry, but I just don't know what to do with the information you provided above. webui.py won't stay open for more than a couple of seconds, so I'm just going to reinstall with the model you gave me. @LaaZa

What do you mean by "won't stay open"? You should still run it using start_windows.bat.

When I click on start_windows it won't open, and it keeps giving me the error I got in the beginning. Plus, I tried your suggestion of https://huggingface.co/TehVenom/Pygmalion-7b-4bit-Q4_1-GGML and it gives me an error (OSError: models\TehVenom_Pygmalion-7b-4bit-Q4_1-GGML does not appear to have a file named config.json. Checkout 'https://huggingface.co/models\TehVenom_Pygmalion-7b-4bit-Q4_1-GGML/None' for available files). I read it and tried to download the model by pasting TehVenom/Pygmalion-7b-4bit-Q4_1-GGML when asked which model to use, and received that error. @LaaZa

Okay, it appears that it is trying to load a normal model and not a GGML one, because the model file isn't named properly for textgen. Rename Pygmalion-7b-4bit-Q4_1-GGML.bin to something like ggml-Pygmalion-7b-4bit-Q4_1.bin.
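The rename suggested above can also be done from Python. This is only a sketch: the helper name `rename_for_ggml_loader` is made up, and the demonstration uses an empty placeholder file in a temporary directory rather than the real model folder.

```python
import tempfile
from pathlib import Path


def rename_for_ggml_loader(model_file: Path) -> Path:
    """Rename e.g. 'X-GGML.bin' to 'ggml-X.bin' and return the new path."""
    stem = model_file.stem
    if stem.lower().startswith("ggml"):
        return model_file  # already carries the prefix, nothing to do
    if stem.lower().endswith("-ggml"):
        stem = stem[: -len("-ggml")]  # drop the trailing marker
    target = model_file.with_name(f"ggml-{stem}{model_file.suffix}")
    model_file.rename(target)
    return target


# Demonstration in a throwaway directory, using an empty placeholder file.
with tempfile.TemporaryDirectory() as tmp:
    original = Path(tmp) / "Pygmalion-7b-4bit-Q4_1-GGML.bin"
    original.touch()
    renamed = rename_for_ggml_loader(original)
    print(renamed.name)
```

Note that it is the .bin file itself that gets renamed, not the folder that contains it.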

INFO:Gradio HTTP request redirected to localhost :)

bin C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\bitsandbytes\libbitsandbytes_cpu.dll

C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\bitsandbytes\cextension.py:33: UserWarning: The installed version of bitsandbytes was compiled without GPU support. 8-bit optimizers, 8-bit multiplication, and GPU quantization are unavailable.

warn("The installed version of bitsandbytes was compiled without GPU support. "

The following models are available:

- ggml-Pygmalion-7b-4bit-Q4_1.bin

- TehVenom_Pygmalion-7b-4bit-Q4_1-GGML

Which one do you want to load? 1-2

1

INFO:Loading ggml-Pygmalion-7b-4bit-Q4_1.bin...

Traceback (most recent call last):

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\text-generation-webui\server.py", line 884, in <module>

shared.model, shared.tokenizer = load_model(shared.model_name)

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\text-generation-webui\modules\models.py", line 139, in load_model

model_file = list(Path(f'{shared.args.model_dir}/{model_name}').glob('*ggml*.bin'))[0]

IndexError: list index out of range

INFO:Gradio HTTP request redirected to localhost :)

bin C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\bitsandbytes\libbitsandbytes_cpu.dll

C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\installer_files\env\lib\site-packages\bitsandbytes\cextension.py:33: UserWarning: The installed version of bitsandbytes was compiled without GPU support. 8-bit optimizers, 8-bit multiplication, and GPU quantization are unavailable.

warn("The installed version of bitsandbytes was compiled without GPU support. "

The following models are available:

- ggml-Pygmalion-7b-4bit-Q4_1.bin

- TehVenom_Pygmalion-7b-4bit-Q4_1-GGML

Which one do you want to load? 1-2

2

INFO:Loading TehVenom_Pygmalion-7b-4bit-Q4_1-GGML...

Traceback (most recent call last):

File "C:\Users\tande\OneDrive\Documents\oobabooga_windows\oobabooga_windows\text-generation-webui\server.py", line 884, in <module>

This is what I got by doing that. @LaaZa

You changed the folder name and not the .bin file?
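The IndexError in the log is consistent with that guess: the loader line in the traceback takes element `[0]` of a glob result, which fails when the chosen entry is a folder with no matching .bin file inside. Below is a minimal sketch of that lookup with a safer fallback. The function `find_ggml_file` is hypothetical, and the pattern `*ggml*.bin` is an assumption based on the traceback line rather than a verified copy of the loader code.

```python
import tempfile
from pathlib import Path
from typing import Optional


def find_ggml_file(model_dir: Path, model_name: str) -> Optional[Path]:
    """Return the GGML .bin file for model_name, or None if there is none."""
    path = model_dir / model_name
    if path.is_file():
        return path  # the entry is the model file itself
    # Otherwise treat the entry as a folder and look for a matching file inside.
    matches = sorted(path.glob("*ggml*.bin"))
    return matches[0] if matches else None  # avoids IndexError on an empty list


with tempfile.TemporaryDirectory() as tmp:
    models = Path(tmp)
    # A folder that was renamed to look like a model file, with nothing inside.
    (models / "ggml-model.bin").mkdir()
    print(find_ggml_file(models, "ggml-model.bin"))
```

Returning `None` instead of indexing blindly lets the caller report that no model file was found, which is easier to act on than a bare "list index out of range".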

You might be upset, but now I understand what you mean by the .bin file: Pygmalion-7b-4bit-Q4_1-GGML.bin, not Pygmalion-7b-4bit-Q4_1-GGML-V2.bin, which is what I downloaded first because I thought it was a better version of Pygmalion-7b-4bit-Q4_1-GGML.bin. So I'm going to download it. @LaaZa

Also, it works now. Thanks for your understanding and help these past few days. @LaaZa