gRPC Roundtrip Latency Jitter on LVRT

I'm seeing jitter in the roundtrip latency of gRPC calls when running on LabVIEW RT. I've confirmed that the jitter does not come from my specific application (it also happens in a very simple example), and I would like to understand whether this is expected behavior and where the jitter may be coming from.

I have set up a gRPC server with a single service, which has 4 methods. Each method does practically nothing other than return the current timestamp. I then set up a loop, running in parallel with the gRPC server, that calls these 4 gRPC methods repeatedly. I'm measuring the duration of the unary client call both when calling a single method and when calling all 4 methods in parallel. In this setup, both the server and the client run under LabVIEW RT (on a PXIe-8881 controller), and the client communicates with the server over localhost (so no physical Ethernet connection is used).

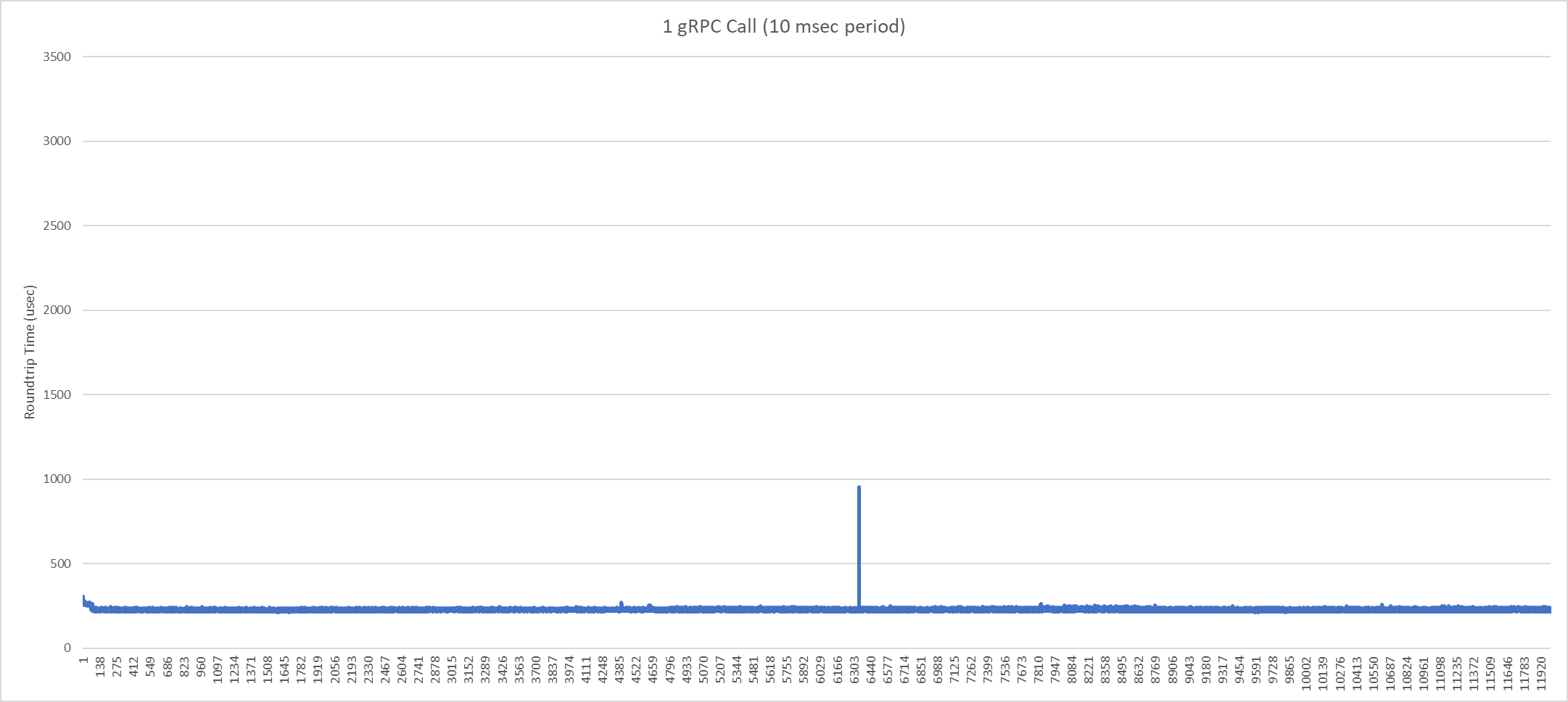
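For readers without LabVIEW, the measurement described above can be sketched in Python. This is only a rough analogue, not the code from the repository: `get_timestamp` is a hypothetical stand-in for one unary RPC (no real gRPC call is made), and `measure_roundtrips` is an illustrative helper name.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def get_timestamp() -> float:
    """Hypothetical stand-in for one unary RPC that only returns a timestamp."""
    return time.time()


def measure_roundtrips(calls, iterations, period_s):
    """Invoke all `calls` in parallel once per period; return roundtrip times in ms.

    The roundtrip time of one iteration is the time until every call has returned.
    """
    latencies_ms = []
    with ThreadPoolExecutor(max_workers=len(calls)) as pool:
        next_deadline = time.perf_counter()
        for _ in range(iterations):
            start = time.perf_counter()
            futures = [pool.submit(call) for call in calls]
            for future in futures:
                future.result()  # wait for every call to return
            latencies_ms.append((time.perf_counter() - start) * 1e3)
            # Sleep until the next period boundary so the call rate stays fixed.
            next_deadline += period_s
            time.sleep(max(0.0, next_deadline - time.perf_counter()))
    return latencies_ms


if __name__ == "__main__":
    single = measure_roundtrips([get_timestamp], iterations=100, period_s=0.010)
    parallel = measure_roundtrips([get_timestamp] * 4, iterations=100, period_s=0.010)
    print(f"1 call : max {max(single):.3f} ms")
    print(f"4 calls: max {max(parallel):.3f} ms")
```

Swapping `get_timestamp` for a real client stub call would give the same two measurements as in the graphs: one call per period, and four calls per period timed until the last one returns.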

When iterating over a single gRPC client method call, jitter is reduced, but I still see 'spikes' in the roundtrip call latency. Here is a graph for calling 1 gRPC client call every 10 msec for 2 minutes:

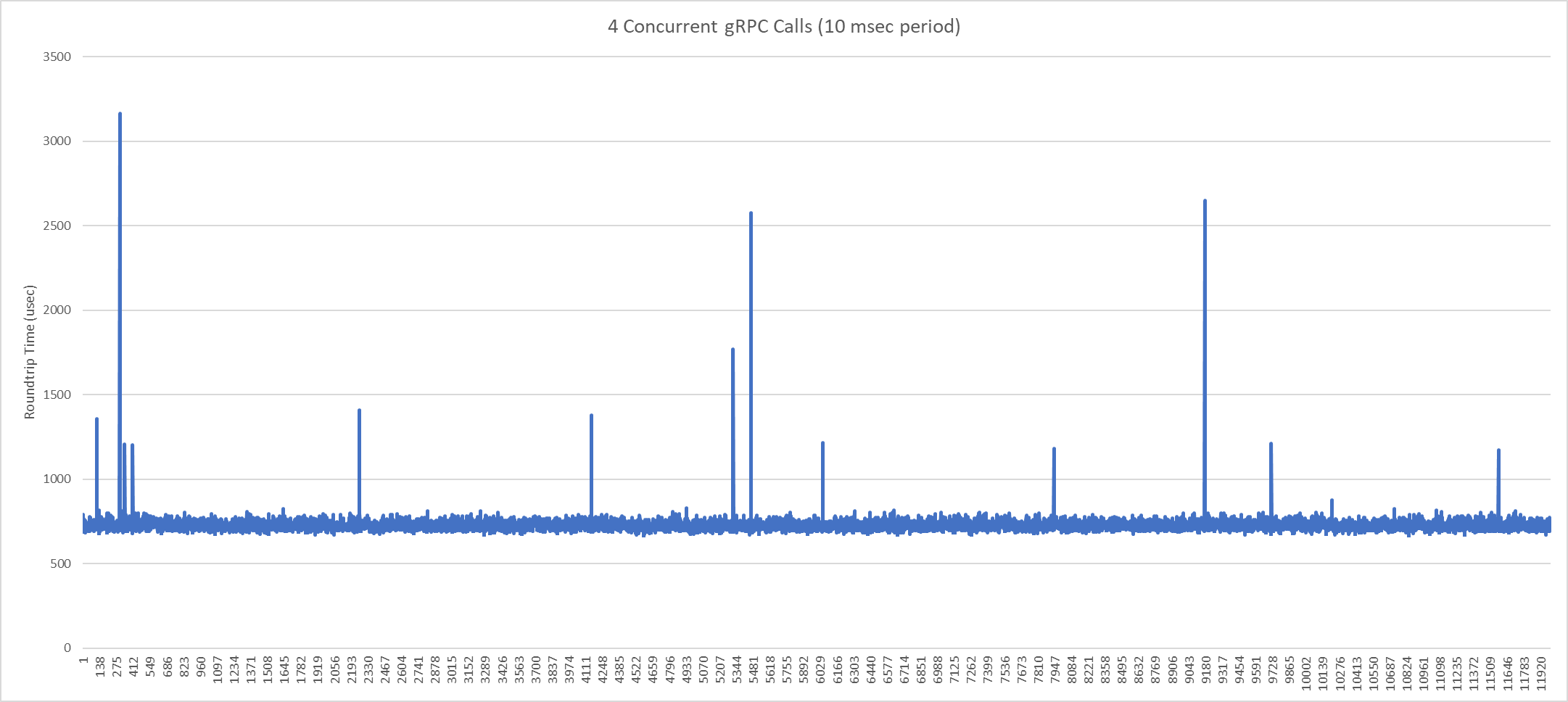

The 'spikes' are exacerbated when calling all 4 methods in parallel. In this graph, the roundtrip time is the time it takes for all 4 methods to return:

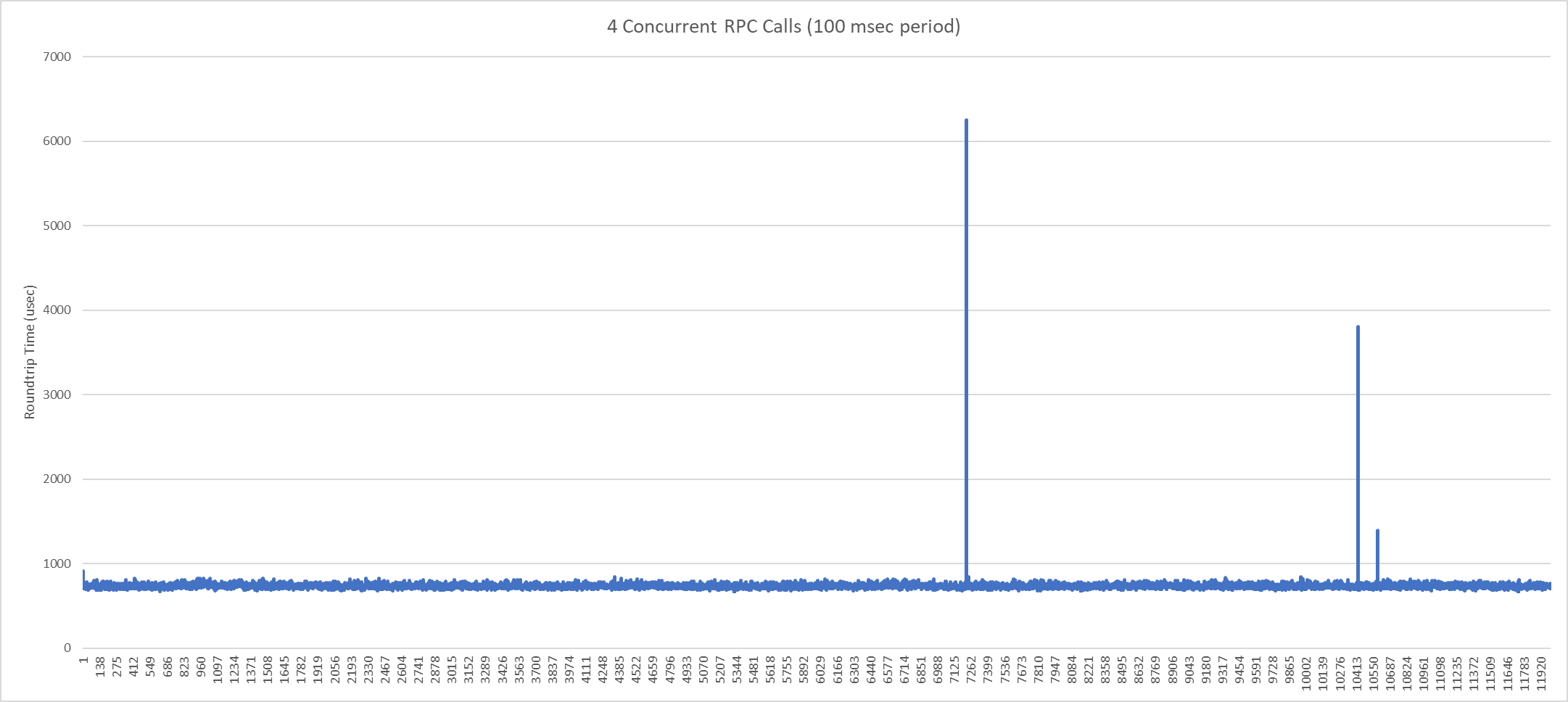
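To put numbers on the 'spikes' rather than reading them off the graphs, a logged latency series could be summarized along these lines. This is a minimal sketch: the function name `summarize_jitter` and the rule "a spike is any sample above 3x the median" are assumptions for illustration, not something taken from the test code.

```python
import statistics


def summarize_jitter(latencies_ms, spike_factor=3.0):
    """Summarize a latency series and flag samples above spike_factor * median.

    spike_factor is an arbitrary, assumed threshold; tune it to the data.
    """
    median = statistics.median(latencies_ms)
    threshold = median * spike_factor
    spikes = [(i, v) for i, v in enumerate(latencies_ms) if v > threshold]
    return {
        "median_ms": median,
        "max_ms": max(latencies_ms),
        "peak_to_peak_ms": max(latencies_ms) - min(latencies_ms),
        "spike_count": len(spikes),
        "spikes": spikes,  # (sample index, latency in ms) pairs
    }


if __name__ == "__main__":
    # Made-up sample values, only to show the call.
    print(summarize_jitter([1.0, 1.1, 0.9, 1.0, 5.0]))
```

Keeping the sample index of each spike makes it easier to line spikes up with timestamps from other logs, such as a kernel trace.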

I've also tried reducing the rate at which these commands are sent. In this graph, I'm sending 4 gRPC client calls in parallel, but at 100 msec intervals (for 20 minutes):

Since this is running on Linux RT over a localhost connection, I'd expect more deterministic results. Can you help confirm where the latency jitter is coming from?

I have uploaded all of the code that generated these results here: https://github.com/kt-jplotzke/grpc-labview-timingdemo

For reference, I am using the latest grpc-labview release, v1.0.0.1.

AB#2342032

AB#2386409

I don't have an RT target to hand, but it may be worth changing the priority and re-entrancy of the handler VIs to see if that helps. I suspect it won't, and that the jitter is more likely to be in the network stack, but it's worth a try.

I'll admit that I haven't looked deeper into this for several months, but I'm fairly confident that I was able to determine that the network stack was not to blame. I captured some kernel tracing logs while trying to pinpoint the exact moments where these "spikes" occurred. My initial findings pointed more towards jitter associated with launching async threads for the gRPC client (from this line of code). When the spikes occurred, I saw large memory management functions running in the kernel, blocking the async thread from launching for a few usec/msec.
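One way to check the thread-launch theory in isolation, without gRPC involved at all, is to time how long a freshly created thread takes to start running. The following is a rough Python sketch of that idea only; it is not the grpc-labview client code (which is not Python), and `measure_thread_launch_us` is a hypothetical helper name.

```python
import threading
import time


def measure_thread_launch_us(iterations):
    """Return, per iteration, the delay in microseconds between requesting a
    new thread and that thread executing its first statement."""
    delays_us = []
    for _ in range(iterations):
        started_at = []

        def worker():
            # First thing the new thread does: record when it began running.
            started_at.append(time.perf_counter())

        thread = threading.Thread(target=worker)
        requested_at = time.perf_counter()
        thread.start()
        thread.join()
        delays_us.append((started_at[0] - requested_at) * 1e6)
    return delays_us


if __name__ == "__main__":
    delays = measure_thread_launch_us(1000)
    print(f"min {min(delays):.1f} us, max {max(delays):.1f} us")
```

If the maximum delay shows occasional outliers of a similar size to the roundtrip spikes, that would be consistent with the launch of a new thread per call being the source, though it would not prove it.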

In the end, my main focus was creating a deterministic server (and not a client). When I decoupled testing the server from the client functions, I was able to run only the gRPC server code and get relatively stable determinism without these spikes, which makes me further believe that the spikes come from the client functions and not the server functions.

That said, I did not spend much time doing a deep investigation.