webrtc-streamer

Can we run it on a HiSilicon (海思) or Novatek (联咏) motion camera? If so, that would be very cool.

Hi zsinba,

It depends on which protocol the motion camera uses. In any case, it is possible to extend the protocols supported by the backend.

Supporting RTMP, multipart JPEG over HTTP, or another protocol requires implementing another capturer that extends rtc::VideoSourceInterface<webrtc::VideoFrame>.
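As an illustration of the first step such a capturer would need for multipart JPEG over HTTP, here is a minimal sketch that splits a received byte buffer into individual JPEG images. The function name `extractJpegFrames` is hypothetical and not part of webrtc-streamer. It assumes the multipart headers between images can simply be skipped, and that the images carry no embedded thumbnails (a nested end-of-image marker would cut a frame short). Decoding the JPEG and wrapping it in a `webrtc::VideoFrame` is left out.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper, not part of webrtc-streamer: splits a buffer holding
// several JPEG images (with arbitrary bytes in between, such as multipart
// headers) into individual frames by scanning for the JPEG start-of-image
// (FF D8) and end-of-image (FF D9) markers.
std::vector<std::vector<uint8_t>> extractJpegFrames(const std::vector<uint8_t>& data) {
    std::vector<std::vector<uint8_t>> frames;
    bool inFrame = false;
    std::size_t start = 0;
    for (std::size_t i = 0; i + 1 < data.size(); ++i) {
        if (data[i] != 0xFF) continue;
        if (!inFrame && data[i + 1] == 0xD8) {
            // Start of a new image.
            inFrame = true;
            start = i;
            ++i;  // skip the second marker byte
        } else if (inFrame && data[i + 1] == 0xD9) {
            // End of the current image: copy it, markers included.
            frames.emplace_back(data.begin() + start, data.begin() + i + 2);
            inFrame = false;
            ++i;  // skip the second marker byte
        }
    }
    // An image without its end marker is incomplete and is not returned.
    return frames;
}
```

A real capturer would keep the incomplete tail of the buffer and append the next network read to it before scanning again.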

Best Regards, Michel.

Thanks mpromonet, I am very glad to receive your reply. I haven't tried it on a motion camera yet. I have a HiSilicon (海思) development board and tried to run it there, but it didn't work. The HiSilicon board is based on the ARM architecture. As you said, there is nothing WebRTC-related on this development board. Do you have any suggestions if I want to run it on a Huawei HiSilicon (海思) board or a motion camera?

My development board doesn't have the rtc::VideoSourceInterface<webrtc::VideoFrame> interface, but I can get the binary frame data from the camera. What I would like to do is push a video stream to Jitsi from the development board.

Hi,

I just did a Google search on the HiSilicon development board; it seems to be an ARMv7 board with a JPEG camera. If the JPEG frames are available through the V4L2 interface, it should work. I test the ARMv6 and ARMv7 builds on a Raspberry Pi, so I don't know whether they run on this board. You may need to make your own build for this target.
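One way to check whether a board exposes JPEG frames through V4L2 is to enumerate the capture formats of the video device. The sketch below uses the standard `VIDIOC_ENUM_FMT` ioctl from `linux/videodev2.h`; the function names are hypothetical, and the device path (for example `/dev/video0`) is an assumption that depends on the board.

```cpp
#include <cstdint>
#include <string>
#include <vector>
#include <fcntl.h>
#include <sys/ioctl.h>
#include <unistd.h>
#include <linux/videodev2.h>

// Turns a V4L2 pixel format code into its four-character name, e.g. "MJPG".
std::string fourccToString(uint32_t fmt) {
    std::string name(4, ' ');
    for (int i = 0; i < 4; ++i) {
        name[i] = static_cast<char>((fmt >> (8 * i)) & 0xFF);
    }
    return name;
}

// Lists the capture pixel formats reported by a V4L2 device.
// Returns an empty list if the device cannot be opened.
std::vector<std::string> listCaptureFormats(const std::string& device) {
    std::vector<std::string> formats;
    int fd = open(device.c_str(), O_RDWR);
    if (fd < 0) return formats;

    v4l2_fmtdesc desc{};
    desc.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;
    // The driver returns an error once desc.index runs past the last format.
    while (ioctl(fd, VIDIOC_ENUM_FMT, &desc) == 0) {
        formats.push_back(fourccToString(desc.pixelformat));
        ++desc.index;
    }
    close(fd);
    return formats;
}
```

If the returned list contains `MJPG` or `JPEG`, the camera delivers JPEG frames over V4L2; an `H264` entry means the device can capture H264 directly.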

Best Regards, Michel.

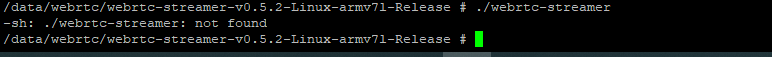

I tried to run it, but it didn't work. You don't mention the compilation process in your documentation. Is there more documentation on compilation?

It works on the Raspberry Pi; I have tried that.

Hi,

The compilation is described in the Dockerfile. For cross-compilation, you can look at https://github.com/mpromonet/webrtc-streamer/blob/master/Dockerfile.rpi or https://github.com/mpromonet/webrtc-streamer/blob/master/Dockerfile.arm64. You can start by installing the cross compiler for your target and adapting the cross-compilation variables. Depending on the result, you will then have more or less work to do.

Best Regards, Michel.

I tried to compile it under HiLinux (海思), but it didn't work out. There are too many dependencies.

Also, I tried using it on a Raspberry Pi. The image from the camera is very blurry, even when viewed on the same machine. Why is that?

Hi @zsinba

Compiling WebRTC is probably the first step.

When the image is blurry (with grey squares), the network may be dropping a lot of frames.

You could try reducing the capture resolution to see if it improves. Another possibility, if you have a V4L2 device that supports H264 capture (as raspicam does), is to use the -o option, which allows H264 capture and reduces the CPU resources needed.

Best Regards, Michel.