Set order of CV

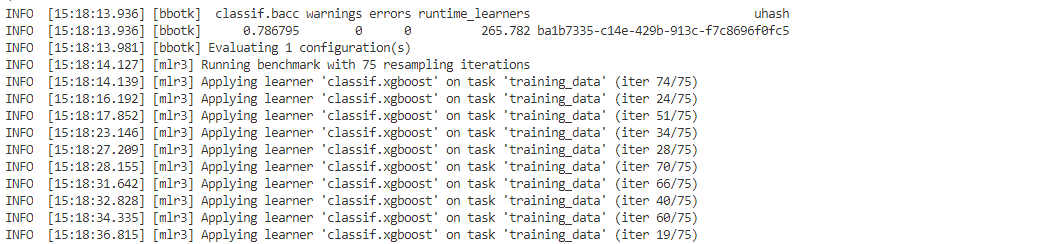

Hi, I am running a parameter optimisation using a custom resampling function. However, I noticed that when I start the tuning instance that although the tuning instance uses the cross-validations blocks that I provide, they come in a different order that I put (see image)

Here is a sample code:

# Create a task

task = tsk("penguins")

task$filter(1:50)

# Instantiate Resampling:

rc <- rsmp("custom")

rc$instantiate(traintask,

list(1:10, 21:30, 41:45),

list(11:20, 31:40, 46:50))

measure <- msr("classif.fbeta", na_value = 0)

term_evals <- trm("evals", n_evals = 20)

# Create tuning instance

xgb_learner <- lrn("classif.xgboost",

eval_metric = "error",

predict_type = "prob")

XGB_parameters <- ps(eta = p_dbl(default = 0.15, lower = 0.1, upper = 0.5))

instance <- TuningInstanceMultiCrit$new(

task = task ,

learner = xgb_learner,

resampling = rc,

measure = measure ,

search_space = XGB_parameters,

terminator = term_evals )

The image is a capture of my actual data, but it is clear that the order of the "iter" seems random rather than linear.

Please provide a reproducible example. It looks like there's a typo, the measure, learner, and task are not compatible, and you haven't defined the tuner.

This should run properly. Note that there is only three CV sets but the issue stands the same, the groups are selected in a random order (1, 3, 2 ; 2, 3, 1 ; 1, 2, 3) instead of sequentially (1, 2, 3)

library(mlr3)

library(mlr3learners)

# Create a task

task = tsk("iris")

# Instantiate Resampling:

rc <- rsmp("custom")

rc$instantiate(task,

list(1:10, 21:30, 41:45),

list(11:20, 31:40, 46:50))

measure <- msr("classif.ce")

term_evals <- trm("evals", n_evals = 10)

# Create tuning instance

learner <- lrn("classif.xgboost")

parameters <- ps(eta = p_dbl(lower = 0.001, upper = 0.1))

tuner <- tnr("random_search")

instance <- TuningInstanceMultiCrit$new(

task = task ,

learner = learner,

resampling = rc,

measure = measure ,

search_space = parameters,

terminator = term_evals )

tuner$optimize(instance)

Thanks -- internally, mlr3 uses future_mapply(), which, like the base R function mapply(), doesn't guarantee any particular order of execution.

What's the use case for needing a particular order?

The order of execution is randomized for parallelization. This affects only the log output, the result object should be stored in the same order as the custom resampling.

Admittedly, the log output is a bit confusing here. For longer, parallel calculations, I would suggest to enable progress bars.

I thought tha the order of the CV sampling affected the accuracy of predictions as described here. Due to the fact I am dealing with time series signal, I thought I need to input the samples sequentially. Is not that the case?

I think you might require a different resampling strategy alltogether in that case. I am trying to include such strategies in mlr3temporal, perhaps give it a look. It is not really finished though, so there’s a caveat in using it

OK, I am trying to implement a by work using the function tune_nested(), however, the code does not run because "custom resampling could not be implemented". Here is the change:

rc$instantiate(traintask,

list(1:10, 21:30, 41:45),

list(11:20, 31:40, 46:50))

# Now understandimg from the diagram in MLR3 book (chapter 4.3) is that the

# outer sampling is a list of index for the inner sampling lists so that they will

# be selected or at least reserved in that order. Is that correct?

r_out <- rsmp("custom")

r_out$instantiate(traintask,

list(1, 2, 3),

list(2, 3, 1))

rr <- tune_nested(

method = "random_search",

task = traintask,

learner = xgb_learner,

inner_resampling = rc,

outer_resampling = r_out,

measure = measure,

search_space = XGB_parameters,

term_evals = 20)

In general, I would be happy if I could reserve the last n rows for calculating the error measure.

PS, I noticed the mlr3temporal build status is failing.

The package is currently not actively developed as there is noone who has time

@sebffischer oh, that would be a pity as I found lots of useful tools in the package. In that case, it would be useful to mention it on the README page or at least add the "not under maintenance" badger. Hopefully, a backer will come up soon to allocate funding to the project.

@phisanti Ups I think I just spread some misinformation, looks like @pfistfl is working on it. What are the exact plans here @pfistfl ? And would you be interested in contributing @phisanti ?

Hey, I am currently trying to maintain it on a minimum effort Basis but I am willing to maintain / update things if you see need. just raise an issue and ping me if you find any problems with the current package

@phisanti Can this issue be closed? To me it looks like your question has been answered by Michel and that there's no issue in the package :)

Closed as the issue needs to be solved via supporting ordered resamplings.