Pabot got stuck even after Test timeout was mentioned in the test case.

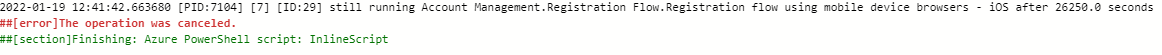

Recently we have encountered an issue where in a test case was stuck causing the automation to go forward. After looking into the logs i saw below process was running and it didnt timeout even when the Timeout condition met.

Robot Logs: /bin/sh -c robot --test 'Test1' --suite 'Test API' --include RFR --exclude exclude --variable CALLER_ID:19911a7c488a43d9b51b1e6d1e9091fa --variable PABOTLIBURI:127.0.0.1:8270 --variable PABOTEXECUTIONPOOLID:2 --variable PABOTISLASTEXECUTIONINPOOL:0 --variable PABOTNUMBEROFPROCESSES:15 --variable PABOTQUEUEINDEX:261 --variable 'Test1' --variablefile Properties/dev_env_variables.py --outputdir ./output/pabot_results/261 --loglevel INFO --log NONE --report NONE --xunit NONE --consolecolors off --consolemarkers off .

Console logs: [36mrobotframework |[0m 2021-02-01 03:41:38.131201 [PID:1089] [8] [ID:260] still running Test1 after 39990.0 seconds

The same with me. One of pabot thread will run forever even the suite under execution is quite small. I even set the Test Timeout for each testcase to 5 min. But it doesn't help

I think @mkorpela should add an option to the pabot command, such as --thread-timeout 1000 sec for example so we can stop the execution if error happens

@lynhbn same with me T.T

Experiencing exactly the same issue with Chrome browser using a Browser library. On Firefox with Browser lib all is fine. Also same tests were running fine without hanging via Selenium library for both Chrome and Firefox before migration to Browser library. What could be the reason? Test are running in parallel by testlevelsplit option. Issue occurs only on docker running with Jenkins.

@mkorpela is there any possibility to add some timeout argument to force to kill a process that took more than X seconds?

same for me

I am having the same issue. I am using RFBrowser lib for UI tests and sometimes a test just hangs for thousands of seconds. I have only noticed this happening while tests are running in a pipeline on TFS.

I have also encountered this. In my case I tried to run tests over the weekend so I ended up being stuck in this loop for over 2 days. I am using Browser library.

I located the output.xml of the test suite that got stuck and it contains <stat pass="7" fail="0" skip="0">All Tests</stat>. That test suite contains 7 tests so everything seems to have been run successfully but for some reason pabot has not gotten the signal.

Thanks everyone for reporting this!

In my experience, this only happen when your pc run out of CPU or ram, so make sure your pc is still have a lots of resources before running pabot

This is mostly happening for me in Docker container running via Jenkins. In theory there are a lot of resources, but I think you may have a right as many jobs are running over there.

That may also be because of running out of RAM.. Memory swapping is extremely slow.

Ouhhh cr*p... we have the same problem. We are using pabot in a Docker container on a Kubernetes cluster. I never thought about running out of memory :/

Is there any workaround for that? :)

Hey all, we are trying to solve this problem right now. We have contacted our team responsible for Kubernetes and each container has 10GB.

So we are searching in an other direction. Do any of you are using a listeners and pabot?

Cheers

only pabot here

Each container has 10GB of RAM 🤔 .. big containers.

We weren't using listeners when I observed this failure. However we haven't had a single instance since January. We've mostly stayed up to date with Robot Framework and Pabot stable versions.

So we are searching in an other direction. Do any of you are using a listeners and pabot?

Yes, I do. I can see the stuck problem quite frequently in out GitLab CI executions. (So the pabot runs in a docker container in my case too.) We use the following modules:

- robotframework (4.1.3)

- robotframework-pabot (2.4.0)

The command that leads to the stuck:

poetry run pabot --pabotlib --processes 6 --listener mylistener.py --outputdir ./report -T --exclude tag1 --include tag2 --skiponfailure tag3 ./tests/

any updates on this issue?

Hi @mkorpela, I'm facing the same issue recently. I'm using AWS's ECS and as far as I see it, we're far from short of memory. Are there any other things that I can look up/fix before the fix is released in Pabot?

Hello, @mkorpela . I'm facing this exact issue. Timeout is mentioned, some random TC gets stuck forever. I'm working with my org's internal APIs. My machines have more than enough memory. I'm looking out for CPU usage, the machine has 8 cores and I'm running 2 TC in parallel. Can we start an active discussion on this?

Hello all,

we had the same problem. The problem is in the handling of subprocesses in Python. Sometimes the subprocess gets stuck and no termination occurs. Due to the nature of pabot, the run continues because the process never terminates. This can be seen here:

def _wait_for_return_code(process, item_name, pool_id, item_index):

rc = None

elapsed = 0

ping_time = ping_interval = 150

while rc is None: #(endless loop if no retun code is setted by the subprocess)

rc = process.poll() #(poll the return code of the process)

time.sleep(0.1)

elapsed += 1

if elapsed == ping_time:

ping_interval += 50

ping_time += ping_interval

_write_with_id(

process,

pool_id,

item_index,

"still running %s after %s seconds" % (item_name, elapsed / 10.0),

)

return rc, elapsed / 10.0

pabot waits until the subprocess returns its code (the return code is either 0 if all tests of the process succeeded, or X for the number of failed tests).

So, it is not trivial to solve this problem. By default, Python's subprocess package cannot be easily fixed. To really fix it, another package/framework might be the way to go. To handle the current situation, a timeout that can be set as a start argument would solve most of the problems, I think (at least a hard set timeout solved the current situation in our scenario).

Hi guys! I managed to fix it on our side. It turned out that for us, a root cause was a tracing option enabled for New Context keyword. Usually using it for debugging but it was accidently enabled for regular parallel test runs. It was generating a lot of data files and reducing test run performance dramatically eating up resources. Since I disabled it (~1 month) we haven't seen this issue. Before it was occurring everyday. Hopefully it will help you too or at least guide you to some path of solution. Good luck!

Hi guys! I managed to fix it on our side. It turned out that for us, a root cause was a tracing option enabled for New Context keyword. Usually using it for debugging but it was accidently enabled for regular parallel test runs. It was generating a lot of data files and reducing test run performance dramatically eating up resources. Since I disabled it (~1 month) we haven't seen this issue. Before it was occurring everyday. Hopefully it will help you too or at least guide you to some path of solution. Good luck!

Hey man can you show me how do disable it?

Hi guys! I managed to fix it on our side. It turned out that for us, a root cause was a tracing option enabled for New Context keyword. Usually using it for debugging but it was accidently enabled for regular parallel test runs. It was generating a lot of data files and reducing test run performance dramatically eating up resources. Since I disabled it (~1 month) we haven't seen this issue. Before it was occurring everyday. Hopefully it will help you too or at least guide you to some path of solution. Good luck!

Hey man can you show me how do disable it?

It should be disabled by default, but you can check how it's on your side (if using Browser library)

New Context tracing=${NONE}

remember to use RF ${NONE} instead None as it's not converted from string to Python object. Issue details here https://github.com/MarketSquare/robotframework-browser/issues/2520

It should be disabled by default, but you can check how it's on your side (if using Browser library)

New Context tracing=${NONE}remember to use RF ${NONE} instead None as it's not converted from string to Python object. Issue details here MarketSquare/robotframework-browser#2520

We don't use this keyword anywhere, but still, face the problem.

Hi guys! I managed to fix it on our side. It turned out that for us, a root cause was a tracing option enabled for New Context keyword. Usually using it for debugging but it was accidently enabled for regular parallel test runs. It was generating a lot of data files and reducing test run performance dramatically eating up resources. Since I disabled it (~1 month) we haven't seen this issue. Before it was occurring everyday. Hopefully it will help you too or at least guide you to some path of solution. Good luck!

Hey man can you show me how do disable it?

It should be disabled by default, but you can check how it's on your side (if using Browser library)

New Context tracing=${NONE}remember to use RF ${NONE} instead None as it's not converted from string to Python object. Issue details here MarketSquare/robotframework-browser#2520

We don't use Browser library so we do not have this keyword man

@lynhbn @zzmolnar so maybe you can investigate potential places where resources being eaten up? Something that can slow down your tests and make them stuck.

The issue to be solved is that a timeout doesn't kill a stuck Test Case. Am I Right?

The issue to be solved is that a timeout doesn't kill a stuck Test Case. Am I Right?

Yes, this is the main issue

Hello all,

we had the same problem. The problem is in the handling of subprocesses in Python. Sometimes the subprocess gets stuck and no termination occurs. Due to the nature of pabot, the run continues because the process never terminates. This can be seen here:

def _wait_for_return_code(process, item_name, pool_id, item_index): rc = None elapsed = 0 ping_time = ping_interval = 150 while rc is None: #(endless loop if no retun code is setted by the subprocess) rc = process.poll() #(poll the return code of the process) time.sleep(0.1) elapsed += 1 if elapsed == ping_time: ping_interval += 50 ping_time += ping_interval _write_with_id( process, pool_id, item_index, "still running %s after %s seconds" % (item_name, elapsed / 10.0), ) return rc, elapsed / 10.0pabot waits until the subprocess returns its code (the return code is either 0 if all tests of the process succeeded, or X for the number of failed tests).

So, it is not trivial to solve this problem. By default, Python's subprocess package cannot be easily fixed. To really fix it, another package/framework might be the way to go. To handle the current situation, a timeout that can be set as a start argument would solve most of the problems, I think (at least a hard set timeout solved the current situation in our scenario).

@mkorpela Can you take a look? It's been a long time, still no solution for this one. It's quite serious issue for us