VIBE

Official implementation of the CVPR 2020 paper "VIBE: Video Inference for Human Body Pose and Shape Estimation"

Thanks for your great work! Can I run the demo with my own trained model (trained with train.py)? Naively passing the resulting `model_best.pth.tar` to `VIBE_Demo(pretrained=...)` runs into the...

Hello. Thanks for your excellent work. I wonder whether I can train with only the PoseTrack and 3DPW datasets?

Hi, I have a dataset whose keypoints differ from the standard SMPL keypoints, and I want to use it to train a network that outputs SMPL parameters. So I wonder where...

Hi, how can we calculate the translation parameters? The output file consists of pose and shape, but I can't find any translation parameters.
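Not an answer from the authors, just a minimal sketch of one common approach in HMR-style pipelines. It assumes the output also contains a weak-perspective camera of the form `[s, tx, ty]` per frame, and that a fixed focal length and a square crop size are used; the function name, `focal_length=5000.0`, and `crop_size=224.0` are all assumptions for illustration, not values confirmed by this repository.

```python
import numpy as np


def weak_perspective_to_translation(cam, focal_length=5000.0, crop_size=224.0):
    """Convert weak-perspective cameras [s, tx, ty] to translations [tx, ty, tz].

    Hypothetical helper: focal_length and crop_size are assumed defaults,
    not values taken from the VIBE code base.
    """
    cam = np.asarray(cam, dtype=np.float64)
    s, tx, ty = cam[..., 0], cam[..., 1], cam[..., 2]
    # Depth is inversely proportional to the scale: a larger scale means
    # the body fills more of the crop, i.e. it is closer to the camera.
    tz = 2.0 * focal_length / (crop_size * s + 1e-9)
    return np.stack([tx, ty, tz], axis=-1)


# Example: two frames of (assumed) camera predictions.
cams = np.array([[1.0, 0.1, -0.2],
                 [0.5, 0.0, 0.3]])
translations = weak_perspective_to_translation(cams)
```

Note that a translation obtained this way is relative to the cropped bounding box, not to the full image, so it would still need to be adjusted for the bbox position and size before being used in the original frame.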

Operating system and version: Ubuntu 16. Python version: 3.7. PyTorch version: 1.6.0. Stack trace of the error: Traceback (most recent call last): File "demo.py", line 470, in main(args)...

The problem is that the estimated camera parameters are calculated from the size of the bbox, but a larger bbox does not necessarily mean the person is closer to the camera,...

@jkamalu solved by adding the 3dpw training dataset https://github.com/mkocabas/VIBE/issues/140 _Originally posted by @jiandandian2 in https://github.com/mkocabas/VIBE/issues/168#issuecomment-759252424_

Thanks for your interest in our research! If you have problems running our code, please include: 1. Windows 10, 2. Python 3.7.10, 3. 1.4.0+cu92, 4. (venv_vibe) D:\video\3\4>python demo_alter.py...

Operating system: Windows 10. Python: 3.7.10. PyTorch: 1.5.0+cu101. Result: sample_video_vibe_result.mp4. Why is the output the same as the input video?

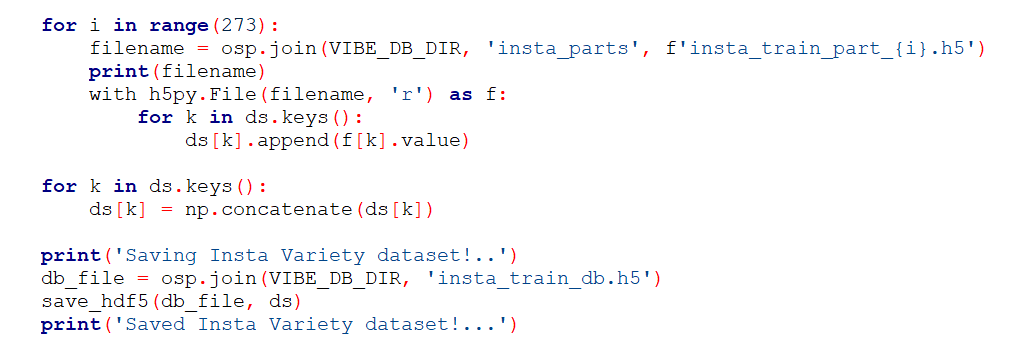

This is brilliant work! I want to start training on my computer, but I find that processing the "insta_variety_tfrecords" takes too long. As written in /lib/data_utils/insta_utils.py,...