Swin-Transformer

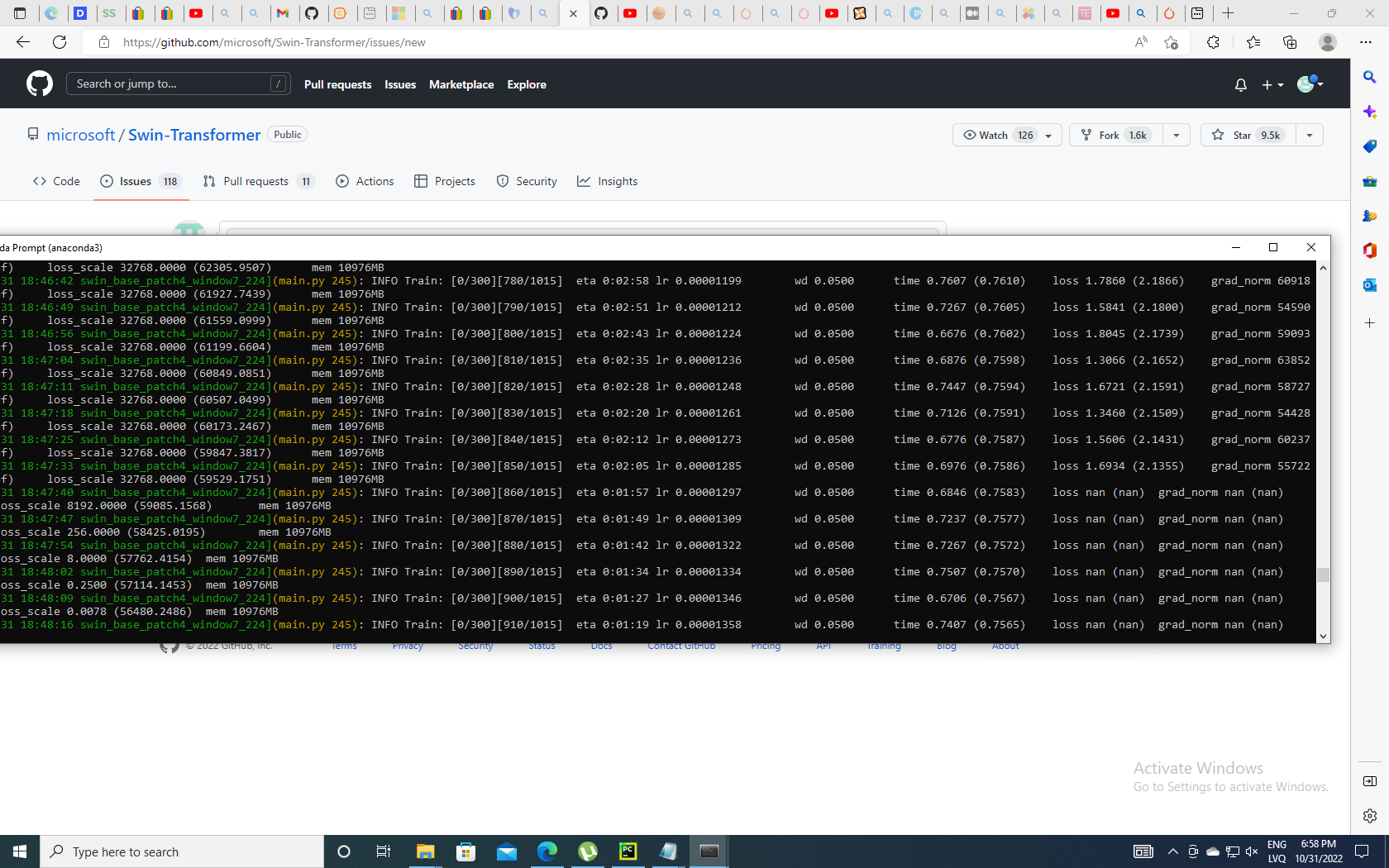

After several iterations, gradient norm and loss become NaN

Hello,

It would be nice if you could help me solve this issue. I have been trying to train the Swin Transformer model (swin_base_patch4_window7_224) on an ImageNet subset with 100 classes (I changed the MLP head's output from 1000 to 100 dimensions). However, after some iterations the gradient norm and loss become NaN. I have tried several learning rates and gradient clipping values, but the issue persists.

Best regards, Roberts

Same issue here, except I am using the default config and hyperparameters for Swin-B, training from scratch on ImageNet-1k. The gradient norm explodes within the first several epochs.

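@neurosynapse @byronyi This issue is due to AMP using the `torch.float16` dtype by default. float16 has a narrow dynamic range, so large activations or gradients can overflow to inf and then turn into NaN. Use `torch.bfloat16` instead, which keeps the exponent range of float32.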
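
For anyone landing here, a minimal sketch of what that change could look like with `torch.autocast`. This is not the repository's actual training loop: the tiny `nn.Linear` model, the `train_step` helper, and the `max_norm` value are hypothetical stand-ins, and bfloat16 on GPU assumes hardware that supports it (e.g. Ampere or newer).

```python
import torch
import torch.nn as nn

# Compare the largest representable values of the two 16-bit dtypes.
print(torch.finfo(torch.float16).max)   # 65504.0
print(torch.finfo(torch.bfloat16).max)  # roughly 3.39e38, same range as float32

device_type = "cuda" if torch.cuda.is_available() else "cpu"

# Hypothetical stand-in for the Swin model with a 100-class head.
model = nn.Linear(16, 100).to(device_type)
optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3)
criterion = nn.CrossEntropyLoss()


def train_step(images, targets, max_norm=5.0):
    """One training step with bfloat16 autocast (hypothetical helper)."""
    optimizer.zero_grad()
    # Run the forward pass in bfloat16 instead of the default float16.
    with torch.autocast(device_type=device_type, dtype=torch.bfloat16):
        logits = model(images)
    # Compute the loss in float32 for numerical stability.
    loss = criterion(logits.float(), targets)
    loss.backward()
    # clip_grad_norm_ returns the total gradient norm before clipping.
    grad_norm = torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm)
    optimizer.step()
    return loss.item(), grad_norm.item()
```

Because bfloat16 shares float32's exponent range, a loss scaler such as `GradScaler` is typically not needed here, although bfloat16 has fewer mantissa bits, so results may differ slightly from a float16 run.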