Metabase becomes inoperable, leaves too many hanging DB connections

Bugs

- Your browser and the version: tested in chrome, firefox,

- Your operating system: (e.x. OS X 10, Windows XP, etc)

- Your databases: AWS RDS PostgreSQL

- Metabase version: 0.28.0, 0.29, 0.30.0, 0.31.0RC

- Metabase hosting environment: AWS ECS

- Metabase internal database: AWS RDS PostgreSQL

Basically at some point, everyday, the queries don't return anything due to the amount of hanging connections. Status goes from "doing science" to "took too much time".

Would really appreciate some pointers or a way to tell met abase to kill DB Connections. It becomes inoperable every day - I suspect due to the amount of hanging/idle connections to the DB. As soon as I restart the container the connections to the RDS drop to 0 and the queries work again immediately.

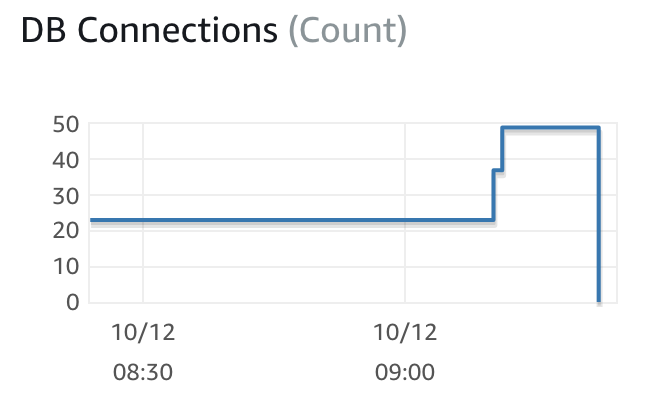

Below what happened, before 9h30 is when I restarted the container.

It would be good to test this out on the most recent version of Metabase 0.30.4 as there have been several fixes recently that I think would help prevent this issue. Although https://github.com/metabase/metabase/issues/8312 doesn't specifically say it was leaving database connections open, I think it's pretty likely that it would.

If you are still seeing this issue after upgrading, what would be most useful is a thread dump of the Metabase process when you observe this connection issue. A thread dump will capture what each thread in the JVM is doing at that exact moment. I'm not sure how you have deployment Metabase, but the easiest way to get a thread dump is to find out the PID of your Metabase process and run jstack on that PID. You can then redirect the output of that command to a file, i.e. jstack 123 >> thread-dump.txt. If you would like you can email it to me directly, my address is my first name at metabase.com.

@senior I'm seeing this issue happening even in the 31.0 RC. Isn't it supposed to incorporate all the mentioned fix from the 30.4?

@joaoferrao 0.31.0.RC1 hasn't been released yet. Are you building your own off of the release branch?

@senior that's correct.

@joaoferrao You should have the fixes then. What would be helpful would be figuring out what those connections are doing via the thread dump that I described above.

@senior I'm trying to follow your instructions but the alpine dist where metabase is included doesn't include jstack apparently and I'm trying to find a way to install it without resorting to restart the production instance. suggestions?

We're using release v0.32.9 and it also shows this problem; no active users of Metabase yet it has 195 open connections to my DB server (postgresql 11). I have metabase configured to view 350 databases; pg connection max is 200; it would be nice if unused connections could be closed.

We experience the same issue with a lower amount of databases (around 30). We are thinking of putting PgBouncer in between to handle the connections.

PgBouncer did not work for us.

We are now testing with the latest RC (0.33.0-preview2). The same thing happens. It opens a lot of connections (97 of the allowed 100 on that postgres) and keeps them open for ever. This means other processes can no longer connect to that database. For example the one that inserts the data in the first place.

That proces will run into this:

psycopg2.OperationalError: FATAL: remaining connection slots are reserved for non-replication superuser connections

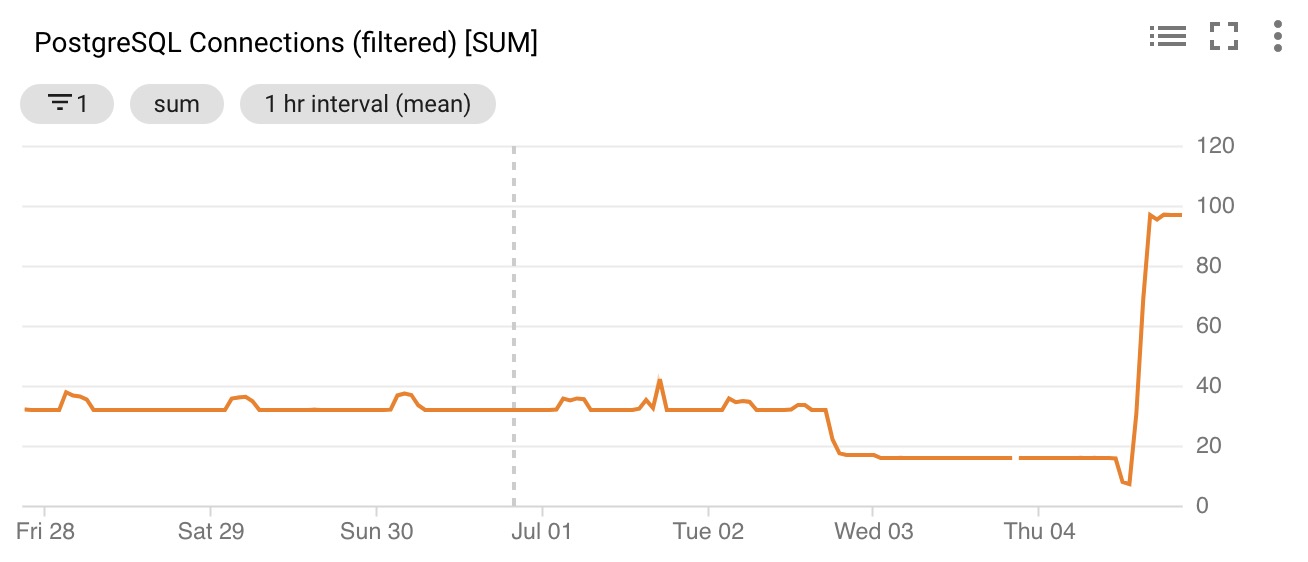

See also the open connections from before (v 0.31.2) and then the spike once we upgraded to 0.33preview2. This also happened with 0.32. We go from around 20 to 40 connections open to "max connections allowed" open. So I guess something changed. after 0.31.2 in terms of the handling of connections to the db.

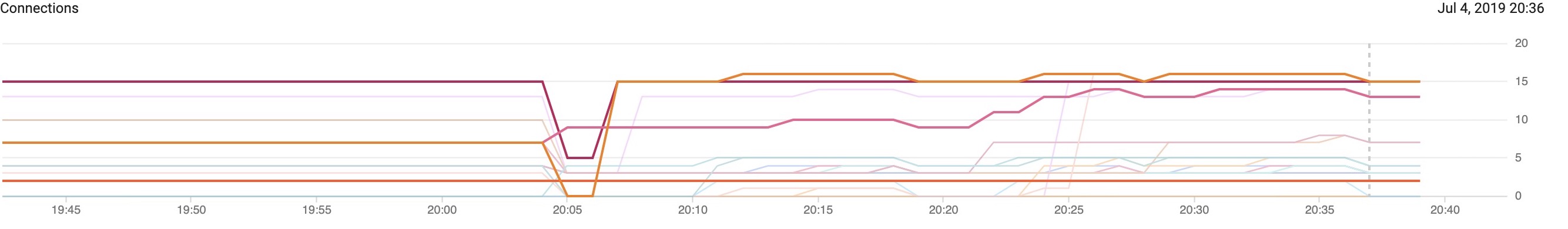

What I noticed is that each connected database seems to max out at around 15 connections per database (or user, not sure what determines the limit). That would mean that you need at least number of databases x 15 connections + some extra to keep the database accessible for other processes.

I now upgraded to 200 allowed connections and while using Metabase those also slowly get filled up.

In the case of @djbusby that would mean a whopping 15 * 350 in the worst case. Hopefully somebody can come up with a good solution: something like "close connections after x amount of time not active".

@emetselaar I don't know if it will help or cause more problems, so please use with caution. The 15 connections per database is a current default, but with PR #9788, it looks like you can send parameter that changes the maximum pool size of c3p0 - example 10 connections:

java -Dc3p0.maxPoolSize=10 -jar metabase.jar

You might want to look at the c3p0 section about Managing Pool Size and Connection Age, since you might be able to close idle connections.

Thanks @flamber - I will try it out tomorrow. Now that I know where to look I see that the maxIdleTime is set at 3 hours, but the idleConnectionTestPeriod at 3 minutes is probably preventing the maxIdleTime to be ever hit. So the connections are never discarded?

Update: I could not get it to work so I updated the number of allowed open connections to the database.

However, I think that the inconsistency between maxIdleTime and idleConnectionTestPeriod looks like a bug. I do not understand the intention so it could be by design, but if that is the case then maxIdleTime does not have to be there at all right?

from /metabase/src/metabase/driver/sql_jdbc/connection.clj

(def ^:private data-warehouse-connection-pool-properties

"c3p0 connection pool properties for connected data warehouse DBs. See

https://www.mchange.com/projects/c3p0/#configuration_properties for descriptions of properties."

{"maxIdleTime" (* 3 60 60)

"minPoolSize" 1

"initialPoolSize" 1

"maxPoolSize" 15

;; prevent broken connections closed by dbs by testing them every 3 mins

"idleConnectionTestPeriod" (* 3 60)

;; prevent overly large pools by condensing them when connections are idle for 15m+

"maxIdleTimeExcessConnections" (* 15 60)})

Facing the same issue with AWS RDS MySQL. @emetselaar Were you able to resolve it anyhow?

Facing the same issue with AWS RDS MySQL. @emetselaar Were you able to resolve it anyhow?

I worked around this by increasing the amount of allowed connections on the database itself. Not ideal, but for my purposes it works.

I am also encountering long running queries/connections, thought I would post them in case it's helpful:

Just now

analytics

SELECT "core_session"."created_at", "core_session"."user_id", "core_user"."is_superuser" FROM "core_session" LEFT JOIN "core_user" ON "core_session"."user_id" = "core_user"."id" WHERE ("core_user"."is_active" = TRUE AND "core_session"."id" = $1) LIMIT 1

344737.12ms

Just now

analytics

SHOW TRANSACTION ISOLATION LEVEL

344736.97ms

Just now

analytics

SELECT "core_session"."created_at", "core_session"."user_id", "core_user"."is_superuser" FROM "core_session" LEFT JOIN "core_user" ON "core_session"."user_id" = "core_user"."id" WHERE ("core_user"."is_active" = TRUE AND "core_session"."id" = $1) LIMIT 1

344081.78ms

Just now

analytics

SELECT "pulse_channel"."id", "pulse_channel"."pulse_id", "pulse_channel"."schedule_type", "pulse_channel"."channel_type" FROM "pulse_channel" WHERE ("enabled" = TRUE AND ("schedule_type" = $1 OR ("schedule_type" = $2 AND "schedule_hour" = 8) OR ("schedule_type" = $3 AND "schedule_hour" = 8 AND "schedule_day" = $4) OR ("schedule_type" = $5 AND "schedule_hour" = 8 AND "schedule_frame" = $6 AND ("schedule_day" = $7 OR "schedule_day" = $8))))

12703.728ms

Fixed by #11832

Hi all. I'm still experiencing the above problem. Metabase keeps taking up more and more connection slots until nothing is able to connect. Is this regression known?

@Mapiarz Please post "Diagnostic Info" from Admin > Troubleshooting, and which database you're seeing this with. And logs from Admin > Troubleshooting > Logs. And any process/connection stats from your database, which could be helpful.

Here's diagonostic info:

{

"browser-info": {

"language": "en-US",

"platform": "Linux x86_64",

"userAgent": "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:81.0) Gecko/20100101 Firefox/81.0",

"vendor": ""

},

"system-info": {

"file.encoding": "UTF-8",

"java.runtime.name": "OpenJDK Runtime Environment",

"java.runtime.version": "11.0.7+10",

"java.vendor": "AdoptOpenJDK",

"java.vendor.url": "https://adoptopenjdk.net/",

"java.version": "11.0.7",

"java.vm.name": "OpenJDK 64-Bit Server VM",

"java.vm.version": "11.0.7+10",

"os.name": "Linux",

"os.version": "4.14.186-146.268.amzn2.x86_64",

"user.language": "en",

"user.timezone": "GMT"

},

"metabase-info": {

"databases": [

"mongo",

"googleanalytics",

"postgres"

],

"hosting-env": "unknown",

"application-database": "postgres",

"application-database-details": {

"database": {

"name": "PostgreSQL",

"version": "11.7"

},

"jdbc-driver": {

"name": "PostgreSQL JDBC Driver",

"version": "42.2.8"

}

},

"run-mode": "prod",

"version": {

"date": "2020-09-21",

"tag": "v1.36.6.1",

"branch": "enterprise-release-1.36.x",

"hash": "5695e2b"

},

"settings": {

"report-timezone": "US/Eastern"

}

}

}

Here are the final moments of my psql server before becoming full unresponsive:

2020-10-14 02:00:14.508 UTC [31786] analysis@merged-watchdog STATEMENT: -- Metabase

SELECT "public"."rest_framework_api_key_apikey"."hashed_key" AS "hashed_key", "public"."rest_framework_api_key_apikey"."name" AS "name", "public"."rest_framework_api_key_apikey"."created" AS "created", "pu

blic"."rest_framework_api_key_apikey"."expiry_date" AS "expiry_date", "public"."rest_framework_api_key_apikey"."revoked" AS "revoked", "public"."rest_framework_api_key_apikey"."prefix" AS "prefix" FROM "public"."r

est_framework_api_key_apikey" LIMIT 10000

2020-10-14 02:00:14.601 UTC [31786] analysis@merged-watchdog ERROR: permission denied for relation rest_hooks_hook

2020-10-14 02:00:14.601 UTC [31786] analysis@merged-watchdog STATEMENT: -- Metabase

SELECT "public"."rest_hooks_hook"."target" AS "target", "public"."rest_hooks_hook"."event" AS "event", "public"."rest_hooks_hook"."created" AS "created", "public"."rest_hooks_hook"."updated" AS "updated",

"public"."rest_hooks_hook"."user_id" AS "user_id" FROM "public"."rest_hooks_hook" LIMIT 10000

2020-10-14 02:05:03.440 UTC [31785] analysis@merged-watchdog LOG: could not receive data from client: Connection reset by peer

2020-10-14 02:05:03.440 UTC [31786] analysis@merged-watchdog LOG: could not receive data from client: Connection reset by peer

2020-10-14 03:00:15.030 UTC [16489] analysis@merged-watchdog ERROR: permission denied for relation rest_framework_api_key_apikey

2020-10-14 03:00:15.030 UTC [16489] analysis@merged-watchdog STATEMENT: -- Metabase

SELECT "public"."rest_framework_api_key_apikey"."hashed_key" AS "hashed_key", "public"."rest_framework_api_key_apikey"."name" AS "name", "public"."rest_framework_api_key_apikey"."created" AS "created", "pu

blic"."rest_framework_api_key_apikey"."expiry_date" AS "expiry_date", "public"."rest_framework_api_key_apikey"."revoked" AS "revoked", "public"."rest_framework_api_key_apikey"."prefix" AS "prefix" FROM "public"."r

est_framework_api_key_apikey" LIMIT 10000

2020-10-14 03:00:15.131 UTC [16489] analysis@merged-watchdog ERROR: permission denied for relation rest_hooks_hook

2020-10-14 03:00:15.131 UTC [16489] analysis@merged-watchdog STATEMENT: -- Metabase

SELECT "public"."rest_hooks_hook"."target" AS "target", "public"."rest_hooks_hook"."event" AS "event", "public"."rest_hooks_hook"."created" AS "created", "public"."rest_hooks_hook"."updated" AS "updated",

"public"."rest_hooks_hook"."user_id" AS "user_id" FROM "public"."rest_hooks_hook" LIMIT 10000

2020-10-14 03:39:50.685 UTC [18135] analysis@merged-watchdog LOG: could not receive data from client: Connection reset by peer

2020-10-14 04:00:12.359 UTC [6674] analysis@merged-watchdog ERROR: permission denied for relation rest_framework_api_key_apikey

2020-10-14 04:00:12.359 UTC [6674] analysis@merged-watchdog STATEMENT: -- Metabase

SELECT "public"."rest_framework_api_key_apikey"."hashed_key" AS "hashed_key", "public"."rest_framework_api_key_apikey"."name" AS "name", "public"."rest_framework_api_key_apikey"."created" AS "created", "pu

blic"."rest_framework_api_key_apikey"."expiry_date" AS "expiry_date", "public"."rest_framework_api_key_apikey"."revoked" AS "revoked", "public"."rest_framework_api_key_apikey"."prefix" AS "prefix" FROM "public"."r

est_framework_api_key_apikey" LIMIT 10000

2020-10-14 04:00:12.437 UTC [6674] analysis@merged-watchdog ERROR: permission denied for relation rest_hooks_hook

2020-10-14 04:00:12.437 UTC [6674] analysis@merged-watchdog STATEMENT: -- Metabase

SELECT "public"."rest_hooks_hook"."target" AS "target", "public"."rest_hooks_hook"."event" AS "event", "public"."rest_hooks_hook"."created" AS "created", "public"."rest_hooks_hook"."updated" AS "updated",

"public"."rest_hooks_hook"."user_id" AS "user_id" FROM "public"."rest_hooks_hook" LIMIT 10000

2020-10-14 04:06:28.072 UTC [6674] analysis@merged-watchdog LOG: could not receive data from client: Connection reset by peer

2020-10-14 04:24:09.483 UTC [9994] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:09.501 UTC [9993] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:09.501 UTC [9992] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:09.577 UTC [9997] analysis@merged-watchdog FATAL: sorry, too many clients already

2020-10-14 04:24:09.581 UTC [9996] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:09.583 UTC [9995] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:09.596 UTC [9998] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:09.608 UTC [9999] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.641 UTC [10007] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.650 UTC [10005] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.656 UTC [10006] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.675 UTC [10004] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.689 UTC [10003] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.691 UTC [10002] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.799 UTC [10009] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:10.801 UTC [10008] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.707 UTC [10012] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.711 UTC [10011] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.718 UTC [10010] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.750 UTC [10013] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.761 UTC [10015] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.767 UTC [10014] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.855 UTC [10017] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:11.858 UTC [10016] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.764 UTC [10018] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.772 UTC [10020] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.775 UTC [10019] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.801 UTC [10022] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.811 UTC [10023] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.816 UTC [10024] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.917 UTC [10025] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:12.919 UTC [10026] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.816 UTC [10027] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.825 UTC [10029] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.826 UTC [10028] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.869 UTC [10031] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.872 UTC [10032] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.878 UTC [10030] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.979 UTC [10033] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:13.986 UTC [10034] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:14.889 UTC [10035] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:14.893 UTC [10036] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:14.894 UTC [10037] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:14.927 UTC [10040] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:14.927 UTC [10038] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:14.928 UTC [10039] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:15.040 UTC [10043] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:15.042 UTC [10041] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

2020-10-14 04:24:15.946 UTC [10047] analysis@merged-watchdog FATAL: remaining connection slots are reserved for non-replication superuser connections

The metabase logs seem to only be available for the last hour or so, so I will have to wait until metabase eats up all my connections again. Is there another way @flamber ?

@Mapiarz You should use the support email, when using the Enterprise Edition. Also, the latest release is 1.36.7 I don't know which database you're showing logs from, but I'm guessing it's Postgres. Are you using pgbouncer or similar? How many connections does the database allow? How many connections is Metabase configured to use? I don't know how you're hosting Metabase, but it looks like EC2, but I don't know if you're using JAR or Docker. The Metabase logs, should indicate if connections are open, and if they are queuing.

@flamber We are not self-hosting Metabase, we are using the Metabase Cloud Starter plan. As for other questions:

- Yes, sorry, I'm talking about Postgres

- No pgbouncer or equivalent

- Postgres configured for 100 connections

- No idea how the Cloud hosted Metabase is configured

@Mapiarz Okay, very helpful information. Are you using SSH tunnel?

Still the log will show details about threads, connections, queues etc like:

2020-10-15T15:58:19+02:00 DEBUG metabase.middleware.log POST /api/card/1471/query 202 [ASYNC: completed] 537.3 ms (26 DB calls) App DB connections: 1/13 Jetty threads: 2/50 (8 idle, 0 queued) (151 total active threads) Queries in flight: 1 (0 queued)

It looks like it's running out of connections hourly, which is the default sync-process, and that makes me think that the previous sync somehow doesn't complete.

But it should only consume 15 connections max - unless you have configured multiple database in Admin > Databases, since each configured here can handle up to 15 connections simultaneously.

@flamber Some answers:

- Not using SSH tunnel

- Just 1 postgres DB configured

- The default sync process does not seem to make Postgres run out of available connection slots if we are not actively using metabase, the number of idle connections is not growing

- We've ran out of available connection slots after my co-worker does some analysis in Metabase.

I've just opened up metabase and opened one of our dashboards with a bunch of custom questions and the number of idle connections to Postgres grew to 33. The more questions/dashboards I open, the more connections open up. It seems that whenever I open something new, the number of connections grows, but when I navigate away (or even close the Metabase tab) the connections are not closed. Surely that's not the intended behaviour? The good news is that I now know how to reproduce the behaviour so I'm sure we'll get to the bottom of this.

Here's a log I just grabbed from the Metabase admin panel: https://dpaste.org/Dc4X/slim (it's long so I've pasted it elsewhere).

@Mapiarz Very useful information. Thank you! I'm not sure if this is specific to Metabase Cloud or not, but we'll look through this and figure out a way to reproduce and fix the issue. Reopening this issue for now.

EDIT: Someone reported a different, but related problem with Redshift: https://discourse.metabase.com/t/redshift-bombarded-by-timed-out-query/13230

@flamber Thank you and lmk if you need anything else to reproduce.

@flamber Hi. We experienced this again last evening. Nothing changed on our end config wise since the last time. Is this issue still being investigated?

@Marpairz are you hitting connection limits on your application database or a data warehouse?

The more questions/dashboards I open, the more connections open up. It seems that whenever I open something new, the number of connections grows, but when I navigate away (or even close the Metabase tab) the connections are not closed. Surely that's not the intended behaviour?

It is intended behavior. We use connection pooling so we don't have the overhead of creating new connections every time you run queries.

Connections should stay in the pool for a while, but as @flamber mentioned you shouldn't have more than 15 connections per data warehouse per Metabase instance by default, because that's the max connection pool size we configure (unless you set MB_JDBC_DATA_WAREHOUSE_MAX_CONNECTION_POOL_SIZE to something different).

We don't currently set a max pool size by default for the application database connection pool, although you can set one by specifying the env var MB_APPLICATION_DB_MAX_CONNECTION_POOL_SIZE. At any rate, this number probably won't get much higher than 50 or so because that's the default size of the Jetty HTTP request threadpool. We'd only hit that number if you had 50 open requests that were all hitting the application DB at the exact same time -- possible but unlikely since app DB requests tend to be pretty quick and most long-running requests spend the majority of their time on running data warehouse queries.

@camsaul Talking with @Mapiarz via ticket 4075. There's more things going on and it isn't closing idle connections.

to the application database or the data warehouse?

it isn't closing idle connections

It's not supposed to. That's the point of a connection pool. Right now we don't close out idle ones for 3 hours.

https://github.com/metabase/metabase/blob/master/src/metabase/driver/sql_jdbc/connection.clj#L50-L51