About YOLOv6-L

I am reproducing YOLOv6-L. With the default settings (width multiplier 1.0, depth multiplier 1.0), its inference time is roughly twice that of YOLOv5-L and YOLOv7, and its FLOPs are about four times as high. Is this result expected?


I think you should compare it with YOLOX-L. In addition, it seems that your YOLOX/YOLOv6 implementation is not complete.

You are right: in my YOLOX, the depth ratio also controls the number of convolutions in the decoupled head. I just want to check whether a width ratio and depth ratio of 1.0 in YOLOv6 should produce the FLOPs and parameter counts shown in the figure. Also, why do you think the YOLOv6 implementation is incorrect? I borrowed the entire backbone, neck, and head from this repo.


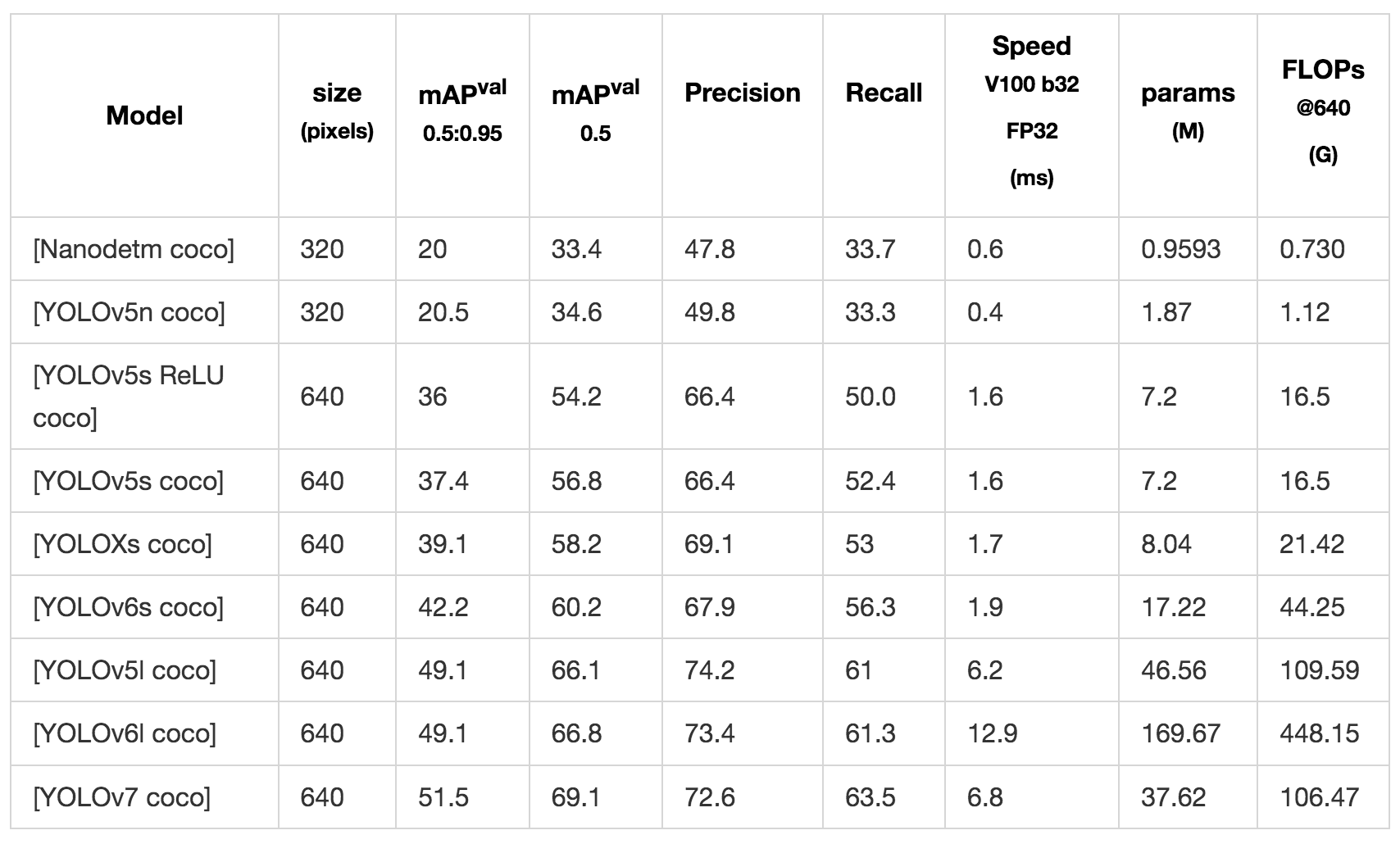
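A rough way to sanity-check the FLOPs/params question above is to see how the width and depth multipliers propagate. The sketch below assumes a YOLOv5-style scaling rule (channel counts multiplied by the width multiplier and rounded up to a multiple of 8; block repeats multiplied by the depth multiplier, keeping at least one); YOLOv6's own config handling may differ. Because a k x k convolution has `k*k*c_in*c_out` weights, scaling both channel counts by `w` scales its parameters and FLOPs by roughly `w**2`, so a 2x mismatch in the effective width alone would give about 4x FLOPs. The stage list `TOY_STAGES` and the helper names are hypothetical and illustrative only, not the real YOLOv6-L architecture.

```python
import math

# Hypothetical toy stages: (base_out_channels, base_repeats, feature_map_size).
# Illustrative numbers only; this is NOT the real YOLOv6-L configuration.
TOY_STAGES = [(64, 1, 160), (128, 3, 80), (256, 6, 40), (512, 3, 20)]


def make_divisible(x: float, divisor: int = 8) -> int:
    """Round a scaled channel count up to the nearest multiple of `divisor`."""
    return int(math.ceil(x / divisor) * divisor)


def scaled_repeats(n: int, depth_mult: float) -> int:
    """Scale the number of repeated blocks, keeping at least one (YOLOv5-style rule, assumed)."""
    return max(round(n * depth_mult), 1) if n > 1 else n


def conv_cost(c_in: int, c_out: int, k: int, h: int, w: int) -> tuple[int, int]:
    """Params and FLOPs of one k x k, stride-1 conv without bias (1 multiply-add = 2 FLOPs)."""
    params = k * k * c_in * c_out
    flops = 2 * params * h * w
    return params, flops


def toy_model_cost(width_mult: float, depth_mult: float,
                   stages=TOY_STAGES, c_in: int = 32) -> tuple[int, int]:
    """Sum params/FLOPs over a toy stack of 3x3 convs under the given multipliers."""
    total_params = total_flops = 0
    c_prev = make_divisible(c_in * width_mult)
    for base_c, base_n, size in stages:
        c_out = make_divisible(base_c * width_mult)
        n = scaled_repeats(base_n, depth_mult)
        # One 3x3 conv entering the stage, then n repeated 3x3 convs at c_out channels.
        layers = [(c_prev, c_out)] + [(c_out, c_out)] * n
        for ci, co in layers:
            p, f = conv_cost(ci, co, 3, size, size)
            total_params += p
            total_flops += f
        c_prev = c_out
    return total_params, total_flops


if __name__ == "__main__":
    p_full, f_full = toy_model_cost(1.0, 1.0)
    p_half, f_half = toy_model_cost(0.5, 1.0)
    print("params ratio (w=1.0 vs w=0.5):", p_full / p_half)  # 4.0 for this toy stack
    print("FLOPs ratio  (w=1.0 vs w=0.5):", f_full / f_half)  # 4.0 for this toy stack
```

In this toy stack every halved channel count is already a multiple of 8, so the ratio is exactly 4; with real configs the rounding in `make_divisible` and any width-independent layers make it only approximately quadratic. Comparing the scaled channel counts printed by your own model builder against the reference repo is a cheaper check than comparing end-to-end FLOPs.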

Your YOLOv6-S only got 42.2 mAP? Or was it trained for only 300 epochs? And your YOLOX-S got just 39.1...

@BowieHsu Thanks for your attention! We'll release large models for YOLOv6. The results shown in your table seem quite different from ours.

Our work started from reproducing YOLOv5 on a more flexible training framework. After matching the AP, parameter count, and FLOPs of the GitHub version, we trained the newly added YOLOv6/YOLOv7 network structures with the same hyperparameters. The goal was a controlled comparison: change only the architecture and measure how much the YOLOv6/YOLOv7 structures help relative to YOLOv5. As a result, our reproduced numbers differ somewhat from the open-source versions, but what we care about is whether there is an improvement. We have absolutely no intention of disparaging the YOLOv6/YOLOv7 work.


Thank you for your reply. We are only trying to verify the stability of our internally developed training framework by reproducing valuable open-source work, so it is inevitable that our reported metrics differ from yours. We look forward to further discussion.