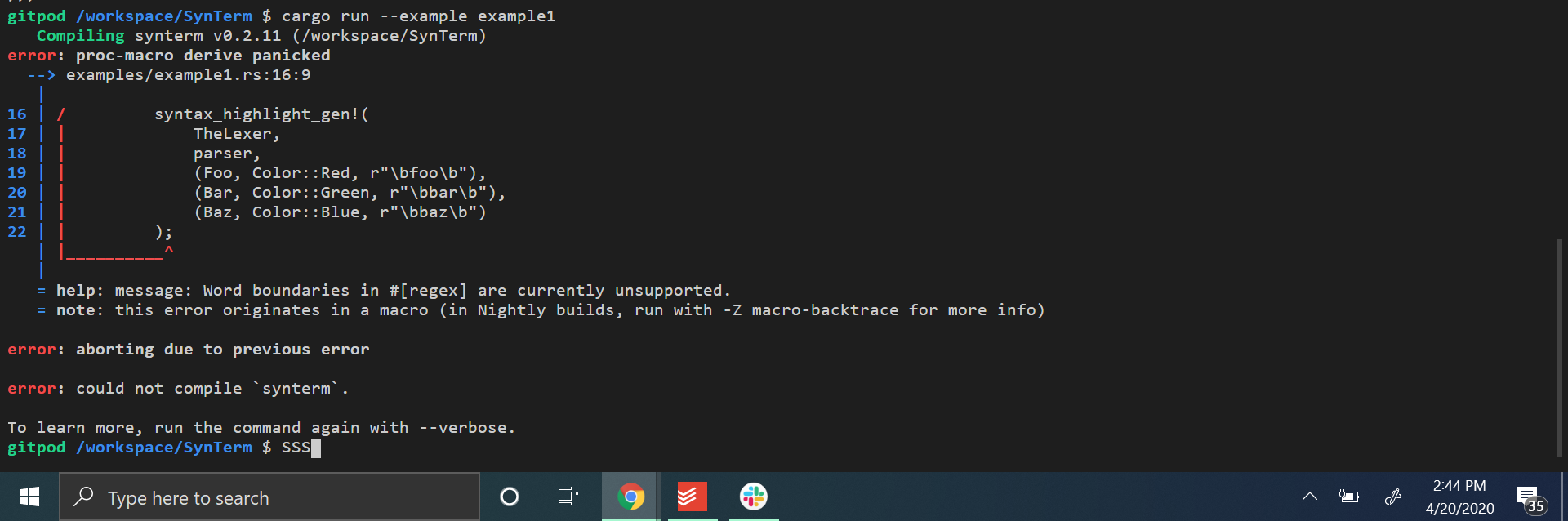

Support for word boundaries

When writing a regex using word boundaries, I get this:

Is there any plan to support something like this?

Possibly, at least at the tail position, but it's far down on my list. Word boundaries are a bit of a performance footgun since they need to either backtrack or look ahead, and AFAIU, like \w, they would have to expand into a full Unicode matching graph for all Unicode alphabets, which tends to bloat the state machine.

What is it that you are syntax highlighting there that you need them? I'm using Logos to do syntax highlighting on my blog without any issues.

Edit: in case it's not clear, if you have two definitions, one for [a-z]+ and one for foo, the input abfoocd will always match [a-z]+, while foo will only match the literal foo.
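To make the rule above concrete, here is a minimal hand-written sketch of longest-match selection between the two definitions. It does not use Logos at all; `Kind`, `match_ident`, `match_foo`, and `next_token` are hypothetical names for illustration, and the tie-break in favor of the literal is an assumption modeled on the behavior described in the reply.

```rust
#[derive(Debug, PartialEq, Clone, Copy)]
enum Kind {
    Foo,   // the literal "foo"
    Ident, // the pattern [a-z]+
}

/// Length of the longest prefix of `input` matching `[a-z]+` (0 if none).
fn match_ident(input: &str) -> usize {
    input.bytes().take_while(|b| b.is_ascii_lowercase()).count()
}

/// Length of the literal `foo` if `input` starts with it (0 otherwise).
fn match_foo(input: &str) -> usize {
    if input.starts_with("foo") { 3 } else { 0 }
}

/// Pick the rule with the longest match; on a tie the literal wins
/// (assumed tie-break, see the note above).
fn next_token(input: &str) -> Option<(Kind, &str)> {
    let ident = match_ident(input);
    let foo = match_foo(input);
    if ident == 0 && foo == 0 {
        return None; // neither rule matches at this position
    }
    if foo >= ident {
        Some((Kind::Foo, &input[..foo]))
    } else {
        Some((Kind::Ident, &input[..ident]))
    }
}

fn main() {
    for input in ["abfoocd", "foo", "foobar"] {
        println!("{:?} -> {:?}", input, next_token(input));
    }
}
```

Because the longer match wins, an input such as foobar is consumed whole by the [a-z]+ rule, which is why a catch-all identifier rule can stand in for word boundaries in many highlighting cases.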

I'm trying to highlight keywords.

Typically I would write:

\bkeyword\b

Right now keywordfoo highlights keyword, and it should not highlight at all.
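For reference, the behavior expected from `\bkeyword\b` can be sketched in plain Rust without any regex engine. This is only an illustration under two assumptions: word characters are limited to ASCII letters, digits, and `_`, and the keyword itself consists of word characters. `is_word_byte` and `whole_word_matches` are hypothetical helper names.

```rust
/// ASCII approximation of the regex class `\w` (an assumption of this sketch).
fn is_word_byte(b: u8) -> bool {
    b.is_ascii_alphanumeric() || b == b'_'
}

/// Byte offsets where `keyword` occurs as a whole word in `text`,
/// i.e. with no word character directly before or after it.
fn whole_word_matches(text: &str, keyword: &str) -> Vec<usize> {
    let bytes = text.as_bytes();
    text.match_indices(keyword)
        .filter(|&(start, m)| {
            let end = start + m.len();
            // A boundary exists at the edge of the text or next to a non-word byte.
            let before_ok = start == 0 || !is_word_byte(bytes[start - 1]);
            let after_ok = end == bytes.len() || !is_word_byte(bytes[end]);
            before_ok && after_ok
        })
        .map(|(start, _)| start)
        .collect()
}

fn main() {
    println!("{:?}", whole_word_matches("keywordfoo", "keyword"));
    println!("{:?}", whole_word_matches("a keyword here", "keyword"));
}
```

The check after the match is the look-ahead step that word boundaries require, which is the cost mentioned in the reply above.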

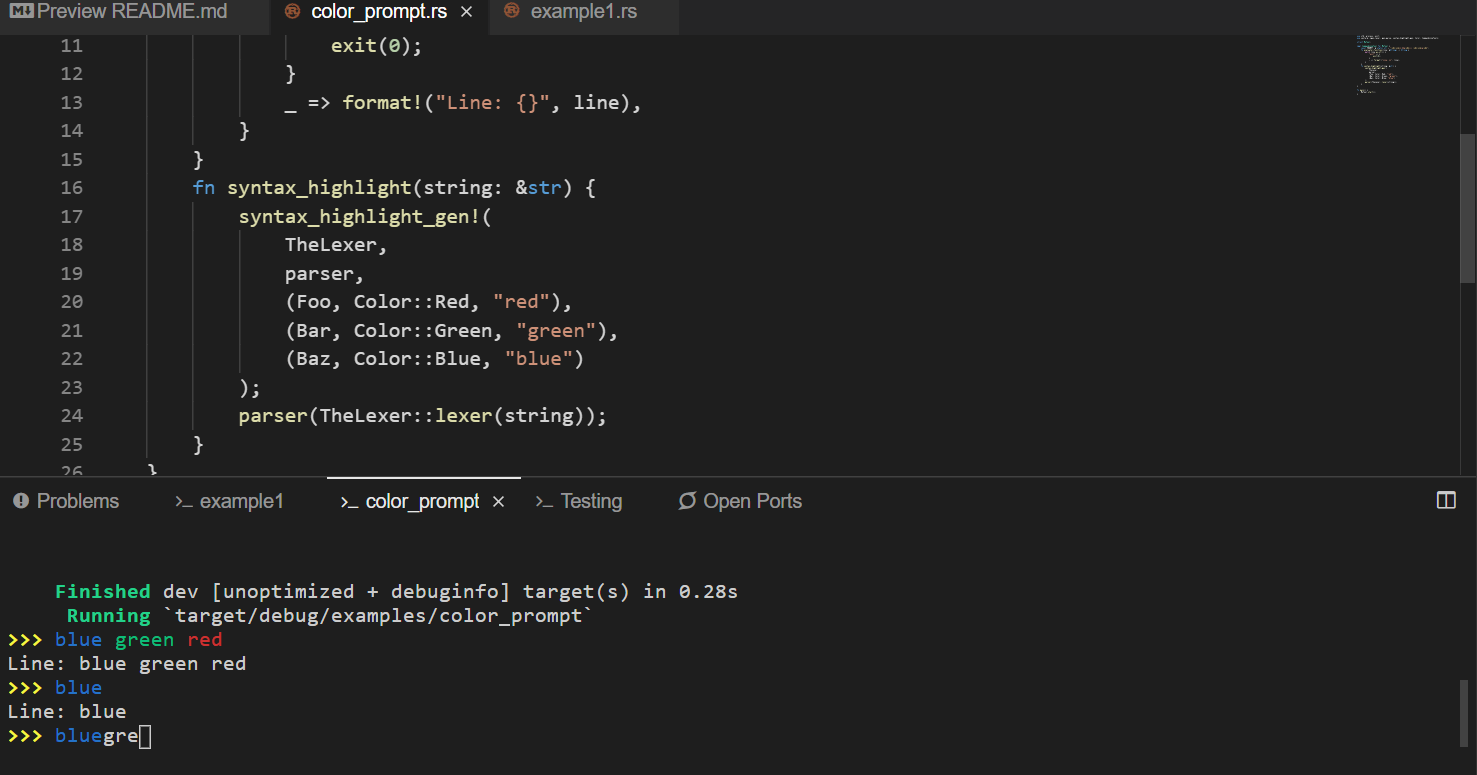

EDIT: To demonstrate

OK, I read the blog and added a new regex.

FYI, the lexer expands to the following (using cargo expand):

```rust
enum TheLexer {
    #[end]
    End,
    #[error]
    Error,
    #[token = " "]
    Whitespace,
    #[regex = "red"]
    Red,
    #[regex = "green"]
    Green,
    #[regex = "blue"]
    Blue,
    #[regex = "[a-zA-Z0-9_$]+"]
    NoHighlight,
}
```

This fixes a lot, thanks! However, there are still a few more issues. Here is the current state of highlighting.

Why do you think redf isn't invalidated?

That looks like a bug! Let me check this out.

@JesterOrNot you'll need to upgrade to 0.11; this has already been fixed.

I've added a test for this, and it's passing.

How would I translate this:

while tokens.token != $enumName::End

to 0.11?

That is, how can I check the current token? Before, I would use tokens.token.

Lexer is an iterator now. You could wrap it in peekable, though in this case you don't need to check since there is no end token anymore; you can just do:

```rust
for token in lexer {
    // ...
}

// Or, if you want to keep using the lexer:
while let Some(token) = lexer.next() {
    // ...
}
```
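For the cases where checking the upcoming token really is needed, the peekable approach mentioned above could look roughly like the sketch below. It uses a plain `Vec` of stand-in tokens rather than a real Logos lexer, and `Token` and `skip_leading` are hypothetical names. One caveat worth keeping in mind: `Peekable` does not expose the wrapped iterator, so lexer methods such as `slice()` would not be reachable through the adaptor.

```rust
use std::iter::Peekable;

// Stand-in token type for this sketch; a real lexer enum would be derived with Logos.
#[derive(Debug, PartialEq, Clone, Copy)]
enum Token {
    Whitespace,
    Red,
}

/// Consume leading tokens equal to `skip` and return how many were dropped.
/// `peek` inspects the next token without consuming it.
fn skip_leading<I>(tokens: &mut Peekable<I>, skip: Token) -> usize
where
    I: Iterator<Item = Token>,
{
    let mut dropped = 0;
    while tokens.peek() == Some(&skip) {
        tokens.next();
        dropped += 1;
    }
    dropped
}

fn main() {
    let stream = vec![Token::Whitespace, Token::Whitespace, Token::Red];
    let mut tokens = stream.into_iter().peekable();

    let dropped = skip_leading(&mut tokens, Token::Whitespace);
    println!("dropped {} tokens, next is {:?}", dropped, tokens.peek());
}
```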

If you post a link to the code you are having trouble with, I can help out with upgrading. If there is something missing in the API that would make things more ergonomic for more use cases, it would also be good to know :)

https://github.com/JesterOrNot/SynTerm I have been using Logos as a compile-to target for a lexer generator generator; the idea is to easily add syntax highlighting for any REPL or shell. I've been using macros to generate the lexer struct.

Yeah, so for 0.11 you change this:

```rust
while tokens.token != $enumName::End {
    match tokens.token {
        $(
            $enumName::$token => print!("\x1b[{}m{}\x1b[m", $ansi, tokens.slice()),
        )*
        _ => print!("{}", tokens.slice())
    }
    tokens.advance();
}
```

To this:

```rust
while let Some(token) = tokens.next() {
    match token {
        $(
            $enumName::$token => print!("\x1b[{}m{}\x1b[m", $ansi, tokens.slice()),
        )*
        _ => print!("{}", tokens.slice())
    }
}
```

And remove the end variant, it's no longer necessary since next returns Option<YourEnum>:

```rust
#[end]
End,
```

Edit: also, your function signature should now be just:

fn $funcName(mut tokens: Lexer<$enumName>) {

The release notes for 0.11 might be helpful too. There are a lot of breaking changes in this version, which I'm sorry for, but it should hopefully be the last version that makes so many sweeping API changes.

Thank you so much for all the help :)

Thanks for using Logos :)