Run stereo.launch.py but pointcloud2 fps is very low

I used the default demo and ran stereo.launch.py, but the PointCloud2 frame rate in RViz looks very low, at about one frame per second.

Can I know which device is used here and what host ?

The device is an OAK-D and the host is a laptop computer.

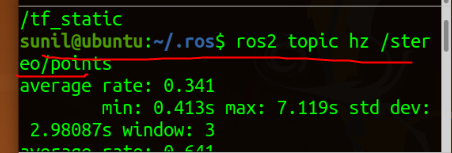

OK. Can you run a rostopic hz test on the depth message and point cloud topics and share a screenshot with us, please?

I would also suggest switching to stereo_inertial_node, since it has a lot of improvements.
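On ROS 2 the counterpart of rostopic hz is `ros2 topic hz <topic>`. As a rough illustration of what the reported figure means, the sketch below computes an average rate from message arrival times. The timestamps are hypothetical and the function name is made up for this example; it is not the tool's actual implementation.

```python
from typing import Sequence


def average_rate_hz(arrival_times_s: Sequence[float]) -> float:
    """Return the mean message rate in Hz from arrival timestamps in seconds."""
    if len(arrival_times_s) < 2:
        raise ValueError("need at least two timestamps to measure a rate")
    elapsed = arrival_times_s[-1] - arrival_times_s[0]
    if elapsed <= 0:
        raise ValueError("last timestamp must be later than the first")
    # N timestamps span N - 1 intervals.
    return (len(arrival_times_s) - 1) / elapsed


if __name__ == "__main__":
    # Hypothetical arrival times: one point cloud message per second.
    stamps = [0.0, 1.0, 2.0, 3.0, 4.0]
    print(f"{average_rate_hz(stamps):.2f} Hz")
```

Comparing this figure for the depth topic and the point cloud topic helps show whether the slowdown is in the camera pipeline or in transporting the larger message.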

This is using stereo_inertial_node, with a screenshot.

What about the depth image frame rate? And just to cross-confirm: the repo is up to date, right?

Another thing you could share is resource consumption using top, if that's okay.

And just to double-check: this is an OAK-D, not an OAK-D-POE?

1.

2. Yes

3.

4. OAK-D

Thanks for the details. Can I know which ROS version this is?

The ROS version is ROS 2 Galactic.

From stereo.launch.py, I don't see an issue on mine.

Things to check:

- DDS connection
- Bandwidth available

I have tried many times and it is still the same. How should I check it?

@daxoft may be able to advise.

I haven't been able to reproduce the bug, do you have at your disposal another camera and/or host? Does the same issue occur with other examples as well?

I have tried other computers as well. It is worth noting that they are all running Ubuntu 20 on VMware.

Thanks, the VM might affect it; I will try it on VMware as well. It might not matter too much, but are you running VMware from Linux or Windows? And does the host VM have ROS 2 installed as well?

1. On Windows.

2. Yes.

Is this using a reliable publisher? If so, you might be facing issues when the point cloud is sufficiently big.

Another thing: what RMW do you use? If it's Galactic, the default is CycloneDDS, and I have observed that without configuration CycloneDDS may sometimes try to multicast large messages.

By default, depth_image_proc uses the Best Effort policy. There are a lot of parameters that influence the frame rate of such big messages in ROS, such as DDS configuration, network speed, and some internal Linux configs. We'll probably add some DDS configurations to the examples, but for now you can check out https://docs.ros.org/en/humble/How-To-Guides/DDS-tuning.html
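To get a feel for why such messages are sensitive to network speed, here is a back-of-the-envelope estimate in Python. All numbers are assumptions for illustration, not values confirmed in this thread: a dense 1280x720 cloud, 16 bytes per point, a 30 fps target, and a 1 Gbit/s link, with protocol overhead ignored.

```python
def pointcloud_bytes(width: int, height: int, point_step: int) -> int:
    """Payload size of one dense, organized point cloud message in bytes."""
    return width * height * point_step


def required_mbit_per_s(message_bytes: int, fps: float) -> float:
    """Throughput needed to carry messages of this size at the given rate."""
    return message_bytes * 8 * fps / 1e6


def max_fps(message_bytes: int, link_mbit_per_s: float) -> float:
    """Upper bound on the message rate a link can carry, ignoring overhead."""
    return link_mbit_per_s * 1e6 / (message_bytes * 8)


if __name__ == "__main__":
    # Assumed values: 1280x720 points, 16 bytes per point (x, y, z as float32 plus padding).
    size = pointcloud_bytes(1280, 720, 16)
    print(f"message size: {size / 1e6:.1f} MB")
    print(f"needed for 30 fps: {required_mbit_per_s(size, 30):.0f} Mbit/s")
    print(f"ceiling on a 1 Gbit/s link: {max_fps(size, 1000):.1f} fps")
```

Under these assumptions a single cloud is about 14.7 MB, so even a gigabit link tops out well below 30 fps for an off-machine subscriber. A virtualized or wireless network adapter would lower that ceiling further.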

FYI, I just ran into this issue last night with my OAK-D Pro W over USB 3. Dell laptop running Ubuntu 20.04.5 LTS (GNU/Linux 5.15.0-56-generic x86_64), ROS 2 Galactic, CycloneDDS. I switched to RMW_IMPLEMENTATION=rmw_fastrtps_cpp and the problem disappeared.

Edit: I should clarify: I see this only when another machine subscribes to /stereo/points. If there are no off-machine subscribers, this does not occur with CycloneDDS.

If you want to restrict CycloneDDS to only use multicast for discovery (and not for message transmission) you can set your config xml to something like this:

    <?xml version="1.0" encoding="UTF-8" ?>
    <CycloneDDS xmlns="https://cdds.io/config" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="https://cdds.io/config https://raw.githubusercontent.com/eclipse-cyclonedds/cyclonedds/iceoryx/etc/cyclonedds.xsd">
      <Domain id="any">
        <General>
          <AllowMulticast>spdp</AllowMulticast>
        </General>
      </Domain>
    </CycloneDDS>

export CYCLONEDDS_URI=file:///absolute/path/to/config_file.xml

This has helped us with large messages such as point clouds in our CycloneDDS setup.
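A typo in the XML or a relative path in CYCLONEDDS_URI is easy to miss, so a small check before launching can help. The sketch below is one possible way to do it with the Python standard library. It uses a trimmed copy of the config above (without the schema location attributes), and the file name and helper names are hypothetical.

```python
import tempfile
import xml.etree.ElementTree as ET
from pathlib import Path

# Trimmed copy of the config from this thread.
CONFIG_XML = """<?xml version="1.0" encoding="UTF-8" ?>
<CycloneDDS xmlns="https://cdds.io/config">
  <Domain id="any">
    <General>
      <AllowMulticast>spdp</AllowMulticast>
    </General>
  </Domain>
</CycloneDDS>
"""

NS = {"cdds": "https://cdds.io/config"}


def allow_multicast_setting(xml_text: str) -> str:
    """Parse the config and return the AllowMulticast value."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    node = root.find("cdds:Domain/cdds:General/cdds:AllowMulticast", NS)
    if node is None or node.text is None:
        raise ValueError("AllowMulticast is not set in this config")
    return node.text.strip()


def write_config(directory: Path, name: str = "cyclonedds_config.xml") -> Path:
    """Write the config into the directory and return its absolute path."""
    path = (directory / name).resolve()
    path.write_text(CONFIG_XML, encoding="utf-8")
    return path


def cyclonedds_uri(path: Path) -> str:
    """Build the file:// URI form expected in CYCLONEDDS_URI."""
    return path.resolve().as_uri()


if __name__ == "__main__":
    config_path = write_config(Path(tempfile.mkdtemp()))
    print("AllowMulticast:", allow_multicast_setting(CONFIG_XML))
    print(f"export CYCLONEDDS_URI={cyclonedds_uri(config_path)}")
```

The printed export line can be pasted into the shell that launches the nodes. It only takes effect for processes started after the variable is set.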

Closing due to inactivity, please reopen if there are still questions.