langflow

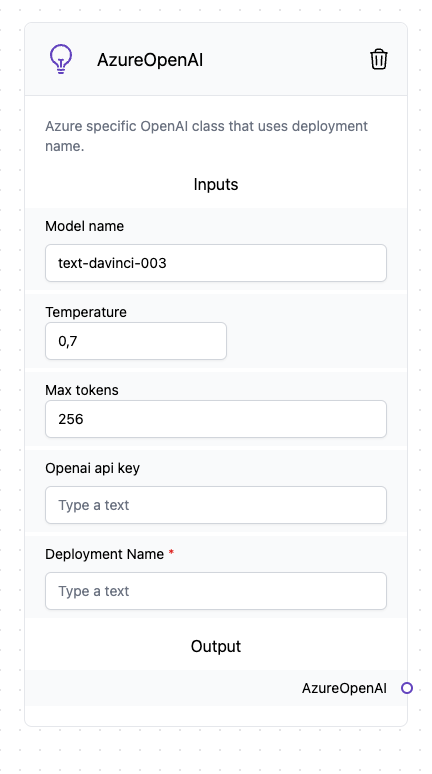

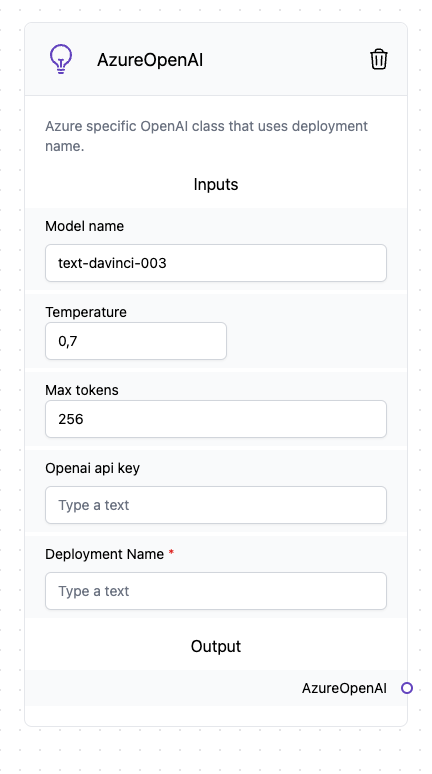

Support AzureOpenAI configuration

The AzureOpenAI LLM is functionally identical to the OpenAI LLM but requires different parameters.

It would be great to be able to use this configuration!

Hey, we are adding it in our next release. It might need some more testing, but it should work.

Just FYI, this would also make it possible to use any llama.cpp-compatible model (LLaMA, Alpaca, GPT4All, etc.) via https://github.com/abetlen/llama-cpp-python#web-server

This looks very interesting. I have started working on it. It requires a few extra parameters besides the API key. In the case of llama-cpp-python, is it exactly the same as in the docs?

In LangFlow, if you don't pass the API key, LangChain will still try to find it in the environment. However, according to the example in the docs, there are a few more variables you need to set to be able to use Azure OpenAI. What is your opinion on that?

Are those variables normally set in the environment of the average Azure user?

The `deployment_name` param is now really simple to add, but we must have a clear template of the node and how it is used.

What do you think of this approach?
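For context on why `deployment_name` is needed at all: Azure OpenAI selects the model through a deployment name in the request path, and every request carries an `api-version` query parameter. A minimal sketch of the resulting URL, assuming Azure's REST path shape `/openai/deployments/{deployment}/completions`; `azure_completions_url` is a hypothetical helper (not part of LangFlow or LangChain), and the resource name, deployment name and version string are placeholders.

```python
from urllib.parse import urlencode


def azure_completions_url(api_base: str, deployment_name: str, api_version: str) -> str:
    """Build an Azure OpenAI completions URL (hypothetical helper).

    Azure selects the model through the deployment name in the path, and
    the API version is passed as a query parameter on every request.
    """
    base = api_base.rstrip("/")
    query = urlencode({"api-version": api_version})
    return f"{base}/openai/deployments/{deployment_name}/completions?{query}"


# Placeholder resource, deployment and version values.
print(azure_completions_url("https://my-resource.openai.azure.com/", "my-davinci", "2022-12-01"))
# https://my-resource.openai.azure.com/openai/deployments/my-davinci/completions?api-version=2022-12-01
```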

@ogabrielluiz Whoops, after looking into it a little deeper, it looks like this was actually already possible with the regular OpenAI LLM. I missed that you could just change the `OPENAI_API_BASE` environment variable. This is actually preferable, as the Azure endpoints seem to be using an older version of the OpenAI API.

Anyways, sorry for the confusion. Great tool btw!
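Why changing a single environment variable is enough: an OpenAI-compatible client builds each request URL relative to a base URL, so overriding that base reroutes every call. A minimal sketch of that resolution, assuming the client falls back to `https://api.openai.com/v1` and that the llama-cpp-python server is reachable at `http://localhost:8000/v1` (check its README for the actual host and port); `resolve_api_base` and `completions_url` are hypothetical helpers.

```python
import os
from typing import Mapping, Optional

# Assumed default base URL when no override is set.
DEFAULT_API_BASE = "https://api.openai.com/v1"


def resolve_api_base(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the base URL, preferring an OPENAI_API_BASE override."""
    env = os.environ if env is None else env
    return env.get("OPENAI_API_BASE", DEFAULT_API_BASE).rstrip("/")


def completions_url(env: Optional[Mapping[str, str]] = None) -> str:
    """Completion requests are built relative to the resolved base URL."""
    return f"{resolve_api_base(env)}/completions"


# Pointing the base at a local OpenAI-compatible server reroutes every request.
print(completions_url({"OPENAI_API_BASE": "http://localhost:8000/v1"}))
# http://localhost:8000/v1/completions
print(completions_url({}))
# https://api.openai.com/v1/completions
```

This only works for servers that mirror OpenAI's paths; Azure's paths also include a deployment name and an `api-version`, which is why it needs extra parameters.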

Oh, nice!

Maybe we could put that functionality into the OpenAI node to allow the person to choose.

I've been thinking about dynamic nodes that change depending on params. Maybe this would be a good use case to explore.
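One way the dynamic-node idea could look: the fields a node renders are chosen from the selected API type. This is a hypothetical sketch; the field names are illustrative, and the `open_ai`/`azure` type values are assumed to mirror the `OPENAI_API_TYPE` values used by the pre-1.0 `openai` Python package. It is not LangFlow's actual node template format.

```python
from typing import Dict, List

# Fields shown for every backend (hypothetical names).
COMMON_FIELDS: List[str] = ["openai_api_key", "temperature"]

# Which extra fields to render for each assumed OPENAI_API_TYPE value.
FIELDS_BY_API_TYPE: Dict[str, List[str]] = {
    "open_ai": COMMON_FIELDS + ["model_name", "openai_api_base"],
    "azure": COMMON_FIELDS + ["deployment_name", "openai_api_base", "openai_api_version"],
}


def node_fields(api_type: str) -> List[str]:
    """Return a fresh list of fields to render for the selected API type."""
    if api_type not in FIELDS_BY_API_TYPE:
        raise ValueError(f"unsupported api_type: {api_type!r}")
    return list(FIELDS_BY_API_TYPE[api_type])


print(node_fields("azure"))
# ['openai_api_key', 'temperature', 'deployment_name', 'openai_api_base', 'openai_api_version']
```

Returning a copy means a caller that edits its field list cannot accidentally change the shared template.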

Anyway, with what you described, is it possible to use your project?

Yes, it should. I'm trying to make it as interoperable as possible with existing tools that are already built against the OpenAI API.

Currently, switching between models doesn't actually do anything, as the server only supports a single llama.cpp model loaded at a time. I'm working on allowing the user to provide aliases, e.g. `gpt-3.5-turbo` -> `llama-7b` or `text-davinci-003` -> `llama-30b`.

What do you think of this approach?
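The aliasing idea described above amounts to a lookup applied before a request is dispatched to the loaded model. A minimal sketch, assuming unknown names pass through unchanged; `MODEL_ALIASES` and `resolve_model` are hypothetical and do not reflect llama-cpp-python's actual configuration format. The two mappings are the examples given in the comment.

```python
from typing import Mapping

# Hypothetical alias table: model names that existing OpenAI clients request,
# mapped to the local llama.cpp models that should answer them.
MODEL_ALIASES: Mapping[str, str] = {
    "gpt-3.5-turbo": "llama-7b",
    "text-davinci-003": "llama-30b",
}


def resolve_model(requested: str, aliases: Mapping[str, str] = MODEL_ALIASES) -> str:
    """Map a requested model name to a local one; unknown names pass through."""
    return aliases.get(requested, requested)


print(resolve_model("gpt-3.5-turbo"))  # llama-7b
print(resolve_model("llama-13b"))      # llama-13b
```

Passing unknown names through lets a client request a local model by its own name; a stricter server could reject unmapped names instead.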

Nice, but right now the OpenAI node takes `OPENAI_API_KEY`. Azure OpenAI requires 4 values: `OPENAI_API_TYPE`, `OPENAI_API_KEY`, `OPENAI_API_BASE` and `OPENAI_API_VERSION`. The user should also be able to provide them.

In addition, we'd also need an Azure OpenAI Embedding node.
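A minimal sketch of how a node could accept the four Azure values, assuming values typed into the node take precedence and the environment is the fallback (matching how LangChain falls back to the environment for the API key, as noted earlier in the thread). `resolve_azure_settings` is hypothetical, not LangFlow's implementation; the key, endpoint and version are placeholders.

```python
import os
from typing import Dict, List, Mapping, Optional

# The four settings Azure OpenAI needs, as listed in the comment above.
AZURE_SETTINGS = ("OPENAI_API_TYPE", "OPENAI_API_KEY", "OPENAI_API_BASE", "OPENAI_API_VERSION")


def resolve_azure_settings(
    node_values: Mapping[str, str],
    env: Optional[Mapping[str, str]] = None,
) -> Dict[str, str]:
    """Prefer values typed into the node; fall back to the environment.

    Raises ValueError listing every setting that is still missing, so the
    user sees all gaps at once instead of one at a time.
    """
    env = os.environ if env is None else env
    resolved: Dict[str, str] = {}
    missing: List[str] = []
    for name in AZURE_SETTINGS:
        value = node_values.get(name) or env.get(name)
        if value:
            resolved[name] = value
        else:
            missing.append(name)
    if missing:
        raise ValueError("missing Azure OpenAI settings: " + ", ".join(missing))
    return resolved


settings = resolve_azure_settings(
    {"OPENAI_API_KEY": "<your-key>"},  # typed into the node
    env={
        "OPENAI_API_TYPE": "azure",
        "OPENAI_API_BASE": "https://my-resource.openai.azure.com/",
        "OPENAI_API_VERSION": "2022-12-01",
    },
)
print(sorted(settings))
# ['OPENAI_API_BASE', 'OPENAI_API_KEY', 'OPENAI_API_TYPE', 'OPENAI_API_VERSION']
```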

I got an Azure OpenAI account and can test if necessary. Is the code already written? Do you need help?

Same here, I have an Azure account and can help with testing.

On Tue, 18 Apr 2023, 20:03, Michel Hua @.***> wrote:

I got an Azure OpenAI account and can test if necessary. Is the code already written? Do you need help?


Me too!

Just bumping this one; I feel it would enable a lot of users. And indeed, I can also help test.

bump!

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.