shut down k8s.gcr.io bigquery analysis

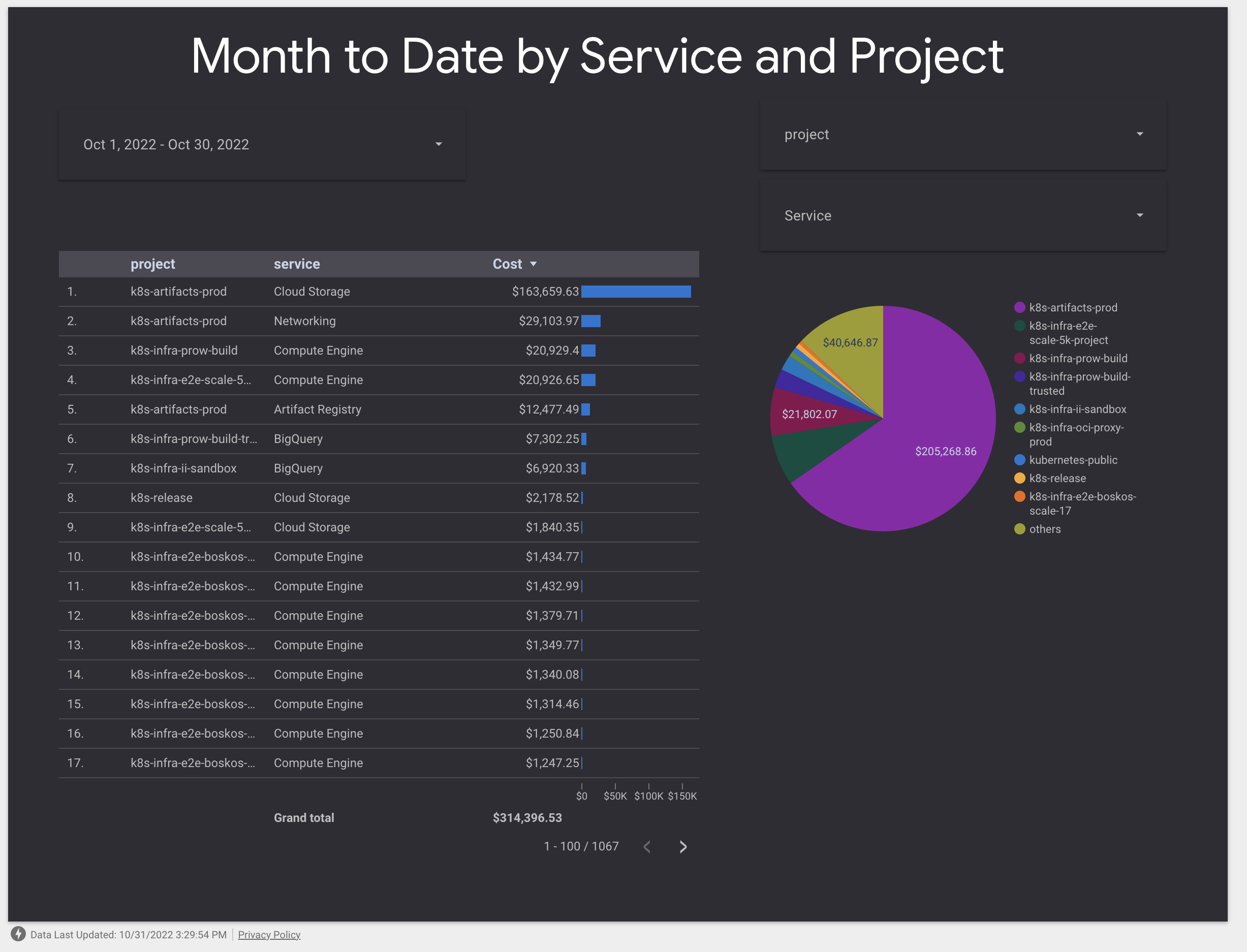

We already have all the existing historical data, yet this is still costing ~$7k/mo: $6,920.33 / $314,396.53 * 100 = 2.2% of our spend (see k8s-infra-ii-sandbox, BigQuery, #7 in the billing report).
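As a quick sanity check on that percentage, here is a minimal sketch in Python. The helper name `share_of_spend` is made up for illustration; the two dollar figures are the ones quoted above from the billing report.

```python
def share_of_spend(line_item: float, total: float) -> float:
    """Return a line item as a percentage of total spend."""
    if total <= 0:
        raise ValueError("total must be positive")
    return line_item / total * 100


# Figures quoted above from the billing report.
bigquery_cost = 6920.33
total_spend = 314396.53

# Prints the BigQuery share rounded to one decimal place.
print(f"{share_of_spend(bigquery_cost, total_spend):.1f}% of spend")  # 2.2% of spend
```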

Also, the historical data is more valuable, because it covers 100% of traffic, whereas now some traffic goes to registry.k8s.io.

We should immediately disable these jobs.

~~IIRC we already requested this earlier this year multiple times including at the SIG meeting, but it still appears to be spending $$$, so let's turn these off now?~~

EDIT: per https://github.com/kubernetes/k8s.io/issues/4402#issuecomment-1297814983 this is storage cost, not processing? Even so, this is quite a noticeable charge, so let's grab the high-level info and then purge this cost.

https://datastudio.google.com/c/u/0/reporting/14UWSuqD5ef9E4LnsCD9uJWTPv8MHOA3e/page/8fWn

/assign

The cost is related to active storage, not processing or querying, but I agree with the idea of reducing resource consumption.

I'll clean up the project in the coming weeks.

@hh @Riaankl this data is transferable to another GCP project (owned by ii or CNCF). Please take a look at other options.

Can we grab the high-level results, if we haven't already, and then remove this dataset? This is #7 on our spend, costing about as much as analyzing CI logs for error clusters.

Cleanup was done. One dataset is remaining.

ameukam@cloudshell:~ (k8s-infra-ii-sandbox)$ gcloud alpha bq datasets list --project k8s-infra-ii-sandbox

ID: k8s-infra-ii-sandbox:etl_script_generated_set

LOCATION: US

We should see the BQ-related cost go down over the next few days.

Yes, I just deleted all the historical datasets, keeping only the latest set, which feeds the Data Studio report. If new data is needed, we can generate it.

thank you!!

This is done, one report data set remains. /close

This is now costing very little, at $6.64 out of $10,100.89 yesterday. Thanks all!
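To put the new daily figure next to the earlier monthly one, here is a rough sketch. It assumes a 30-day month and that yesterday's cost is representative, neither of which comes from the billing report; the helper names are made up for illustration.

```python
DAYS_PER_MONTH = 30  # assumption: rough month length for a run-rate estimate


def daily_share_percent(daily_cost: float, daily_total: float) -> float:
    """Return one day's line-item cost as a percentage of that day's total."""
    return daily_cost / daily_total * 100


def monthly_run_rate(daily_cost: float, days: int = DAYS_PER_MONTH) -> float:
    """Extrapolate a single day's cost to an approximate monthly cost."""
    return daily_cost * days


# Figures quoted in this thread.
daily_bq_cost = 6.64          # BigQuery cost yesterday
daily_total = 10100.89        # total spend yesterday
previous_monthly = 6920.33    # BigQuery cost per month before the cleanup

estimate = monthly_run_rate(daily_bq_cost)
print(f"share of daily spend: {daily_share_percent(daily_bq_cost, daily_total):.3f}%")
print(f"approx. monthly run rate: ${estimate:.2f} (was ${previous_monthly:,.2f})")
```

This is only an extrapolation from a single day, so the actual monthly charge may differ.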