kustomize

[Question] With extraArgs deprecated in kustomize version 4.1.2, how to pass --api-versions?

Hi,

I have the following manifest definition:

```yaml
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization

helmCharts:
- name: grafana
  version: 6.8.2
  repo: https://grafana.github.io/helm-charts
  valuesFile: grafana-values.yaml
  releaseName: grafana
```

The Ingress configuration is defined to use `apiVersion: networking.k8s.io/v1`, but this is not respected: the rendered output keeps using `apiVersion: extensions/v1beta1`. How can I force Kustomize 4.1.2 to pass the parameter that worked fine in version 3.4 through `extraArgs`?

```yaml
extraArgs:
- "--api-versions=networking.k8s.io/v1/IngressClass"
```

I have tried to check the code implementation here -> https://github.com/kubernetes-sigs/kustomize/blob/master/api/types/helmchartargs.go but I don't see any way to pass extra arguments to helm.

The explanation here -> https://github.com/kubernetes-sigs/kustomize/pull/3784 is not clear on how to handle it.

Could someone please help me to understand how to handle this in this new version?

Thank you.

I am currently running into the same problem.

Is there a way to set api-versions or kube-version so that `.Capabilities.APIVersions.Has` is set up correctly for Helm?

In my case I am trying to render traefik with an IngressClass, but this is not possible because Helm does not report the capabilities for the `networking.k8s.io/v1beta1` or `networking.k8s.io/v1` IngressClass.

example:

```yaml
helmCharts:
- name: traefik
  includeCRDs: true
  valuesInline:
    ingressClass:
      enabled: true
    deployment:
      replicas: 3
  releaseName: traefik
  version: 10.6.1
  repo: https://helm.traefik.io/traefik
```
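For context, a chart template usually gates a resource on this capability roughly as sketched here. This is a hypothetical template written for illustration, not the actual traefik chart source; the file name and the controller value are assumptions. When Helm renders without a cluster connection and without the API version being declared, the `Has` check is false and the resource is silently omitted.

```yaml
# templates/ingressclass.yaml (hypothetical chart file, for illustration only)
{{- if .Capabilities.APIVersions.Has "networking.k8s.io/v1/IngressClass" }}
apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  name: {{ .Release.Name }}
spec:
  # Placeholder controller name, not taken from any real chart
  controller: example.com/ingress-controller
{{- end }}
```

This is why `valuesInline` alone cannot help: the values enable the feature, but the template still decides on the API version from what Helm believes the cluster supports.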

@Berveglieri or @Links2004 Did you figure out a workaround for this? I'm also having this problem.

Hi @metasim, I was able to make it work by passing the following labelSelector:

labelSelector: "app.kubernetes.io/managed-by=Helm"

In my kustomization.yaml I did the following to switch to the new Ingress format before upgrading Kubernetes to 1.21:

```yaml
- patch: |-
    - op: replace
      path: "/apiVersion"
      value: networking.k8s.io/v1
  target:
    group: networking.k8s.io
    version: v1beta1
    kind: Ingress
    labelSelector: "app.kubernetes.io/managed-by=Helm"
    name: oauth2-proxy
    namespace: stable
```

I also patched my Ingress file with the new format for the version networking.k8s.io/v1:

```yaml
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: grafana
spec:
  rules:
  - host: my-hostname.com
    http:
      paths:
      - backend:
          service:
            name: grafana
            port:
              number: 80
        path: /
        pathType: ImplementationSpecific
```
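Put together, the workaround might look roughly like the following kustomization.yaml. This is only a sketch under assumptions: it reuses the grafana chart from the original question, places the patch under a `patches:` key, and omits `group`, `version`, `name`, and `namespace` from the target so that any Helm-labelled Ingress matches. Adjust the target to your own resources.

```yaml
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization

helmCharts:
- name: grafana
  version: 6.8.2
  repo: https://grafana.github.io/helm-charts
  valuesFile: grafana-values.yaml
  releaseName: grafana

patches:
# Rewrite the apiVersion of every Ingress rendered by Helm.
# The label is assumed to be set by the chart; verify it in the rendered output.
- patch: |-
    - op: replace
      path: /apiVersion
      value: networking.k8s.io/v1
  target:
    kind: Ingress
    labelSelector: "app.kubernetes.io/managed-by=Helm"
```

Replacing `apiVersion` alone is not enough, because the `networking.k8s.io/v1` backend schema differs from the beta one (`service.name` and `service.port.number` instead of `serviceName` and `servicePort`, plus a required `pathType`). That is why the Ingress body is patched as well.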

If you have any questions, feel free to ping me directly.

That's... not a solution to the problem at all, though? Being able to use a patch to fix the output is not a substitute for being able to pass the correct argument.

I never said it was a solution to the problem; I said it was the way I found to make it work, and I shared it. But as mentioned, there is a comment from the developer explaining why it was disabled and the security reasons involved.

Ah I didn't fully understand the original comment. Fair enough, though if you wanted to leave this ticket open so that maybe it can be fixed somehow that would make sense to me ;)

/reopen /area helm /tirage accepted

@natasha41575: Reopened this issue.

The Kubernetes project currently lacks enough contributors to adequately respond to all issues and PRs.

This bot triages issues and PRs according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Mark this issue or PR as fresh with `/remove-lifecycle stale`
- Mark this issue or PR as rotten with `/lifecycle rotten`
- Close this issue or PR with `/close`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle stale

/lifecycle rotten

Any update on this?

/close not-planned

@k8s-triage-robot: Closing this issue, marking it as "Not Planned".

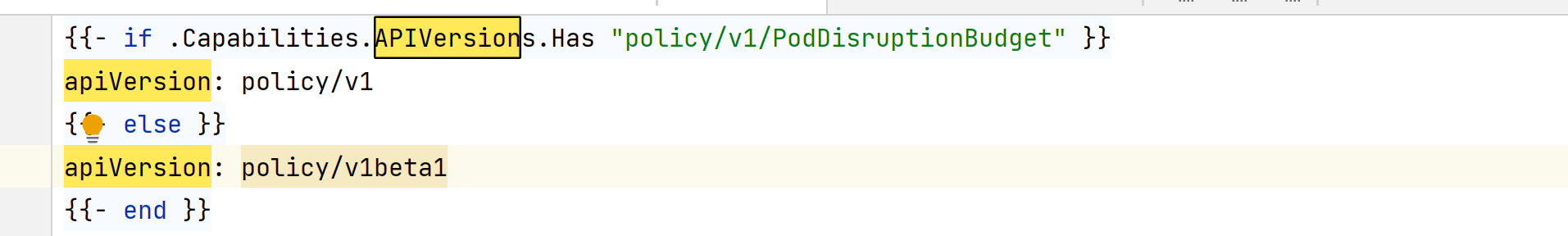

It's a very basic feature; lots of templates make use of `Capabilities.APIVersions.Has`.

Because of this, even the very basic AWS CSI driver doesn't work with Kubernetes version 1.25. Kustomize should either pick the version up from the cluster, let us define the Kubernetes version, or simply restore the `extraArgs` flag as before.

Patching is OK for a short time, but it is not good practice.

Can we reopen this issue? As the poster above said, many Charts use .Capabilities.