Use only NAT gateway for outbound connections

/kind feature

With https://github.com/kubernetes-sigs/cluster-api-provider-azure/pull/1411, we are going to allow configuring multiple subnets for worker nodes. We also support NAT gateway for outbound connections, which means that when some subnets have a NAT gateway configured and some do not, we fall back to an outbound load balancer. While this works, it adds complexity to both the code and the network topology. Since we now support NAT gateway, this is a good chance to get rid of outbound load balancers, as they serve the same purpose. Microsoft also recommends using NAT gateway over outbound load balancers.

Describe the solution you'd like: Remove all outbound LBs and only use NAT gateway for outbound connectivity.

Anything else you would like to add: Handling older clusters might be tricky.

- [ ] Replace Node outbound load balancer with NAT gateway

- [ ] Replace Control Plane outbound load balancer with NAT gateway (for private clusters)

Environment:

- cluster-api-provider-azure version:

- Kubernetes version (use `kubectl version`):

- OS (e.g. from `/etc/os-release`):

Two things we need to figure out before doing this:

- How to handle back compat for existing clusters that have outbound LBs

- are there any use cases in which you'd want an LB or not want a NAT Gateway (but still want outbound)

@jackfrancis @Michael-Sinz tagging you in case you have any feedback or user data on this (especially 2)

I would love to get rid of unnecessary Load Balancers. Using LBs for the sole purpose of providing outbound egress + SNAT is overkill, IMO (I assume that's what we're talking about removing here).

That said, in Azure there are "tricks" we rely on the LB for to ensure that our clusters don't run out of outbound SNAT ports. In other words, when you have large clusters that maintain a lot of connections to destinations outside the VNET, you accumulate SNAT ports that count against a hard limit (I think you get a certain number of ports per "outbound IP" associated with the LB). Does the NAT Gateway solution present a comparable path forward for folks who need, let's call it, a whole boat ton of outbound SNAT ports?

@jackfrancis one of the main benefits of NAT gateway is actually that it doesn't have the same SNAT port exhaustion limits

Using a NAT gateway is the best method for outbound connectivity. A NAT gateway is highly extensible, reliable, and doesn't have the same concerns of SNAT port exhaustion.

from https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-outbound-connections#scenarios

Yay sign me up 🍺

This sounds wonderful - what does it do as far as handling clusters with, say, 45,000 pods / 900 nodes? We have had problems in the past with SNAT and had to add extra outbound IP addresses to get enough concurrent streams.

From https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-outbound-connections#associating-a-nat-gateway-to-the-subnet: Using a NAT gateway is the best method for outbound connectivity. A NAT gateway is highly extensible, reliable, and doesn't have the same concerns of SNAT port exhaustion.

However, reading more about "On-demand SNAT with multiple IP addresses for scale": A public IP address attached to NAT provides up to 64,000 concurrent flows for UDP and TCP respectively.

Maybe I'm missing something here, but the limitations seem fairly similar to what we have for outbound load balancers presently.
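To put the 64,000-flows-per-IP figure in concrete terms, here is a rough sizing sketch. It treats the quoted per-IP number as one pool per protocol (a simplification; real SNAT port usage also depends on destinations), and it assumes a limit of 16 public IPs per NAT gateway, which should be checked against the current Azure limits. The function name `nat_gateway_ips_needed` is hypothetical.

```python
import math

# Figure quoted in the thread from the Azure docs: each public IP attached to a
# NAT gateway provides up to 64,000 concurrent flows (per protocol, TCP and UDP).
FLOWS_PER_PUBLIC_IP = 64_000

# Assumption: a single NAT gateway accepts at most 16 public IP addresses.
# Verify against the current Azure NAT gateway limits before relying on it.
MAX_PUBLIC_IPS_PER_NAT_GATEWAY = 16


def nat_gateway_ips_needed(concurrent_flows: int) -> int:
    """Hypothetical helper: public IPs needed for `concurrent_flows` flows of one protocol.

    Raises ValueError if the demand exceeds what one NAT gateway can provide
    under the assumed IP limit.
    """
    if concurrent_flows < 0:
        raise ValueError("concurrent_flows must be non-negative")
    # Round up: a partially used IP still has to be attached.
    ips = max(1, math.ceil(concurrent_flows / FLOWS_PER_PUBLIC_IP))
    if ips > MAX_PUBLIC_IPS_PER_NAT_GATEWAY:
        raise ValueError(
            f"{concurrent_flows} flows need {ips} public IPs, "
            f"more than the assumed limit of {MAX_PUBLIC_IPS_PER_NAT_GATEWAY}"
        )
    return ips
```

For example, with a hypothetical 4 concurrent outbound TCP flows per pod across 45,000 pods (180,000 flows), `nat_gateway_ips_needed(180_000)` returns 3.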

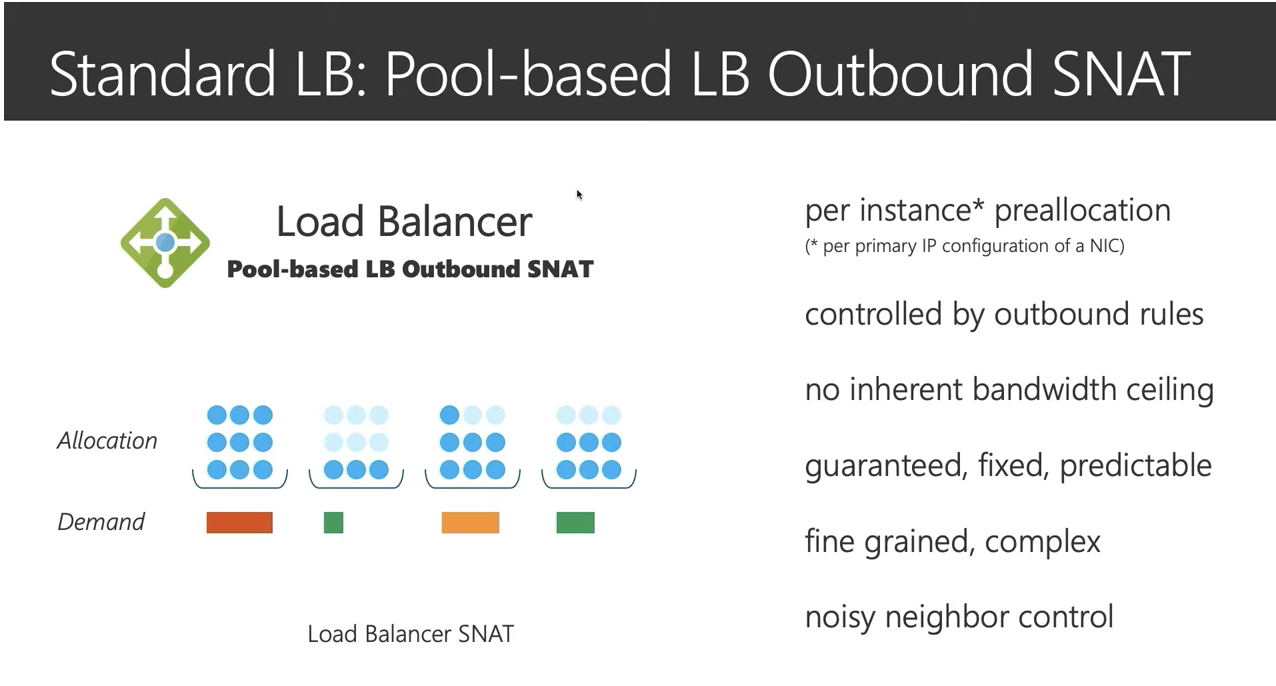

One of the challenges with the load balancer SNAT approach is that the 64,000 streams are divided up "equally" among the VMs (nodes) in the VNET (cluster). This is both good and bad. The good part is that one node can't hog all of the SNAT ports and thus deny other nodes connectivity. However, it is a double-edged sword, as it also means that you need enough SNAT ports per node to handle the "worst case" needs of any given node.
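A simplified model of the difference between dividing ports equally across nodes and drawing them on demand from a shared pool (as the "On-demand SNAT" wording for NAT gateway suggests). Both functions are hypothetical and assume the quoted 64,000 ports per public IP; the shared-pool estimate assumes every node peaks at the same moment, so it is an upper bound within that model.

```python
import math

# Per-IP SNAT capacity as quoted in the thread (64,000 per public IP).
PORTS_PER_PUBLIC_IP = 64_000


def ips_if_divided_equally(peak_flows_per_node: list[int]) -> int:
    """Hypothetical LB-style model: every node gets the same fixed slice, so the
    slice must cover the busiest node and every node reserves that much."""
    if not peak_flows_per_node:
        raise ValueError("need at least one node")
    worst = max(peak_flows_per_node)
    total_reserved = worst * len(peak_flows_per_node)
    return max(1, math.ceil(total_reserved / PORTS_PER_PUBLIC_IP))


def ips_if_shared_pool(peak_flows_per_node: list[int]) -> int:
    """Hypothetical on-demand model: ports come from one shared pool, so only
    the total matters (assumes all nodes peak at once)."""
    if not peak_flows_per_node:
        raise ValueError("need at least one node")
    return max(1, math.ceil(sum(peak_flows_per_node) / PORTS_PER_PUBLIC_IP))
```

With 900 nodes where 899 peak at 50 flows and one peaks at 1,000, equal division needs 15 public IPs because every node must reserve the worst-case 1,000 ports, while the shared pool fits in 1.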

It gets even worse if you use the load balancer in dynamic mode, as scaling the cluster changes the number of SNAT ports available per node. That can cause problems simply because the cluster got larger (crossed the next allocation granularity).
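The "allocation granularity" effect can be sketched with a tier lookup. The tier values below are our reading of the Azure Load Balancer default port allocation table for a single frontend IP and should be verified against the current docs; `default_snat_ports_per_node` is a hypothetical helper.

```python
# Assumption: default SNAT port allocation tiers for Azure Load Balancer with a
# single frontend IP (verify against the current docs).
# Each entry is (max backend pool size, default SNAT ports per instance).
DEFAULT_SNAT_TIERS = [
    (50, 1024),
    (100, 512),
    (200, 256),
    (400, 128),
    (800, 64),
    (1000, 32),
]


def default_snat_ports_per_node(pool_size: int) -> int:
    """Hypothetical helper: default SNAT ports each node gets for a pool size."""
    if pool_size < 1:
        raise ValueError("pool_size must be at least 1")
    # Tiers are sorted by size, so the first tier that fits is the right one.
    for max_size, ports in DEFAULT_SNAT_TIERS:
        if pool_size <= max_size:
            return ports
    raise ValueError(f"pool_size {pool_size} is beyond the assumed tier table")
```

Under these assumed tiers, growing the pool from 50 to 51 nodes halves the default allocation from 1,024 to 512 ports per node, and at 900 nodes each node gets 32 ports. For the 45,000 pods / 900 nodes case mentioned earlier, that is 50 pods per node sharing 32 ports.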

Note that this applies both to node-level work (Docker -> ACR image pulls, package updates, etc.) and to pod workloads (getting data from blob store, talking to Key Vault, accessing other microservices in different clusters, etc.).

Thus there are some interesting challenges here. One we have is that some of our outbound requests need to come from our service tag, because the customer wants those outbound requests to their systems to be covered by service tag network controls (in addition to the auth that will happen). Thus, careful management of the outbound IP addresses is something we can't avoid.

This slide from the video on that doc is a good visual summary:

One limitation from the docs: NAT Gateways only support IPv4, they don't work with subnets with IPv6 addresses. So we would have to keep LB support for IPv6 clusters for now, at least until NAT Gateways support IPv6.

The Kubernetes project currently lacks enough contributors to adequately respond to all issues and PRs.

This bot triages issues and PRs according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Mark this issue or PR as fresh with `/remove-lifecycle stale`
- Mark this issue or PR as rotten with `/lifecycle rotten`
- Close this issue or PR with `/close`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle stale

/remove-lifecycle stale

We have already updated the templates to use NAT Gateway; we should change the webhook defaults to set NAT Gateway by default when not using IPv6.
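A minimal sketch of what that defaulting could look like, in Python for illustration. `SubnetSpec`, its fields, `default_nat_gateway`, and the `<cluster>-<subnet>-natgw` naming scheme are all hypothetical, not CAPZ's actual types. It assumes the default applies only to node subnets that don't already name a NAT gateway, and it skips any subnet with an IPv6 CIDR, since NAT gateways don't support IPv6.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SubnetSpec:
    # Hypothetical, simplified stand-in for a subnet spec; not the CAPZ API.
    name: str
    role: str  # assumed values: "node" or "control-plane"
    cidr_blocks: list[str] = field(default_factory=list)
    nat_gateway_name: Optional[str] = None


def has_ipv6(subnet: SubnetSpec) -> bool:
    """True if any of the subnet's CIDR blocks is an IPv6 range."""
    return any(
        ipaddress.ip_network(cidr, strict=False).version == 6
        for cidr in subnet.cidr_blocks
    )


def default_nat_gateway(subnet: SubnetSpec, cluster_name: str) -> None:
    """Hypothetical webhook default: give IPv4-only node subnets a NAT gateway.

    Leaves user-provided values alone, and leaves IPv6 subnets without one
    (they would keep using an outbound LB).
    """
    if subnet.role != "node" or subnet.nat_gateway_name:
        return
    if has_ipv6(subnet):
        return
    # Hypothetical naming scheme, for illustration only.
    subnet.nat_gateway_name = f"{cluster_name}-{subnet.name}-natgw"
```

Keeping the IPv6 check inside the defaulting function means a dual-stack subnet is never silently given a NAT gateway it can't use.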

/help

@CecileRobertMichon: This request has been marked as needing help from a contributor.

Guidelines

Please ensure that the issue body includes answers to the following questions:

- Why are we solving this issue?

- To address this issue, are there any code changes? If there are code changes, what needs to be done in the code and what places can the assignee treat as reference points?

- Does this issue have zero to low barrier of entry?

- How can the assignee reach out to you for help?

For more details on the requirements of such an issue, please see here and ensure that they are met.

If this request no longer meets these requirements, the label can be removed by commenting with the `/remove-help` command.

Instructions for interacting with me using PR comments are available here. If you have questions or suggestions related to my behavior, please file an issue against the kubernetes/test-infra repository.

/assign

/lifecycle stale

/remove-lifecycle stale

/lifecycle stale

/remove-lifecycle stale

/lifecycle stale

The Kubernetes project currently lacks enough active contributors to adequately respond to all issues and PRs.

This bot triages issues and PRs according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Mark this issue or PR as fresh with `/remove-lifecycle rotten`
- Close this issue or PR with `/close`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/lifecycle rotten

The Kubernetes project currently lacks enough active contributors to adequately respond to all issues and PRs.

This bot triages issues according to the following rules:

- After 90d of inactivity, `lifecycle/stale` is applied
- After 30d of inactivity since `lifecycle/stale` was applied, `lifecycle/rotten` is applied
- After 30d of inactivity since `lifecycle/rotten` was applied, the issue is closed

You can:

- Reopen this issue with `/reopen`
- Mark this issue as fresh with `/remove-lifecycle rotten`
- Offer to help out with Issue Triage

Please send feedback to sig-contributor-experience at kubernetes/community.

/close not-planned

@k8s-triage-robot: Closing this issue, marking it as "Not Planned".

/reopen

/remove-lifecycle rotten

@jackfrancis: Reopened this issue.

/help-wanted

/unassign @jackfrancis

I would like to help with this issue.

/assign xiujuanx