kfp-tekton

kfp-tekton copied to clipboard

kfp-tekton copied to clipboard

Regression in 0.6

/kind bug

@animeshsingh there are regressions in the 0.6 release

According to the official tutorial i tried the following:

kubectl apply -f https://storage.googleapis.com/tekton-releases/pipeline/previous/v0.20.1/release.yaml

YAML=https://github.com/kubeflow/kfp-tekton/raw/master/install/v0.6.0/kfp-tekton.yaml

kubectl apply --selector kubeflow/crd-install=true -f $YAML

kubectl apply -f $YAML

From https://github.com/kubeflow/kfp-tekton/blob/master/samples/big_data_passing/big_data_passing_description.ipynb

from typing import NamedTuple

import kfp

from kfp.components import InputPath, InputTextFile, OutputPath, OutputTextFile

from kfp.components import func_to_container_op

from kfp_tekton.compiler import TektonCompiler

import os

os.environ["DEFAULT_ACCESSMODES"] = "ReadWriteOnce"

os.environ["DEFAULT_STORAGE_SIZE"] = "2Gi"

# Writing big data

@func_to_container_op

def repeat_line(line: str, output_text_path: OutputPath(str), count: int = 10):

'''Repeat the line specified number of times'''

with open(output_text_path, 'w') as writer:

for i in range(count):

writer.write(line + '\n')

# Reading big data

@func_to_container_op

def print_text(text_path: InputPath()): # The "text" input is untyped so that any data can be printed

'''Print text'''

with open(text_path, 'r') as reader:

for line in reader:

print(line, end = '')

def print_repeating_lines_pipeline():

repeat_lines_task = repeat_line(line='Hello', count=5000)

# Commenting this line leads to an error:

print_text(repeat_lines_task.output) # Don't forget .output !

TektonCompiler().compile(print_repeating_lines_pipeline, __file__ + '.yaml')

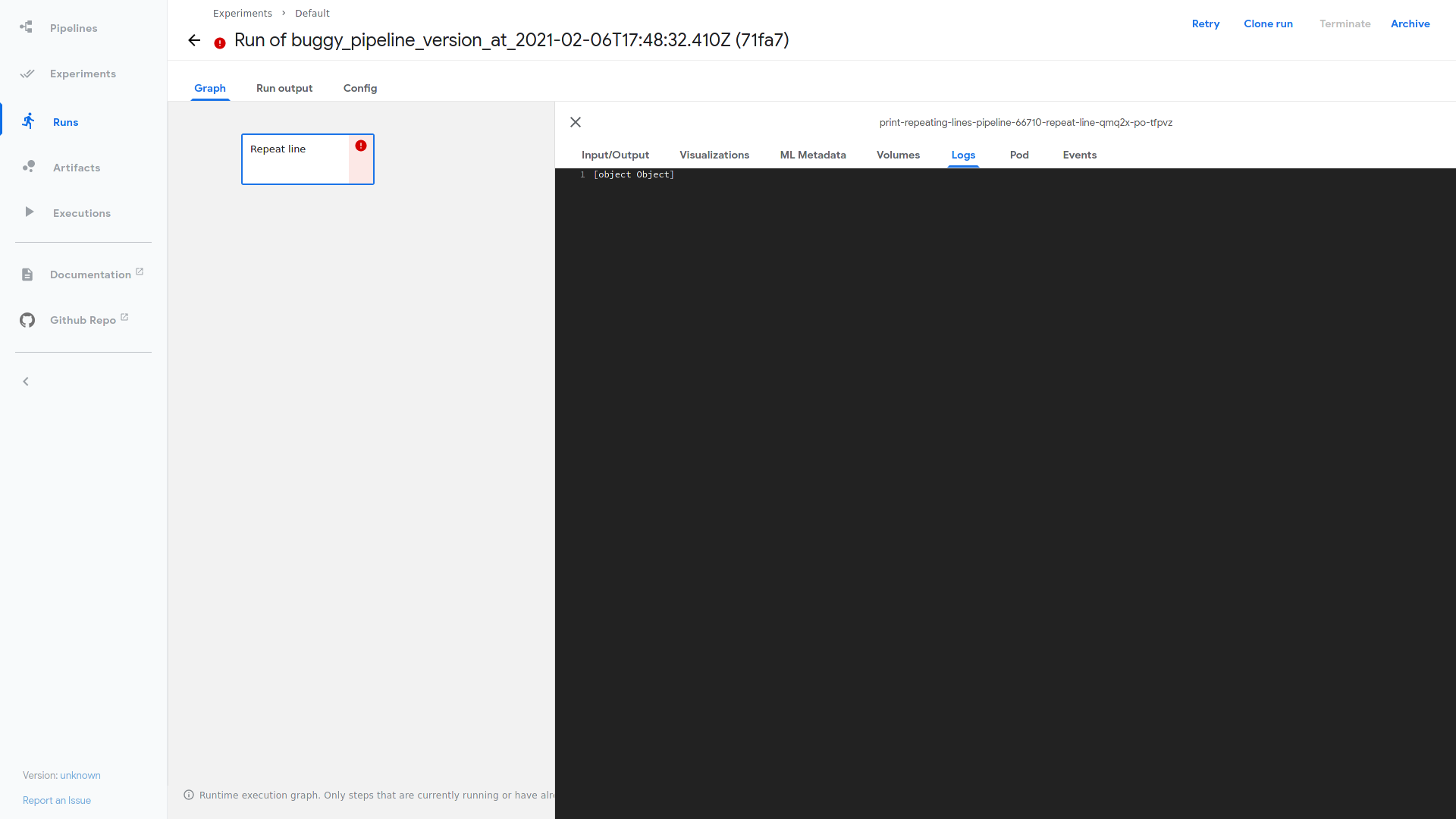

There are two different bugs here. 1. A known one https://github.com/kubeflow/kfp-tekton/issues/273

File "/tmp/tmp.mtJQDl0uc5", line 10

writer.write(line + '

^

SyntaxError: EOL while scanning string literal

You need to replace '\n' with "\N{LF}" or '\u000A'

if you comment out print_text(repeat_lines_task.output) # Don't forget .output ! then you will get the following error

[julius@localhost kubeflow]$ kubectl -n kubeflow logs pod/print-repeating-lines-pipeline-66710-repeat-line-qmq2x-po-tfpvz -c step-copy-artifacts

Added `storage` successfully.

tar: removing leading '/' from member names

tekton/results/output-text

`output_text.tgz` -> `storage/mlpipeline/artifacts/print-repeating-lines-pipeline-66710/repeat-line/output_text.tgz`

Total: 0 B, Transferred: 186 B, Speed: 13.34 KiB/s

{"level":"fatal","ts":1612633728.3991394,"caller":"entrypoint/entrypointer.go:155","msg":"Error while handling results: Termination message is above max allowed size 4096, caused by large task result.","stacktrace":"github.com/tektoncd/pipeline/pkg/entrypoint.Entrypointer.Go\n\tgithub.com/tektoncd/pipeline/pkg/entrypoint/entrypointer.go:155\nmain.main\n\tgithub.com/tektoncd/pipeline/cmd/entrypoint/main.go:118\nruntime.main\n\truntime/proc.go:204"}

[julius@localhost kubeflow]$

What did you expect to happen:

-

I should be able to use '\n' in pipelines without errors. Maybe just replace '\n' internally with "\N{LF}" or '\u000A'

-

Please make sure that an unused output does not fail the task or pipeline. This has to be fixed in the compiler i guess.

Additional information: [Miscellaneous information that will assist in solving the issue.]

Environment:

- Python Version (use

python --version): 3.9 - SDK Version: 0.6.0

- Tekton Version (use

tkn version): 0.6.0 - Kubernetes Version (use

kubectl version): 1.20.2 - OS (e.g. from

/etc/os-release): Fedora 33

buggy_pipeline.py_1.yaml.txt buggy_pipeline.py_2.yaml.txt

As you can see in the files, the workspace is missing

Looks like there's a bug with using newlines. This is fixed in the latest kfp code https://github.com/kubeflow/pipelines/pull/4993

For a quick fix, you can run pip install kfp==v1.4.0-rc1. We will also update the kfp-tekton dependency to a later version when kfp v1.4.0 is official.

Looks like there's a bug with using newlines. This is fixed in the latest kfp code kubeflow/pipelines#4993

For a quick fix, you can run

pip install kfp==v1.4.0-rc1. We will also update the kfp-tekton dependency to a later version when kfp v1.4.0 is official.

Thank you very much, I will test it and report back. What do you think about the workspace issue?

Looks like there's a bug with using newlines. This is fixed in the latest kfp code kubeflow/pipelines#4993 For a quick fix, you can run

pip install kfp==v1.4.0-rc1. We will also update the kfp-tekton dependency to a later version when kfp v1.4.0 is official.Thank you very much, I will test it and report back. What do you think about the workspace issue?

Thanks @juliusvonkohout for reporting this. Looks like the workspace won't be enabled when there's no input artifact which default back to Tekton results that has size limit of 4 MB. We will double check with the Argo implementation and update it correspondingly.

/cc @fenglixa

The pipeline runs fine with argo. E.g. emissary executor can be used and has no limitations regarding the output size. Workspaces should be enabled as soon as there is an output artifact, no matter whether it is used as input later on.

1.4.0 is avavilable now https://pypi.org/project/kfp/ https://github.com/kubeflow/pipelines/tags

https://github.com/kubeflow/kfp-tekton/issues/469

Looks like there's a bug with using newlines. This is fixed in the latest kfp code kubeflow/pipelines#4993 For a quick fix, you can run

pip install kfp==v1.4.0-rc1. We will also update the kfp-tekton dependency to a later version when kfp v1.4.0 is official.Thank you very much, I will test it and report back. What do you think about the workspace issue?

Thanks @juliusvonkohout for reporting this. Looks like the workspace won't be enabled when there's no input artifact which default back to Tekton results that has size limit of 4 MB. We will double check with the Argo implementation and update it correspondingly.

/cc @fenglixa

@fenglixa are there any news regarding the workspace creation without input artifacts?

Oh, let me check, will response to you soon. /assign

@juliusvonkohout I tried https://github.com/kubeflow/kfp-tekton/blob/master/samples/big_data_passing/big_data_passing_description.ipynb with latest code just now. But I didn't encounter the issues your mentioned here, could you help double confirm with latest releases? Thanks!

@Tomcli, @ckadner Do you know more details of this issue? Thanks!

@juliusvonkohout I tried https://github.com/kubeflow/kfp-tekton/blob/master/samples/big_data_passing/big_data_passing_description.ipynb with latest code just now. But I didn't encounter the issues your mentioned here, could you help double confirm with latest releases? Thanks!

@Tomcli, @ckadner Do you know more details of this issue? Thanks!

It is important, that you only use the first step. So the pipeline consist of exactly one, not two steps. The single step just outputs a big (>4KB) file, that is NOT used as input anywhere because there is only one step. Does this really work for you? Is a workspace created even though it is not used as input artifact? If yes, i will retest too.

Thanks @juliusvonkohout, I get your mean. This should be an issue need to be addressed, I will provide PR for it later.

Thanks for submitting this issue @juliusvonkohout

@animeshsingh @Tomcli @ckadner I would like to discuss this issue with you here.

In Argo, there is no this issue, because: Argo will handle the output as below definition, even it is or not big data passing:

outputs:

parameters:

- name: gcs-download-2-data

valueFrom: {path: /tmp/results.txt}

artifacts:

- {name: gcs-download-2-data, path: /tmp/results.txt}

But for tekton, the output will be translated to results by default:

results:

- {name: output-text, description: /tmp/outputs/output_text/data}

The issue for us here: We don't know which case we should translate the output to results, and which case we should enable workspace, if the output was not used by any other tasks.

Do you have any ideas on it? Thanks

@fenglixa so we only need to enable big data passing when the user defines the output as outputPath or input as inputPath. https://github.com/kubeflow/pipelines/blob/master/sdk/python/kfp/components/_python_op.py#L78-L81

If we don't have to use big data passing, then we will default it back to Tekton results.

Thanks @Tomcli , yes, you are right, we should capture whether user defined outputPath or inputPath. But from tekton complier. There are no any metrics defined if user defined outputPath (inputPath is OK). That's the issue my concerned.

The outputPath and inputPath should be part of the pipeline object in the compiler. Probably it wasn't capture when passing to the big data passing function.

Was it fixed in 0.8 ?

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.

Im facing the same problem.Is there any further information? if there is no task to consume the 「outputPath」produced by the previous one,The 「outputPath」will be compiled as 「Tekton results」 which cannot hold large data since 4096 size limitation. If i am right the usage of 「outputPath」 is to pass binary data such as dataset or model file.Compile to「Tekton workspace」should be the expected behavior. hope this could be fixed ,also im glad to help if necessary

@tks-ai thanks for reporting this. We updated the big data passing recently to run on CSI Storage. This could be possible if we update the compiler a little bit.

We are finalizing what the new big data passing artifact path should be. Once that's finalized, we can come back to this issue before we cut the next release.

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.

@tks-ai thanks for reporting this. We updated the big data passing recently to run on CSI Storage. This could be possible if we update the compiler a little bit.

We are finalizing what the new big data passing artifact path should be. Once that's finalized, we can come back to this issue before we cut the next release.

Will this be fixed in 1.2 then?

closed due to inactivity from maintainers.