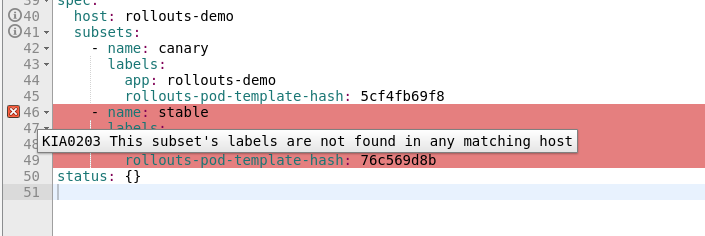

"KIA0203 This subset's labels are not found in any matching host" For Argo Rollout canary scenario

During a canary rollout with Argo Rollouts using subset-level traffic splitting: https://argoproj.github.io/argo-rollouts/features/traffic-management/istio/#subset-level-traffic-splitting

One of the destinations is marked as "KIA0203 This subset's labels are not found in any matching host"; however, it is live and Istio routes traffic to it correctly.

Istio routes traffic fine to both destinations, and pods with the labels from the destination rule are available as well:

$ kubectl get pods -l app=example,rollouts-pod-template-hash=6fd44bcc96

NAME READY STATUS RESTARTS AGE

example-6fd44bcc96-csqrf 2/2 Running 0 6h2m

$ kubectl get pods -l app=example,rollouts-pod-template-hash=64db898946

NAME READY STATUS RESTARTS AGE

example-64db898946-hxq22 2/2 Running 0 23m

Versions used

- Kiali: 1.37.0

- Istio: 1.8.1

- Kubernetes flavour and version: AKS 1.20

To Reproduce

- Set up Canary Release with subset-level traffic splitting: https://argoproj.github.io/argo-rollouts/features/traffic-management/istio/#subset-level-traffic-splitting

- Trigger Canary rollout

- Check destination rule in Kiali

Expected behavior

Subset should be available in Kiali.

output of: curl 'http://<kiali>/kiali/api/namespaces/example-dev/istio/destinationrules/example?validate=true'

out.txt

cc @xeviknal , any thoughts?

Thank you @Yorkerrr. It is weird indeed, because the second subset is almost the same as the first one, with just one label changed. Is the second pod created in exactly the same way as the first one? Is it behind the same service? I think I will definitely install Argo and try an example.

@xeviknal, yes, the pods are created in the same way. The only thing is that Argo Rollouts may change labels on pods on the fly, and if Kiali caches them, there might be some issues. It might be connected to https://github.com/kiali/kiali/issues/4141. It looks like Kiali does not handle ReplicaSets correctly in some cases, e.g. if labels are changed after the ReplicaSet is created. During an Argo Rollouts rollout, pods and ReplicaSets are not immutable: Argo Rollouts updates their labels.

I think this bug originates from https://github.com/kiali/kiali/issues/4141#issuecomment-896653917: basically, the custom controller is not correctly identified as a workload, which causes the validation logic to miss it in this task.

Once the referenced issue is resolved, this one should probably be addressed along with it.

Keeping this open until then.

Hi @Yorkerrr,

I have been able to reproduce this scenario. Thank you again for pointing it out. The problem is that validations only consider the controllers, not the pods. In the case of Argo Rollouts, there is only one rollout (controller) for two different pods (with different labels). The validation (see the snippet below) only receives the rollout, which happens to have the labels of the stable version. That is why the first subset is valid but not the second one.

I believe that after addressing #4382 we will be able to use pods in this validation in a performant way, so that it handles this scenario accurately.

https://github.com/kiali/kiali/blob/fd31f19f427cc984bac993289287338dc43bf3b7/business/checkers/destinationrules/no_dest_checker.go#L95-L102
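The linked lines are not reproduced here. As a rough illustration of the behaviour described above, here is a minimal sketch in Go. The `Workload` type and the `matches` and `subsetHasWorkload` helpers are hypothetical and are not Kiali's actual code, and which of the two hashes belongs to the stable version is an assumption based on the pod ages shown in the issue description.

```go
package main

import "fmt"

// Workload is a hypothetical, simplified stand-in for the controller-level
// object the validation receives. It is not Kiali's actual type.
type Workload struct {
	Name   string
	Labels map[string]string
}

// matches reports whether every key/value pair in selector is present in labels.
func matches(selector, labels map[string]string) bool {
	for k, v := range selector {
		if got, ok := labels[k]; !ok || got != v {
			return false
		}
	}
	return true
}

// subsetHasWorkload reports whether at least one workload carries all of the
// subset's labels.
func subsetHasWorkload(subset map[string]string, workloads []Workload) bool {
	for _, w := range workloads {
		if matches(subset, w.Labels) {
			return true
		}
	}
	return false
}

func main() {
	// Assumption: the single Rollout is surfaced as one workload that carries
	// the labels of the stable version only.
	workloads := []Workload{{
		Name: "example",
		Labels: map[string]string{
			"app":                        "example",
			"rollouts-pod-template-hash": "6fd44bcc96",
		},
	}}

	stable := map[string]string{"rollouts-pod-template-hash": "6fd44bcc96"}
	canary := map[string]string{"rollouts-pod-template-hash": "64db898946"}

	fmt.Println("stable subset matched:", subsetHasWorkload(stable, workloads))
	fmt.Println("canary subset matched:", subsetHasWorkload(canary, workloads))
}
```

With only the controller in the list, the canary subset has nothing to match against even though a pod with that label is running, which is consistent with the KIA0203 warning appearing on one subset only.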

For documentation's sake, I've created this gist to make it easier for devs not versed in Argo Rollouts to reproduce this scenario: https://gist.github.com/xeviknal/9ab154d6e34c9101b54e076a16645adb

Moving this issue to waiting for external until #4382 is merged.

Any news?

Hi @jseongsm2 , now that #4382 is completed, we will continue on this one. Thanks.

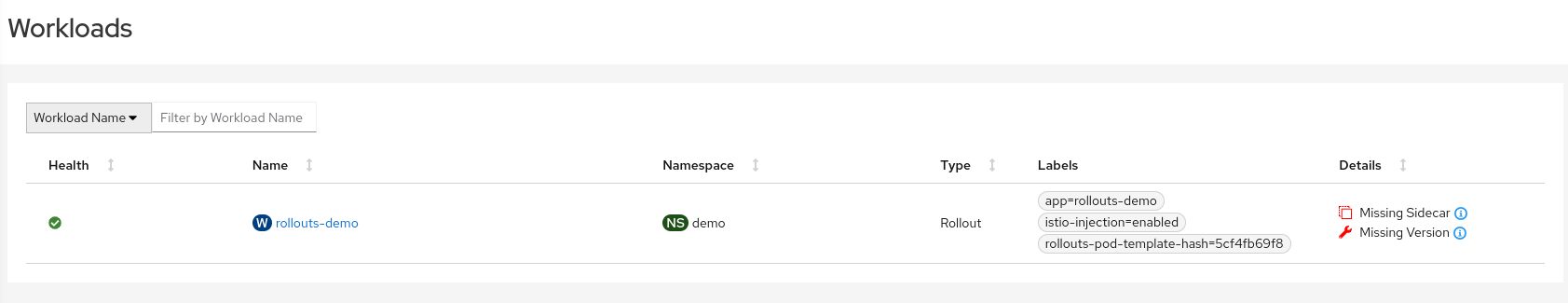

Hello, while testing this issue I see the error, and I also see that one of the workloads is not listed. We might really be talking about the same workload, but the Argo Rollouts controller produces two ReplicaSets, so I guess we should have two workloads. That is not the case:

So, looking into the description of the validation:

And also looking into the earlier comment from @xeviknal (specifically the code snippet, which shows that the validation uses the workloads list).

I think a good thing to try is to see why there is a missing workload given that there is actually a ReplicaSet for it.

I will investigate that.

@lucasponce @hhovsepy what do you think about this? To me, it is better to add this missing workload rather than modify the validation to start using pods.

@leandroberetta agreed: include the missing workloads in the list of workloads considered by the validation.

@leandroberetta, @hhovsepy, I was considering looking into #5261. I see you have already been in this area, but the last update was from June. Perhaps we can meet to talk about the status and the feasibility of #5261?

Hi @jshaughn, yes, sure, I'm coming back next week. The last thing I was investigating is that in the cache every controller keeps just one workload, even if there are more, whereas a Rollout is a controller that owns two active workloads. Some logic (I guess) is needed to allow more workloads when the controller type is Rollout.

But we can meet next week and continue discussing this.

Stop monitoring issues :-) see you in a week!
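To make the idea in the last comment more concrete, here is a minimal sketch of keeping one workload per controller in general while allowing one per active ReplicaSet when the owner is a Rollout. All types and the `workloadsFor` function are hypothetical; this is neither Kiali's cache code nor a proposed patch, and treating a ReplicaSet as active when it has more than zero replicas is an assumption.

```go
package main

import "fmt"

// ReplicaSet is a hypothetical, simplified view of a ReplicaSet.
type ReplicaSet struct {
	Name      string
	OwnerKind string // e.g. "Deployment" or "Rollout"
	OwnerName string
	Replicas  int
	Labels    map[string]string
}

// Workload is a hypothetical, simplified workload entry.
type Workload struct {
	Name   string
	Kind   string
	Labels map[string]string
}

// workloadsFor builds the workload list from ReplicaSets. Most owners get a
// single entry; a Rollout owner gets one entry per active ReplicaSet.
func workloadsFor(sets []ReplicaSet) []Workload {
	seen := map[string]bool{}
	var out []Workload
	for _, rs := range sets {
		if rs.Replicas == 0 {
			continue // assumption: scaled-down ReplicaSets are not active
		}
		if rs.OwnerKind == "Rollout" {
			// Keep every active ReplicaSet so each subset can find its labels.
			out = append(out, Workload{Name: rs.Name, Kind: rs.OwnerKind, Labels: rs.Labels})
			continue
		}
		key := rs.OwnerKind + "/" + rs.OwnerName
		if !seen[key] {
			seen[key] = true
			out = append(out, Workload{Name: rs.OwnerName, Kind: rs.OwnerKind, Labels: rs.Labels})
		}
	}
	return out
}

func main() {
	sets := []ReplicaSet{
		{Name: "example-6fd44bcc96", OwnerKind: "Rollout", OwnerName: "example", Replicas: 1,
			Labels: map[string]string{"app": "example", "rollouts-pod-template-hash": "6fd44bcc96"}},
		{Name: "example-64db898946", OwnerKind: "Rollout", OwnerName: "example", Replicas: 1,
			Labels: map[string]string{"app": "example", "rollouts-pod-template-hash": "64db898946"}},
	}
	for _, w := range workloadsFor(sets) {
		fmt.Println(w.Kind, w.Name, w.Labels["rollouts-pod-template-hash"])
	}
}
```

With both entries in the list, a label-based subset check would find a match for each hash without the validation having to look at pods directly.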