MariaDB Galera cluster crashes with istio mTLS mode STRICT

Bug Description

Hello,

I'm trying to run a MariaDB Galera cluster with Istio. Note that I'm not using the Bitnami chart; I run my own MariaDB image in a StatefulSet.

galera config:

[galera]
# Galera cluster configuration
wsrep_on = ON
wsrep_provider = /usr/local/mysql/lib/libgalera_smm.so
wsrep_cluster_address = "gcomm://mariadb-0,mariadb-1,mariadb-2"
wsrep_cluster_name = "mariadb-cluster"
wsrep_sst_method = "rsync"
binlog_format = row
default_storage_engine = InnoDB
innodb_autoinc_lock_mode = 2

# Cluster node configuration
bind-address = 0.0.0.0
wsrep_log_conflicts = ON
wsrep_debug = NONE

I have also created a Service for each MariaDB pod, matching the hostnames in "wsrep_cluster_address":

apiVersion: v1
kind: Service
metadata:
  name: mariadb
spec:
  type: ClusterIP
  ports:
  - name: tcp-mariadb
    port: 3306
    protocol: TCP
    targetPort: 3306
  - name: tcp-grp
    port: 4567
    protocol: TCP
    targetPort: 4567
  - name: tcp-ist
    port: 4568
    protocol: TCP
    targetPort: 4568
  - name: tcp-sst
    port: 4444
    protocol: TCP
    targetPort: 4444
  selector:
    app: mariadb
---
apiVersion: v1
kind: Service
metadata:
  name: mariadb-0
spec:
  type: ClusterIP
  ports:
  - name: tcp-mariadb
    port: 3306
    protocol: TCP
    targetPort: 3306
  - name: tcp-grp
    port: 4567
    protocol: TCP
    targetPort: 4567
  - name: tcp-ist
    port: 4568
    protocol: TCP
    targetPort: 4568
  - name: tcp-sst
    port: 4444
    protocol: TCP
    targetPort: 4444
  selector:
    statefulset.kubernetes.io/pod-name: mariadb-0
---
apiVersion: v1
kind: Service
metadata:
  name: mariadb-1
spec:
  type: ClusterIP
  ports:
  - name: tcp-mariadb
    port: 3306
    protocol: TCP
    targetPort: 3306
  - name: tcp-grp
    port: 4567
    protocol: TCP
    targetPort: 4567
  - name: tcp-ist
    port: 4568
    protocol: TCP
    targetPort: 4568
  - name: tcp-sst
    port: 4444
    protocol: TCP
    targetPort: 4444
  selector:
    statefulset.kubernetes.io/pod-name: mariadb-1
---
apiVersion: v1
kind: Service
metadata:
  name: mariadb-2
spec:
  type: ClusterIP
  ports:
  - name: tcp-mariadb
    port: 3306
    protocol: TCP
    targetPort: 3306
  - name: tcp-grp
    port: 4567
    protocol: TCP
    targetPort: 4567
  - name: tcp-ist
    port: 4568
    protocol: TCP
    targetPort: 4568
  - name: tcp-sst
    port: 4444
    protocol: TCP
    targetPort: 4444
  selector:
    statefulset.kubernetes.io/pod-name: mariadb-2

If I deploy this StatefulSet in a namespace without the label istio-injection=enabled, everything works: the pods connect to each other and form a fully functioning cluster.
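For reference, the namespace label that switches sidecar injection on can be sketched as a manifest. This is only an illustration: the namespace name `cmanea` is inferred from the service hostnames in the proxy logs further down and may not match the reporter's actual setup.

```yaml
# Hypothetical Namespace manifest; the name is an assumption taken from
# hostnames such as mariadb.cmanea.svc.cluster.local in the proxy logs.
apiVersion: v1
kind: Namespace
metadata:
  name: cmanea
  labels:
    # With this label present, Istio injects the istio-proxy sidecar into
    # newly created pods in the namespace. Removing it gives the working,
    # mesh-free deployment described above.
    istio-injection: enabled
```

Pods that already exist are not modified when the label changes; they pick up (or lose) the sidecar only after being recreated.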

If I set istio-injection=enabled AND apply a PeerAuthentication with mtls mode STRICT to the namespace, the cluster fails to form. I even tried adding a DestinationRule for each service, with no luck:

apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: strictauth-all
spec:
  mtls:
    mode: STRICT
---
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: mariadb0
spec:
  host: mariadb-0
  trafficPolicy:
    tls:
      mode: ISTIO_MUTUAL
---
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: mariadb1
spec:
  host: mariadb-1
  trafficPolicy:
    tls:
      mode: ISTIO_MUTUAL
---
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: mariadb2
spec:
  host: mariadb-2
  trafficPolicy:
    tls:
      mode: ISTIO_MUTUAL

The problem is that for some reason rsync (cluster SST) is not working through istio-proxy:

Logs on mariadb-0 (initial pod):

2022-04-28 19:58:22 0 [Note] WSREP: Shifting SYNCED -> DONOR/DESYNCED (TO: 2)

2022-04-28 19:58:22 1 [Note] WSREP: Detected STR version: 1, req_len: 112, req: STRv1

2022-04-28 19:58:22 1 [Note] WSREP: Cert index preload: 2 -> 2

2022-04-28 19:58:22 1 [Note] WSREP: Server status change synced -> donor

2022-04-28 19:58:22 0 [Note] WSREP: async IST sender starting to serve tcp://10.10.3.125:4568 sending 2-2, preload starts from 2

2022-04-28 19:58:22 [Note] WSREP_NOTIFY: /usr/local/mysql/bin/wsrep_notify.sh was called with: --status donor

2022-04-28 19:58:22 [Note] WSREP_NOTIFY: /usr/local/mysql/bin/wsrep_notify.sh was called with: --status donor

2022-04-28 19:58:22 0 [Note] WSREP: Donor monitor thread started to monitor

2022-04-28 19:58:22 0 [Note] WSREP: Running: 'wsrep_sst_rsync --role 'donor' --address '10.10.3.125:4444/rsync_sst' --local-port '3306' --socket '/var/run/mysqld/mysqld.sock' --datadir '/data/' --gtid '78930c3b-c72d-11ec-8c72-6f41e51e0c30:2' --gtid-domain-id '0' --mysqld-args --wsrep-new-cluster'

2022-04-28 19:58:22 1 [Note] WSREP: sst_donor_thread signaled with 0

2022-04-28 19:58:22 0 [Note] WSREP: Flushing tables for SST...

2022-04-28 19:58:22 0 [Note] WSREP: pause

2022-04-28 19:58:22 0 [Note] WSREP: Provider paused at 78930c3b-c72d-11ec-8c72-6f41e51e0c30:2 (5)

2022-04-28 19:58:22 0 [Note] WSREP: Server paused at: 2

2022-04-28 19:58:22 0 [Note] WSREP: Tables flushed.

rsync: did not see server greeting

rsync error: error starting client-server protocol (code 5) at main.c(1675) [sender=3.1.3]

WSREP_SST: [ERROR] rsync returned code 5: (20220428 19:58:22.903)

2022-04-28 19:58:22 0 [ERROR] WSREP: Failed to read from: wsrep_sst_rsync --role 'donor' --address '10.10.3.125:4444/rsync_sst' --local-port '3306' --socket '/var/run/mysqld/mysqld.sock' --datadir '/data/' --gtid '78930c3b-c72d-11ec-8c72-6f41e51e0c30:2' --gtid-domain-id '0' --mysqld-args --wsrep-new-cluster

2022-04-28 19:58:22 0 [ERROR] WSREP: Process completed with error: wsrep_sst_rsync --role 'donor' --address '10.10.3.125:4444/rsync_sst' --local-port '3306' --socket '/var/run/mysqld/mysqld.sock' --datadir '/data/' --gtid '78930c3b-c72d-11ec-8c72-6f41e51e0c30:2' --gtid-domain-id '0' --mysqld-args --wsrep-new-cluster: 255 (Unknown error 255)

2022-04-28 19:58:22 0 [Note] WSREP: resume

2022-04-28 19:58:22 0 [Note] WSREP: resuming provider at 5

2022-04-28 19:58:22 0 [Note] WSREP: Provider resumed.

2022-04-28 19:58:22 0 [Note] WSREP: SST sending failed: -255

2022-04-28 19:58:22 0 [Note] WSREP: Server status change donor -> joined

2022-04-28 19:58:22 [Note] WSREP_NOTIFY: /usr/local/mysql/bin/wsrep_notify.sh was called with: --status joined

2022-04-28 19:58:22 0 [ERROR] WSREP: Command did not run: wsrep_sst_rsync --role 'donor' --address '10.10.3.125:4444/rsync_sst' --local-port '3306' --socket '/var/run/mysqld/mysqld.sock' --datadir '/data/' --gtid '78930c3b-c72d-11ec-8c72-6f41e51e0c30:2' --gtid-domain-id '0' --mysqld-args --wsrep-new-cluster

2022-04-28 19:58:22 0 [Note] WSREP: Donor monitor thread ended with total time 0 sec

2022-04-28 19:58:22 0 [Warning] WSREP: 0.0 (mariadb-0): State transfer to 1.0 (mariadb-1) failed: -255 (Unknown error 255)

2022-04-28 19:58:22 0 [Note] WSREP: Shifting DONOR/DESYNCED -> JOINED (TO: 2)

2022-04-28 19:58:22 0 [Note] WSREP: Member 0.0 (mariadb-0) synced with group.

2022-04-28 19:58:22 0 [Note] WSREP: Shifting JOINED -> SYNCED (TO: 2)

and istio-proxy logs from mariadb-1 (or mariadb-2, both look the same):

2022-04-28T20:34:11.112266Z info JWT policy is third-party-jwt

2022-04-28T20:34:11.120934Z warn HTTP request unsuccessful with status: 400 Bad Request

2022-04-28T20:34:11.140093Z info CA Endpoint istiod.istio-system.svc:15012, provider Citadel

2022-04-28T20:34:11.140299Z info Using CA istiod.istio-system.svc:15012 cert with certs: var/run/secrets/istio/root-cert.pem

2022-04-28T20:34:11.140119Z info Opening status port 15020

2022-04-28T20:34:11.140510Z info citadelclient Citadel client using custom root cert: istiod.istio-system.svc:15012

2022-04-28T20:34:11.168819Z info ads All caches have been synced up in 61.101236ms, marking server ready

2022-04-28T20:34:11.169231Z info sds SDS server for workload certificates started, listening on "etc/istio/proxy/SDS"

2022-04-28T20:34:11.169253Z info xdsproxy Initializing with upstream address "istiod.istio-system.svc:15012" and cluster "Kubernetes"

2022-04-28T20:34:11.171267Z info Pilot SAN: [istiod.istio-system.svc]

2022-04-28T20:34:11.173499Z info Starting proxy agent

2022-04-28T20:34:11.173534Z info Epoch 0 starting

2022-04-28T20:34:11.173550Z info Envoy command: [-c etc/istio/proxy/envoy-rev0.json --restart-epoch 0 --drain-time-s 45 --drain-strategy immediate --parent-shutdown-time-s 60 --local-address-ip-version v4 --bootstrap-version 3 --file-flush-interval-msec 1000 --disable-hot-restart --log-format %Y-%m-%dT%T.%fZ %l envoy %n %v -l warning --component-log-level misc:error --concurrency 2]

2022-04-28T20:34:11.175140Z info sds Starting SDS grpc server

2022-04-28T20:34:11.175340Z info starting Http service at 127.0.0.1:15004

2022-04-28T20:34:11.278886Z info xdsproxy connected to upstream XDS server: istiod.istio-system.svc:15012

2022-04-28T20:34:11.341603Z info ads ADS: new connection for node:mariadb-1.cmanea-1

2022-04-28T20:34:11.342517Z info ads ADS: new connection for node:mariadb-1.cmanea-2

2022-04-28T20:34:11.600609Z info cache generated new workload certificate latency=431.381267ms ttl=23h59m59.399404475s

2022-04-28T20:34:11.600657Z info cache Root cert has changed, start rotating root cert

2022-04-28T20:34:11.600692Z info ads XDS: Incremental Pushing:0 ConnectedEndpoints:2 Version:

2022-04-28T20:34:11.600813Z info cache returned workload trust anchor from cache ttl=23h59m59.399190674s

2022-04-28T20:34:11.600827Z info cache returned workload trust anchor from cache ttl=23h59m59.399174174s

2022-04-28T20:34:11.601021Z info cache returned workload certificate from cache ttl=23h59m59.398987473s

2022-04-28T20:34:11.601238Z info ads SDS: PUSH request for node:mariadb-1.cmanea resources:1 size:1.1kB resource:ROOTCA

2022-04-28T20:34:11.601318Z info cache returned workload trust anchor from cache ttl=23h59m59.398684971s

2022-04-28T20:34:11.601379Z info ads SDS: PUSH for node:mariadb-1.cmanea resources:1 size:1.1kB resource:ROOTCA

2022-04-28T20:34:11.601241Z info ads SDS: PUSH request for node:mariadb-1.cmanea resources:1 size:4.0kB resource:default

[2022-04-28T20:34:11.927Z] "- - -" 0 - - - "-" 5 130 27 - "-" "-" "-" "-" "10.10.3.119:3306" outbound|3306||mariadb.cmanea.svc.cluster.local 10.10.1.152:60914 10.216.114.69:3306 10.10.1.152:58574 - -

[2022-04-28T20:34:12.003Z] "- - -" 0 UH - - "-" 0 0 7 - "-" "-" "-" "-" "-" outbound|4567||mariadb-2.cmanea.svc.cluster.local - 10.216.10.33:4567 10.10.1.152:50472 - -

2022-04-28T20:34:13.429735Z info Initialization took 2.320903835s

2022-04-28T20:34:13.429996Z info Envoy proxy is ready

[2022-04-28T20:34:14.656Z] "- - -" 0 NR filter_chain_not_found - "-" 0 0 0 - "-" "-" "-" "-" "-" - - 10.10.1.152:4444 10.10.3.119:48960 - -

[2022-04-28T20:34:12.004Z] "- - -" 0 - - - "-" 3037 4184 3693 - "-" "-" "-" "-" "10.10.3.119:4567" outbound|4567||mariadb-0.cmanea.svc.cluster.local 10.10.1.152:49860 10.216.225.145:4567 10.10.1.152:47238 - -

[2022-04-28T20:34:16.918Z] "- - -" 0 - - - "-" 5 130 5 - "-" "-" "-" "-" "10.10.3.119:3306" outbound|3306||mariadb.cmanea.svc.cluster.local 10.10.1.152:60986 10.216.114.69:3306 10.10.1.152:58646 - -

[2022-04-28T20:34:17.185Z] "- - -" 0 UH - - "-" 0 0 0 - "-" "-" "-" "-" "-" outbound|4567||mariadb-2.cmanea.svc.cluster.local - 10.216.10.33:4567 10.10.1.152:50544 - -

[2022-04-28T20:34:18.185Z] "- - -" 0 UH - - "-" 0 0 0 - "-" "-" "-" "-" "-" outbound|4567||mariadb-2.cmanea.svc.cluster.local - 10.216.10.33:4567 10.10.1.152:50562 - -

[2022-04-28T20:34:20.344Z] "- - -" 0 NR filter_chain_not_found - "-" 0 0 0 - "-" "-" "-" "-" "-" - - 10.10.1.152:4444 10.10.3.119:49028 - -

[2022-04-28T20:34:17.185Z] "- - -" 0 - - - "-" 3553 4424 4219 - "-" "-" "-" "-" "10.10.3.119:4567" outbound|4567||mariadb-0.cmanea.svc.cluster.local 10.10.1.152:49932 10.216.225.145:4567 10.10.1.152:47310 - -

[2022-04-28T20:34:34.637Z] "- - -" 0 - - - "-" 5 130 18 - "-" "-" "-" "-" "10.10.3.119:3306" outbound|3306||mariadb.cmanea.svc.cluster.local 10.10.1.152:32980 10.216.114.69:3306 10.10.1.152:58872 - -

[2022-04-28T20:34:34.814Z] "- - -" 0 UH - - "-" 0 0 0 - "-" "-" "-" "-" "-" outbound|4567||mariadb-2.cmanea.svc.cluster.local - 10.216.10.33:4567 10.10.1.152:50774 - -

IP addresses for easy identification:

- mariadb-0: pod 10.10.3.119, service 10.216.225.145
- mariadb-1: pod 10.10.1.152, service 10.216.94.132
- mariadb-2: pod 10.10.3.136, service 10.216.10.33
- mariadb service: 10.216.114.69

Version

kubectl version --short

Client Version: v1.19.4

Server Version: v1.20.15

istioctl version

client version: 1.11.3

control plane version: 1.11.3

data plane version: 1.11.3 (14 proxies)

Additional Information

No response

I'm having exactly the same behaviour.

- I enable Istio and no node can connect to the initial one. Furthermore, there are 0 traffic logs inside the `istio-proxy` containers.
- I disable Istio and all works just fine.

So currently I cannot use Istio, only because I am not able to start a Galera cluster. I also tried switching to another service mesh (Linkerd), and with that one everything worked from the start. I also found some docs on server-first protocols, so it seems I'm missing some hidden port-related config.

Yes, I've also found the docs on server-first protocols, and I've tried setting mTLS mode to STRICT, which does not work. Setting mTLS mode to DISABLE simply turns mTLS off, which is not what I want :)
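A middle ground between STRICT everywhere and DISABLE, useful at least for isolating the failing port, is a workload-scoped policy with a port-level override. The sketch below is an assumption, not a fix confirmed in this thread: it presumes the pods carry the `app: mariadb` label used by the Service selector, and it relaxes only port 4444, the port that shows `NR filter_chain_not_found` in the proxy log above. The policy name is hypothetical.

```yaml
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: mariadb-sst-permissive   # hypothetical name
spec:
  # portLevelMtls only takes effect when a workload selector is set.
  selector:
    matchLabels:
      app: mariadb               # assumed pod label, taken from the Service selector
  mtls:
    mode: STRICT                 # all other ports keep requiring mTLS
  portLevelMtls:
    4444:
      mode: PERMISSIVE           # rsync SST port accepts both mTLS and plaintext
```

If the cluster forms with this override in place, that would suggest the SST connection is reaching the joiner's sidecar as plaintext, which narrows the problem down without disabling mTLS for the whole namespace.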

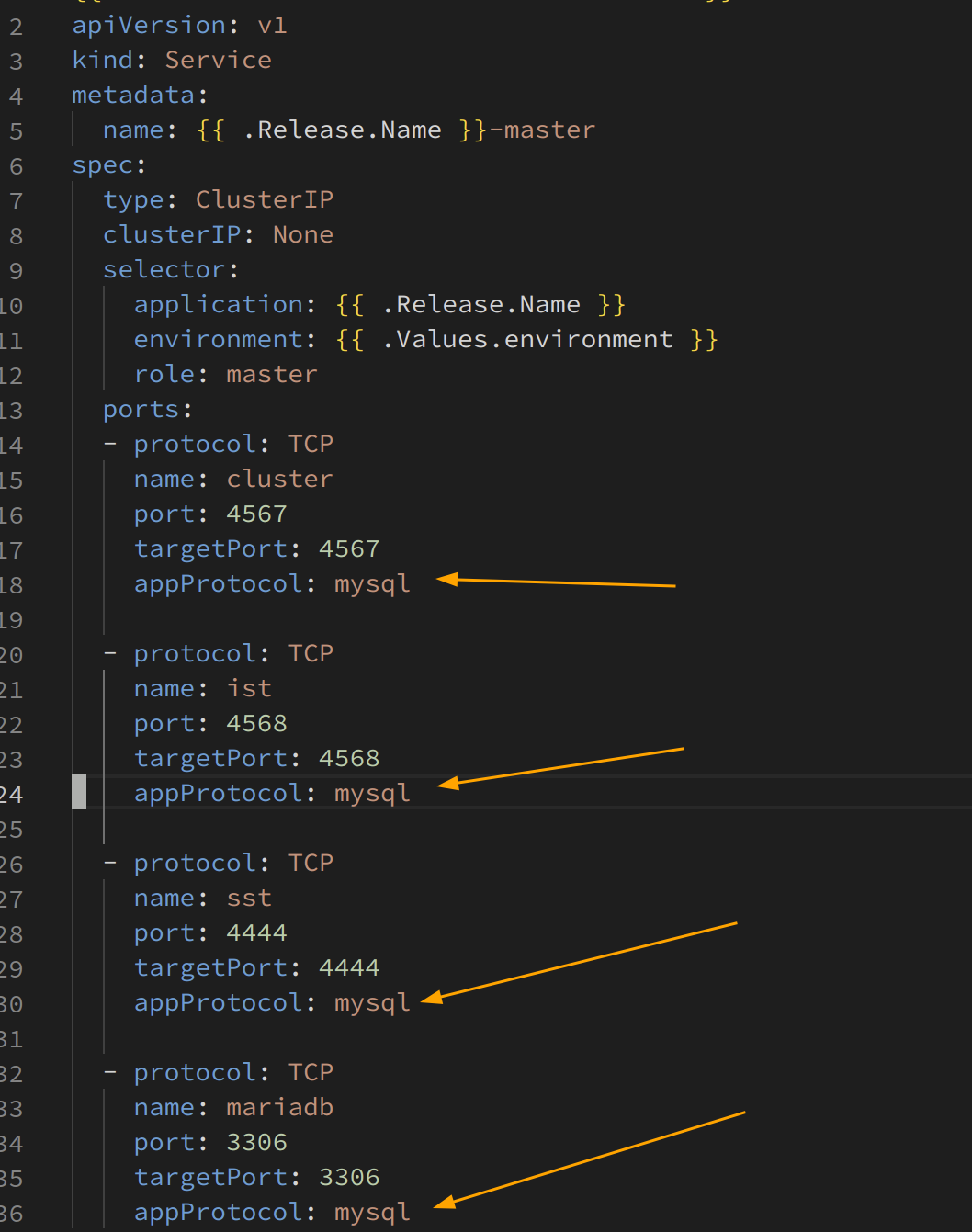

Ok, I figured it out. It's all related to the server-first protocol. To make it work you have to:

- Set the `PILOT_ENABLE_MYSQL_FILTER` env variable to `'1'` in your pods.
- Configure mutual TLS for all the traffic between your nodes.
- Set the `appProtocol` in your services to `mysql` as described here.
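As a rough illustration of the third bullet, here is one of the per-pod Services from the report with `appProtocol` added. The thread does not say which ports should be marked as `mysql`; applying it only to the client port 3306 is an assumption made for this sketch.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: mariadb-0
spec:
  type: ClusterIP
  ports:
  - name: tcp-mariadb
    port: 3306
    protocol: TCP
    targetPort: 3306
    appProtocol: mysql   # assumption: only the MySQL client port is marked
  - name: tcp-grp
    port: 4567
    protocol: TCP
    targetPort: 4567
  - name: tcp-ist
    port: 4568
    protocol: TCP
    targetPort: 4568
  - name: tcp-sst
    port: 4444
    protocol: TCP
    targetPort: 4444
  selector:
    statefulset.kubernetes.io/pod-name: mariadb-0
```

The environment variable from the first bullet is left out because the thread does not specify which container it was set on. Istio's protocol selection documentation describes the MySQL filter switch as a Pilot environment variable, so it may need to be set on istiod rather than (or in addition to) the workload pods; treat that as something to verify for your Istio version.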

What do you mean by the first 2 bullets?

Set the PILOT_ENABLE_MYSQL_FILTER env variable equals to '1' in your pods.

Where? In mariadb pods?

Configure mutual tls for all the traffic between your nodes.

How? With PeerAuthentication like below?

apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: strictauth-all
spec:
  mtls:
    mode: STRICT

Yes and yes.

🚧 This issue or pull request has been closed due to not having had activity from an Istio team member since 2022-04-28. If you feel this issue or pull request deserves attention, please reopen the issue. Please see this wiki page for more information. Thank you for your contributions.

Created by the issue and PR lifecycle manager.