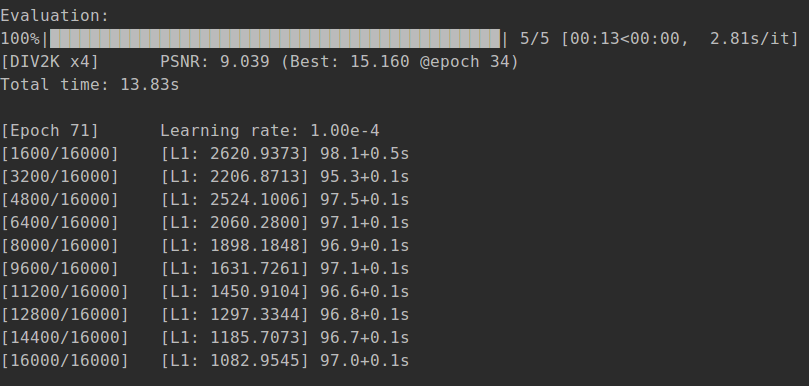

Training loss does not decrease

Hi, I tried to run the code, and it worked after I changed the torch version and some of the code. For instance, I commented out `plt.plot(axis, self.log[:, i].numpy(), label=label)` because it raised an error saying that the x and y dimensions do not match. During training, however, the loss is not decreasing but increasing (see the attached figure). I don't know what the problem is; maybe there is something wrong with the code? I would really appreciate your help.

I am experiencing a similar issue, and would add that the training loss is extremely high. Does the model directly predict the uint8 RGB values? If so, why was this found necessary, instead of keeping the output within the range [0, 1]?
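As a rough illustration of why the loss value looks so large on the [0, 255] scale: an L1 loss scales linearly with the pixel range, so the same prediction error reports a number 255 times larger than it would on [0, 1]. The tensors below are random stand-ins (an assumption for illustration, not data from this repository).

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Random stand-in "images" on the [0, 255] scale (illustrative only).
target_255 = torch.rand(1, 3, 8, 8) * 255.0
# A prediction that is off by a few intensity levels per pixel.
pred_255 = target_255 + torch.randn_like(target_255) * 5.0

# Same error measured on the two scales.
loss_255 = F.l1_loss(pred_255, target_255)
loss_unit = F.l1_loss(pred_255 / 255.0, target_255 / 255.0)

# L1 is linear in the scale, so the ratio is 255 up to float rounding.
ratio = (loss_255 / loss_unit).item()
print(loss_255.item(), loss_unit.item(), ratio)
```

A large absolute loss is therefore expected on this scale; a loss that keeps increasing is a separate symptom.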

From what I can tell they:

- apply meanshift by subtraction

- apply their model

- add the residual

- super resolve

- apply meanshift by addition

Input and output are both on the RGB scale [0, 255].
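The steps listed above can be sketched in PyTorch roughly as follows. This is a minimal sketch, not the repository's actual model: the class name `TinySR`, the layer sizes, and the RGB mean values are all hypothetical and chosen only to make the data flow visible.

```python
import torch
import torch.nn as nn


class TinySR(nn.Module):
    """Hypothetical toy network following the five steps described above."""

    def __init__(self, n_feats=16, scale=2):
        super().__init__()
        # Assumed per-channel RGB mean on the [0, 255] scale (illustrative values).
        mean = torch.tensor([114.0, 111.0, 103.0]).view(1, 3, 1, 1)
        self.register_buffer("rgb_mean", mean)
        self.head = nn.Conv2d(3, n_feats, 3, padding=1)
        self.body = nn.Sequential(
            nn.Conv2d(n_feats, n_feats, 3, padding=1),
            nn.ReLU(),
            nn.Conv2d(n_feats, n_feats, 3, padding=1),
        )
        # Upsampling by sub-pixel convolution (PixelShuffle).
        self.tail = nn.Sequential(
            nn.Conv2d(n_feats, 3 * scale ** 2, 3, padding=1),
            nn.PixelShuffle(scale),
        )

    def forward(self, x):
        x = x - self.rgb_mean      # 1. mean shift by subtraction
        x = self.head(x)
        x = x + self.body(x)       # 2-3. apply the model, add the residual
        x = self.tail(x)           # 4. super resolve
        return x + self.rgb_mean   # 5. mean shift by addition


lr = torch.rand(1, 3, 8, 8) * 255.0   # low-resolution input on [0, 255]
sr = TinySR()(lr)
print(sr.shape)
```

Because the mean is added back at the end, the output stays on the same [0, 255] scale as the input, and the loss is computed on that scale.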

I had the same problem: at a certain point in training, the loss became very large. Have you solved the problem yet?

In my case, the initializer was the problem. With the LeCunNormal initializer the loss became extremely large, but with the KaimingUniform initializer the loss converged properly.
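For anyone who wants to try the same thing in PyTorch, here is a minimal sketch of applying Kaiming uniform initialization to every convolution and linear layer. The helper name `init_kaiming_uniform` and the toy `net` are hypothetical; `nn.init.kaiming_uniform_` is the standard PyTorch call. The initializer names in the comment above may come from a different framework, so treat this as an approximate equivalent rather than the exact change that was made.

```python
import torch.nn as nn


def init_kaiming_uniform(model):
    """Re-initialize conv/linear weights with Kaiming uniform, biases with zeros."""
    for m in model.modules():
        if isinstance(m, (nn.Conv2d, nn.Linear)):
            nn.init.kaiming_uniform_(m.weight, nonlinearity="relu")
            if m.bias is not None:
                nn.init.zeros_(m.bias)
    return model


# Toy network standing in for the real model (assumption for illustration).
net = nn.Sequential(
    nn.Conv2d(3, 8, 3, padding=1),
    nn.ReLU(),
    nn.Conv2d(8, 3, 3, padding=1),
)
init_kaiming_uniform(net)
```

The call would go right after the model is constructed and before training starts. Note that loading a pre-trained state dict afterwards overwrites these weights, so the initializer only matters when training from scratch or for layers that are not covered by the checkpoint.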

Thank you very much. Could you please tell me where I should make the change? I actually use the pre-trained model to initialize it, but the results are very poor.

Could you please tell me which part of the code handles the initialization of the model?