Jeff Rasley

Hi @chrjxj, can you try changing `"stage": 1,` in your config json to `"stage": 2,`? I want to confirm whether your issue occurs with both stages of ZeRO. I am...

Actually @chrjxj can you set these both to false in your config? I suspect this will fix your issues.

```json
"contiguous_gradients": false,
"overlap_comm": false,
```
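For anyone applying this by script rather than by hand, here is a minimal sketch of the change, assuming both flags live under the `zero_optimization` section of the config alongside `stage`. The helper name `relax_zero_settings` and the example config values are hypothetical and only for illustration; they are not part of DeepSpeed.

```python
import copy
import json


def relax_zero_settings(config, stage=None):
    """Return a copy of a DeepSpeed-style config dict with both ZeRO flags off.

    Hypothetical helper for illustration; not a DeepSpeed API.
    """
    updated = copy.deepcopy(config)  # leave the caller's dict untouched
    zero = updated.setdefault("zero_optimization", {})
    zero["contiguous_gradients"] = False
    zero["overlap_comm"] = False
    if stage is not None:
        zero["stage"] = stage  # optionally switch the ZeRO stage as well
    return updated


# Placeholder config; the batch size is an assumed value.
original = {
    "train_batch_size": 8,
    "zero_optimization": {
        "stage": 1,
        "contiguous_gradients": True,
        "overlap_comm": True,
    },
}

patched = relax_zero_settings(original, stage=2)
print(json.dumps(patched, indent=2))
```

Working on a copy keeps the original config around, so it is easy to compare runs with and without the two flags.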

@chrjxj, can you provide the stack trace for the new error message?

@antoiloui, are you also seeing this error? Can you share the deepspeed version you are using and the stack trace? Did you also try turning off `contiguous_gradients` and `overlap_comm`?

Gotcha, I see. Thank you @antoiloui. What version of deepspeed are you running? Is it possible to provide a repro for this error that you're seeing?

@tomekrut are you able to sign the contributor license agreement? Would love to merge your contribution soon! :)

> Hi @jeffra, merge failed for test_pipe.py line 246, in _helper:
>
> ```
> assert rel_diff(base_avg, test_avg) < 0.03
> AssertionError: assert 0.03816467364302331 < 0.03
> + where 0.03816467364302331...
> ```

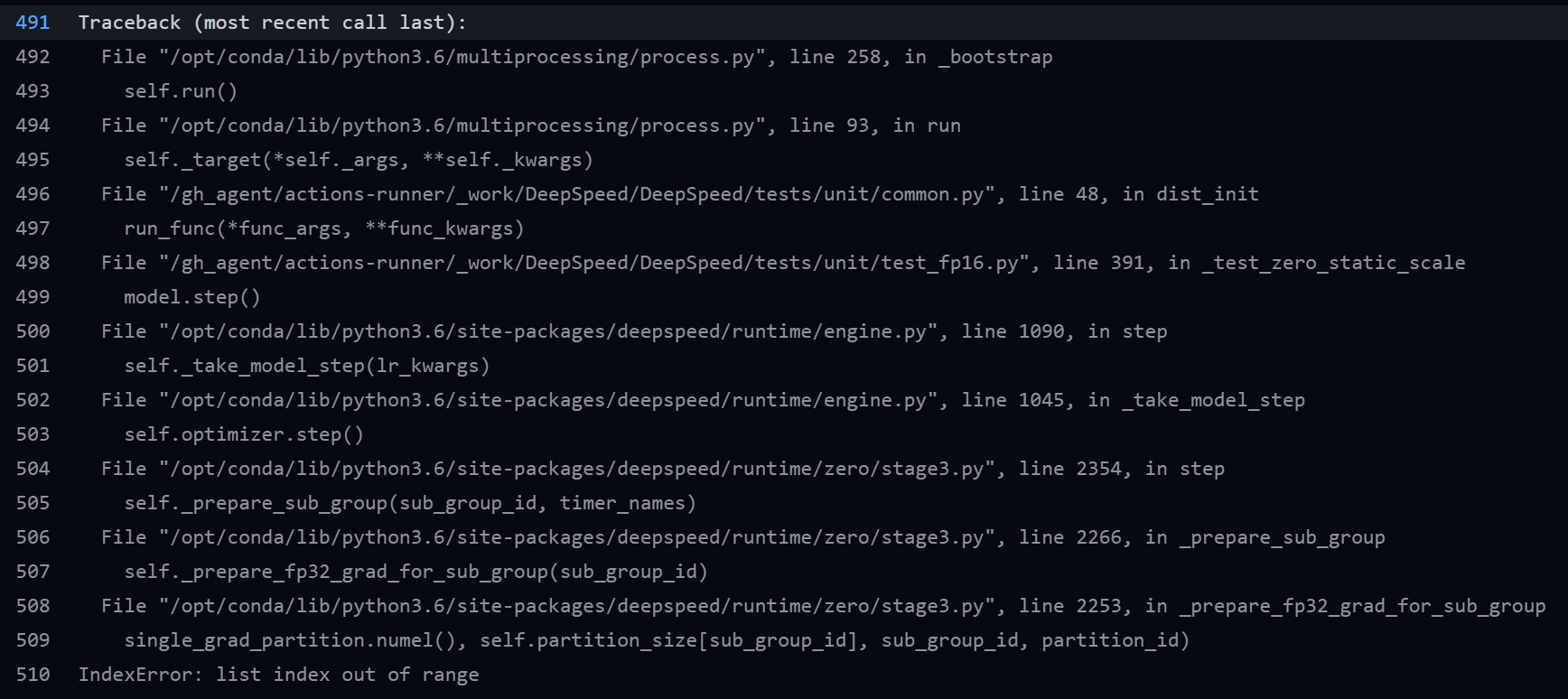

It appears this fixed the previous issue in one spot. However, now I am seeing the same error in a new assert: https://github.com/microsoft/DeepSpeed/blob/da71a8975d7387c903c32abd4ec0ff6f174980e0/deepspeed/runtime/zero/stage3.py#L2251-L2253

> I expected DeepSpeed calls `__getstate__` of the optimizer to be used, or state_dict() but neither worked for me. What methods of the optimizer do get called when one saves...

Thanks @stas00, I see the issue now. I think I know how we can address this, will give it a try. I was able to repro the issue with your...