missing Rust version of `from_pretrained`

Hi, it is not clear from the README files or the code what the Rust equivalent of the following Python code is.

from transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained('bert-base-cased')

Thanks in advance!

Here is my own attempt to reproduce the Python code in Rust, based on my study of the two projects.

use tokenizers::decoders::wordpiece::WordPiece as WordPieceDecoder;
use tokenizers::models::wordpiece::WordPiece;
use tokenizers::normalizers::bert::BertNormalizer;
use tokenizers::pre_tokenizers::bert::BertPreTokenizer;
use tokenizers::processors::bert::BertProcessing;
use tokenizers::tokenizer::{Result, Tokenizer};

fn main() -> Result<()> {
    // download from "https://s3.amazonaws.com/models.huggingface.co/bert/bert-base-cased-vocab.txt";
    let input_file = "./bert-base-cased-vocab.txt";
    let wordpiece_builder = WordPiece::from_files(input_file);
    let wordpiece = wordpiece_builder.build()?;

    let mut tokenizer = Tokenizer::new(Box::new(wordpiece));

    // Cased model, so the last argument (lowercase) is false.
    //tokenizer.with_normalizer(Box::new(BertNormalizer::default()));
    tokenizer.with_normalizer(Box::new(BertNormalizer::new(true, true, None, false)));
    tokenizer.with_pre_tokenizer(Box::new(BertPreTokenizer));
    tokenizer.with_decoder(Box::new(WordPieceDecoder::default()));

    // Look up the special-token ids and wrap every sequence as [CLS] ... [SEP].
    let sep = tokenizer.get_model().token_to_id("[SEP]").unwrap();
    let cls = tokenizer.get_model().token_to_id("[CLS]").unwrap();
    tokenizer.with_post_processor(Box::new(BertProcessing::new(
        (String::from("[SEP]"), sep),
        (String::from("[CLS]"), cls),
    )));

    let encoding = tokenizer.encode("I can feel the magic, can you?", true)?;
    println!("encoding length is {}", encoding.len());
    println!("{:?}", encoding.get_tokens());
    println!("{:?}", encoding.get_ids());

    Ok(())
}

Output:

encoding length is 11

["[CLS]", "I", "can", "feel", "the", "magic", ",", "can", "you", "?", "[SEP]"]

[101, 146, 1169, 1631, 1103, 3974, 117, 1169, 1128, 136, 102]

It would be great if someone could tell me whether this makes sense.
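
For intuition about what the pipeline above is doing, here is a minimal sketch that uses only the Rust standard library, not the tokenizers crate. It assumes a greedy longest-match-first WordPiece step, a very simplified whitespace/punctuation split, and `[CLS]`/`[SEP]` wrapping. The helpers `pre_tokenize`, `wordpiece`, `encode`, and `toy_vocab` are hypothetical names for illustration. The toy vocabulary holds only the ids printed in the output above, plus a `##ing` entry whose id is a made-up placeholder. The real normalizer and pre-tokenizer handle many more cases, and the real model emits an unknown token rather than failing.

```rust
use std::collections::HashMap;

/// Simplified stand-in for a BERT-style pre-tokenizer: split on whitespace
/// and make every ASCII punctuation character its own word.
fn pre_tokenize(text: &str) -> Vec<String> {
    let mut words = Vec::new();
    let mut current = String::new();
    for c in text.chars() {
        if c.is_whitespace() || c.is_ascii_punctuation() {
            if !current.is_empty() {
                words.push(std::mem::take(&mut current));
            }
            if c.is_ascii_punctuation() {
                words.push(c.to_string());
            }
        } else {
            current.push(c);
        }
    }
    if !current.is_empty() {
        words.push(current);
    }
    words
}

/// Greedy longest-match-first WordPiece for one word. Pieces after the first
/// carry the "##" continuation prefix. Returns None if some part of the word
/// has no match in the vocabulary (this sketch has no unknown token).
fn wordpiece(word: &str, vocab: &HashMap<&str, u32>) -> Option<Vec<(String, u32)>> {
    let chars: Vec<char> = word.chars().collect();
    let mut pieces = Vec::new();
    let mut start = 0;
    while start < chars.len() {
        let mut end = chars.len();
        let mut found = None;
        while end > start {
            let mut candidate: String = chars[start..end].iter().collect();
            if start > 0 {
                candidate = format!("##{}", candidate);
            }
            if let Some(&id) = vocab.get(candidate.as_str()) {
                found = Some((candidate, id));
                break;
            }
            end -= 1;
        }
        pieces.push(found?);
        start = end;
    }
    Some(pieces)
}

/// Pre-tokenize, run WordPiece on each word, then wrap as [CLS] ... [SEP].
fn encode(text: &str, vocab: &HashMap<&str, u32>) -> Option<(Vec<String>, Vec<u32>)> {
    let cls = *vocab.get("[CLS]")?;
    let sep = *vocab.get("[SEP]")?;
    let mut tokens = vec!["[CLS]".to_string()];
    let mut ids = vec![cls];
    for word in pre_tokenize(text) {
        for (piece, id) in wordpiece(&word, vocab)? {
            tokens.push(piece);
            ids.push(id);
        }
    }
    tokens.push("[SEP]".to_string());
    ids.push(sep);
    Some((tokens, ids))
}

/// Toy vocabulary: ids copied from the output above, except "##ing",
/// whose id (90000) is a placeholder and not taken from the real vocab file.
fn toy_vocab() -> HashMap<&'static str, u32> {
    HashMap::from([
        ("[CLS]", 101), ("[SEP]", 102), ("I", 146), ("can", 1169),
        ("feel", 1631), ("the", 1103), ("magic", 3974), (",", 117),
        ("you", 1128), ("?", 136), ("##ing", 90000),
    ])
}

fn main() {
    let vocab = toy_vocab();
    let (tokens, ids) = encode("I can feel the magic, can you?", &vocab).unwrap();
    println!("{:?}", tokens);
    println!("{:?}", ids);
}
```

With this toy vocabulary, the sentence from the post yields the same 11 ids as the output above, and a word such as "feeling" is split into "feel" and "##ing".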

As far as I can tell, there is no `from_pretrained` method: I found nothing when I searched the source code in the tokenizers directory.

Hi @jacklanda,

How did you check? https://github.com/huggingface/tokenizers/search?p=2&q=pretrained

Also, to use this, you need to build from source.

I used the command `find . | xargs grep -ri` to search for the pattern I wanted (here, `from_pretrained`).

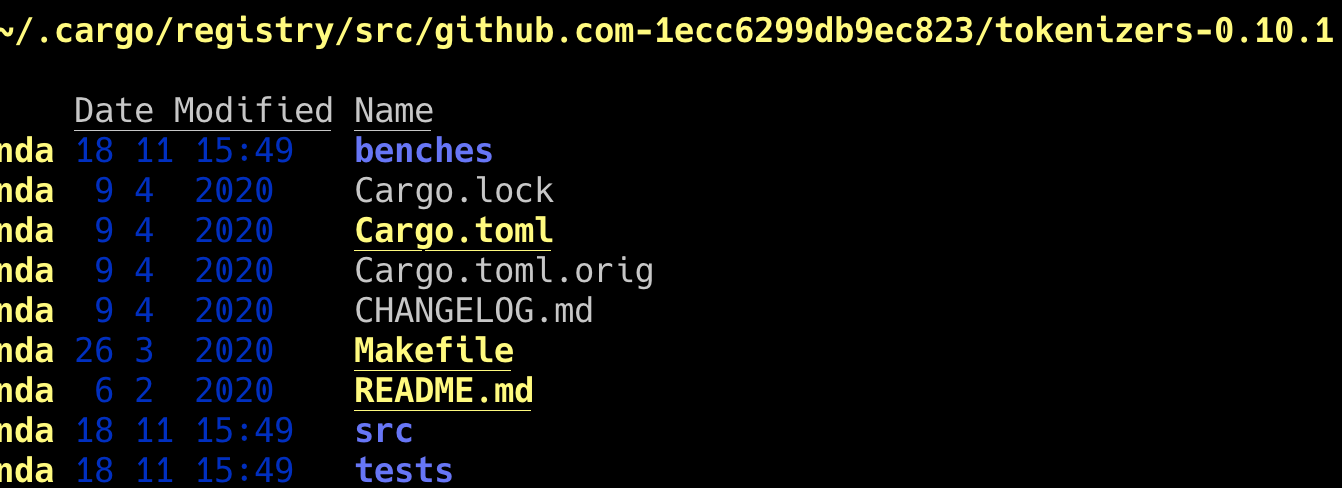

I ran the search in the cache directory shown in the image below:

However, it seems that the directory I searched is not complete, right? It is missing many of the files that show up at this URL: https://github.com/huggingface/tokenizers/search?p=2&q=pretrained

If so, I obviously drew the wrong conclusion :(

You are using the latest version published on crates.io, which is not master.

This hasn't been released yet.

Got it, thanks for correcting my misunderstanding 👍🏻

Support for `from_pretrained` appears to have been dropped in the latest version of Rust tokenizers (0.14.1), whereas it was supported in version 0.13.3. See below:

I didn't see a note in the release notes about support for `from_pretrained` being dropped, so I figured I would raise the issue here. I also verified that `from_pretrained` calls compile and run successfully with version 0.13.3 and fail with version 0.14.1 when using tokenizers from crates.io.

Update: after reviewing the Tokenizer implementation on the 0.14.1 branch, I found that you need to enable the `http` feature when installing via cargo add to get support for `from_pretrained`: `cargo add tokenizers@0.14.1 --features http`.