Pushing a single image with multiple tags stores two artifacts with different SHA digests instead of one artifact

In my CI build pipeline, i.e., Jenkinsfile, I have the following commands:

podman build -t croupier-service:${ASSEMBLY_VERSION} .

podman tag croupier-service:${ASSEMBLY_VERSION} x.y.z.comp/pmt/croupier-service:one

podman tag croupier-service:${ASSEMBLY_VERSION} x.y.z.comp/pmt/croupier-service:${ASSEMBLY_VERSION}

podman tag croupier-service:${ASSEMBLY_VERSION} x.y.z.comp/pmt/croupier-service:${APP_VERSION}-nightly

podman tag croupier-service:${ASSEMBLY_VERSION} x.y.z.comp/pmt/croupier-service:two

podman tag croupier-service:${ASSEMBLY_VERSION} x.y.z.comp/pmt/croupier-service:three

podman push x.y.z.comp/pmt/croupier-service:one

podman push x.y.z.comp/pmt/croupier-service:${ASSEMBLY_VERSION}

podman push x.y.z.comp/pmt/croupier-service:${APP_VERSION}-nightly

podman push x.y.z.comp/pmt/croupier-service:two

podman push x.y.z.comp/pmt/croupier-service:three

Expected behavior: Harbor stores one single artifact with five tags.

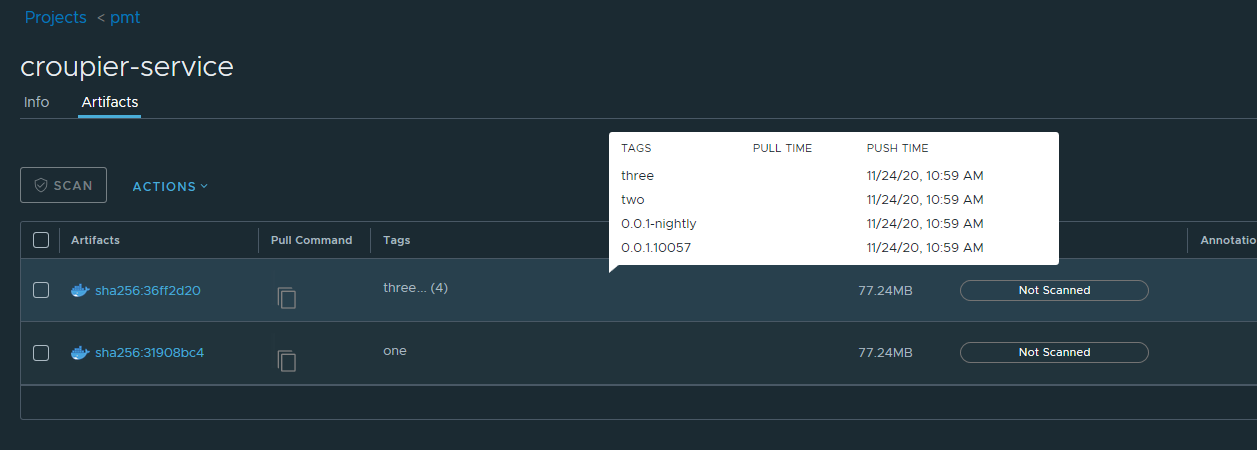

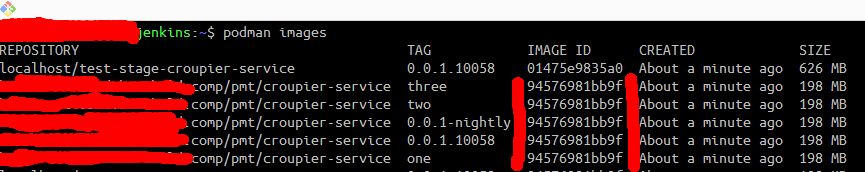

Actual behavior: Harbor stores two artifacts with two different SHA digests but the same image ID; see screenshots.

The tag croupier-service:${ASSEMBLY_VERSION} seems to be treated differently, although it belongs to the same image ID. Why is that? It doesn't make sense to me, since it was added and pushed between, e.g., the tags one and two, but still ends up as a separate artifact in Harbor.

Versions:

- harbor version: 2.1.1

- docker engine version: 19.03.13

- docker-compose version: 1.27.4

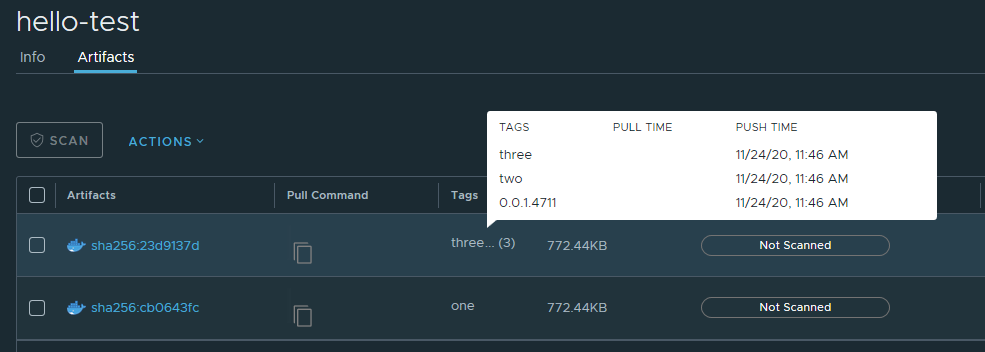

In order to reproduce the problem, run this:

HELLO_VERSION=0.0.1.4711

podman build -t hello-test:${HELLO_VERSION} .

podman run hello-test:${HELLO_VERSION}

podman tag hello-test:${HELLO_VERSION} x.y.z.comp/myproject/hello-test:one

podman tag hello-test:${HELLO_VERSION} x.y.z.comp/myproject/hello-test:${HELLO_VERSION}

podman tag hello-test:${HELLO_VERSION} x.y.z.comp/myproject/hello-test:two

podman tag hello-test:${HELLO_VERSION} x.y.z.comp/myproject/hello-test:three

podman push x.y.z.comp/myproject/hello-test:one

podman push x.y.z.comp/myproject/hello-test:${HELLO_VERSION}

podman push x.y.z.comp/myproject/hello-test:two

podman push x.y.z.comp/myproject/hello-test:three

with a Dockerfile containing this:

FROM busybox:1.32.0

ENTRYPOINT ["echo", "Hello Test!"]

ending up with this:

Alternatively, use docker instead of podman. The same problem occurs.

So that means xxxx/hello-test:one stored in Harbor has a different digest from other tags.

Did you have a chance to try?

You can pull the manifest of different tags and compare.

@reasonerjt thanks for your answer. What do you mean by manifest and how would I pull and compare these?

Do you mean the podman inspect command? Right now, I cannot reproduce the problem anymore. It only occurs in production. I will try and have a look.
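One way to pull and compare the manifests, as a minimal sketch: a registry digest is the SHA-256 of the manifest bytes, so fetching the raw manifest for each tag and hashing it shows whether the tags really point at different manifests. The sketch assumes `skopeo` is installed and reuses the repository path from the reproduction steps; `manifest_digest` is a hypothetical helper name, not part of any tool.

```shell
#!/bin/sh
# Hypothetical helper: read manifest bytes on stdin and print their
# digest in the "sha256:<hex>" form that registries report.
manifest_digest() {
  printf 'sha256:%s\n' "$(sha256sum | cut -d ' ' -f 1)"
}

# Assumed usage (needs skopeo, network access, and registry credentials);
# the repository path is the one from the reproduction steps:
#   for tag in one two three; do
#     printf '%s ' "$tag"
#     skopeo inspect --raw "docker://x.y.z.comp/myproject/hello-test:${tag}" | manifest_digest
#   done
```

If two tags print different digests, saving the two raw manifests to files and running `diff` on them should show which field differs.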

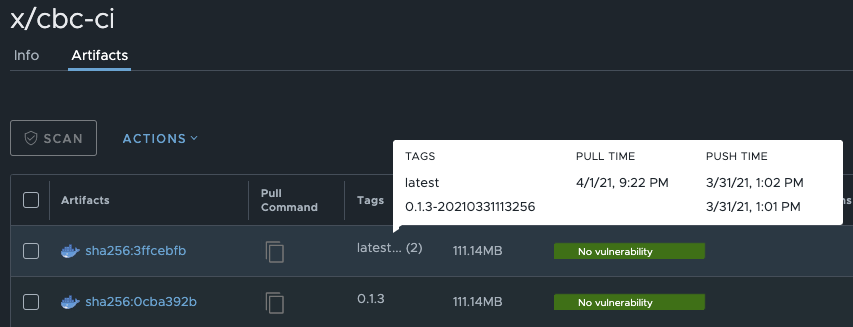

I'm seeing this as well on Harbor v2.0.1-d714b3ea. Pulling both images down shows no difference in their manifests:

> podman images | grep cbc-ci

harbor.example.com/arusso/x/cbc-ci 0.1.3 0495fc27e8b5 34 hours ago 321 MB

harbor.example.com/arusso/x/cbc-ci latest 0495fc27e8b5 34 hours ago 321 MB

> podman rmi 0495fc -f

Untagged: harbor.example.com/arusso/x/cbc-ci:0.1.3

Untagged: harbor.example.com/arusso/x/cbc-ci:latest

Deleted: 0495fc27e8b5dc3e4a20d96b98956145c4c43e15690c8cc00686142565a70d8f

> podman pull arusso/x/cbc-ci:latest

Trying to pull harbor.example.com/arusso/x/cbc-ci:latest...

Getting image source signatures

Copying blob 8b78e2066549 done

Copying config 0495fc27e8 done

Writing manifest to image destination

Storing signatures

0495fc27e8b5dc3e4a20d96b98956145c4c43e15690c8cc00686142565a70d8f

> podman pull arusso/x/cbc-ci:0.1.3

Trying to pull harbor.example.com/arusso/x/cbc-ci:0.1.3...

Getting image source signatures

Copying blob 8b78e2066549 [--------------------------------------] 0.0b / 0.0b

Copying config 0495fc27e8 done

Writing manifest to image destination

Storing signatures

0495fc27e8b5dc3e4a20d96b98956145c4c43e15690c8cc00686142565a70d8f

> podman images | grep cbc-ci

harbor.example.com/arusso/x/cbc-ci 0.1.3 0495fc27e8b5 34 hours ago 321 MB

harbor.example.com/arusso/x/cbc-ci latest 0495fc27e8b5 34 hours ago 321 MB

> diff <(podman inspect cbc-ci:latest) <(podman inspect cbc-ci:0.1.3)

>

Meanwhile, on Harbor itself:

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.

This is still an issue, although I have been ignoring it on our server due to other, more important DevOps work.

We have the same issue: same image, identical manifest, but a different sha256 on Harbor (we are on version 2.2.2).
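A note on terminology may help when comparing: the image ID shown by `podman images` is the digest of the image config, while the sha256 a registry shows for an artifact is the digest of the manifest, which also lists the layer blobs as they were uploaded. Byte-identical manifests always hash to the same digest, so two artifacts with different digests must differ somewhere in their manifests, even when the image ID matches. The sketch below illustrates this with two made-up, heavily simplified manifests (the placeholder digests `sha256:aaaa`, `sha256:1111`, and `sha256:2222` are not real, and these are not complete OCI documents). Whether a differing layer entry is what happens in the reports above is an assumption, not something established in this thread.

```shell
#!/bin/sh
# Two simplified, made-up manifests: same config digest (same image ID),
# different layer digest (assumed here purely for illustration).
manifest_a='{"config":{"digest":"sha256:aaaa"},"layers":[{"digest":"sha256:1111"}]}'
manifest_b='{"config":{"digest":"sha256:aaaa"},"layers":[{"digest":"sha256:2222"}]}'

# Hash the exact bytes of a manifest string.
digest_of() {
  printf '%s' "$1" | sha256sum | cut -d ' ' -f 1
}

digest_a=$(digest_of "$manifest_a")
digest_b=$(digest_of "$manifest_b")

# A registry keys artifacts by manifest digest, so differing digests
# mean two artifacts even though the config digest is shared.
if [ "$digest_a" = "$digest_b" ]; then
  echo "same artifact"
else
  echo "different artifacts"
fi
```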

@CurryWurry is this still an issue with you? I haven't had time to investigate further myself.

@svdHero We haven't had the issue for a while now. We don't really push the same image twice that often, so it's kind of a corner case anyways.

This issue is being marked stale due to a period of inactivity. If this issue is still relevant, please comment or remove the stale label. Otherwise, this issue will close in 30 days.

This causes some (minor) problems for us; it would be great to get it resolved.

+1 for this issue. I'm building and pushing one image to Harbor with the latest tag.

Step by step:

- docker build -t docker_registry/project/image_name:latest .

- docker push docker_registry/project/image_name:latest

- docker build -t docker_registry/project/image_name:latest . (the same VM, the same Dockerfile, local cache is used. Please don't ask why I build the same image twice. I know it doesn't make sense. It needs bigger changes and I am going to improve that later)

- docker push docker_registry/project/image_name:latest

Expected behavior: one image on Harbor with the tag latest.

Actual behavior: two artifacts on Harbor, the newer one with the latest tag and the older one without any tag.

I thought that using the docker inline cache would help, but it doesn't. And the worst part is that sometimes it works as expected.

Is it possible that if Harbor is overloaded (pushing ~60 images at the same time from 5 VMs), it cannot use the cache properly? So far, I have only noticed the problem when I push all images at the same time, and I cannot reproduce it when I build only one image. All VMs are configured the same way. And when I pull those two artifacts, they have the same manifest.

This issue was closed because it has been stalled for 30 days with no activity. If this issue is still relevant, please re-open a new issue.