Playbooks terminated unexpectedly after 4 hours

Please confirm the following

- [X] I agree to follow this project's code of conduct.

- [X] I have checked the current issues for duplicates.

- [X] I understand that AWX is open source software provided for free and that I might not receive a timely response.

Summary

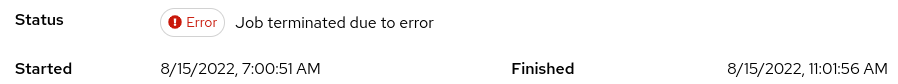

Playbooks running longer than 4 hours are terminated unexpectedly. The Jobs finish with error state in GUI. The exit code "137" Same issue : closed without resolution. Tested on versions: AWX 19.4.0 and AWX 20.0.0

AWX version

20.0.0

Select the relevant components

- [ ] UI

- [x] API

- [ ] Docs

Installation method

kubernetes

Modifications

yes

Ansible version

core 2.13.0.dev0

Operating system

CentOS8

Web browser

Chrome

Steps to reproduce

-

hosts: all gather_facts: no

tasks:

- name: Run Job shell: | while(1){ Write-Output "." start-sleep -seconds 1800 } args: executable: /usr/bin/pwsh async: 43200 poll: 900 register: pwsh_output_job ignore_errors: true

Expected results

Playbook completes successfully

Actual results

Container running the job is terminated after running for 4 hours

Additional information

exitCode: 137

Anything new in this topic?

Hi, we are also seeing this issue on K3s using AWX 19.5.0 and 21.0.0. A few things we observed looking at this:

- Jobs fail after the last task over the 4 hour mark has completed

- for example a wait_for task with a timeout of 8 hours causes the job to fail with error after 8 hours, a 5 hour task fails after 5 hours

- The limit seems to be pretty much exactly 4 hours as jobs running 3h 50min complete successfully

- Jobs are not continued in the background as the Pod is deleted instantly after the job errors

- Also happens with job timeout set in the job template

@spireob maybe you can check below

- https://github.com/ansible/awx/issues/9961

- https://github.com/ansible/awx/issues/11338 it could be related to that issues.

I've the same issue of 4hrs jobs end with errors (in k3s)

We also encounter an issue like this regularly. It seems awx-task just decides that the job is done and kills the pod.

This is what i find in the awx-task log:

2022-05-19 13:20:17,503 INFO [3abf5855276042c595518de57f670161] awx.main.commands.run_callback_receiver Event processing is finished for Job 15161, sending notifications

2022-05-19 13:20:17,503 INFO [3abf5855276042c595518de57f670161] awx.main.commands.run_callback_receiver Event processing is finished for Job 15161, sending notifications

2022-05-19 13:20:18,107 DEBUG [3abf5855276042c595518de57f670161] awx.main.tasks.jobs job 15161 (running) finished running, producing 382 events.

2022-05-19 13:20:18,107 DEBUG [3abf5855276042c595518de57f670161] awx.main.dispatch task c950003b-4c05-49d1-9b45-43e671098931 starting awx.main.tasks.system.handle_success_and_failure_notifications(*[15161])

2022-05-19 13:20:18,109 DEBUG [3abf5855276042c595518de57f670161] awx.analytics.job_lifecycle job-15161 post run

2022-05-19 13:20:18,238 DEBUG [3abf5855276042c595518de57f670161] awx.analytics.job_lifecycle job-15161 finalize run

2022-05-19 13:20:18,342 WARNING [3abf5855276042c595518de57f670161] awx.main.dispatch job 15161 (error) encountered an error (rc=None), please see task stdout for details.

2022-05-19 13:20:18,345 DEBUG [3abf5855276042c595518de57f670161] awx.main.tasks.system Executing error task id ecbb37f9-809d-4317-9d01-af93846de8d6, subtasks: [{'type': 'job', 'id': 15161}]

All the while the task output just says "canceled". If there is anything i can help analyse this please tell me what to do.

It is not related to the linked issues above.

*edit: sorry, missing data about the system

AWX: 21.0.0 running on K3S v1.23.6

exactly same issue

can confirm that this also happens on RedHat Ansible Automation Platform 2.1 on OpenShift 4.8

can confirm that this also happens on RedHat Ansible Automation Platform 2.1 on OpenShift 4.8

Have you opened a case to RedHat?

can confirm that this also happens on RedHat Ansible Automation Platform 2.1 on OpenShift 4.8

Have you opened a case to RedHat?

yes but no news yet

can confirm that this also happens on RedHat Ansible Automation Platform 2.1 on OpenShift 4.8

Have you opened a case to RedHat?

yes but no news yet

Okay, could you please keep us posted on this case status? Also there should be SLA for paid subscription. Issue is quite critical as for me.

Okay, could you please keep us posted on this case status? Also there should be SLA for paid subscription. Issue is quite critical as for me.

Will do. In the meantime we could largely work around the issue by splitting the job into multiple separate jobs connected via workflow.

I got a similar problem today, after four hours the task fell.

@spireob maybe you can check below

https://github.com/ansible/awx/issues/9961

https://github.com/ansible/awx/issues/11338

it could be related to that issues.

I've the same issue of 4hrs jobs end with errors (in k3s)

For my installation I don't believe it's k3s related as I have a 500 Mb limit for logs. More than that I don't even see log files created under /var/log/pods/, just empty folders.

Also I'm using custom EE built with ansible 2.9 as suggested in some @AlanCoding repo, so I believe the issue is not related to ansible-runner, but related to awx-task that seems like have some timeout for waiting output from a task.

Same issue on AWX: 21.0.0 running on K3S v1.23.6 :( any workaround to this problem?

same problem with awx 21.1.0 & k3s v1.21.7+k3s1 for now, where I "know" that the last task ends like it should, I re-run the playbook with the remaining tags only

well, I can only assume that the last task ended without error, as I don't see an "ok", "changed" or "failed"....

Okay, could you please keep us posted on this case status? Also there should be SLA for paid subscription. Issue is quite critical as for me.

Will do. In the meantime we could largely work around the issue by splitting the job into multiple separate jobs connected via workflow.

@meis4h Is there any news from support?

Does this problem occur in the paid version and there is no solution? There are few people working more than 4 hours?

We are also having this issue running the latest AWX, k3s, and docker backend. Container log size is set to 500mb and allowed to have 4 files. (Single log file is generated and gets nowhere near 500mb)

This is a pretty big show stopper for long running maintenance playbooks.

Can we get an update on this? This issue has been open since February and i've seen numerous closed issues with the same problem.

We are also having this issue running the latest AWX, k3s, and docker backend. Container log size is set to 500mb and allowed to have 4 files. (Single log file is generated and gets nowhere near 500mb)

This is a pretty big show stopper for long running maintenance playbooks.

Can we get an update on this? This issue has been open since February and i've seen numerous closed issues with the same problem.

same here

We have exactly the same issue.

Okay, could you please keep us posted on this case status? Also there should be SLA for paid subscription. Issue is quite critical as for me.

Will do. In the meantime we could largely work around the issue by splitting the job into multiple separate jobs connected via workflow.

@meis4h Is there any news from support?

@meis4h could you please update?

Also lets probably call active developers like @AlanCoding 😅

@adpavlov sadly there is nothing new to report 😕

exactly same issue

exactly same issue

Same issue here. Upgraded to the latest AWX version this morning but it still occurs. Use case is running restic prune commands which take a long time to complete.

Env:

- on-prem k8s 1.23.5

- awx operator 0.28.0

- awx 21.5.0

Same here. Does the apparent 4h limit affect the entire job template, or just a single task? Maybe using async tasks would be a way around here? What is bad is that the automation pod gets removed with all information what has happened, and the task container just throws EOF handler like with normal job end:

2022-09-07 17:14:27,661 INFO [3c69d3938c974b689cc1cdb49acadf04] awx.main.commands.run_callback_receiver Starting EOF event processing for Job 2076

2022-09-07 17:14:27,661 INFO [3c69d3938c974b689cc1cdb49acadf04] awx.main.commands.run_callback_receiver Starting EOF event processing for Job 2076

2022-09-07 17:14:28,591 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.tasks.jobs job 2076 (running) finished running, producing 80 events.

2022-09-07 17:14:28,595 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.analytics.job_lifecycle job-2076 post run

2022-09-07 17:14:28,937 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.analytics.job_lifecycle job-2076 finalize run

2022-09-07 17:14:28,957 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.dispatch task f1c62c03-fdca-45d8-8363-a66710f19910 starting awx.main.tasks.system.update_inventory_computed_fields(*[3])

2022-09-07 17:14:28,977 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.models.inventory Going to update inventory computed fields, pk=3

2022-09-07 17:14:28,999 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.models.inventory Finished updating inventory computed fields, pk=3, in 0.022 seconds

2022-09-07 17:14:29,144 WARNING [3c69d3938c974b689cc1cdb49acadf04] awx.main.dispatch job 2076 (error) encountered an error (rc=None), please see task stdout for details.

2022-09-07 17:14:29,200 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.dispatch task 4671db40-b07f-47e5-8810-bfb76ee45d8d starting awx.main.tasks.system.handle_work_error(*['4671db40-b07f-47e5-8810-bfb76ee45d8d'])

2022-09-07 17:14:29,201 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.tasks.system Executing error task id 4671db40-b07f-47e5-8810-bfb76ee45d8d, subtasks: [{'type': 'job', 'id': 2076}]

2022-09-07 17:14:29,224 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.dispatch task 4671db40-b07f-47e5-8810-bfb76ee45d8d starting awx.main.tasks.system.handle_work_success(*[])

2022-09-07 17:14:29,224 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.dispatch task aefe7820-cf95-4baf-9cfe-f25cf5d5cde4 starting awx.main.scheduler.tasks.run_task_manager(*[])

2022-09-07 17:14:29,224 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.scheduler Running task manager.

2022-09-07 17:14:29,238 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.scheduler Starting Scheduler

2022-09-07 17:14:29,248 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.dispatch task 83ec5dcb-f7c1-4fdc-829e-2bfb1c72e34b starting awx.main.scheduler.tasks.run_task_manager(*[])

2022-09-07 17:14:29,248 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.scheduler Running task manager.

2022-09-07 17:14:29,262 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.scheduler Not running scheduler, another task holds lock

2022-09-07 17:14:29,272 DEBUG [3c69d3938c974b689cc1cdb49acadf04] awx.main.scheduler Finishing Scheduler

This does not differ from normal job termination due to task error. The thing is, that last task that took so long has no json nor output available. What is weird though, the long running task performed its action and AWX could just run next task. So startint with start-at-task would allow to continue here. I wonder where does the time limit hit.

Well, async tasks did not help at all... 4:01 and job template fails. Seems that the automation container gets just terminated, but I would not blame k8s for that, since there are many different distributions (i use k3d) and all fail precisely after 4h. My last job with async_status poll every 5 minutes terminated at exact 4:01:22 after 7 retries - could not perform next retry.... I will try to trace that from inside automation pod as well as from k8s perspective... check also source code for that... this is really weird.

Hmm - I quess I found the reason - kubelet by default terminates streaming connections after 4h. Streaming automation pod logs is a streaming connection. See https://kubernetes.io/docs/reference/command-line-tools-reference/kubelet/ and search for "--streaming-connection-idle-timeout". I once again checked all messages in this Issue, and everyone has a variant of k3s / k3d. This options seems to only work for docker backend. K3d uses containerd-shim-runc-v2 and I have found an Issue for containerd exactly regarding this https://github.com/containerd/cri/issues/1057 One might try to increase this timeout somehow... maybe this will be a workaround... But a permanent solution would be to reimplement AWX part that uses those streaming connections to re-try closed ones instead of assuming that the connection got closed == container finished its work. I cannot track that part in the source code :/

I would call you a genius:) However, I set this timeout of k8s to 0 (meaning no timeout) in my k3s installation and pod survived 4 hours 27 minutes! But still resulted in Error...

Sadly yes, as k3s with containerio backend seems to ignore this parameter and you would need to switch to docker backend somehow. I would also if I only knew how, and adding --docker to kubelet arguments what should do the trick does not give any usefull effect besides log entries that this is legacy and will be ignored (used --kubelet-arg=docker@server:0 actually)

See also https://github.com/k3s-io/k3s/issues/1936 ...

But as already written - the problem is not that kubelet/k3s do not support removing this timeout, the main Problem is, that AWX does not try to re-connect to logs output after the stream gets closed. There should be for sure a retry mechanism and proper verification if the pod just disconnected or is really done and closed. I hope this will be fixed with this Issue. All other options are just vague workarounds... Interestingly this seems also to be the problem with the commercial AWX version, or can it handle jobs running longer than 4h on k3s/k3d with crio backend?

I'm using docker backend actually and passed --kubelet-arg=streaming-connection-idle-timeout=0 to k3s, but still no luck