_tkinter.TclError: no display name and no $DISPLAY environment variable

When I try to run demo_tiny_resnet101.sh, it shows this error. I added the following lines to demo_net.py, but I still get the error.

import matplotlib

matplotlib.use('Agg')

The error message

Hi,

Be sure to add the line before import matplotlib.pyplot as plt

Like this:

import matplotlib

matplotlib.use('Agg')

import matplotlib.pyplot as plt

That way the backend setting takes effect before pyplot is loaded.
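A minimal sketch of the ordering described above, assuming a headless machine with no X server. The helper names `pick_backend` and `save_demo_plot` and the output filename are hypothetical; `matplotlib.use` is the real call. The plotting function needs matplotlib installed and is only defined here, not executed.

```python
import os


def pick_backend(environ):
    """Return 'Agg' when no X display is set, otherwise None (keep the default)."""
    return None if environ.get("DISPLAY") else "Agg"


def save_demo_plot(path="demo_output.png"):
    """Select the backend first, then import pyplot and save a figure to disk."""
    import matplotlib

    backend = pick_backend(os.environ)
    if backend is not None:
        # Call this before pyplot is imported by this or any other module.
        matplotlib.use(backend)

    import matplotlib.pyplot as plt

    fig, ax = plt.subplots()
    ax.plot([0, 1, 2], [0, 1, 4])
    fig.savefig(path)  # write to a file instead of calling plt.show()
    plt.close(fig)
    return path
```

If the error persists, one possible cause is that another module imported earlier already pulls in pyplot. Setting the `MPLBACKEND=Agg` environment variable before launching the script is another way to select the backend without editing the code.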

I have added those lines, but I still get the same error. However, I can run tiny_resnet101_wider_train.sh and tiny_resnet101_eval.sh successfully. They create a directory named pred, where I can see different folders from WIDER_face. Are these images from WIDER_val? They don't match the WIDER_val_bbx_gt list. I don't understand how the evaluation is done.

Wider_val_bbx_gt corresponding value

Hi,

Perhaps try adding those lines at the very top of demo.py.

If it still doesn't work, a temporary workaround is to set DRAW_SCORE_COLORBAR in tiny_resnet101.yml to False. The visualization output will then use the cv2 engine instead, but it will not include a colorbar.

As for the 'pred' directory generated by tiny_resnet101_wider_train.sh, the text files in that directory follow the format expected by the WIDER-face dataset evaluation code. You need to use the eval_tool code to inspect the result.
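To look inside one of the text files in pred without the MATLAB tool, a small parser may help. This sketch assumes the layout the WIDER FACE site describes for detection output: the image name on the first line, the number of detections on the second, then one `left top width height score` line per detection. The function name `parse_pred_text` and the sample values are hypothetical, and the files written by this repository may differ in detail.

```python
def parse_pred_text(text):
    """Parse one WIDER-style prediction file into (image_name, detections)."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    image_name = lines[0]
    count = int(lines[1])
    detections = []
    for ln in lines[2:2 + count]:
        # Each detection line: left, top, width, height, confidence score.
        left, top, width, height, score = (float(v) for v in ln.split()[:5])
        detections.append((left, top, width, height, score))
    return image_name, detections


# Illustrative sample only; not taken from a real prediction file.
sample = """0_Parade_marchingband_1_20
2
10 20 30 40 0.98
50.5 60.5 15 18 0.42
"""
name, dets = parse_pred_text(sample)
print(name, len(dets))
```

Note that the ground-truth list uses a different per-box layout (extra attribute columns and no score), so the two files are not expected to match line for line.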

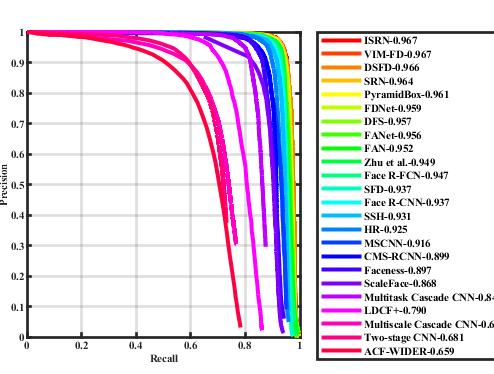

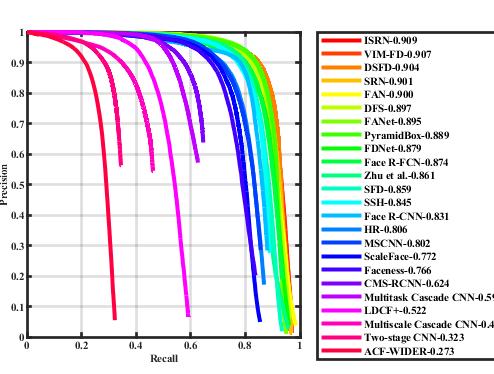

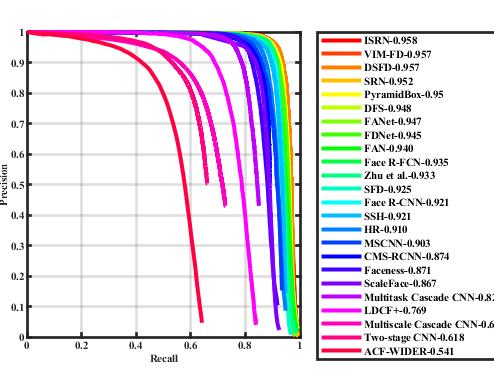

Hi, sorry for the late reply. I got the evaluation code from the WIDER face dataset and copied the pred directory, which I got from running tiny_resnet101_wider_train.sh and tiny_resnet101_eval.sh, into eval_tool. Then I ran wider_eval.m in the eval_tools folder. I got some PR curves inside the eval_tools->plot->figure->Val folder for the wider_face hard, medium and easy validation sets. But I can't tell which one is the PR curve for Tiny_face, and which wider_face dataset was used for the comparison. Is it wider_face_val, wider_face_train or wider_face_test? Overall, I don't understand the evaluation process. Could you please help me understand?

Hi, if you did not change the legend_name in the evaluation code, your result is probably 'faceness' in the graph. The evaluation code reads the .mat files in the ground_truth directory and the files in 'pred' to generate the PR curve of your algorithm. If what you want to know is how a PR curve works in detail, I recommend looking up other resources (like this one), which I believe can give you a better explanation of the concept.
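As a rough illustration of what a PR curve measures (this is not the eval_tools code): sort the detections by score, walk down the list, and record precision and recall at each step. The sketch assumes every detection has already been marked as a true or false positive; the real tool decides that by matching predicted boxes against ground-truth boxes. All names and numbers below are hypothetical.

```python
def precision_recall_points(detections, num_ground_truth):
    """detections: list of (score, is_true_positive).

    Returns a list of (recall, precision) pairs, one per detection,
    in order of decreasing score.
    """
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    points = []
    true_pos = 0
    for rank, (_, is_tp) in enumerate(ranked, start=1):
        if is_tp:
            true_pos += 1
        precision = true_pos / rank          # correct detections so far / detections so far
        recall = true_pos / num_ground_truth  # correct detections so far / all real faces
        points.append((recall, precision))
    return points


# Toy input: four detections, four ground-truth faces in total.
dets = [(0.9, True), (0.8, False), (0.7, True), (0.3, False)]
points = precision_recall_points(dets, num_ground_truth=4)
print(points)
```

Plotting these pairs with recall on the x-axis and precision on the y-axis gives the curve; a better detector keeps precision high as recall grows.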

Thanks a lot. Just curious, how did you generate this PR curve for the wider face medium validation set? Your Tiny-tf-baseline PR value is far better than my faceness PR value, even though I also generated pred for the Wider_face_validation set. Am I doing anything wrong?

Hi, I initialized my model with ImageNet pretrained weights and trained it for quite a long time (50 epochs). I also have to admit that it may take a bit of luck to get a good model, since training can be unstable. For now, the best strategy for getting the best model is to evaluate each epoch's checkpoint and pick the one with the highest score.
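The selection step can be sketched as below, assuming each checkpoint has already been evaluated and its score collected into a dictionary. The function name, checkpoint names and scores are hypothetical and are not results from this thread.

```python
def best_checkpoint(scores):
    """scores: dict mapping checkpoint name -> validation score.

    Returns the (name, score) pair with the highest score.
    """
    if not scores:
        raise ValueError("no checkpoints were evaluated")
    name = max(scores, key=scores.get)
    return name, scores[name]


# Made-up numbers purely for illustration.
example_scores = {"epoch_48": 0.71, "epoch_49": 0.74, "epoch_50": 0.69}
print(best_checkpoint(example_scores))
```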

Thanks, I will do that. What is RefBox_N25_scaled.mat inside the data/ folder? I want to convert my YOLO .h5 weights to .npy, like the ImageNet pretrained weights. If the conversion is not possible, can I train this model with the .h5 file? Please let me know if you have any idea about this. TIA