bert


TensorFlow code and pre-trained models for BERT

_Cross-posted to the bert-serving repo as well, with apologies, as I am not sure which is the culprit._ I'm using this command to start the server: `bert-serving-start -model_dir ./multi_cased_L-12_H-768_A-12/ -num_worker 4...

I am trying to fine-tune the BERT model on a custom dataset (similar to MRPC). I am running this: python run_classifier.py \ --task_name=mrpc \ --do_train=true \ --do_eval=true \ --data_dir=$GLUE_DIR...

Traceback (most recent call last): File "D:/desk/bert-master333/bert-master/run_classifier.py", line 1024, in tf.app.run() File "D:\anaconda\envs\tensorflow\lib\site-packages\tensorflow\python\platform\app.py", line 40, in run _run(main=main, argv=argv, flags_parser=_parse_flags_tolerate_undef) File "D:\anaconda\envs\tensorflow\lib\site-packages\absl\app.py", line 303, in run _run_main(main, args) File "D:\anaconda\envs\tensorflow\lib\site-packages\absl\app.py",...

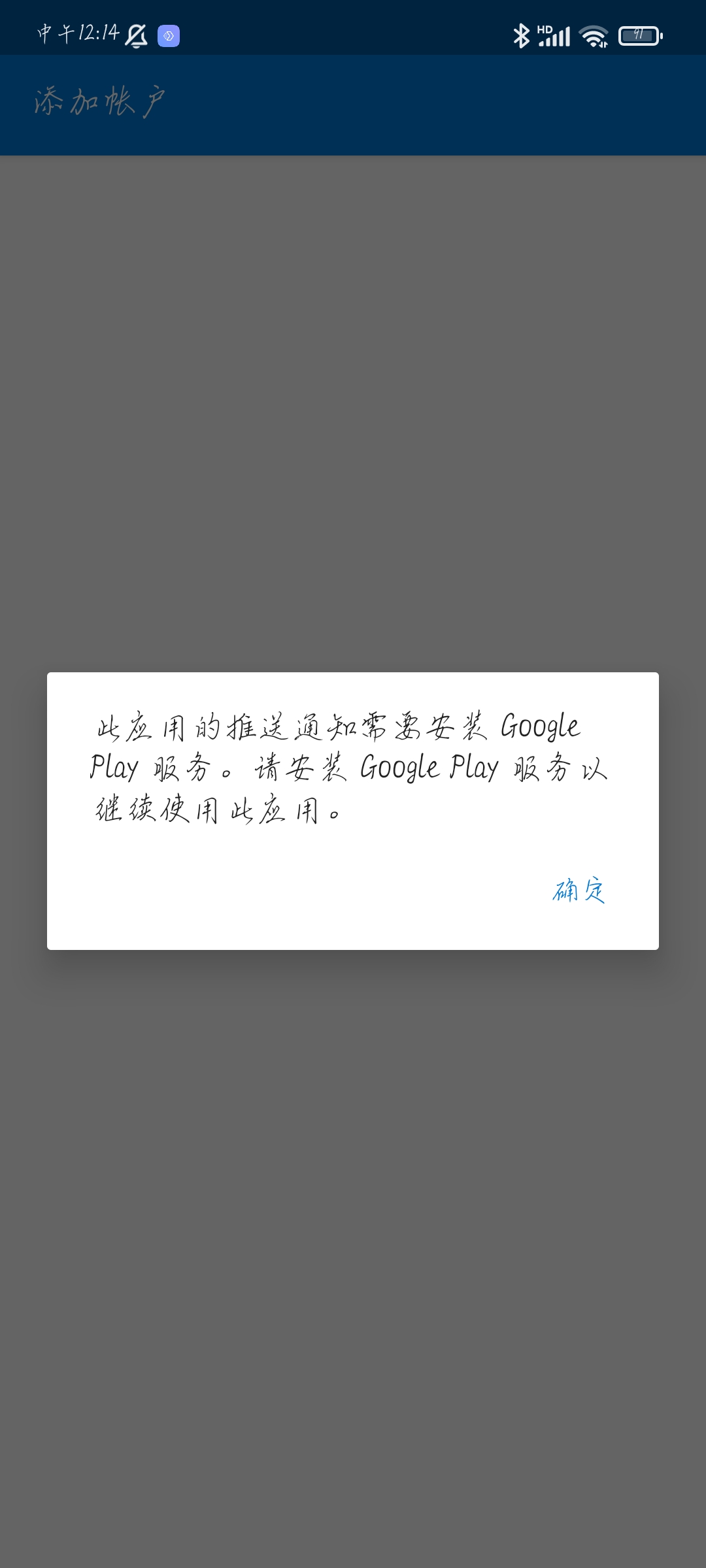

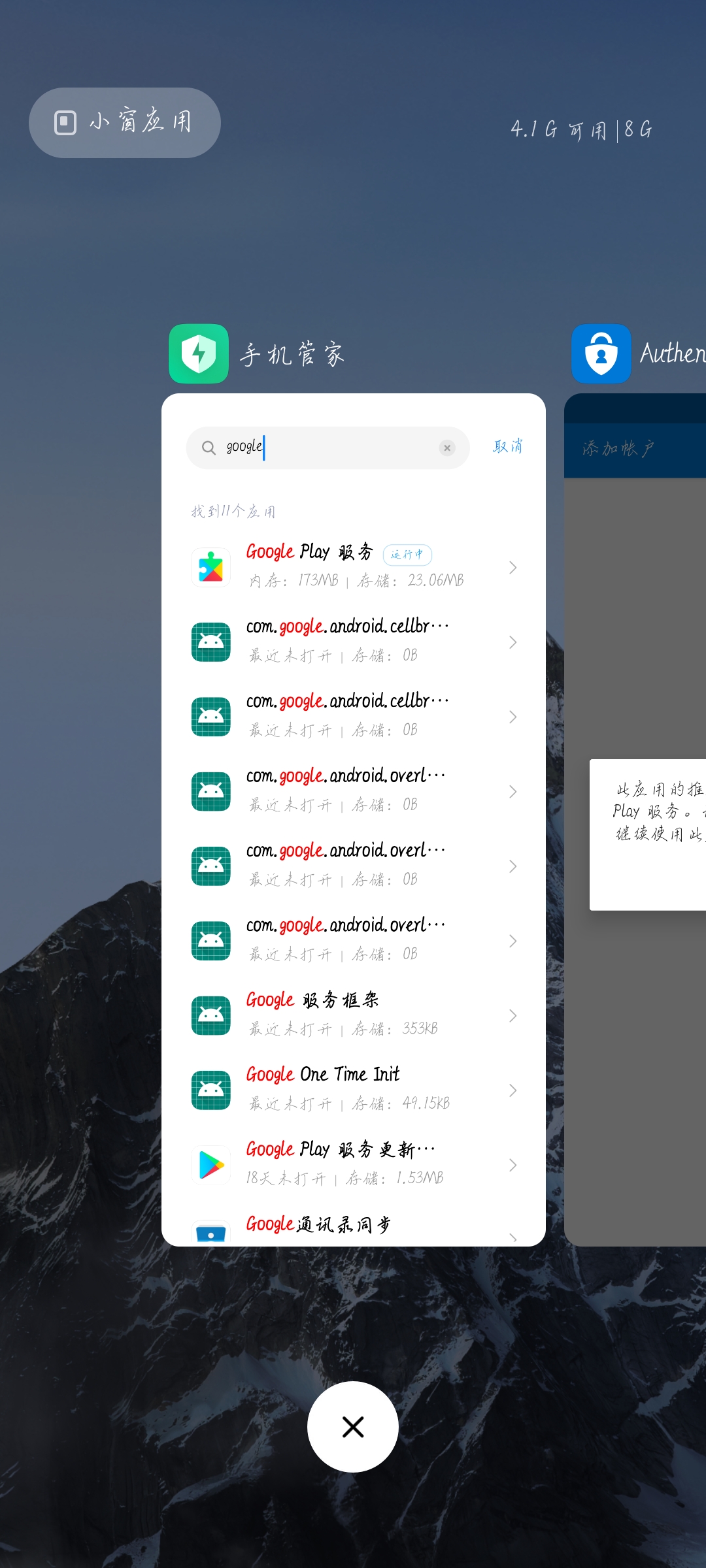

As the title says:   https://user-images.githubusercontent.com/70051913/126055520-42fbe174-04d3-4498-9c9f-fdd94c6d6557.mp4

Hi, I want to represent 3 consecutive sequences like this: CLS SEQ1 SEP SEQ2 SEP SEQ3 SEP, but when I checked the token_ids I saw that I have 1 for...

Hello. I'm working on a BERT pretraining project using GCP (Google Cloud Platform). Before starting to use a TPU to execute run_pretraining.py, I got stuck creating the pretraining data. Here...

Hi, I followed the instructions shared in [Pre-training with BERT](https://github.com/google-research/bert#pre-training-with-bert). I ran these 2 files as mentioned in the instructions: python create_pretraining_data.py and python run_pretraining.py. After running those 2 files I...

I tried to use BERT on Google Colab. I set up the model and GLUE data folders as follows: [!export BERT_BASE_DIR=/content/uncased_L-12_H-768_A-12 !export GLUE_DIR=/content/glue_data !python run_classifier.py \ --task_name=MRPC \ --do_train=true...

Because the Chinese pretrained vocab does not include all English words, I split English words into characters. How do I then represent the whitespace between English words?

ValueError: Tensor conversion requested dtype string for Tensor with dtype float32: