Dogleg nonlinear optimizer

@luisenp Is this issue still open ?

Hi @jeffin07, yes this is open!

@luisenp Can you please guide me. I would like to work on it

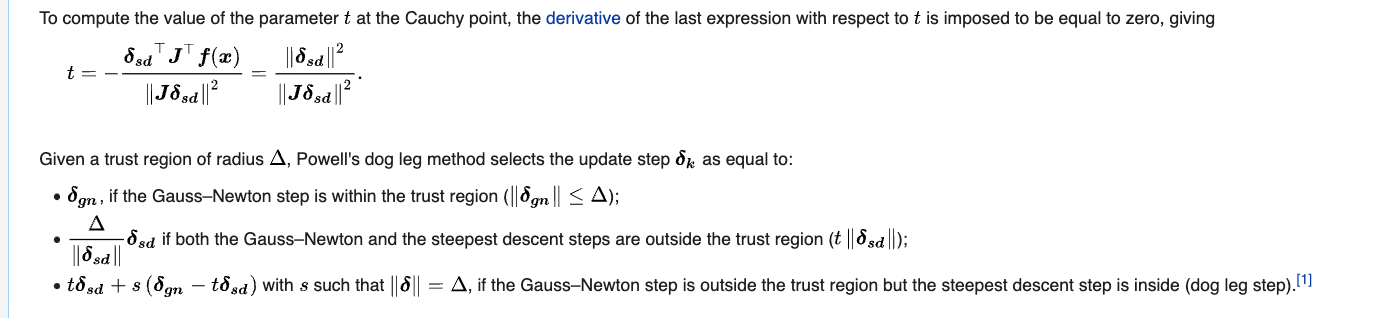

Awesome! The idea would be to implement this optimization method.

As a guide, you can use the code for Gauss-Newton. This function computes \delta_{gn} in the link above. Note that this delta is batched, so the shape would be something like batch_size x total_error_dim.

To compute delta_{sd}, you need access to the linearization A and b matrices (J and f(x), in the wiki notation,respectively). This is easy to do when using the dense solvers, since you will have access to self.linear_solver.linearization.Atb. However, when using sparse solvers we might need to add some other functionality to access this (cc @maurimo). But we can start with the dense solver in the mean time.

Note that all of this has to be differentiable, so you'll probably have to use torch.where carefully when computing the final step size (which might be different for each element in the batch).

Let me know if you have follow up questions.

So my understanding is only about accessing the linearization from the different solver classes. I think this should be quite straightforward, if there is no unified way to do so then this is a good opportunity to enforce that there should indeed be one! The sparse linearization will provide different representations for A,b, but it's indeed an alternative case that can be handled separately.

Hi @jeffin07, following up on the status of this issue. Let us know if you have more questions.

@mhmukadam Sorry i was not able to work on this,was a little busy. I will let u know if i encounter any questions

@luisenp @mhmukadam Guass_Netwon in the compute_delta function it just call the self.linear_solver.solve(). I can't find the exact computations for the \delta_{gn} so i'm a little confused how to proceed. Also for the optimizer i need to calculate delta_{sd}. So i have to do delta_{sd}] in the compute_delta function right ? Sorry if i'm asking too many questions

Hi @jeffin07, that's correct, delta_gn is just delta_gn = self.linear_solver.solve(). For the easiest example of how the linear solvers do this computation, you can check here, and the _solve_system method of its subclasses (in the same file).

That's correct, in the dodleg optimizer the return value would be delta_sd.

@luisenp Will the compute_delta function for dogleg optimizer returns

self.linear_solver.linearization.Ata * self.linear_solver.linearization.Atb , Something like this ?

Hi @jeffin07, I'm really sorry, somehow I missed this comment until today. I also noticed my previous comment was not entirely accurate, the return value should follow this:

Hi @jeffin07. I was wondering if you were blocked on this and if there was anything we could do to help. In case it's useful, the dogleg implementation of GTSAM is a good reference to look at (in this folder).

@luisenp Sorry for the late reply.I missed the comments. Thank you for the reference. I will take a look and update you on this ASAP. Again Sorry for the late reply

@luisenp we need to calculate the delta_sd=-J'F(x), here J and F(x) which can be accessed using self.linear_solver.linearization.Atb. When looking at this link link our compute_delta function will need 3 inputs .What confuses me is that in GaussNewton we're just calling self.linear_solver.solve() to calculate delta_gn and no inputs are passed although it uses J and F(x) matrices. Can you help me to understand this ?

In their code, the input delta refers to the trust region radius, which we can probably keep as a member variable (of type tensor, for handling batches)*. The other two quantities can be directly computed from the linearization, so, as far as I can tell, we don't need to have any inputs. The sd direction we can get as you suggested (what they label dx_u), while the gn direction we can get with self.linear_solver.solve() (what they label dx_n).

*We might need an optimizer.reset() method if we do this, to make sure we don't keep trust regions between different optimizer calls.

Hi @jeffin07, any updates/timeline on this? We are targeting 0.1.1 release in the next week or two.

@mhmukadam Sorry I was really caught up at work. May be you can assign others