Excessive logging on EKS API server for external-secrets-webhook

Describe the solution you'd like

I'm not using external-secrets-webhook; it's disabled in the chart:

```hcl
set {
  name  = "webhook.create"
  value = "false"
}
```

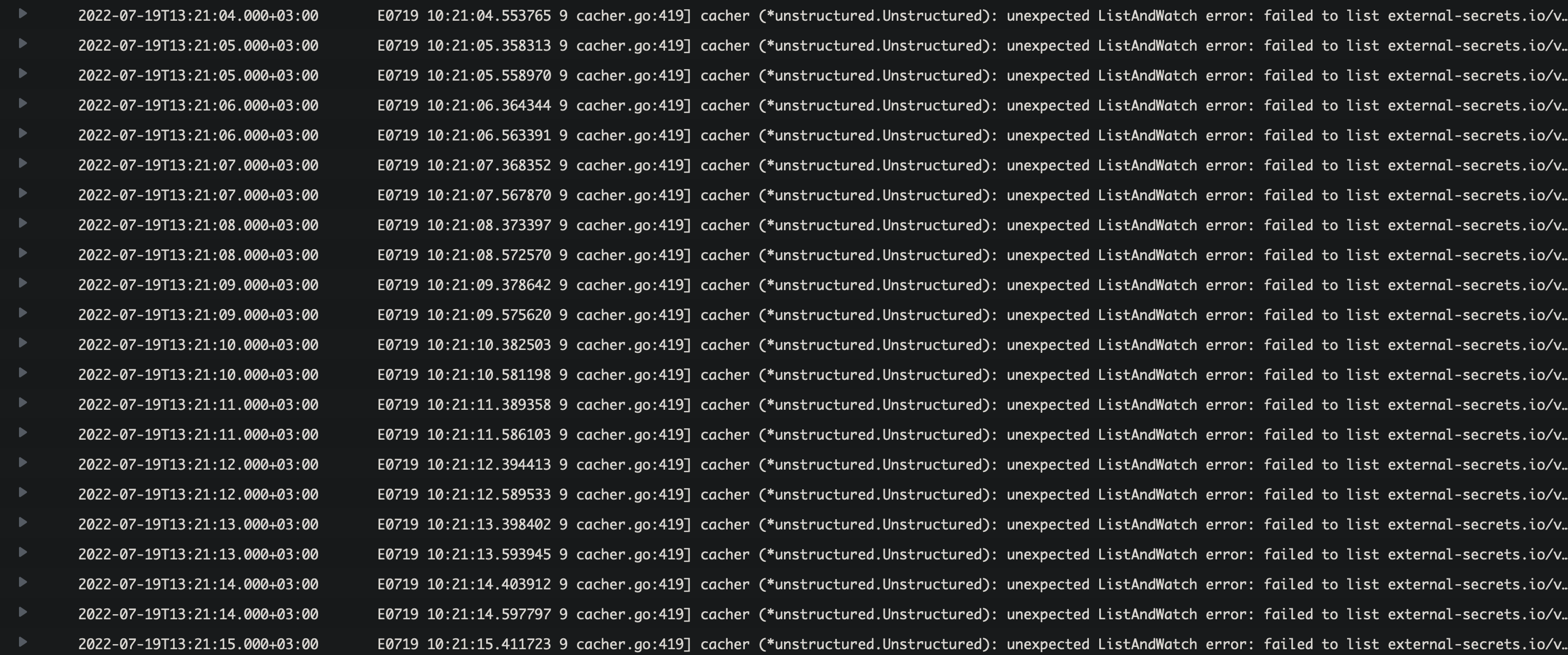

All is well, except that I'm seeing an excessive amount of logs from the API server, which I'd like to suppress:

```text
E0716 21:02:22.952017 10 cacher.go:419] cacher (*unstructured.Unstructured): unexpected ListAndWatch error: failed to list external-secrets.io/v1alpha1, Kind=ClusterSecretStore: conversion webhook for external-secrets.io/v1beta1, Kind=ClusterSecretStore failed: Post "https://external-secrets-webhook.external-secrets.svc:443/convert?timeout=30s": service "external-secrets-webhook" not found; reinitializing...
```

There are about 2 messages per second.
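To put numbers on the flood and see which kinds and webhook services are involved, the exported log lines can be tallied. This is a minimal sketch. It assumes the kube-apiserver messages were exported from the CloudWatch log group as plain text, one message per line. The regular expression is modeled only on the message format quoted in this issue, and `tally_conversion_failures` is a hypothetical helper, not part of any tool.

```python
import re
from collections import Counter

# Modeled on the cacher/reflector messages quoted in this issue (an assumption):
#   failed to list <group>/<version>, Kind=<Kind>: conversion webhook ... Post "https://<svc>.<ns>.svc:443/..."
# Quotes may be JSON-escaped (\") when the log line was exported as JSON.
PATTERN = re.compile(
    r'failed to list (?P<gv>[\w.\-]+/\w+), Kind=(?P<kind>\w+): '
    r'conversion webhook .*?Post \\?"https://(?P<svc>[\w.\-]+?)\.svc'
)


def tally_conversion_failures(lines):
    """Count conversion-webhook failures per (listed group/version, kind, service.namespace)."""
    counts = Counter()
    for line in lines:
        m = PATTERN.search(line)
        if m:
            counts[(m["gv"], m["kind"], m["svc"])] += 1
    return counts
```

Dividing the totals by the time span covered by the export gives the per-second rate.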

I'm using Terraform to deploy the external-secrets Helm chart. My setup:

```hcl
resource "helm_release" "external_secrets" {
  name       = "external-secrets"
  repository = "https://charts.external-secrets.io"
  chart      = "external-secrets"
  version    = "0.5.6"
  namespace  = "external-secrets"

  set {
    name  = "installCRDs"
    value = "true"
  }

  set {
    name  = "serviceAccount.name"
    value = "external-secrets-controller"
  }

  set {
    name  = "serviceAccount.create"
    value = "false"
  }

  set {
    name  = "certController.create"
    value = "false"
  }

  set {
    name  = "webhook.create"
    value = "false"
  }
}
```

Any chance I can suppress these errors?

I'm using external-secrets to sync secrets between an EKS cluster and AWS Secrets Manager, using my own service account with OIDC. All is well: secrets are being synced as expected.

I don't use the webhook, and I don't understand why I'm being bombarded with these messages in the kube-apiserver log group.

Any pointers on where this call comes from, so I can stop it?

From which pod are these logs?


They're from the kube-apiserver (the CloudWatch api-server log group, since it's EKS).

I see :face_with_spiral_eyes: :palm_tree:. So, the CRDs contain a conversion webhook that points to the webhook service. It's only called if you still have v1alpha1 resources.

I think you have to migrate them so that the conversion webhook isn't triggered.
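To check whether a CRD is set up so that the API server may call the conversion webhook, look at `spec.versions` and `spec.conversion` of the installed CRD. The API server needs the webhook when it converts objects between versions, for example from the stored v1beta1 to v1alpha1. A CRD that serves both versions with `strategy: Webhook` therefore depends on the webhook service existing. This is a minimal sketch. It assumes the CRD was saved as JSON (for example with `kubectl get crd clustersecretstores.external-secrets.io -o json > crd.json`), and `conversion_summary` is a hypothetical helper.

```python
def conversion_summary(crd):
    """Summarize served/storage versions and the conversion setup of a CRD.

    `crd` is a parsed apiextensions.k8s.io/v1 CustomResourceDefinition (a dict).
    """
    spec = crd.get("spec", {})
    versions = spec.get("versions", [])
    served = [v["name"] for v in versions if v.get("served")]
    storage = next((v["name"] for v in versions if v.get("storage")), None)
    conversion = spec.get("conversion") or {}
    strategy = conversion.get("strategy", "None")  # the API default is "None"
    service = ((conversion.get("webhook") or {}).get("clientConfig") or {}).get("service")
    return {
        "served": served,
        "storage": storage,
        "strategy": strategy,
        # With Webhook conversion, serving any version besides the storage
        # version means the API server may have to call the webhook.
        "webhook_may_be_called": strategy == "Webhook" and any(v != storage for v in served),
        "webhook_service": f'{service["namespace"]}/{service["name"]}' if service else None,
    }
```

Load the file and call `conversion_summary(json.load(open("crd.json")))`. A `webhook_service` that doesn't exist in the cluster matches the `service ... not found` part of the log message.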

Hm, I'm on `apiVersion: external-secrets.io/v1beta1` for both CRDs (ClusterSecretStore and ExternalSecret).

This was a fresh install on "0.5.6".

😬

Hmm, from the error message (`failed to list external-secrets.io/v1alpha1`), it seems that something is trying to list v1alpha1, which triggers the webhook. Who could that be?

I see some references to `name: v1alpha1` in the 0.5.6 external-secrets chart, although the CRDs running on the cluster are on beta. I'll update to 0.5.8 and try again.

Thanks for taking the time 🙏


These references are not for custom resources. These messages basically say that you have ExternalSecrets in v1alpha1 installed. If that is the case, the webhook is more or less mandatory (or you can convert the manifests manually). Unless you change that, I don't believe upgrading to 0.5.8 will make any difference for you.

An easy way to double-check this is to run the following command in your cluster:

```shell
kubectl get externalsecrets.external-secrets.io -A -o jsonpath='{range .items[*]}{@.apiVersion}{"\t"}{@.metadata.namespace}{"\t"}{@.metadata.name}{"\n"}'
```
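One caveat with the command above: `kubectl get` returns objects in the version it requests (normally the preferred one). The `apiVersion` column therefore shows that version, not necessarily the version the objects are stored in. The CRD's `status.storedVersions` field records which versions objects have been persisted in. This is a minimal sketch. It assumes the CRD JSON from `kubectl get crd externalsecrets.external-secrets.io -o json`, and `stored_versions_report` is a hypothetical helper.

```python
def stored_versions_report(crd):
    """Return (storage_version, stored_versions, other_stored) for a parsed CRD dict.

    status.storedVersions lists the versions objects of this CRD have been
    persisted in. Any entry besides the current storage version may still
    have objects in etcd in that version.
    """
    versions = crd.get("spec", {}).get("versions", [])
    storage = next((v["name"] for v in versions if v.get("storage")), None)
    stored = crd.get("status", {}).get("storedVersions", [])
    other = [v for v in stored if v != storage]
    return storage, stored, other
```

An empty third element means no version other than the current storage version is recorded as stored.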


This is the output (I'm running it in the same namespace as the action runners):

```text
external-secrets.io/v1beta1	actions-runner-system	github-token-controller-manager
```

v1

do you have any other external-secrets deployment installed in your cluster @mattpopa ?


No, just one. I've also tested this on 2 EKS clusters where I use external-secrets to sync Kube <-> AWS Secrets Manager.

I've updated to 0.5.8 and have the same issue.

Both are on external-secrets.io/v1beta1, and both work as expected in terms of updating secrets, except the excessive logging issue, which I don't understand.

If you have a client trying to get v1alpha1 ExternalSecrets from your cluster, that might also be the issue. That client would probably be failing, though.

We're seeing the same errors in our cluster with the latest version, 0.5.9, and the webhook disabled. We see both v1alpha1 and v1beta1 messages. Some of them:

```text
cacher (*unstructured.Unstructured): unexpected ListAndWatch error: failed to list external-secrets.io/v1alpha1, Kind=SecretStore: conversion webhook for external-secrets.io/v1beta1, Kind=SecretStore failed: Post \"https://crds-eso-install-webhook.crds-eso.svc:443/convert?timeout=30s\": service \"crds-eso-install-webhook\" not found; reinitializing...
12 cacher.go:419] cacher (*unstructured.Unstructured): unexpected ListAndWatch error: failed to list external-secrets.io/v1alpha1, Kind=SecretStore: conversion webhook for external-secrets.io/v1beta1, Kind=SecretStore failed: Post \"https://crds-eso-install-webhook.crds-eso.svc:443/convert?timeout=30s\": service \"crds-eso-install-webhook\" not found; reinitializing...
```

We don't have any v1alpha1 objects.

The issue starts when you create a SecretStore. This happened on a brand-new install of ESO 0.5.9. I was able to create the CRDs and the operator without seeing the error logs. After I created my first SecretStore with the config below, the flood of messages started.

```yaml
apiVersion: external-secrets.io/v1beta1
kind: SecretStore
metadata:
  name: app-secretstore
spec:
  provider:
    aws:
      service: SecretsManager
      region: us-east-1
      auth:
        jwt:
          serviceAccountRef:
            name: sa-account
```

Flood messages:

```text
E0826 18:00:59.008647 10 cacher.go:419] cacher (*unstructured.Unstructured): unexpected ListAndWatch error: failed to list external-secrets.io/v1alpha1, Kind=SecretStore: conversion webhook for external-secrets.io/v1beta1, Kind=SecretStore failed: Post "https://crds-eso-install-webhook.crds-eso.svc:443/convert?timeout=30s": service "crds-eso-install-webhook" not found; reinitializing...
```

We don't have a webhook (it's disabled in the values file), nor do we have any v1alpha1 objects.

You can reproduce this by following the steps above.

With a clean install, it works without issues for me. I'm running 0.5.9 with the following manifests:

```shell
helm install external-secrets \
  external-secrets/external-secrets \
  -n external-secrets \
  --create-namespace \
  --set installCRDs=true \
  --set webhook.create=false \
  --set certController.create=false
```

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: sa-account
  annotations:
    eks.amazonaws.com/role-arn: arn:aws:iam::xxxxxxxxxxxx:role/mj-poc
---
apiVersion: external-secrets.io/v1beta1
kind: SecretStore
metadata:
  name: app-secretstore
spec:
  provider:
    aws:
      service: SecretsManager
      region: eu-west-1
      auth:
        jwt:
          serviceAccountRef:
            name: sa-account
---
apiVersion: external-secrets.io/v1beta1
kind: ExternalSecret
metadata:
  name: example
spec:
  refreshInterval: 1m
  secretStoreRef:
    name: app-secretstore
    kind: SecretStore
  target:
    name: secret-to-be-created
    creationPolicy: Owner
  data:
    - secretKey: basic-auth-user
      remoteRef:
        key: basic-auth-user
```

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "secretsmanager:GetResourcePolicy",
        "secretsmanager:GetSecretValue",
        "secretsmanager:DescribeSecret",
        "secretsmanager:ListSecretVersionIds"
      ],
      "Resource": [
        "*"
      ]
    }
  ]
}
```

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::xxxxxxxx:oidc-provider/oidc.eks.eu-west-1.amazonaws.com/id/xxxxxxxxxxxx"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "oidc.eks.eu-west-1.amazonaws.com/id/xxxxxxxxxxxxx:sub": "system:serviceaccount:default:sa-account"
        }
      }
    }
  ]
}
```
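In the IRSA setup above, the trust policy's `StringEquals` condition has to match the `sub` claim of the service account token. That claim has the form `system:serviceaccount:<namespace>:<name>`. The ServiceAccount and SecretStore manifests above set no `metadata.namespace`, so they land in whatever namespace they are applied to. The policy assumes `default`.

Below is a minimal sketch of that check. `trust_policy_allows` is a hypothetical helper. It only handles an exact `StringEquals` match on `:sub` and a single `Federated` ARN string, and it ignores `StringLike`, the `aud` condition and `Deny` statements.

```python
def trust_policy_allows(policy, oidc_issuer, namespace, service_account):
    """Return True if an IAM trust policy lets the given Kubernetes service account
    assume the role via sts:AssumeRoleWithWebIdentity (exact StringEquals on :sub only).

    `oidc_issuer` is the issuer without scheme, e.g. "oidc.eks.<region>.amazonaws.com/id/<ID>".
    """
    expected_sub = f"system:serviceaccount:{namespace}:{service_account}"
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # IAM also allows a single statement object
        statements = [statements]
    for stmt in statements:
        actions = stmt.get("Action", [])
        if isinstance(actions, str):
            actions = [actions]
        if stmt.get("Effect") != "Allow" or "sts:AssumeRoleWithWebIdentity" not in actions:
            continue
        # Assumes Federated is given as a single ARN string.
        federated = (stmt.get("Principal") or {}).get("Federated", "")
        if not federated.endswith(f":oidc-provider/{oidc_issuer}"):
            continue
        conditions = (stmt.get("Condition") or {}).get("StringEquals") or {}
        subs = conditions.get(f"{oidc_issuer}:sub", [])
        if isinstance(subs, str):
            subs = [subs]
        if expected_sub in subs:
            return True
    return False
```

A missing `:sub` condition is treated as "no match". That is deliberately conservative; IAM itself would not restrict the service account in that case.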

Thanks for testing, what EKS cluster version did you use?

I ran this on a fairly rusty version: `1.21.14-eks-18ef993`.

I have the same issue after upgrading to EKS 1.22 (1.23).

```text
W1025 08:07:07.523722 10 reflector.go:324] storage/cacher.go:/external-secrets.io/clustersecretstores: failed to list external-secrets.io/v1alpha1, Kind=ClusterSecretStore: conversion webhook for external-secrets.io/v1beta1, Kind=ClusterSecretStore failed: Post "https://external-secrets-operator-webhook.external-secrets.svc:443/convert?timeout=30s": service "external-secrets-operator-webhook" not found
E1025 08:07:07.523760 10 cacher.go:424] cacher (*unstructured.Unstructured): unexpected ListAndWatch error: failed to list external-secrets.io/v1alpha1, Kind=ClusterSecretStore: conversion webhook for external-secrets.io/v1beta1, Kind=ClusterSecretStore failed: Post "https://external-secrets-operator-webhook.external-secrets.svc:443/convert?timeout=30s": service "external-secrets-operator-webhook" not found; reinitializing...
```

I don't have any v1alpha1 objects, and the webhook is disabled in the values.

@jonjesse, @mattpopa, did you find a fix?


I did not. Nothing is using v1alpha1 on our clusters. I've enabled the webhook as a workaround.

@Yuriy6735 can you please paste the ClusterSecretStore CRD? Does it have `spec.conversion` set?

There shouldn't be a `spec.conversion` set if you install it with `certController.create=false`.

I have `spec.conversion` in the ClusterSecretStore CRD:

```yaml
spec:
  conversion:
    strategy: Webhook
    webhook:
      clientConfig:
        caBundle: Cf==
        service:
          name: kubernetes
          namespace: default
          path: /convert
          port: 443
      conversionReviewVersions:
        - v1
```

The ClusterSecretStore CRD is exactly the same as the one in the crds directory, except for `spec.conversion`.

EKS version: `v1.23.9-eks-ba74326`

Installation method:

```shell
helm install external-secrets \
  external-secrets/external-secrets \
  -n external-secrets \
  --create-namespace \
  --set installCRDs=true \
  --set webhook.create=false \
  --set certController.create=false
```

It may be related to the Helm installation and https://github.com/external-secrets/external-secrets/blob/main/deploy/crds/bundle.yaml#L2877. The CRDs in the pulled Helm chart contain:

```yaml
conversion:
  strategy: Webhook
  webhook:
    conversionReviewVersions:
      - v1
    clientConfig:
      service:
        name: {{ include "external-secrets.fullname" . }}-webhook
        namespace: {{ .Release.Namespace | quote }}
        path: /convert
```

I guess the issue is that the conversion webhook isn't configured properly because of `certController.create=false`. My assumption is that if we remove the conversion part from the CRD, this will work. Can you patch that manually to see if it resolves the log spamming?

I'm not sure if/how to deal with that inside the helm chart :raised_eyebrow:
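The manual fix suggested above, removing the conversion section from the installed CRDs, can be sketched as generating one JSON Patch per affected CRD for `kubectl patch crd <name> --type=json`. Assumptions: the input is the parsed output of `kubectl get crd -o json`, and `removal_patch_commands` is a hypothetical helper that only builds the commands and does not run them.

Once `spec.conversion` is removed, the API server defaults the strategy to `None`. That strategy only rewrites `apiVersion` when converting, so a client reading v1alpha1 would get v1beta1-shaped data. A later `helm upgrade` with `installCRDs=true` would likely put the webhook section back.

```python
import json


def removal_patch_commands(crd_list, group="external-secrets.io"):
    """Return kubectl commands that drop spec.conversion from Webhook-converted CRDs.

    `crd_list` is the parsed JSON of `kubectl get crd -o json` (a v1 List).
    The commands are only returned, not executed.
    """
    patch = json.dumps([{"op": "remove", "path": "/spec/conversion"}])
    commands = []
    for crd in crd_list.get("items", []):
        spec = crd.get("spec", {})
        if spec.get("group") != group:
            continue  # leave other CRDs untouched
        if (spec.get("conversion") or {}).get("strategy") != "Webhook":
            continue  # no webhook conversion to remove
        name = crd["metadata"]["name"]
        commands.append(f"kubectl patch crd {name} --type=json -p '{patch}'")
    return commands
```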

Confirmed: installing the CRDs from https://github.com/external-secrets/external-secrets/tree/main/config/crds/bases and the Helm chart with `installCRDs: false` (certController and webhook disabled) doesn't produce the log spam.

This issue is stale because it has been open 90 days with no activity. Remove stale label or comment or this will be closed in 30 days.

Hi @moolen

I still get the error with version v0.8.1 and the following values-file snippet:

```yaml
installCRDs: true
certController:
  create: false
webhook:
  create: false
```

We're running OCP 4.10, and the kube-apiserver logs are spammed with the following error messages:

```text
W0331 14:11:23.107567 18 reflector.go:324] storage/cacher.go:/external-secrets.io/externalsecrets: failed to list external-secrets.io/v1alpha1, Kind=ExternalSecret: conversion webhook for external-secrets.io/v1beta1, Kind=ExternalSecret failed: Post "https://external-secrets-webhook.ccb-external-secrets-operator.svc:443/convert?timeout=30s": service "external-secrets-webhook" not found
E0331 14:11:23.107591 18 cacher.go:424] cacher (*unstructured.Unstructured): unexpected ListAndWatch error: failed to list external-secrets.io/v1alpha1, Kind=ExternalSecret: conversion webhook for external-secrets.io/v1beta1, Kind=ExternalSecret failed: Post "https://external-secrets-webhook.ccb-external-secrets-operator.svc:443/convert?timeout=30s": service "external-secrets-webhook" not found; reinitializing...
```

Am I missing anything here? I thought this error would disappear with #2113.

We're only using the v1beta1 CR version.

Best

Did you try setting `crds.conversion.enabled=false`?

That should do the trick
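Helm `--set` keys, like the `name` of the Terraform `set` blocks earlier in this issue, are dot-separated paths into the chart's values. So `crds.conversion.enabled=false` ends up as `crds: {conversion: {enabled: false}}` alongside `installCRDs`, `webhook` and `certController`. This is a simplified sketch of that mapping with a hypothetical `set_values` helper. It does not handle escaped dots, list indices, or Helm's numeric coercion, and only turns `true`/`false` into booleans.

```python
def set_values(pairs):
    """Build a nested values dict from Helm-style "dotted.key=value" strings.

    Simplified: no escaped dots, no list indices; only "true"/"false" are
    converted (to booleans), everything else stays a string.
    """
    values = {}
    for pair in pairs:
        key, _, raw = pair.partition("=")
        value = {"true": True, "false": False}.get(raw, raw)
        *parents, leaf = key.split(".")
        node = values
        for part in parents:
            node = node.setdefault(part, {})  # descend, creating maps as needed
        node[leaf] = value
    return values
```

With the Terraform setup from the top of this issue, the same key would go into one more `set` block with `name = "crds.conversion.enabled"` and `value = "false"`.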

Hey, I'm a bit late to the party, but this exact log spam happened to us recently after updating Prometheus to v2.40+ (v43+ Helm chart version).

I didn't find any trace of the newer Prometheus (or its default CRDs/rules/etc.) trying to list or access external-secrets.io/v1alpha1 secrets, but the errors stopped immediately after rolling back to v2.39. Did any of you who experienced the same issue have the Prometheus stack installed on EKS/OpenShift? If so, this might shed some light on the issue.

Like other people here, we've had no v1alpha1 secrets/stores from the start (almost a year now), and no webhook/certController enabled. To this day we've never seen any service trying to list or access alpha secrets.

Anyway, setting `crds.conversion.enabled=false` and updating to external-secrets v0.8.1 fixed it in our case with Prometheus 2.40. Thanks @moolen!

@rsolovev we're also facing the issue, but our Prometheus is a very old version. Just curious: how did you check whether the Prometheus components were making calls to v1alpha1? If there's a method, please let me know.
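One way to look for a client requesting v1alpha1 is the API server audit log. On EKS that is the `audit` control-plane log type, which has to be enabled before it is exported to CloudWatch. Audit events (`audit.k8s.io/v1` `Event`) carry `objectRef.apiGroup`, `objectRef.apiVersion`, `objectRef.resource`, `user.username`, `userAgent` and `verb`.

This is a minimal sketch. It assumes the events were exported as JSON, one per line, and `v1alpha1_callers` is a hypothetical helper. A caveat, as far as I can tell: the `cacher` messages in this issue come from the API server's own watch cache, which reads storage directly rather than serving a client request. An empty result is therefore plausible and would point away from an external client.

```python
import json
from collections import Counter


def v1alpha1_callers(lines, group="external-secrets.io", version="v1alpha1"):
    """Count distinct requests for group/version, keyed by (username, userAgent, verb, resource).

    `lines` are audit.k8s.io/v1 Event objects serialized as JSON, one per line.
    """
    counts = Counter()
    seen = set()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip anything that is not a JSON event
        if not isinstance(event, dict):
            continue
        ref = event.get("objectRef") or {}
        if ref.get("apiGroup") != group or ref.get("apiVersion") != version:
            continue
        # One request can emit several events (one per stage) sharing an auditID.
        audit_id = event.get("auditID")
        if audit_id is not None:
            if audit_id in seen:
                continue
            seen.add(audit_id)
        key = (
            (event.get("user") or {}).get("username"),
            event.get("userAgent"),
            event.get("verb"),
            ref.get("resource"),
        )
        counts[key] += 1
    return counts
```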