MPI-parallel DDS with replicas

Re-implementation of #2499

Description of changes:

- the `DipolarDirectSumCpu` method was rewritten to support replicas and MPI-parallelization
  - API change: a new argument `n_replicas` was added, defaults to 0 so that old ESPResSo scripts still work
- the original `DipolarDirectSumWithReplicaCpu` method was removed
- the minimum image convention is now tested
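To illustrate what a replica count means for a dipolar direct sum, here is a minimal pure-Python sketch. It is not the ESPResSo implementation: the function names (`pair_energy`, `minimum_image`, `dipolar_energy`) are hypothetical, the box is assumed cubic and periodic in all three directions, and treating `n_replicas=0` as "minimum image convention" is an assumption made for this sketch.

```python
import itertools
import math


def pair_energy(m_i, m_j, r, prefactor=1.0):
    """Dipole-dipole energy for two point dipoles separated by vector r."""
    dist = math.sqrt(sum(c * c for c in r))
    mi_mj = sum(a * b for a, b in zip(m_i, m_j))
    mi_r = sum(a * b for a, b in zip(m_i, r))
    mj_r = sum(a * b for a, b in zip(m_j, r))
    return prefactor * (mi_mj / dist**3 - 3.0 * mi_r * mj_r / dist**5)


def minimum_image(r, box_l):
    """Fold a separation vector onto its nearest periodic image (cubic box)."""
    return [c - box_l * round(c / box_l) for c in r]


def dipolar_energy(positions, dipoles, box_l, n_replicas=0, prefactor=1.0):
    """Direct-sum dipolar energy in a cubic periodic box (illustrative only).

    n_replicas == 0: each pair interacts once via the minimum image
                     convention (assumption of this sketch).
    n_replicas  > 0: every particle interacts with all images shifted by
                     up to n_replicas box lengths in each direction,
                     including its own images.
    """
    n = len(positions)
    energy = 0.0
    if n_replicas == 0:
        for i in range(n):
            for j in range(i + 1, n):
                r = [a - b for a, b in zip(positions[i], positions[j])]
                r = minimum_image(r, box_l)
                energy += pair_energy(dipoles[i], dipoles[j], r, prefactor)
        return energy
    shifts = range(-n_replicas, n_replicas + 1)
    for shift in itertools.product(shifts, repeat=3):
        for i in range(n):
            for j in range(n):
                if i == j and shift == (0, 0, 0):
                    continue  # a particle does not interact with itself
                r = [a - b + box_l * s
                     for a, b, s in zip(positions[i], positions[j], shift)]
                # factor 0.5: every interaction is visited twice
                energy += 0.5 * pair_energy(dipoles[i], dipoles[j], r, prefactor)
    return energy
```

The cost grows as `(2 * n_replicas + 1)**3` times the number of pairs, which is why a parallel implementation of the replica sum is worthwhile.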

Hi @jngrad @RudolfWeeber @fweik, I tested the MDdS implementation against p3m and theory. For completeness, I will write up the conclusions in some detail.

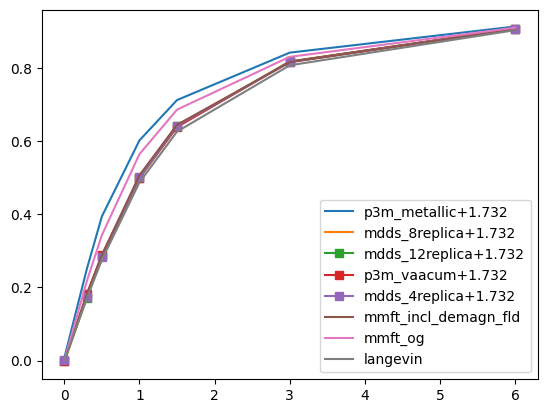

The test is the magnetisation of a ferrofluid with 3D periodic boundary conditions (pbc), volume fraction \phi = 4% and dipolar coupling parameter \lambda = 3. The results are validated against the Langevin magnetisation law and second-order modified mean-field theory (MMFT2). The key subtlety is that direct sum and p3m use different boundary conditions (bc) and are therefore not directly comparable. There are several ways to go about this.

By default, p3m uses the so-called metallic bc, and MMFT2 should agree with p3m in this case. Direct sum essentially solves for a finite system in vacuum (given enough replicas, one can argue for a pseudo-infinite system), which means that the direct-sum results implicitly contain a demagnetisation field. I extended MMFT2 to account for the demagnetisation field, so it should be able to fit the direct-sum results.

Alternatively, p3m implements a surface correction that is applied when the \epsilon parameter is not 0. To my understanding, setting \epsilon to 1 corresponds to p3m using vacuum boundary conditions, so it should match both MDdS and MMFT2 with the demagnetisation field.

The attached figure fits my expectations, so I would say that MDdS is functioning as intended. Hope this is helpful. Cheers.
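For reference, the theory curves discussed above can be sketched in a few lines of Python. This is a minimal sketch and not the script behind the figure. It assumes the commonly quoted second-order form of the modified mean-field theory, \alpha_e = \alpha + \chi_L L(\alpha) + (\chi_L^2 / 16) L(\alpha) L'(\alpha), with Langevin susceptibility \chi_L = 8 \lambda \phi. The demagnetisation step is also an assumption: it solves \alpha_int = \alpha_ext - 3 \chi_L D M/M_sat self-consistently with a placeholder demagnetisation factor D = 1/3 (the value for a sphere), which may differ from the extension actually used for the figure. All function names are hypothetical.

```python
import math


def langevin(alpha):
    """Langevin function L(alpha) = coth(alpha) - 1/alpha."""
    if abs(alpha) < 1e-3:
        return alpha / 3.0 - alpha**3 / 45.0  # series, avoids cancellation
    return 1.0 / math.tanh(alpha) - 1.0 / alpha


def langevin_derivative(alpha):
    """Derivative L'(alpha) = 1/alpha^2 - 1/sinh(alpha)^2."""
    if abs(alpha) < 1e-3:
        return 1.0 / 3.0 - alpha**2 / 15.0
    return 1.0 / alpha**2 - 1.0 / math.sinh(alpha) ** 2


def mmft2(alpha, chi_l):
    """Reduced magnetisation M/M_sat from second-order MMFT (assumed form)."""
    l = langevin(alpha)
    alpha_eff = alpha + chi_l * l + chi_l**2 / 16.0 * l * langevin_derivative(alpha)
    return langevin(alpha_eff)


def with_demagnetisation(alpha_ext, chi_l, law, demag_factor=1.0 / 3.0):
    """Reduced magnetisation including a demagnetisation field.

    Solves alpha_int + 3 * chi_l * D * law(alpha_int, chi_l) = alpha_ext
    for alpha_int by bisection. Assumes alpha_ext >= 0 and that `law`
    increases monotonically with the field.
    """
    lo, hi = 0.0, alpha_ext
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if mid + 3.0 * chi_l * demag_factor * law(mid, chi_l) > alpha_ext:
            hi = mid
        else:
            lo = mid
    return law(0.5 * (lo + hi), chi_l)


# Parameters of the test above: phi = 4%, lambda = 3, so chi_L = 0.96
chi_l = 8.0 * 3.0 * 0.04
for alpha in (0.5, 1.0, 2.0, 5.0):
    print(alpha, langevin(alpha), mmft2(alpha, chi_l),
          with_demagnetisation(alpha, chi_l, mmft2))
```

In this sketch the metallic-bc curve corresponds to `mmft2` and the vacuum-bc curve to `with_demagnetisation`; at any given external field the latter lies below the former because the demagnetisation field reduces the internal field.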