djl

djl copied to clipboard

djl copied to clipboard

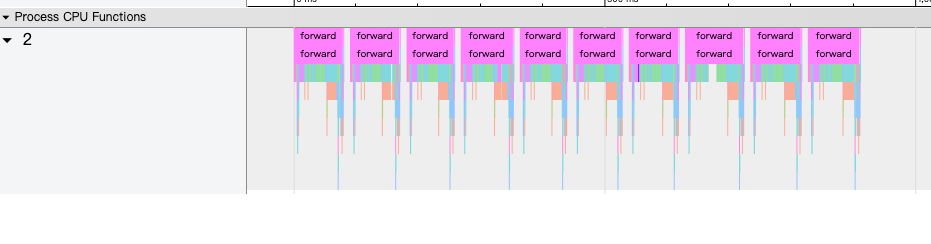

No CUDA events show up in the profiler result

Description

I followed https://docs.djl.ai/master/docs/development/profiler.html and set useCuda=true, but I cannot see any CUDA events in the profiler result.

Expected Behavior

CUDA events

How to Reproduce?

    JniUtils.startProfile(true, true, true);
    for (int i = 0; i < 10; i++) {
        predict(input);
    }
    JniUtils.stopProfile("/tmp/" + ptModel.getName());
    log.info("save profile file to {}", "/tmp/" + ptModel.getName());

Environment Info

DJL version: 0.20.0, pytorch-native-cu116, torch version: 1.12.1

Hi @jestiny0, I just tested this on the newest DJL version on Linux. It worked normally, and I could not reproduce the issue.

My test code is in engines/pytorch/pytorch-engine/src/test/java/ai/djl/pytorch/integration/ProfilerTest.java. On line 66, you can switch useCuda between true and false. With useCuda=true, the output build/profile.json is not missing any events compared with the output for useCuda=false.
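One way to compare the two outputs is to diff the event names they contain. The snippet below is only a minimal sketch using the Java standard library. It assumes each event in the profile file is serialized with a `"name": "..."` field (as in Chrome trace style output); I have not verified that against the exact file DJL writes. The class name `ProfileDiff` and its helper methods are made up for illustration and are not part of DJL.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Set;
import java.util.TreeSet;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class ProfileDiff {

    // Assumption: every event carries a "name": "..." field.
    // The pattern tolerates escaped quotes inside the name.
    private static final Pattern NAME =
            Pattern.compile("\"name\"\\s*:\\s*\"((?:[^\"\\\\]|\\\\.)*)\"");

    /** Collects the distinct event names found in a profile file's text. */
    public static Set<String> eventNames(String json) {
        Set<String> names = new TreeSet<>();
        Matcher m = NAME.matcher(json);
        while (m.find()) {
            names.add(m.group(1));
        }
        return names;
    }

    /** Returns the names that appear in a but not in b. */
    public static Set<String> onlyIn(Set<String> a, Set<String> b) {
        Set<String> diff = new TreeSet<>(a);
        diff.removeAll(b);
        return diff;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("usage: java ProfileDiff <profile-a.json> <profile-b.json>");
            return;
        }
        Set<String> first = eventNames(Files.readString(Path.of(args[0])));
        Set<String> second = eventNames(Files.readString(Path.of(args[1])));
        System.out.println("Only in " + args[0] + ": " + onlyIn(first, second));
        System.out.println("Only in " + args[1] + ": " + onlyIn(second, first));
    }
}
```

To use it, save the profile from a useCuda=false run and from a useCuda=true run under different file names and pass both paths as arguments. If both printed sets are empty, the two runs recorded the same set of event names. Note that this only compares names; it says nothing about timings or per-event CUDA fields.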

I believe this unit test is equivalent to your example. Could you run it and see whether the issue appears, or tell me how to reproduce it?

If the issue does reproduce, note that the Java side of JniUtils.startProfile and JniUtils.stopProfile is very simple, and the underlying JNI code follows the PyTorch implementation: https://github.com/pytorch/pytorch/blob/master/torch/csrc/autograd/profiler_legacy.cpp

In that case, could you also determine whether the problem comes from DJL or from native PyTorch?

@KexinFeng I still can't get the GPU-related events. I'm using DJL 0.20.0 and CUDA version 11.6. Could it be related to the CUDA version?

Here is my testing environment. The difference I spotted is that my DJL version is 0.22.0. Could you first check whether this bug-free run is reproducible on your machine?

    os.name: Linux
    -------------- Directories --------------
    temp directory: /tmp
    DJL cache directory: /home/ubuntu/.djl.ai
    Engine cache directory: /home/ubuntu/.djl.ai
    ------------------ CUDA -----------------
    GPU Count: 1
    CUDA: 116
    ARCH: 75
    GPU(0) memory used: 104792064 bytes
    ----------------- Engines ---------------
    DJL version: 0.22.0-SNAPSHOT
    [WARN ] - No matching cuda flavor for linux found: cu116mkl/sm_75.
    [DEBUG] - Loading mxnet library from: /home/ubuntu/.djl.ai/mxnet/1.9.1-mkl-linux-x86_64/libmxnet.so
    [WARN ] - No matching cuda flavor for linux found: cu116mkl/sm_75.
    Default Engine: MXNet:1.9.0, capabilities: [
        CPU_SSE,
        SIGNAL_HANDLER,
        LAPACK,
        BLAS_OPEN,
        CPU_SSE2,
        DIST_KVSTORE,
        CPU_SSE3,
        OPENMP,
        OPENCV,
        MKLDNN,
    ]
    MXNet Library: /home/ubuntu/.djl.ai/mxnet/1.9.1-mkl-linux-x86_64/libmxnet.so
    Default Device: cpu()
    PyTorch: 2
    MXNet: 0
    XGBoost: 10
    LightGBM: 10
    OnnxRuntime: 10
    TensorFlow: 3
    --------------- Hardware --------------
    Available processors (cores): 8
    Byte Order: LITTLE_ENDIAN
    Free memory (bytes): 492866816
    Maximum memory (bytes): 8296333312
    Total memory available to JVM (bytes): 520093696
    Heap committed: 520093696
    Heap nonCommitted: 40943616
    GCC:
    gcc (Ubuntu 9.4.0-1ubuntu1~20.04.1) 9.4.0
    Copyright (C) 2019 Free Software Foundation, Inc.
    This is free software; see the source for copying conditions. There is NO
    warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.