Cross cluster communication in Dapr

In what area(s)?

/area runtime /area docs /area/components-contrib

Design for Cross cluster communication in Dapr

This document is a proposal to enable communication across clusters in a container environment, and discusses the design options to support this behaviour in Dapr-enabled clusters.

Table of Contents

- Objective / Scenarios
- Goals
- Out of Scope
- Requirements
- Design Constraints
- Some concepts to understand the design better
  - Difference between multi-tenancy and multi-cluster
  - What is Service Discovery
  - How does name resolution work in Dapr today?
- Strategies for Multi-cluster communication in a container environment
  - Gateways
  - Flat Network
  - Selective End Point Discovery
- Proposed Design for Multi-Cluster Communication in Dapr
  - per Cluster
  - per App
- Additional References

Objective

In Kubernetes there are scenarios where multiple users (for example, dev and QA teams) want to share a cluster environment. These users can be segregated via namespaces. Multi-tenancy is the ability to have multiple distinct namespaces within a single cluster. Quotas and policies can be applied to each namespace, which gives a simple way to segregate tenants.

A multi-cluster environment is one where many separate clusters need to communicate. These clusters can exist in a flat network setup where every pod IP is reachable from every other pod. Setting up such clusters is beyond the scope of this proposal.

Also, multiple clusters could be federated to allow for communication.

Both these scenarios are in the scope of this proposal.

The intention of this proposal is to enable Dapr features such as actor invocation and service invocation to work seamlessly and cohesively in multi-cluster environments.

Common Scenarios for Multicluster

- Scenario 1: We have multiple clusters for security reasons, with clear access policies for communication across these clusters. We should be able to communicate securely between the applications running in these clusters.

- Scenario 2: I have multiple clusters that host distributed applications, which interact with each other to deliver an end-to-end experience. Microservices in one cluster have to constantly interact with microservices in another cluster.

- Scenario 3: I have multiple clusters for high availability. My applications run as multiple copies across these clusters. It is very important that when one cluster fails, another cluster takes up the traffic with minimal disruption to the user. I want to be able to define a policy for which services should be used as primary or failover.

- TBD -- Add more scenarios

These scenarios lead to the following requirements:

- Requirement 1: Applications within a cluster should be able to communicate with applications deployed in other connected clusters, without having to make changes in the implementation/code.

- Requirement 2: It should be possible to securely communicate across the clusters by using a common root CA to allow mTLS encrypted traffic across services.

- Requirement 3: It should be possible to define policies to disambiguate service discovery when multiple instances of the app are running in multiple clusters.

  For example, if there are multiple instances of the target app being invoked, the user should be able to specify whether first priority goes to the local app vs. an app running on a cluster in the same region vs. an app running on a cluster in the same availability region vs. an app running in a different region.

- Requirement 4: Daprized applications should be able to communicate with another instance of the target application when a failover happens.
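The priority ordering in Requirement 3 and the failover behaviour in Requirement 4 can be illustrated with a minimal sketch. Everything here is hypothetical and is not part of any Dapr API: the `Instance` fields, the tier names (`local`, `same-zone`, `same-region`, `different-region`) and the `select_instance` helper are assumptions made for illustration, and `zone` stands in for what the text calls an availability region.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Instance:
    """One running copy of an app (hypothetical shape, for illustration only)."""
    app_id: str
    cluster: str
    zone: str
    region: str
    address: str
    healthy: bool = True


def locality(caller: Instance, target: Instance) -> str:
    """Classify how close the target is to the caller."""
    if target.cluster == caller.cluster:
        return "local"
    if target.region == caller.region and target.zone == caller.zone:
        return "same-zone"
    if target.region == caller.region:
        return "same-region"
    return "different-region"


def select_instance(
    caller: Instance,
    candidates: Sequence[Instance],
    policy: Sequence[str],
) -> Optional[Instance]:
    """Pick the healthy candidate whose locality tier comes first in the policy.

    Tiers missing from the policy are never selected. Unhealthy instances are
    skipped, so the next tier acts as the failover target.
    """
    ranked = [
        (policy.index(locality(caller, c)), c)
        for c in candidates
        if c.healthy and locality(caller, c) in policy
    ]
    if not ranked:
        return None
    return min(ranked, key=lambda pair: pair[0])[1]
```

With a policy of `["local", "same-zone", "same-region", "different-region"]`, a healthy local instance is always preferred; if it is marked unhealthy, the same call returns the closest remaining instance instead, which is the failover case.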

Goals

This document will focus on the following goals:

- Enable Service Discovery: enhance name resolution to work across clusters, addressing the listed scenarios, in order to support:
  - Service Invocation across clusters
  - Actor Invocation across clusters
- Enable definition of access policy in a multi-cluster environment

Out of Scope

- Networking setup and reachability between clusters is a pre-requisite.

- Setting up mTLS across multiple clusters is not in scope for this proposal

- Ingress configuration is not in scope for this proposal

Requirement

| Priority | Requirement | Description |
|---|---|---|
| P0 | Setup Configuration | Enable configuration to be set up for an app to discover applications in other clusters. |
| P0 | Name Resolution Building Block | Name resolution should continue to function as-is in both cluster and non-cluster environments. |
| P0 | Enhance Dapr name-resolution components to support cross-cluster communication | Name resolution components should support cross-cluster service discovery in adherence with the policies specified. |
| P0 | Service invocation across clusters | Support service invocation across clusters with no impact on SDK / API. |
| TBD | Tracing | Ensure traces can be correlated across cross-cluster communication. |
| TBD | Control plane service | Control plane service to manage cross-cluster access policy. |
| TBD | Actor communication across clusters | Support actors invoking calls across clusters. |

** P0 requirements are targeted for design and implementation for 1.10, while we continue to evolve the design and implementation for the rest of the scenarios.

Constraints

The following constraints ensure the new solution does not break the existing implementation:

- There should be no breaking SDK changes to enable multi-cluster communication.

- There should be no assumptions about the cluster topology. When a new configuration is added, the app should be able to consume the new configuration without needing a restart.

- Tracing should be supported across clusters.

Concepts

What is Service Discovery

In the world of microservices implemented using Dapr, destination services are referred to by their registered app name. The process of translating the registered app name to an internal gRPC port of the Dapr sidecar is part of service discovery in Dapr.

This is achieved via the name resolution building block and its components.

Depending on the name resolution component the user chooses, a registry is maintained to keep track of services and their IP addresses. Each name resolution component has a different strategy for building this registry and keeping it updated. This strategy also affects the ability of the name resolution component to scale.

The name resolution component is also responsible for health checking and keeping the service registry updated. This enables a service to work without having to build and maintain awareness of other services in the network.
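The registry and health-checking responsibilities described above can be sketched as follows. This is an illustrative toy, not how any Dapr name resolution component is implemented: the `ServiceRegistry` class, its TTL-based expiry and the `now` parameter (used only to keep the example deterministic) are all assumptions.

```python
import time
from typing import Dict, Optional, Tuple


class ServiceRegistry:
    """Toy in-memory registry mapping an app name to (host, gRPC port).

    Entries expire when no heartbeat arrives within ttl_seconds, which stands
    in for the health checking a real component would perform.
    """

    def __init__(self, ttl_seconds: float = 30.0) -> None:
        self._ttl = ttl_seconds
        # app_id -> (host, grpc_port, last_seen timestamp)
        self._entries: Dict[str, Tuple[str, int, float]] = {}

    def register(self, app_id: str, host: str, grpc_port: int,
                 now: Optional[float] = None) -> None:
        """Add or overwrite the entry for app_id."""
        now = time.monotonic() if now is None else now
        self._entries[app_id] = (host, grpc_port, now)

    def heartbeat(self, app_id: str, now: Optional[float] = None) -> bool:
        """Refresh an entry; returns False if the app is not registered."""
        now = time.monotonic() if now is None else now
        entry = self._entries.get(app_id)
        if entry is None:
            return False
        host, port, _ = entry
        self._entries[app_id] = (host, port, now)
        return True

    def lookup(self, app_id: str,
               now: Optional[float] = None) -> Optional[Tuple[str, int]]:
        """Resolve app_id to (host, port), dropping entries that went stale."""
        now = time.monotonic() if now is None else now
        entry = self._entries.get(app_id)
        if entry is None:
            return None
        host, port, last_seen = entry
        if now - last_seen > self._ttl:
            del self._entries[app_id]
            return None
        return host, port
```

A caller only ever asks for an app name; whether the target is still alive is the registry's concern, which is the property the paragraph above describes.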

How does service discovery work in Dapr today?

Name resolution is a building block in Dapr. Loading a name resolution component is mandatory in both self-hosted and Kubernetes modes. This building block enables:

- app registration with the chosen or default name resolution component, and

- lookup to resolve the app name to the target IP as part of service discovery.

Dapr supports multiple components for name resolution. Each component has a distinct strategy to resolve and provide the IP address for a given name. The following table documents the high-level strategy of each supported component and its ability to support multi-cluster environments.

| Component | Domain Name Resolution Strategy | Supports Multi-Cluster |
|---|---|---|
| mDNS | Supports name resolution in a small network by issuing a multicast UDP query to all hosts in the network, expecting the host to identify itself. This does not scale across large networks and works well for Dapr self-hosted mode. | No |
| Hashicorp Consul | Provides a DNS registry and query interface. | Yes |
| Kubernetes | Configured by Dapr as the default name resolution component in Kubernetes mode. A DNS pod and service are deployed on the cluster. | No |

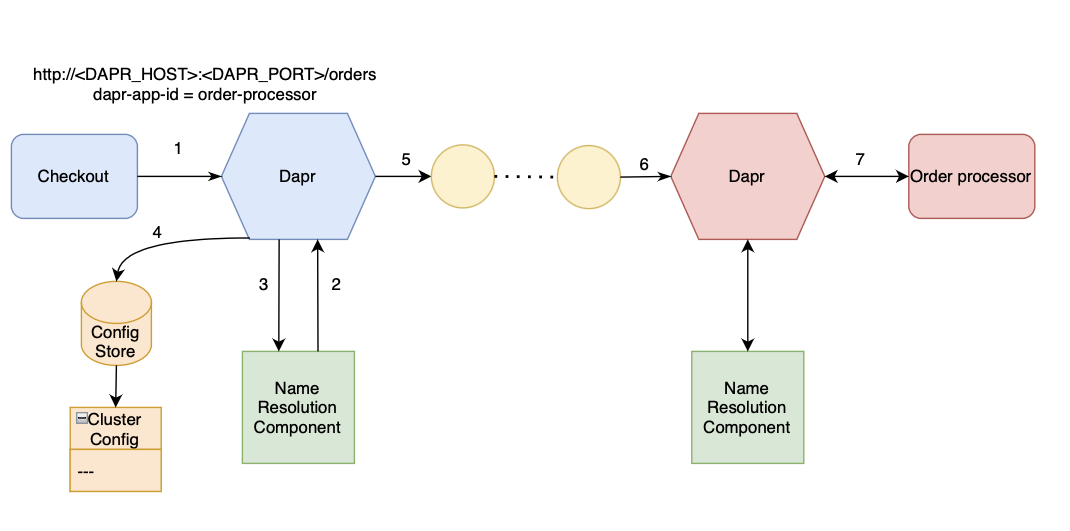

Below is an illustration of Name Resolution in a single cluster standalone mode for Service invocation quickstart in standalone mode.

Strategies for Multi-cluster communication in a container environment

There are multiple strategies to support cross-cluster communication:

- Gateways: Clusters are managed via independent service meshes and operate in a unified trust domain (that is, they share a common root certificate/signing certificate). An ingress gateway is configured to accept trusted traffic across these clusters. The downside of this approach is the additional overhead of configuring the networks to allow cross-cluster traffic.

- Flat Network: All the pods in each cluster have a non-overlapping IP address. Clusters can communicate via VPN tunnels, to ensure all pods are able to communicate with all other pods on the network.

  This scenario has scalability issues, adds the overhead of managing non-overlapping IP addresses across clusters, and puts all the clusters in a common fault domain.

- Selective End-point discovery: This approach gives each application limited visibility of the services in the cluster. The sidecar for a pod is configured with the list of endpoints that the service wants to talk to.

  If the target pod is in another cluster that is assumed to be reachable via an ingress gateway, service discovery provides the IP address of the ingress gateway instead. When a pod routes traffic to an ingress gateway, the ingress gateway uses Server Name Indication (SNI) to know where the traffic is destined. This approach does not require a flat network. We will discuss the design details for this approach in the subsequent sections.
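The selective end-point discovery flow can be sketched as a small resolver. This is a sketch under stated assumptions, not a Dapr implementation: the `Endpoint` and `Route` types, the `resolve` function and the SNI host name format (`<app>.<cluster>.example.internal`) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Endpoint:
    """An endpoint the sidecar has been configured to see (hypothetical)."""
    app_id: str
    cluster: str
    address: str  # only reachable from inside its own cluster


@dataclass(frozen=True)
class Route:
    """Where to dial, and which SNI host name to present, if any."""
    dial_address: str
    sni: Optional[str]


def resolve(
    app_id: str,
    local_cluster: str,
    endpoints: Dict[str, Endpoint],
    gateways: Dict[str, str],
) -> Optional[Route]:
    """Resolve app_id using only the endpoints this sidecar may see.

    Local targets are dialled directly. Remote targets resolve to the ingress
    gateway of their cluster, with an SNI name the gateway can route on.
    """
    endpoint = endpoints.get(app_id)
    if endpoint is None:
        return None  # not in this sidecar's configured endpoint list
    if endpoint.cluster == local_cluster:
        return Route(dial_address=endpoint.address, sni=None)
    gateway = gateways.get(endpoint.cluster)
    if gateway is None:
        return None  # remote cluster has no known gateway
    # Hypothetical naming scheme for the SNI host name.
    sni = f"{app_id}.{endpoint.cluster}.example.internal"
    return Route(dial_address=gateway, sni=sni)
```

The caller never learns the remote pod address; it only needs the gateway address and a name the gateway can route on, which is why a flat network is not required.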

Proposed Approach for Multi-Cluster Communication in Dapr

As discussed above, there are several approaches to achieve cross-cluster communication in Dapr.

- Dapr should support mTLS traffic between Dapr sidecars running in different clusters configured via any of the listed strategies.

- Dapr name resolution should enable service discovery in all the above scenarios.

- Dapr should provide a standard approach to set up and run applications in a multi-cluster environment, irrespective of the network provider.

- Users should be able to specify the required configuration via a configuration store.

- The Dapr name resolution component should be enhanced to refer to the configuration store to retrieve cluster configuration information that helps in resolving to the right target address.

  For example, if the orderProcessor application in the above example is running in three different clusters, the checkout application should get the right destination orderProcessor application details, and the Dapr sidecar should be able to communicate with the obtained IP endpoint.

- In the initial phase, we propose that the configuration of allowed endpoints per application for each sidecar be set up via the configuration store.

  Since the configuration store does not support a 'SET' API to add configuration, this would need to be set up directly in the configuration store.
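To make the idea of per-application allowed endpoints concrete, here is one possible shape for the data held in the configuration store and a helper that reads it. The proposal does not define a schema, so the JSON layout, the `allowedEndpoints` key, the cluster names and the `allowed_targets` function are all assumptions for illustration.

```python
import json
from typing import Dict, List

# Hypothetical configuration value, written directly into the configuration
# store. It lists, per calling app, the endpoints that app may discover.
RAW_CONFIG = """
{
  "checkout": {
    "allowedEndpoints": [
      {"appId": "orderProcessor", "cluster": "cluster-east",
       "address": "orders.cluster-east.example.internal:443"},
      {"appId": "orderProcessor", "cluster": "cluster-west",
       "address": "orders.cluster-west.example.internal:443"},
      {"appId": "orderProcessor", "cluster": "cluster-north",
       "address": "orders.cluster-north.example.internal:443"}
    ]
  }
}
"""


def allowed_targets(raw: str, caller_app_id: str,
                    target_app_id: str) -> List[Dict[str, str]]:
    """Return the configured endpoints of target_app_id visible to the caller.

    An app with no entry in the configuration sees no remote endpoints.
    """
    config = json.loads(raw)
    entries = config.get(caller_app_id, {}).get("allowedEndpoints", [])
    return [entry for entry in entries if entry["appId"] == target_app_id]


if __name__ == "__main__":
    for entry in allowed_targets(RAW_CONFIG, "checkout", "orderProcessor"):
        print(entry["cluster"], entry["address"])
```

A name resolution component could apply a selection policy over the returned list to choose which of the three orderProcessor instances the checkout sidecar should call.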

Additional References

obviously your proposal goes further than this but just in case you find it useful here is a proof of concept for implementing Gateways across clusters.

I like the overall approach here. The out-of-scope, constraints and requirements sections are all well laid out.

> The downside of this approach is additional overhead of configuring the networks to allow cross-cluster traffic.

Can you explain what network configuration needs to be done to allow cross-cluster traffic in the gateway scenario? In my eyes, the two clusters operate independently and the gateway accepts external traffic and then distributes it to the internal instances. From a configuration point of view, the network ingress has a Dapr sidecar running next to it which is used to forward the traffic to the correct instance. Very similar to the architecture described here: https://dev.to/stevenjdh/create-a-kubernetes-nginx-controller-with-dapr-support-3e8n.

@jjcollinge @yaron2 - I am going through the shared references and will revert back soon. Thanks for sharing 👍

@yaron2 - I went through the shared example, where nginx forwards all incoming traffic to the Dapr sidecar using host path matching. The Dapr sidecar in turn converts this into a gRPC call and forwards the request to the right app within the cluster.

For egress, when an app wants to invoke another app, we could have two options:

- Manual configuration - the app is aware of the FQDN of the target app and can specify it [ very difficult and unmanageable in mid/large clusters via manual configuration ]

- Discovery based on policy - the sidecar infers the target based on configured policies, such as local only, or a weight assigned to each cluster to indicate priority. This configuration needs to be performed in the service mesh or name resolver.

This scenario is indicated in Requirement 3.

@akhilac1 is this proposal also covering Dapr standalone deployments across VMs to communicate? Cross-cluster communication for selfhosted is a necessary feature.

@berndverst - Thanks for looking at this proposal. Seeking clarity so that I can include the right scenario. Communication across daprd running on different VMs would still work if the VMs were running in the same cluster. Do you see a scenario where self hosted daprd needs to communicate with another self-hosted daprd in a different cluster?

Some users want to be able to use Azure Container Instances for example to run distinct Dapr apps and their sidecars (which are effectively in self hosted mode) but be able to communicate with each other using service to service invocation. This is a cross-cluster scenario, where each cluster is of size 1.

How do you define cluster by the way?

I may want to do service invocation with Dapr in standalone mode from one VM in one VNET to another VM in another VNET. That should be possible somehow.

I believe in the original cross-cluster communication proposal from a year ago or so we mentioned this scenario.

I want to start with the scenarios themselves and really see a practical example that a customer would actually put into production, with a real need, and get that customer to talk openly about what their problem space is. Regarding scenarios 1-3: scenario 1 seems to be the same as scenario 2. For scenario 2, I have never come across a case of cross-cluster actors, and even cross-cluster service invocation between apps is limited, hence my ask for a true customer. Scenario 3 is not cross-cluster communication, it is failover across clusters, and given this proposal is focused on name resolution as the primary feature deliverable, that is not relevant IMO.

The confusion arises here because it talks about clusters when, as is pointed out with the VM question, this is simply providing another level of scoping on name resolution. In other words I want to "Resolve this named application running in this named thing" (thing being cluster, organization, foobar group) and these named things are networked together. For example, VMs in a VNET, nodes in a K8s cluster, Raspberry Pis with network cables between them and a router. Let's call this a compute group to avoid the cluster confusion. The primary scenario that I have seen for this global name resolution is when you have a single central compute group that is distributing work to other compute groups because it knows the specialization of each one. For example, one compute group is a supercomputer, another has special GPUs, etc. An orchestrator of compute groups, as it were. You are never really going to have a checkout application call an order application in another compute group.

In terms of implementation, the naming service has to be globally available to all the compute groups, and each compute group registers its name and applications with this naming service. In other words, the naming service is a global service. At this point, this has little to do with the Dapr project, other than that Dapr simply uses this service and understands that it can resolve with Compute Group->Application Name in a call.

IMO this is a completely separate project in itself, and not part of the Dapr org, which is a set of distributed application APIs. Hence this proposal, although useful for Dapr, should be moved to another project entirely to build a global name resolution service that includes other features that are needed, including metadata discovery for the capabilities of the applications (services) in each compute group, and other challenges like that.

Dapr newbie here, but a real user. Scenarios 1 and 2 are what I'd like to see solved. I think scenario 3 may be better solved via a service mesh or something like Skupper.

We enable Kubernetes cross-cluster connectivity using ghostunnel with Pod hostAliases (or /etc/hosts):

client (TCP) → ghostunnel-client (TLS) → Envoy (TLS passthrough) → (TLS) ghostunnel-server → (TCP) server

The client looks up the server hostname, which resolves to localhost (127.0.0.1), and makes the connection to the server, which is tunneled over the ghostunnel mTLS connection to the real server. This works just as well if the client and/or server are VMs instead of Kubernetes Pods, as all that is required is ghostunnel running on each end.

If the Dapr instances can communicate with each other over MTLS then connectivity can be routed via any ingress controller or load-balancer that supports SNI (which should be all of them). Service discovery would still need to be solved but in the absence of any global naming service a simple selective endpoint mapping similar to our hostAliases (or /etc/hosts) approach would work.

I believe this is the Selective End-point discovery option described above.

Then, if Dapr service invocation supported tunneling arbitrary TCP connections, it could be used in all of the places we currently use ghostunnel. Several services use protocols that are not TLS, gRPC or HTTP. For example: the Postgres wire protocol has a weird server-speaks-first initial handshake where the client has to send an 'S' if it wants a secure connection, so it is not compatible with TLS SNI (even though the clients send it); the native NATS protocol is server-speaks-first, so it is incompatible with TLS SNI and can't easily be proxied; Kerberos is TCP.

Essentially, one option to enable cross-anything Dapr instance communication may be to embed ghostunnel in Dapr (maybe even reusing some code), improve it so that the tunnel can be established in either direction, and then allow the hostname of the remote Dapr instance to be configured for service discovery.

This issue has been automatically marked as stale because it has not had activity in the last 60 days. It will be closed in the next 7 days unless it is tagged (pinned, good first issue, help wanted or triaged/resolved) or other activity occurs. Thank you for your contributions.

This issue has been automatically closed because it has not had activity in the last 67 days. If this issue is still valid, please ping a maintainer and ask them to label it as pinned, good first issue, help wanted or triaged/resolved. Thank you for your contributions.

@msfussell "You are never really going to have a checkout application call an order application in another compute group." :-) We're wrestling with a use case like this one right now. We have a current set of applications in multiple compute groups that need to send information (let's call it "telemetry" data, but it isn't) to a central or regional compute group. The ideal mechanism, because of lower costs and less complexity, would be to use Dapr service-to-service invocation, but we can't because of this limitation. The alternatives are much more complex and expensive.

We do this today with Skupper in Kubernetes. However, you must turn off mTLS in the s2s invocation. We are experimenting with using cert-manager with an external issuer to see if we can have both clusters trust the same cert.

keep alive

Watching, keep it alive!
