[Proposal] Distributed Lock API design

Note: this proposal has been updated (as v0.2) after the review and discusion on the first version(as v0.1).

Here is the first version (as v0.1) of this proposal. There are some modifications in v0.2

In what area(s)?

/area runtime

0. tl;dr

This proposal try to add TryLock and Unlock API.

The Lock Renewal API might be controversial and will not be added into the first version

1. Why it is needed

Application developers need distributed locks to keep their data safe from race conditions,but implementing a distributed lock correctly is challenging,since you have to be careful to prevent deadlock or some incorrect corner cases from happening.

An easy-to-use distributed lock API provided by runtime sidecar can be helpful. Actually we have already implemented this API in Layotto ,and hope to contribute it to Dapr.

2. Evaluation of products on the market

| System | tryLock(non-blocking lock) | Blocking lock(based on watch) | Availability | Write operations are linearizable | sequencer(chubby's feature) | Lock renewal |

|---|---|---|---|---|---|---|

| Stand-alone redis | yes | x | unavailable when single failure | yes | yes(need poc) | yes |

| redis cluster | yes | x | yes | no. Locks will be unsafe when fail-over happens | yes(need poc) | yes |

| redis Redlock | yes | |||||

| consul | yes | |||||

| zookeeper | yes | yes | yes. the election completes within 200 ms | yes | yes use zxid as sequencer | yes |

| etcd | yes | yes | yes | yes | yes use revision | yes lease.KeepAlive |

There are some differences in feature supporting.

2. High-level design

2.1. API

2.1.0. Design principles

We are faced with many temptations. In fact, there are many lock related features that can be supported (blocking locks, reentrant locks, read-write locks, sequencer, etc.)

But after all, our goal is to design a general API specification, so we should be as conservative as possible in API definition.Start simple, abstract the simplest and most commonly used functions into API specifications, and wait for user feedback before considering adding more abstraction into API specification.

2.1.1. TryLock/Unlock API

The most basic locking and unlocking API.

TryLock is non-blocking, it return directly if the lock is not obtained.

proto:

// Distributed Lock API

// A non-blocking method trying to get a lock with ttl.

rpc TryLock(TryLockRequest)returns (TryLockResponse) {}

rpc Unlock(UnlockRequest)returns (UnlockResponse) {}

message TryLockRequest {

// Required. The lock store name,e.g. `redis`.

string store_name = 1;

// Required. resource_id is the lock key. e.g. `order_id_111`

// It stands for "which resource I want to protect"

string resource_id = 2;

// Required. lock_owner indicate the identifier of lock owner.

// You can generate a uuid as lock_owner.For example,in golang:

//

// req.LockOwner = uuid.New().String()

//

// This field is per request,not per process,so it is different for each request,

// which aims to prevent multi-thread in the same process trying the same lock concurrently.

//

// The reason why we don't make it automatically generated is:

// 1. If it is automatically generated,there must be a 'my_lock_owner_id' field in the response.

// This name is so weird that we think it is inappropriate to put it into the api spec

// 2. If we change the field 'my_lock_owner_id' in the response to 'lock_owner',which means the current lock owner of this lock,

// we find that in some lock services users can't get the current lock owner.Actually users don't need it at all.

// 3. When reentrant lock is needed,the existing lock_owner is required to identify client and check "whether this client can reenter this lock".

// So this field in the request shouldn't be removed.

string lock_owner = 3;

// Required. expire is the time before expire.The time unit is second.

int32 expire = 4;

}

message TryLockResponse {

bool success = 1;

}

message UnlockRequest {

string store_name = 1;

// resource_id is the lock key.

string resource_id = 2;

string lock_owner = 3;

}

message UnlockResponse {

enum Status {

SUCCESS = 0;

LOCK_UNEXIST = 1;

LOCK_BELONG_TO_OTHERS = 2;

INTERNAL_ERROR = 3;

}

Status status = 1;

}

Q: What is the time unit of the expire field?

A: Seconds.

Q: Can we force the user to set the number of seconds to be large enough(instead of too small)?

A: There is no way to limit it at compile time or startup, forget it

Q: What would happen if different applications pass the same lock_owner?

case 1. If two apps with different app-id pass the same lock_owner,they won't conflict because lock_owner is grouped by 'app-id ',while 'app-id' is configurated in sidecar's static config(configurated in config.json or passed as parameters at startup)

case 2.If two apps with same app-id pass the same lock_owner,they will conflict and the second app will obtained the same lock already used by the first app.Then the correctness property will be broken.

So user has to care about the uniqueness property of lock_owner.

Q: Why not add metadata field

A: Try to be conservative at the beginning, wait until someone feedbacks that there is a need, or find that there is a need to be added in the process of implementing the component

Q: How to add features such as sequencer and reentrant locks in the future?

A: Add feature options in the API parameters,and the component must also implement the Support() function

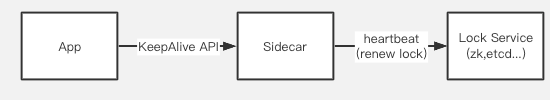

2.1.2. Lock Renewal API

Renewal API aims to refresh the existing lock and postpone the expiration time.

Lock Renewal API won't be in the first version of this API. Here are some considerations for discussion.

Solution A: add an API "LockKeepAlive"

rpc LockKeepAlive(stream LockKeepAliveRequest) returns (stream LockKeepAliveResponse){}

message LockKeepAliveRequest {

// resource_id is the lock key.

string resource_id = 1;

string lock_owner = 2;

// expire is the time to expire

int64 expire = 3;

}

message LockKeepAliveResponse {

enum Status {

SUCCESS = 0;

LOCK_UNEXIST = 1;

LOCK_BELONG_TO_OTHERS = 2;

}

// resource_id is the lock key.

string resource_id = 1;

Status status = 2;

}

Users have to start a thread or coroutine to periodically renew the lock.

The input parameters and return results of this API are all streams. App and sidecar only need to maintain one connection. Each time the lock needs to be renewed, the connection is reused to transfer the renewal request.

Q: Why not put the lock renewal as a stream parameter into tryLock?

-

Lock renewal is not a high frequency demand, so we want trylock to be as simple as possible;

-

Single responsibility principle.When we want to add a blocking lock,the renewal API can be reused;

Q: The renewal logic is too complicated, can we make it transparent to users?

A: sdk can do this logic for users.Sdk will start a thread,coroutine or nodejs timing event to automatically renew the lease

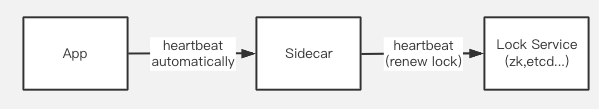

Solution B: Make users not aware of the renewal logic

Our sidecar can automatically renew the lease with heartbeat. App and sidecar maintain a heartbeat for failure detection.

Disadvantages/difficulties:

- If we reuse a public heartbeat, it is difficult to customize the heartbeat interval

An option is to ensure that the heartbeat interval low enough, such as 1 time per second

- How to ensure reliable failure detection?

For example, the following java code unlock() method may fail:

try{

}finally{

lock.unlock()

}

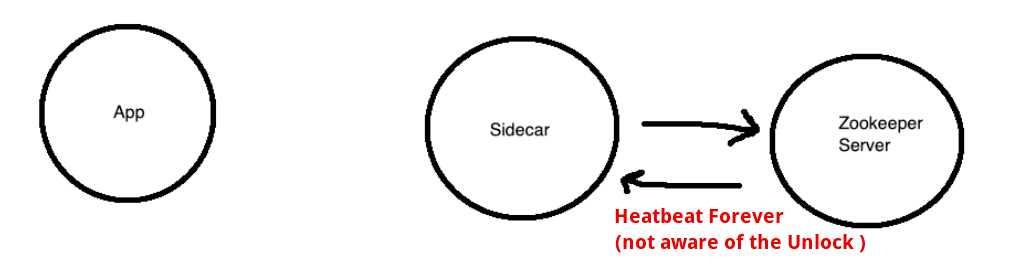

If it is a lock in JVM, unlock can guarantee success (unless the entire JVM fails), but unlock may fail if it is called via the network. How to ensure that the heartbeat is interrupted after the call fails?

Here shows the corner case:

Solving this case requires the app to report some fine-grained status with the heartbeat.

We can define a http callback SPI, which is polled and detected by the sidecar, and the data structure returned by the callback is as follows:

{

"status": "UP",

"details": {

"lock": [

{

"resource_id": "res1",

"lock_owner": "dasfdasfasdfa",

"type": "unlock_fail"

}

],

"xxx": []

}

}

The application has to handle status collection, reporting, cleaning up after the report is successful, and limiting the map capacity (for example, what if the map is too large when report fails too much times?), which requires the app to implement some complex logic, and it must be put in the SDK.

-

This implementation is actually the same as Solution A. It opens a separate connection for status management and failure detection, and user reports the status through this public connection when necessary.

-

Solution B actually make API spec rely on heartbeat logic. It relies on the heartbeat interval and the data structure returned by the heartbeat. It is equivalent to that the API spec relies on the implementation of sidecar, unless we can also standardize the heartbeat API (including interval, returned data structure, etc.)

Conclusion

At present, the Lock Renewal API might be controversial and will not be added into the first version.

Personally I prefer the solution A.Let the SDK do the renewal logic. Although users have to directly deal with the lease renewal logic when using grpc, it is not hard for developers to understand.

I put it here to see your opinion.

3. Future work

- Reentrant Lock

There will be some counting logic.We need to consider whether all locks support reentrancy by default, or add a feature option in the parameter to identify that the user needs it to be reentrant

- Heartbeat API

If we want to implement more coordinator APIs such as blocking lock and leader election,we need a heartbeat API for failure detection,like LeaseKeepAlive in Etcd.

-

Blocking lock

-

Sequencer

4. Reference

The Chubby lock service for loosely-coupled distributed systems

Thanks for this detailed proposal, distributed locks for leader elections and similar scenarios is a feature that I've personally wanted to see in Dapr for a long time now.

I agree with your assessment to start with the basics and punt reentrant locks and leases to phase 2.

What I'm missing from this proposal is a little more detail on how the implementation will work - namely, what is the lock service that you are referring to - is this a Dapr state store? if so, is it any state store, or only state stores that support First-Write-Wins to handle concurrency when trying to acquire a lock? Personally, supporting state stores with FWW seems like a very reasonable approach to me.

Thanks for the proposal. I do think that the solution A to keep the lock is simpler to use and understand.

Do you think we can have the lock API as part of the first iteration?

This issue has been automatically marked as stale because it has not had activity in the last 30 days. It will be closed in the next 7 days unless it is tagged (pinned, good first issue, help wanted or triaged/resolved) or other activity occurs. Thank you for your contributions.

sorry for not replying ,I will improve this proposal according to above discussion after finishing my work. So don't close it 😢

Not closing @seeflood, this is a good proposal and I would like to have this in 1.5 if possible.

Pinned to avoid closing by stale bot.

Sorry for not replying,I'm back and it's time to continue working on it! Let's first look at the remaining issues:

What I'm missing from this proposal is a little more detail on how the implementation will work - namely, what is the lock service that you are referring to - is this a Dapr state store? if so, is it any state store, or only state stores that support First-Write-Wins to handle concurrency when trying to acquire a lock? Personally, supporting state stores with FWW seems like a very reasonable approach to me.

Good point. There are two approaches to implement distributed lock components:

- Write a new type of component from scratch,we can call them "lock store components". Actually I'm going to do this. I will write a redis standalone lock component or an etcd lock component as an example.The interface of these components will be:

type LockStore interface {

// Init this component

Init(metadata Metadata) error

// Features return the feature set this component supports

Features() []Feature

// TryLock tries to acquire a lock

TryLock(req *TryLockRequest) (*TryLockResponse, error)

// Unlock tries to release a lock

Unlock(req *UnlockRequest) (*UnlockResponse, error)

}

- Another option: reuse state store components with FWW and build distributed lock API on them.

FWW has the semantic of "Set-if-not-exist",which is a subset of "Compare-and-Set" and can be used to implement

tryLock. However, FWW can't be used to implement theunlockmethod,which needs "Compare-and-Set" semantic. Besides,the existing state components don't have the "Set-with-ttl" method,which is necessary to implement a distributed lock. Considering we will add more features to distributed lock API and have to modify the components from time to time, it's better to write a new type of component.

@yaron2 @artursouza Hi guys,can I start writing code based on discussion above?

Update: I missed the fact that state API already support "set-with-ttl",but it does not affect the truth that state stores have no "compare-and-set" method.

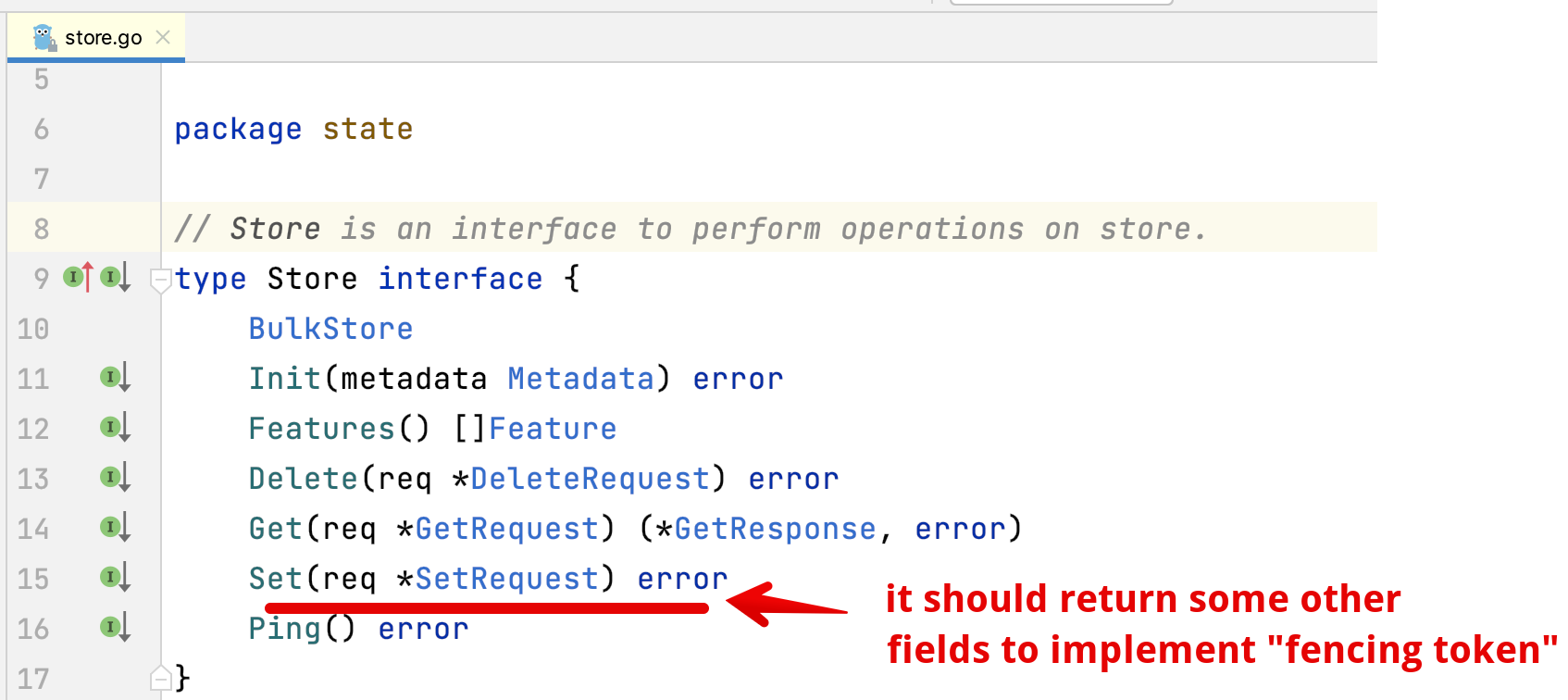

Q: Why not just add a "compare-and-set" method to state store components and build the distributed lock API on them? A: Considering we will add more features to distributed lock API and have to modify the components from time to time, it's better to write a new type of component. For example, let's say that I implement the distributed lock API with existing state stores:

func (a *api) Unlock(ctx context.Context, req *runtimev1pb.UnlockRequest) (*runtimev1pb.UnlockResponse, error) {

// ....

store, err := a.getStateStore(in.StoreName)

// suppose i add a `CompareAndSet` method for state store components

result,err := store.CompareAndSet(convert(req))

// ...

}

func (a *api) TryLock(ctx context.Context, req *runtimev1pb.TryLockRequest) (*runtimev1pb.TryLockResponse, error) {

// ....

store, err := a.getStateStore(in.StoreName)

// set with FWW and TTL

err = store.Set(convertTrylockRequest(req))

// ...

}

After that,if we want to support the "fencing token" feature (or "sequencer" proposed by chubby) , we need the state store components to return an incremental number and some other metadata:

In this scenario,it's not appropriate to modify all the state store components just for one small(but important) feature.

So it's would be more extendable to write a new type of "lock store component",like:

In this scenario,it's not appropriate to modify all the state store components just for one small(but important) feature.

So it's would be more extendable to write a new type of "lock store component",like:

func (a *api) TryLock(ctx context.Context, req *runtimev1pb.TryLockRequest) (*runtimev1pb.TryLockResponse, error) {

// ....

lockStore, err := a.getLockStore(in.StoreName)

result,err := lockStore.TryLock(convertTrylockRequest(req))

// ...

}

@seeflood can the TryLockResponse include additional data like which app is owning the lock this can be helpful in troubleshooting

@jigargandhi Some implementation (e.g. redis Redlock) can not return the current lock owner when TryLock and Unlock failed.

So instead of giving the user a field that may be empty, we can let each component try their best to log the current lock owner ( a random uuid) .It's helpful in troubleshooting too. What do you think?

It's an interesting proposal. Recently we happen to have a need to implement a distributed lock. When will this API supported be Dapr?

@sora-kashiwagi Thanks for your feedback! I'm trying to collect feedback on this proposal. I will start coding once it gets enough attention

@seeflood personally I really favor adding capabilities to existing state stores. There's two reasons for this:

- Avoiding duplication of code - see the configuration stores, for example. we are seeing brand new implementations of a store, and I would like to avoid that as much as possible

- Reuse of existing features like TTL, FWW

- Consistency - see how we implemented Transactions. We could have had a different component type of a TransactionalStateStore, but that would have resulted, again, in much more code to maintain which is mostly duplicate. Instead, we added it as a capability to the existing state stores, and so far that has worked great. I'd really like to continue that line.

Thanks for the proposal. It said "Although users have to directly deal with the lease renewal logic when using grpc, it is not hard for developers to understand." My experience is that these sort of things may not be hard for some developers to understand, but will definitely be hard for others not so experienced to understand.

So I encourage you to build Dapr features that are easy to use for a very wide level of experience. This is a great guiding light since this characteristic is present in very many of the best selling and most widely used software products, and other products as well -- like cars, appliances, air planes, golf carts, cake mixes, etc. Go for easy to use makes happy customers! Thanks.

Thanks all for your great suggestions!

It's 23:00 Beijing time. After a day’s work, I finally have time to sit down and write a new proposal :)

Here's proposal v0.2.0

It is basically the same as v0.1.0, except for some optimizations based on discussion above.

About code reuse

Make it consistent with how we implement TransactionalStore

The component interface will be :

type LockStore interface {

// Init this component

Init(metadata Metadata) error

// TryLock tries to acquire a lock

TryLock(req *TryLockRequest) (*TryLockResponse, error)

// Unlock tries to release a lock

Unlock(req *UnlockRequest) (*UnlockResponse, error)

}

The implementation will be consistent with TransactionalStore.

We add a new Feature value for state store:

const (

// FeatureETag is the feature to etag metadata in state store.

FeatureETag Feature = "ETAG"

// FeatureTransactional is the feature that performs transactional operations.

FeatureTransactional Feature = "TRANSACTIONAL"

FeatureTryLock Feature = "TRYLOCK"

)

// Feature names a feature that can be implemented by PubSub components.

type Feature string

If a state store component has the feature of FeatureTryLock,it should implement the LockStore interface, so that we can cast this Store into a LockStore during startup.

Pros

- Code reuse. For example,Some (not all) of the components can reuse existing features like TTL, FWW.

For example,

LockStore.Init(metadata)can reuse code ofStore.Init(metadata).

Cons

- This design forces distributed lock components to provide CRUD capabilities.

For example ,it makes the red-lock component harder to implement.

For example, Chubby was a lock service in google and since it does not provide CRUD capabilities (I'm not sure about this because I haven't worked at google), it can't be implemented as a LockStore component.

I think this shortcoming is acceptable.

Aside:Extract a new layer from the current components

We can extract a new layer from the current components.For example, the redis state component and redis pubsub component should reuse the same code in redis_util.go to construct and init a new redis client.

It might be the easiest way to reduce the redundancy of the current code.

I think it's appropriate to submit a separate issue for it, so I won’t discuss it here and it won't be included in the PR of distributed lock API.

Make it easy to use

I like the design principles mentioned by @georgestevens99 . It's better to hide the lease renewal logic from users. To achieve this goal, we can make the Dapr sdk automatically renew the lock. Of course, if the user uses dapr directly through grpc or http, he still has to handle this logic by himself.

Finally ,wish you success in your new adventure :) @yaron2

This is a good proposal! What about adding the Close() error function to release resources (locks and connections) immediately in this method when closing dapr.

@Taction Thanks for your feedback!

In proposal v0.2, the LockStore interface is actually a special kind of state store component.

Since Dapr already has the functionality to close the state store components , there is no need for us to add another Close() . See https://github.com/dapr/dapr/blob/99e039a102c7e9e84c15646fdf74f4190f5434b4/pkg/runtime/runtime.go#L1889

It's similar to the implementation of TransactionalStore: reuse the existing functions of the state store as much as possible ,including the init and close functionalities

Thanks @seeflood I think this is a very good iteration of the proposal.

Also, since Dapr components are feature oriented, I think it's OK to have a state store with a Lock feature but not a CRUD feature.

@yaron2 OK. I will start coding recently

@yaron2 OK. I will start coding recently

Hold on for just a bit! I want an approval on this from @artursouza too, then you can start :)

@yaron2 OK :)

I have a concern about making the distributed lock API to fit into the state store. These are difference use cases. There might be a way to implement the lock API in some of the components that also offer state store. I propose we keep those separate. The more "optional" APIs we add to a building block, less portable it becomes. We might end up with very few actual compatible implementations as the combination of features increases.

I have a concern about making the distributed lock API to fit into the state store. These are difference use cases. There might be a way to implement the lock API in some of the components that also offer state store. I propose we keep those separate. The more "optional" APIs we add to a building block, less portable it becomes. We might end up with very few actual compatible implementations as the combination of features increases.

Yeah I agree, but this proposal supports the solution.

Either lockstore components or existing state store components can implement the interface. The factory loaders in daprd are interface based, which means that they can load both state store components that implement the interface and separate lockstore components.

This also allows lockstore components to gradually make it into statestore components in the future.

I have a concern about making the distributed lock API to fit into the state store. These are difference use cases. There might be a way to implement the lock API in some of the components that also offer state store. I propose we keep those separate. The more "optional" APIs we add to a building block, less portable it becomes. We might end up with very few actual compatible implementations as the combination of features increases.

Yeah I agree, but this proposal supports the solution. Either lockstore components or existing state store components can implement the interface. The factory loaders in

daprdare interface based, which means that they can load both state store components that implement the interface and separate lockstore components.This also allows lockstore components to gradually make it into statestore components in the future.

I agree portability is a value we want to preserve without diluting, and also want to watch out for both "API explosion" and "option explosion" as we do more features like this. That said I think I prefer the problem of more optional APIs in a building block versus more duplicate building block APIs and a matrix of components to cover each feature permutation. Could we get more specific what the focal Statestore components are that will likely support at first? Is this mainly about Zookeeper and Redis variants? Or is it everything in the table shown in the proposal (and maybe more)?

Before we optimize for reuse of component code, what will actually be reused? The storage for lock seems to be separate from the state storage we have now. Components that implement both interfaces would need to create two different "tables" even if only one is used. I am leaning towards no reuse and keep it separate from state store.

One more point is how this will affect existing state stores that are stable. Does it mean we have to mark each capability as alpha-beta-stable? I would rather not overindex a building block with too much functionality.

One more point is how this will affect existing state stores that are stable. Does it mean we have to mark each capability as alpha-beta-stable? I would rather not overindex a building block with too much functionality.

Again, this is not an issue as we don't need to add it as a state store feature.

Then, we get the best of both worlds, and can have separate implementations and reuse a state store if that makes more sense on a case by case basis.

I prefer to keep it separate from the state store. Maybe we can create an adapter to reuse state store codes, just like:

- dapr/components-contrib#1305

I prefer to keep it separate from the state store. Maybe we can create an adapter to reuse state store codes, just like:

See my comment above.